use gradient descent to find the optimal values of their weights. Linear regression, logistic regression, neural networks, and large language models all rely on this principle. In the previous articles, we used simple gradient descent because it is easier to show and easier to understand.

The same principle also appears at scale in modern large language models, where training requires adjusting millions or billions of parameters.

However, real training rarely uses the basic version. It is often too slow or too unstable. Modern systems use variants of gradient descent that improve speed, stability, or convergence.

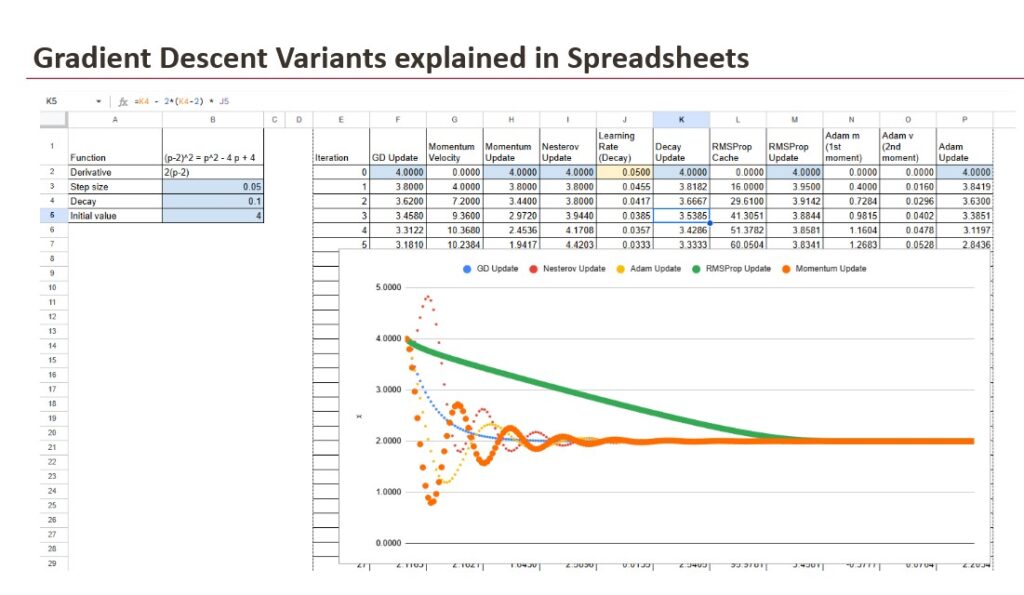

In this bonus article, we focus on these variants. We look at why they exist, what problem they solve, and how they change the update rule. We do not use a dataset here. We use one variable and one function, only to make the behaviour visible. The goal is to show the movement, not to train a model.

1. Gradient Descent and the Update Mechanism

1.1 Problem setup

To make these ideas visible, we will not use a dataset here, because datasets introduce noise and make it harder to observe the behaviour directly. Instead, we will use a single function:

f(x) = (x – 2)²

We begin at x = 4, and the gradient is:

gradient = 2*(x – 2)

This simple setup removes distractions. The objective is not to train a model, but to understand how the different optimisation rules change the movement toward the minimum.

1.2 The structure behind every update

Every optimisation method that follows in this article is built on the same loop, even when the internal logic becomes more sophisticated.

- First, we read the current value of x.

- Then, we compute the gradient with the expression 2*(x – 2).

- Finally, we update x according to the specific rule defined by the chosen variant.

The destination stays the same and the gradient always points in the correct direction, but the way we move along this direction changes from one method to another. This change in movement is the essence of each variant.
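The three steps above can be sketched as a small Python loop. This is only an illustration: the names `grad`, `optimise`, and `update`, the number of steps, and the learning rate of 0.1 are assumptions made for the sketch, not values taken from the article.

```python
from typing import Callable, List


def grad(x: float) -> float:
    """Gradient of f(x) = (x - 2)**2."""
    return 2 * (x - 2)


def optimise(update: Callable[[float, float], float],
             x0: float = 4.0, steps: int = 20) -> List[float]:
    """Run the shared loop and return every visited value of x."""
    x = x0
    path = [x]                 # step 1: the current value of x
    for _ in range(steps):
        g = grad(x)            # step 2: gradient at the current x
        x = update(x, g)       # step 3: variant-specific update rule
        path.append(x)
    return path


# Hypothetical rule for illustration: plain gradient descent with lr = 0.1
path = optimise(lambda x, g: x - 0.1 * g)
print(path[:3])
```

Only the `update` argument changes from one stateless variant to another. Methods that keep a memory, such as a velocity or a cache, need a little extra state around the same loop.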

1.3 Basic gradient descent as the baseline

Basic gradient descent applies a direct update based on the current gradient and a fixed learning rate:

x = x – lr * 2*(x – 2)

This is the most intuitive form of learning because the update rule is easy to understand and easy to implement. The method moves steadily toward the minimum, but it often does so slowly, and it can struggle when the learning rate is not chosen carefully. It represents the foundation on which all other variants are built.
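To see how much the learning rate matters, here is a minimal sketch that applies the baseline rule with several rates. The function name `gradient_descent`, the step count, and the four learning rates are illustrative assumptions.

```python
def gradient_descent(lr: float, x0: float = 4.0, steps: int = 50) -> float:
    """Apply x = x - lr * 2*(x - 2) for a fixed number of steps."""
    x = x0
    for _ in range(steps):
        x = x - lr * 2 * (x - 2)
    return x


# Illustrative learning rates (assumed values, not taken from the article)
for lr in (0.01, 0.1, 0.9, 1.1):
    x_final = gradient_descent(lr)
    print(f"lr={lr}: x={x_final:.4f}, distance to minimum={abs(x_final - 2):.4g}")
```

On this particular function, each step multiplies the distance (x – 2) by (1 – 2*lr). A rate below 0.5 therefore approaches the minimum from one side, a rate between 0.5 and 1 converges while jumping from one side to the other, and a rate above 1 diverges.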

2. Learning Rate Decay

Learning Rate Decay does not change the update rule itself. It changes the size of the learning rate across iterations so that the optimisation becomes more stable near the minimum. Large steps help when x is far from the target, but smaller steps are safer when x gets close to the minimum. Decay reduces the risk of overshooting and produces a smoother landing.

There is not a single decay formula. Several schedules exist in practice:

- exponential decay

- inverse decay (the one shown in the spreadsheet)

- step-based decay

- linear decay

- cosine or cyclical schedules

All of these follow the same idea: the learning rate generally becomes smaller over time, but the pattern depends on the chosen schedule.
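The schedules listed above can be written as small functions of the iteration number t. This is a sketch of commonly used forms. The function names and all constants (`k`, `decay`, `drop`, `every`, `total`) are assumptions chosen for illustration, and cyclical schedules are left out.

```python
import math


def exponential_decay(lr0: float, t: int, k: float = 0.1) -> float:
    return lr0 * math.exp(-k * t)


def inverse_decay(lr0: float, t: int, decay: float = 0.1) -> float:
    return lr0 / (1 + decay * t)


def step_decay(lr0: float, t: int, drop: float = 0.5, every: int = 10) -> float:
    # Multiply the rate by `drop` once every `every` iterations
    return lr0 * drop ** (t // every)


def linear_decay(lr0: float, t: int, total: int = 50) -> float:
    # Straight line from lr0 down to 0 at iteration `total`
    return lr0 * max(0.0, 1 - t / total)


def cosine_decay(lr0: float, t: int, total: int = 50) -> float:
    # Half a cosine wave from lr0 down to 0 at iteration `total`
    return 0.5 * lr0 * (1 + math.cos(math.pi * min(t, total) / total))


SCHEDULES = (exponential_decay, inverse_decay, step_decay, linear_decay, cosine_decay)

for t in (0, 10, 25, 50):
    print(t, [round(schedule(0.1, t), 4) for schedule in SCHEDULES])
```

Every schedule returns the full rate at t = 0. They differ only in how quickly, and with what shape, the rate falls afterwards.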

In the spreadsheet example, the decay formula is the inverse form:

lr_t = lr / (1 + decay * iteration)

With the update rule:

x = x – lr_t * 2*(x – 2)

This schedule starts with the full learning rate at the first iteration, then gradually reduces it. At the start of the optimisation, the step size is large enough to move quickly. As x approaches the minimum, the learning rate shrinks, stabilising the update and avoiding oscillation.

On the chart, both curves start at x = 4. The fixed learning rate version moves faster at first but approaches the minimum with less stability. The decay version moves more slowly but stays controlled. This confirms that decay does not change the direction of the update. It only changes the step size, and that change affects the behaviour.
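A minimal sketch of the comparison between a fixed and a decayed learning rate follows. The values lr = 0.9 and decay = 0.5 are assumptions picked to make the difference visible. They are not the spreadsheet's settings, so the numbers will not match the chart.

```python
from typing import List


def run(lr: float = 0.9, decay: float = 0.0,
        x0: float = 4.0, steps: int = 15) -> List[float]:
    """Gradient descent on f(x) = (x - 2)**2 with inverse learning rate decay."""
    x = x0
    path = [x]
    for iteration in range(steps):
        lr_t = lr / (1 + decay * iteration)   # equals lr when iteration is 0
        x = x - lr_t * 2 * (x - 2)
        path.append(x)
    return path


fixed = run(decay=0.0)     # constant learning rate
decayed = run(decay=0.5)   # learning rate shrinks at each iteration

for i in range(0, 16, 3):
    print(i, round(fixed[i], 5), round(decayed[i], 5))
```

With these assumed values, the fixed-rate path jumps across the minimum at every step, while the decayed path crosses it only during its first two steps and then approaches from one side.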

3. Momentum Methods

Gradient Descent moves in the correct direction but can be slow on flat regions. Momentum methods address this by adding inertia to the update.

They accumulate direction over time, which creates faster progress when the gradient stays consistent. This family includes standard Momentum, which builds speed, and Nesterov Momentum, which anticipates the next position to reduce overshooting.

3.1 Standard momentum

Standard momentum introduces the idea of inertia into the learning process. Instead of reacting only to the current gradient, the update keeps a memory of previous gradients in the form of a velocity variable:

velocity = 0.9*velocity + 2*(x – 2)

x = x – lr * velocity

This approach accelerates learning when the gradient stays consistent for several iterations, which is especially useful in flat or shallow regions.

However, the same inertia that generates speed can also lead to overshooting the minimum, which creates oscillations around the target.
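The velocity update can be sketched as follows. The learning rate of 0.1, the step count, and the function name `momentum` are assumptions for illustration. The coefficient 0.9 comes from the formula above.

```python
from typing import List


def momentum(lr: float = 0.1, beta: float = 0.9,
             x0: float = 4.0, steps: int = 100) -> List[float]:
    """Standard momentum on f(x) = (x - 2)**2."""
    x, velocity = x0, 0.0
    path = [x]
    for _ in range(steps):
        g = 2 * (x - 2)
        velocity = beta * velocity + g   # memory of previous gradients
        x = x - lr * velocity
        path.append(x)
    return path


path = momentum()
print("lowest x reached:", round(min(path), 4))
print("final x:", round(path[-1], 4))
```

With these assumed settings, x drops below 2 after a few steps before coming back, which is the overshooting described above.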

3.2 Nesterov Momentum

Nesterov Momentum is a refinement of the previous method. Instead of updating the velocity at the current position alone, the method first estimates where the next position will be, and then evaluates the gradient at that anticipated location:

velocity = 0.9*velocity + 2*((x – 0.9*velocity) – 2)

x = x – lr * velocity

This look-ahead behaviour reduces the overshooting effect that can appear in regular Momentum, which leads to a smoother approach to the minimum and fewer oscillations. It keeps the benefit of speed while introducing a more cautious sense of direction.
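Here is a hedged sketch of the look-ahead step. The sketch takes the look-ahead point as x – lr * 0.9 * velocity, so that the anticipated move is expressed in the same units as the actual update x = x – lr * velocity. This scaling is an assumption of the sketch, following a common formulation, and it differs from the formula as written above. The learning rate and step count are also illustrative.

```python
from typing import List


def nesterov(lr: float = 0.1, beta: float = 0.9,
             x0: float = 4.0, steps: int = 100) -> List[float]:
    """Nesterov momentum on f(x) = (x - 2)**2."""
    x, velocity = x0, 0.0
    path = [x]
    for _ in range(steps):
        lookahead = x - lr * beta * velocity   # where momentum alone would carry x
        g = 2 * (lookahead - 2)                # gradient at the anticipated position
        velocity = beta * velocity + g
        x = x - lr * velocity
        path.append(x)
    return path


path = nesterov()
print("lowest x reached:", round(min(path), 4))
print("final x:", round(path[-1], 6))
```

Because the gradient is evaluated slightly ahead of the current position, the velocity starts to slow down before x actually crosses the minimum.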

4. Adaptive Gradient Methods

Adaptive Gradient Methods adjust the update based on information gathered during training. Instead of using a fixed learning rate or relying only on the current gradient, these methods adapt to the scale and behaviour of recent gradients.

The goal is to reduce the step size when gradients become unstable and to allow steady progress when the surface is more predictable. This approach is useful in deep networks or irregular loss surfaces, where the gradient can change in magnitude from one step to the next.

4.1 RMSProp (Root Mean Square Propagation)

RMSProp stands for Root Mean Square Propagation. It keeps a running average of squared gradients in a cache, and this value influences how aggressively the update is applied:

cache = 0.9*cache + (2*(x – 2))²

x = x – lr / sqrt(cache) * 2*(x – 2)

The cache becomes larger when gradients are unstable, which reduces the update size. When gradients are small, the cache grows more slowly, and the update stays close to the normal step. This makes RMSProp effective in situations where the gradient scale is not consistent, which is common in deep learning models.
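A minimal sketch of this update follows the cache formula given above. The small constant `eps` is an assumption added to avoid dividing by zero, and the learning rate and step count are illustrative. Many library implementations also weight the new squared gradient by (1 – 0.9), which this sketch does not do.

```python
import math
from typing import List


def rmsprop(lr: float = 0.1, decay: float = 0.9, eps: float = 1e-8,
            x0: float = 4.0, steps: int = 200) -> List[float]:
    """RMSProp-style update on f(x) = (x - 2)**2."""
    x, cache = x0, 0.0
    path = [x]
    for _ in range(steps):
        g = 2 * (x - 2)
        cache = decay * cache + g ** 2             # decayed sum of squared gradients
        x = x - lr / (math.sqrt(cache) + eps) * g  # step scaled by gradient magnitude
        path.append(x)
    return path


path = rmsprop()
print("first step size:", round(path[0] - path[1], 6))
print("final x:", round(path[-1], 4))
```

Since the cache always contains the current squared gradient, the size of a single step can never exceed `lr`, whatever the magnitude of the gradient.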

4.2 Adam (Adaptive Moment Estimation)

Adam stands for Adaptive Moment Estimation. It combines the idea of Momentum with the adaptive behaviour of RMSProp. It keeps a moving average of gradients to capture direction, and a moving average of squared gradients to capture scale:

m = 0.9*m + 0.1*(2*(x – 2))

v = 0.999*v + 0.001*(2*(x – 2))²

x = x – lr * m / sqrt(v)

The variable m behaves like the velocity in momentum, and the variable v behaves like the cache in RMSProp. Adam updates both values at every iteration, which allows it to accelerate when progress is clear and shrink the step when the gradient becomes unstable. This balance between speed and control is what makes Adam a standard choice in neural network training.
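The two moving averages can be sketched as below. Beyond the formulas above, the sketch adds two elements from the commonly used formulation of Adam: a bias correction of m and v, which compensates for both starting at zero, and a small `eps` in the denominator. Both are assumptions relative to the article's simplified formula, as are the learning rate and step count.

```python
import math
from typing import List


def adam(lr: float = 0.1, beta1: float = 0.9, beta2: float = 0.999,
         eps: float = 1e-8, x0: float = 4.0, steps: int = 200) -> List[float]:
    """Adam on f(x) = (x - 2)**2, with bias correction."""
    x, m, v = x0, 0.0, 0.0
    path = [x]
    for t in range(1, steps + 1):
        g = 2 * (x - 2)
        m = beta1 * m + (1 - beta1) * g        # moving average of gradients
        v = beta2 * v + (1 - beta2) * g ** 2   # moving average of squared gradients
        m_hat = m / (1 - beta1 ** t)           # bias correction (assumed addition)
        v_hat = v / (1 - beta2 ** t)
        x = x - lr * m_hat / (math.sqrt(v_hat) + eps)
        path.append(x)
    return path


path = adam()
print("first step size:", round(path[0] - path[1], 6))
print("final x:", round(path[-1], 4))
```

With the bias correction, the very first step has a size close to `lr`, regardless of how large the initial gradient is.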

4.3 Other Adaptive Methods

Adam and RMSProp are the most common adaptive methods, but they are not the only ones. Several related methods exist, each with a specific objective:

- AdaGrad adjusts the learning rate based on the full history of squared gradients, but the rate can shrink too quickly.

- AdaDelta modifies AdaGrad by limiting how much the historical gradient affects the update.

- Adamax uses the infinity norm and can be more stable for very large gradients.

- Nadam adds Nesterov-style look-ahead behaviour to Adam.

- RAdam attempts to stabilise Adam in the early phase of training.

- AdamW separates weight decay from the gradient update and is recommended in many modern frameworks.

These methods follow the same idea as RMSProp and Adam: adapting the update to the behaviour of the gradients. They represent refinements or extensions of the concepts introduced above, and they are part of the same broader family of adaptive optimisation algorithms.
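As one example from this list, the shrinking rate of AdaGrad can be sketched on the same function. The learning rate of 0.5, the `eps` constant, and the step count are assumptions for illustration.

```python
import math
from typing import List, Tuple


def adagrad(lr: float = 0.5, eps: float = 1e-8,
            x0: float = 4.0, steps: int = 30) -> Tuple[float, List[float]]:
    """AdaGrad on f(x) = (x - 2)**2, recording the effective learning rate."""
    x, cache = x0, 0.0
    effective_rates = []
    for _ in range(steps):
        g = 2 * (x - 2)
        cache += g ** 2                        # full history, never decays
        rate = lr / (math.sqrt(cache) + eps)   # effective learning rate
        effective_rates.append(rate)
        x = x - rate * g
    return x, effective_rates


x_final, rates = adagrad()
print("final x:", round(x_final, 4))
print("first and last effective rate:", round(rates[0], 4), round(rates[-1], 4))
```

Because the cache only grows, the effective rate can only decrease. RMSProp addresses this by letting old squared gradients fade out of the cache.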

Conclusion

All methods in this article aim for the same goal: moving x toward the minimum. The difference is the path. Gradient Descent provides the basic rule. Momentum adds speed, and Nesterov improves control. RMSProp adapts the step to gradient scale. Adam combines these ideas, and Learning Rate Decay adjusts the step size over time.

Each method addresses a specific limitation of the previous one. None of them replaces the baseline. They extend it. In practice, optimisation is not one rule, but a set of mechanisms that work together.

The goal remains the same. The movement becomes more effective.