Artificial intelligence has dramatically improved how robots understand the world.

Computer vision allows robots to detect objects, recognize patterns, and navigate complex environments. Cameras help robots identify parts on a conveyor, locate packages in a bin, and avoid obstacles in warehouses.

But when a robot needs to pick up an object, vision alone is not enough.

To manipulate objects reliably, robots need something humans rely on constantly: touch.

This is where tactile sensing becomes essential.

Most robotic systems today rely heavily on cameras.

Vision works well for:

- object detection

- pose estimation

- navigation

- scene understanding

But cameras cannot measure physical interaction.

When a robot grips an object, many important variables appear that cameras cannot observe directly:

- contact force

- pressure distribution

- friction

- slip

- compliance of materials

For example, imagine picking up a wet glass, a soft fabric, or a rigid metal component.

Each requires a different grasp strategy. Humans automatically adjust grip strength based on what we feel. Robots that rely solely on vision must infer these properties indirectly, which is much harder.

This limitation explains why manipulation remains one of the biggest challenges in robotics.

Human hands contain several types of mechanoreceptors that detect different aspects of touch.

These receptors allow us to perceive:

- sustained pressure

- vibration

- skin deformation

- texture

- temperature

Together, these signals help us perform dexterous tasks such as:

- tightening our grip when an object starts to slip

- adjusting finger position during manipulation

- recognizing objects without looking

Robotic systems need similar capabilities to achieve reliable manipulation.

Tactile sensing gives robots the ability to perceive contact dynamics, which is essential for interacting with the physical world.

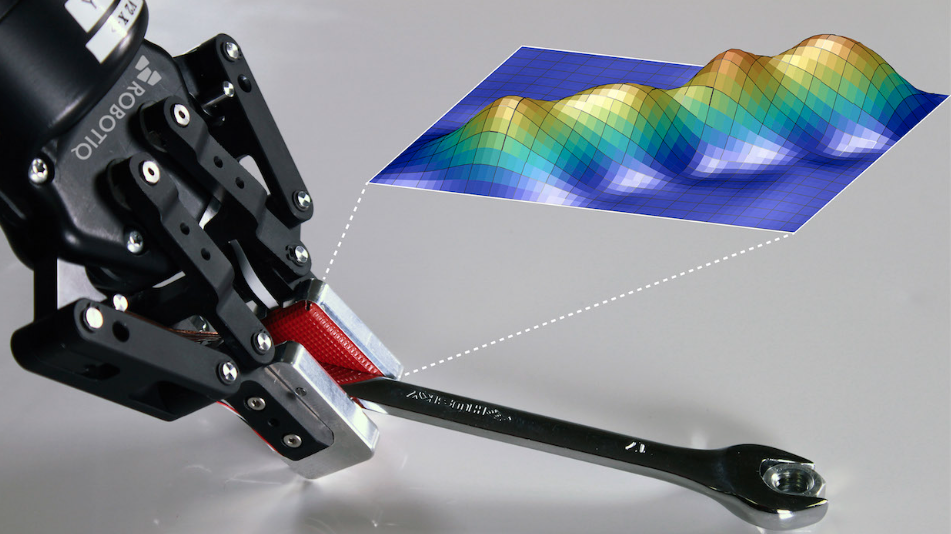

Modern tactile sensing systems can capture several types of information during a grasp.

Key sensing modalities include:

Pressure

Measures the size, shape, and intensity of contact.

Pressure data helps robots determine:

- grasp quality

- object pose in the gripper

- object identification
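
As a rough illustration of how the size, location, and intensity of contact might be read from pressure data, the sketch below summarizes a single tactile frame. It assumes the sensor exposes a 2D grid of pressure readings (one value per sensing element, or "taxel"); the function name `contact_summary`, the grid layout, and the `threshold` value are hypothetical and not tied to any particular sensor.

```python
from typing import Dict, List, Optional, Tuple


def contact_summary(
    pressure: List[List[float]], threshold: float = 0.1
) -> Dict[str, object]:
    """Summarize one tactile frame (hypothetical 2D grid of taxel readings).

    Returns the contact area (number of taxels at or above the threshold),
    the pressure-weighted centroid as (row, col), and the peak pressure.
    """
    area = 0
    total = 0.0
    row_moment = 0.0
    col_moment = 0.0
    peak = 0.0
    for r, row in enumerate(pressure):
        for c, p in enumerate(row):
            if p >= threshold:  # ignore taxels below the assumed noise floor
                area += 1
                total += p
                row_moment += r * p
                col_moment += c * p
                peak = max(peak, p)
    centroid: Optional[Tuple[float, float]] = None
    if area > 0:
        centroid = (row_moment / total, col_moment / total)
    return {"area": area, "centroid": centroid, "peak": peak}


# Example frame: two taxels in the middle row are in contact.
frame = [
    [0.0, 0.0, 0.0],
    [0.0, 1.0, 1.0],
    [0.0, 0.0, 0.0],
]
summary = contact_summary(frame)
```

A centroid that drifts between frames, or a contact area that shrinks, could be one hint that the object is shifting in the gripper. A real system would calibrate the threshold per sensor.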

Vibration

Detects rapid changes in contact.

This is useful for identifying:

- slip events

- collisions

- surface interactions
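
One simple way to flag such events is to watch for a burst of high-frequency energy in the vibration signal. The sketch below is a minimal example under stated assumptions: the signal arrives as a short window of samples, the RMS of sample-to-sample differences stands in for a proper high-pass filter, and the names `high_freq_energy` and `detect_slip` and the `threshold` value are illustrative choices, not part of any real sensor API.

```python
import math
from typing import Sequence


def high_freq_energy(samples: Sequence[float]) -> float:
    """RMS of sample-to-sample differences in a window.

    Differencing suppresses the slowly varying part of the signal, so this
    acts as a crude measure of high-frequency content.
    """
    if len(samples) < 2:
        return 0.0
    diffs = [b - a for a, b in zip(samples, samples[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


def detect_slip(samples: Sequence[float], threshold: float = 0.05) -> bool:
    """Flag a possible slip when high-frequency energy exceeds the threshold.

    The threshold is an assumed value; in practice it would be tuned per
    sensor, gripper, and material.
    """
    return high_freq_energy(samples) > threshold


steady_window = [1.0] * 10                      # stable grasp, no vibration
noisy_window = [1.0, 1.2, 0.9, 1.3, 0.8]        # rapid fluctuations

steady_flag = detect_slip(steady_window)
noisy_flag = detect_slip(noisy_window)
```

A fixed threshold cannot tell a slip from a collision, since both produce bursts of vibration. Separating them would likely need additional context, such as the pressure signal or the robot's motion state.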

Proprioception

Measures the configuration of the gripper itself.

This helps robots perceive:

- finger positions

- gripper form

- object deformation during grasping

Together, these signals give robots a much richer understanding of interaction with objects.

What tactile sensing means in robotics

Tactile sensing refers to technologies that allow robots to detect and interpret physical contact with objects.

Unlike vision systems, tactile sensors measure interaction directly at the point of contact.

Common tactile sensing capabilities include:

- pressure detection (contact location and intensity)

- vibration sensing (slip detection)

- force distribution across the gripper

- finger configuration and object deformation

These signals allow robots to adapt their grasp, detect instability, and manipulate objects more reliably.
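
To make the idea of adapting a grasp concrete, here is a minimal sketch of one control step that tightens the grip when slip is reported and slowly relaxes it otherwise, loosely mirroring how people tighten their grip when an object starts to slide. The function name `next_grip_force` and all force values (in newtons) are assumptions chosen for illustration, not recommended settings.

```python
def next_grip_force(
    current: float,
    slip_detected: bool,
    step: float = 0.5,
    relax: float = 0.02,
    min_force: float = 1.0,
    max_force: float = 20.0,
) -> float:
    """Return the grip force command for the next control cycle.

    On slip, increase force by `step` (capped at `max_force`) so the object
    is secured quickly. Without slip, decrease by the smaller `relax` amount
    (floored at `min_force`) to avoid squeezing harder than needed.
    """
    if slip_detected:
        return min(current + step, max_force)
    return max(current - relax, min_force)


# Simulated sequence of slip flags from a tactile sensor (hypothetical data).
force = 5.0
history = []
for slipped in [False, True, True, False]:
    force = next_grip_force(force, slipped)
    history.append(force)
```

The asymmetry between `step` and `relax` is a design choice: reacting quickly to slip and releasing slowly tends to favor holding the object over minimizing force, which may not suit fragile items.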

As robotics moves toward physical AI, tactile sensing is becoming an important complement to vision systems.

Although tactile sensing has existed in robotics research for years, adoption in industry has been slower.

Several challenges explain why.

Sensor durability

Many tactile sensors developed in research labs are fragile and not designed for industrial environments.

Manufacturing environments introduce:

- dust

- vibrations

- temperature changes

- steady operation

Sensors must withstand millions of cycles.

Data interpretation

Tactile signals are complex.

Unlike images, which humans can easily interpret, tactile data is:

- high-dimensional

- noisy

- strongly linked to physical mechanics

Understanding what tactile signals mean during manipulation can require sophisticated models and signal processing.

Lack of standard datasets

Another challenge is the lack of large tactile datasets.

Vision systems benefit from billions of images and videos available online. Tactile data, on the other hand, must be collected through real-world interactions, which is much harder to scale.

Despite these challenges, tactile sensing is becoming increasingly important in robotics.

Several trends are accelerating adoption:

- improved sensor durability

- advances in AI and signal processing

- growing interest in physical AI

- growing demand for robots that may deal with unstructured environments

Robots are no longer limited to repetitive factory tasks. They’re being asked to perform more complex manipulation tasks, such as:

- bin picking

- flexible material handling

- assembly operations

- human–robot collaboration

These tasks require robots to adapt to uncertainty, which makes tactile feedback extremely valuable.

Vision will remain a fundamental sensing modality in robotics.

But the robots that succeed in real-world environments will combine multiple forms of perception.

Future robotic systems will rely on:

- vision for global perception

- tactile sensing for contact understanding

- force sensing for interaction control

Together, these sensing systems allow robots to move beyond simple automation and toward adaptive manipulation.

This combination is one of the key building blocks of physical AI.

In our white paper, we explore how sensing, hardware design, and Lean Robotics principles are shaping the next generation of automation.

Read the white paper: Giving physical AI a hand