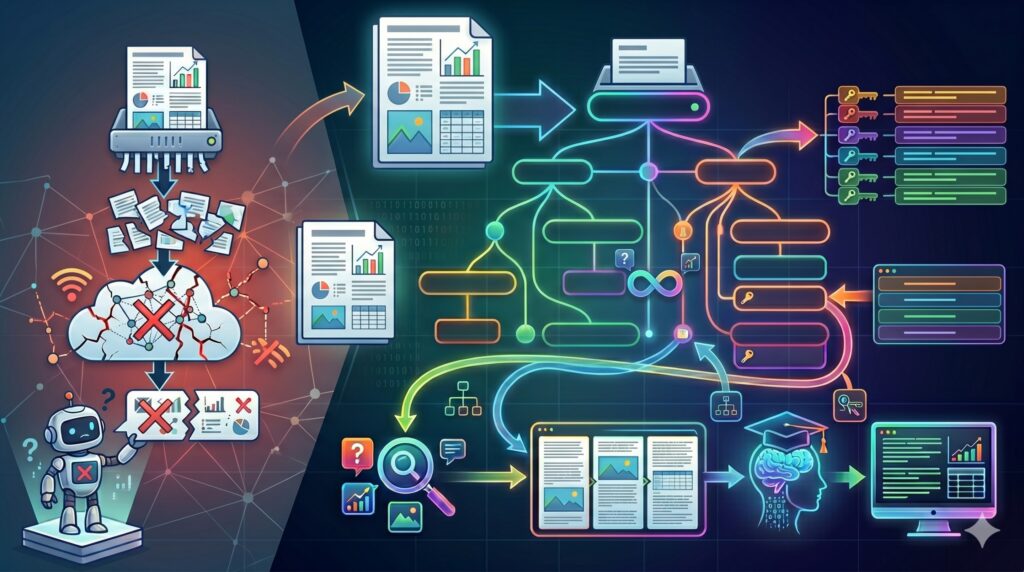

A picture is worth a thousand words. Yet only a few enterprise chatbots can reliably return images grounded in their source documents.

Why is that?

The reason is that, although this would be a big improvement over the text-only user experience, it is difficult to do reliably and consistently. Still, there is no dearth of use cases where it would be invaluable. From customers of real-estate projects to service technicians querying the latest machine parameters, users would much prefer to see the targeted, relevant property images and maintenance tables as part of the response. Instead, the best we can do today is a response with links to the source documents (brochures, videos, manuals) and webpages.

In this article, I’ll present an open-source MultiModal Proxy-Pointer RAG pipeline that can achieve this, primarily because it looks at a document as a hierarchical tree of semantic blocks rather than a bag of words that must be shredded blindly into chunks to answer a query.

This is a follow-up to my earlier articles on Proxy-Pointer RAG, where I explored the architecture rationale and implementation at length. Here, we will explore the following:

- Why is multimodal response a hard problem to solve? And what are the current techniques that can be applied?

- How Proxy-Pointer achieves it with full scalability and minimal cost using a text-only pipeline, with no multimodal embeddings required

- A working prototype with test queries for you to try using the open-source repo.

Let’s start.

Multimodality and Regular RAG

When we think of multimodal RAG, it almost always means you can search the knowledge base using images along with a text query. It’s rarely the other way around. To understand why, let’s look at the approaches typically used to return images:

Image captioning

Run an OCR/Vision model on the images, turn each image into a paragraph of text, and index it into a chunk along with other text. This isn’t ideal, since sliding-window chunking may result in the image caption being split across chunks.

The core issue is a misalignment between retrieval units and semantic units. Traditional RAG retrieves arbitrary chunks, whereas meaning, and especially images, belongs to coherent sections of a document.

When a chunk is retrieved, the LLM may only see a partial caption (e.g., for Figure 5), making it difficult to determine whether the image is actually relevant to this chunk or to an adjacent one that was not retrieved. In addition, the synthesizer typically receives multiple chunks from different documents with no shared context, possibly containing several unrelated image captions. This makes it difficult for the LLM to reliably decide which, if any, of the images are relevant to the user’s query.

Multimodal Embedding

Another approach is to embed both images and text into a shared vector space using a multimodal model. While this enables cross-modal retrieval, it introduces a different challenge. Multimodal embeddings optimize for similarity, not grounding. Visually or structurally similar artifacts, such as financial tables across different companies, can appear nearly identical in vector space, even if only one is relevant to the query.

Without the context of document structure, the system retrieves candidates based on similarity but cannot confidently determine which image actually belongs in the response. As a result, the LLM is forced to choose between several plausible but potentially incorrect visuals, often making it safer to return none at all than risk showing the wrong one.

Proxy-Pointer solves this by replacing text-based chunking with tree-based chunking. We don’t chunk by character count; we chunk by section boundaries. If a section contains 3 paragraphs and 2 images, none of its chunks bleed into the following section. The LLM can consider each section as a fully independent semantic unit and can judge the images in it with confidence.
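To make the idea of chunking by section boundaries concrete, here is a minimal sketch. It is not the repo’s implementation: the function names, the `max_chars` limit, and the assumption that sections are delimited by Markdown `#` headings are all illustrative.

```python
import re

# Assumption: sections are introduced by Markdown ATX headings ("#", "##", ...).
HEADING = re.compile(r"^(#{1,6})\s+(.*)$")


def split_into_sections(markdown: str) -> list[dict]:
    """Group body lines under the nearest preceding heading."""
    sections = []
    current = {"title": "(preamble)", "lines": []}
    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            if current["lines"]:
                sections.append(current)
            current = {"title": match.group(2).strip(), "lines": []}
        else:
            current["lines"].append(line)
    if current["lines"]:
        sections.append(current)
    return sections


def chunk_section(section: dict, max_chars: int = 400) -> list[str]:
    """Pack whole paragraphs into chunks; a chunk never leaves its section."""
    text = "\n".join(section["lines"])
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    chunks, buffer = [], ""
    for para in paragraphs:
        if buffer and len(buffer) + len(para) + 2 > max_chars:
            chunks.append(buffer)  # close the current chunk, start a new one
            buffer = para
        else:
            buffer = f"{buffer}\n\n{para}" if buffer else para
    if buffer:
        chunks.append(buffer)
    return chunks
```

Because chunks are built per section, an image reference and the paragraphs around it always stay inside the section that owns them, however small `max_chars` is set.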

Let’s see how this works in practice.

Prototype Setup

I built a multimodal chatbot on 5 AI research papers (all CC-BY licensed): CLIP, Nemobot, GaLore, VectorFusion and VectorPainter. For PDF extraction, the Adobe PDF Extract API was used. As can be expected, the papers contain dense text along with a total of 270 images (figures, tables, formulae) between them that Adobe could extract. The embedding model used is gemini-embedding-001 (with dimensions reduced to 1536 from the default 3072, which makes the search faster and reduces memory usage). This is a text-only embedding model; no multimodal embedding model is used. For all LLM uses (noise filter, re-ranker, synthesizer and final vision filter), gemini-3.1-flash-lite-preview is used. The vector index is FAISS.
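As a rough back-of-the-envelope check on the memory claim, the sketch below assumes an uncompressed float32 index (the article does not say which FAISS index type is used) and a hypothetical corpus of 10,000 chunks; only the two dimensions, 3072 and 1536, come from the setup above.

```python
BYTES_PER_FLOAT32 = 4


def flat_index_bytes(num_vectors: int, dim: int) -> int:
    """Raw vector storage for an uncompressed float32 index."""
    return num_vectors * dim * BYTES_PER_FLOAT32


num_chunks = 10_000  # hypothetical corpus size, for illustration only
full = flat_index_bytes(num_chunks, 3072)
reduced = flat_index_bytes(num_chunks, 1536)
print(f"{full / 1e6:.1f} MB vs {reduced / 1e6:.1f} MB")  # 122.9 MB vs 61.4 MB
```

Under these assumptions, halving the dimension halves both the stored bytes and the per-query dot-product work of a brute-force search.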

Multimodal Proxy-Pointer Architecture

In my earlier deep-dives, I shared evidence that Proxy-Pointer RAG could achieve 100% accuracy on financial 10-K documents by indexing “Strategic Pointers” (breadcrumbs like `Financials > Item 1A > Risk Factors`) instead of raw chunks.

For multimodal output, we modify the pipeline steps with the following premise: images (figures, tables, formulae, video clips, etc.) can be extracted as artifact files (.jpg, .png, .svg, .mp4, etc.) and stored alongside the document content. This is quite easy if the source document is a webpage or XML. For PDFs, an extractor such as the Adobe PDF Extract API (used here) can extract the tables and figures as artifacts, although not perfectly.

In the extracted document itself, which in our case is Markdown, every figure is present within the text as a relative path that points to the actual file. Following is an illustration:

Furthermore, inspired by the Tangram puzzle which forms different objects using a set of basic elements, as illustrated in Fig. 2(b), we reform the synthesis task as a rearrangement of a set of strokes extracted from the reference image.

“The Starry Night”

“Self-Portrait”

Which brings us to the key insight that Proxy-Pointer uses. In practice, the LLM doesn’t need to see the image itself to determine relevance. Instead, it only needs to know that an image exists within a specific section of the document. Since Proxy-Pointer retrieval brings in full sections, rather than fragmented chunks, the LLM can rely on the section’s full context to assess relevance. This turns image selection into a conditional decision based on the section’s meaning and the user query, rather than an open-ended search problem based on multimodal similarity matching.

This is exactly how humans read. We don’t jump to view every table and figure mentioned; we first use the section context and our question to decide which ones are worth looking at.

Here is the indexing pipeline:

Skeleton Tree: As before, we parse the Markdown headings into a hierarchical tree with pure Python. Only now, a figures array is nested inside each node, noting every figure found within that node (section) along with its path. The path is used to retrieve the image file for display. The rest of the fields are self-explanatory:

    {
      "title": "1 Introduction",
      "node_id": "0003",
      "line_num": 17,
      "figures": [
        {
          "fig_id": "fig_1",
          "filename": "figures/fileoutpart0.png"
        },
        {
          "fig_id": "fig_2",
          "filename": "tables/fileoutpart1.png"
        }
      ]
    },

The next 4 steps essentially remain the same as before:

Breadcrumb Injection: Prepend the full structural path (Galore > 3. Methodology > 3.1. Zero Convolution) to every chunk before embedding.

Structure-Guided Chunking: Split text within section boundaries, never across them.

Noise Filtering: Remove distracting sections (TOC, glossary, executive summaries, references) from the index using an LLM.

Pointer-Based Context: Use retrieved chunks as pointers to load the full, unbroken document section (which now contains image paths within the text) for the synthesizer.
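The Skeleton Tree and Breadcrumb Injection steps above can be sketched as follows. This is an illustrative approximation, not the repo’s `md_tree_builder.py`: the `level` and `nodes` keys, the function names, and the assumption that figures appear as Markdown image links of the form `![alt](path)` are mine; `title`, `node_id`, `line_num`, `figures`, `fig_id` and `filename` follow the node example shown earlier.

```python
import re

HEADING = re.compile(r"^(#{1,6})\s+(.*)$")
# Assumption: figures are embedded as Markdown image links, ![alt](path).
IMAGE = re.compile(r"!\[[^\]]*\]\(([^)\s]+)\)")


def build_tree(markdown: str, doc_name: str) -> dict:
    """Parse headings into a nested tree; attach figures to their section."""
    root = {"title": doc_name, "level": 0, "figures": [], "nodes": []}
    stack = [root]  # path from the root to the section currently being read
    node_count = fig_count = 0
    for line_num, line in enumerate(markdown.splitlines(), start=1):
        heading = HEADING.match(line)
        if heading:
            level = len(heading.group(1))
            while stack[-1]["level"] >= level:  # climb back to the parent
                stack.pop()
            node_count += 1
            node = {
                "title": heading.group(2).strip(),
                "node_id": f"{node_count:04d}",
                "line_num": line_num,
                "level": level,
                "figures": [],
                "nodes": [],
            }
            stack[-1]["nodes"].append(node)
            stack.append(node)
            continue
        for path in IMAGE.findall(line):
            fig_count += 1
            stack[-1]["figures"].append(
                {"fig_id": f"fig_{fig_count}", "filename": path}
            )
    return root


def breadcrumbs(node: dict, trail: tuple = ()):
    """Yield (node, 'Doc > Section > Subsection') for every node in the tree."""
    trail = trail + (node["title"],)
    yield node, " > ".join(trail)
    for child in node["nodes"]:
        yield from breadcrumbs(child, trail)
```

Each breadcrumb string would then be prepended to the chunks of its node before embedding, so the vector carries the section’s position in the document as well as its text.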

The updated retrieval pipeline for multimodal retrieval is as follows:

Stage 1 (Broad Recall): FAISS returns the top 200 chunks by embedding similarity. These are deduplicated by `(doc_id, node_id)` to ensure we’re looking at unique document sections, resulting in a shortlist of the top 50 candidate nodes. This step remains the same as before.
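A minimal sketch of the Stage 1 deduplication, assuming the vector search has already returned hits ordered best first and that each hit carries `doc_id` and `node_id` metadata. The function name and the dictionary layout are hypothetical.

```python
def shortlist_nodes(hits: list[dict], max_nodes: int = 50) -> list[dict]:
    """Keep the best-scoring hit per (doc_id, node_id), up to max_nodes.

    `hits` is assumed to be sorted by similarity, best first, as a
    top-k vector search would return it.
    """
    seen = set()
    shortlist = []
    for hit in hits:
        key = (hit["doc_id"], hit["node_id"])
        if key in seen:
            continue  # another chunk of a section we already have
        seen.add(key)
        shortlist.append(hit)
        if len(shortlist) == max_nodes:
            break
    return shortlist
```

Several chunks of the same section collapse into one candidate, so the re-ranker sees distinct sections rather than near-duplicate fragments.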

Stage 2 (Anchor-Aware Structural Re-Ranking): The re-ranker now receives the full breadcrumb path (as before) plus a semantic snippet (150 characters) for each of the 50 candidates. This was introduced because, unlike financial 10-Ks or technical manuals, academic papers often use generic, non-descriptive headings (like ‘3. Experiments,’ ‘4. Optimization,’ or ‘5. Comparison’). The LLM therefore needs a tiny ‘semantic hint’ to accurately pinpoint which of those vague sections actually contains the precision and similarity scores the user is asking for.
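One possible way to assemble the re-ranker input is sketched below. The line layout, the function name, and the `breadcrumb` and `text` fields are assumptions for illustration; only the breadcrumb-plus-150-character-snippet idea comes from the description above.

```python
def build_rerank_candidates(nodes: list[dict], snippet_chars: int = 150) -> str:
    """Format one line per candidate: index, breadcrumb, short text snippet."""
    lines = []
    for i, node in enumerate(nodes, start=1):
        # Collapse whitespace so the snippet budget is not spent on newlines.
        snippet = " ".join(node["text"].split())[:snippet_chars]
        lines.append(f"[{i}] {node['breadcrumb']} | {snippet}")
    return "\n".join(lines)
```

The resulting text block would be placed in the re-ranker prompt, which then returns the indices of the most relevant sections.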

Stage 3 (Synthesis and Context-Aware Image Selection): The synthesizer LLM reviews the final k=5 sections and forms the text response. In addition, it decides which of the images found within those sections should be displayed. It scans the sections for image paths and selects a list of at most 6 images that seem most relevant to the query. The synthesizer also forms accurate image labels for display, even when a table or figure doesn’t have an explicit caption by the author.
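The selection itself is made by the LLM, but the surrounding bookkeeping can be sketched in plain Python. The snippet below is a hypothetical guard, not the repo’s code: it collects the image paths that actually occur in the retrieved sections, then keeps only LLM-selected paths that appear in that set, capped at 6. It assumes images are embedded as Markdown links `![alt](path)` and that each section is a dict with a `text` field.

```python
import re

# Assumption: images are embedded as Markdown links, ![alt](path).
IMAGE = re.compile(r"!\[[^\]]*\]\(([^)\s]+)\)")
MAX_IMAGES = 6


def candidate_images(sections: list[dict]) -> list[str]:
    """All image paths present in the retrieved sections, in reading order."""
    found = []
    for section in sections:
        for path in IMAGE.findall(section["text"]):
            if path not in found:
                found.append(path)
    return found


def accept_selection(selected: list[str], sections: list[dict],
                     max_images: int = MAX_IMAGES) -> list[str]:
    """Drop paths the LLM invented or repeated, then cap the list."""
    allowed = set(candidate_images(sections))
    kept = []
    for path in selected:
        if path in allowed and path not in kept:
            kept.append(path)
    return kept[:max_images]
```

A check like this means an image can only be shown if its path came from a section that was actually retrieved.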

The above pipeline achieves 95% accuracy for image retrievals on the 20-question benchmark I created, as judged by Claude. I’ve shared a few of the results in the next section. The full results are available in the repo. Additionally, if you wish to refine the results further, the next step is an optional Vision filter.

Stage 4 (Vision Filter, optional): For further refinement of the selected images, an optional Vision selection step can be turned on in config.py. Here the LLM is asked to actually see the 6 images using its vision capabilities, consider the user query and text response, and drop any images that don’t seem relevant. This results in precise, curated images being shown in the response but adds a few seconds of latency. This was not used for the benchmark test results.

Finally, a simple Streamlit UI is created to visualize the outputs.

Results

I tried the bot on a 20-question set, ranging from precise retrieval to cross-document reasoning, structural reasoning and so on. Full results are present in my GitHub repo. The results are: 17 good retrievals, 1 with no image retrieved, and 2 partial retrievals. However, the most important observation is that there is no case where an incorrect image from a document unrelated to the query has leaked into the results, which would erode trust in the bot. This is possible due to the surgical accuracy of Proxy-Pointer. Where image retrievals fall short, it is due to the LLM variance and child-node causes that I discuss in the next section.

Following is a snapshot of a few results. For brevity, the text responses are truncated here:

Precise Data Retrieval Question: What are the hyperparameters used for fine-tuning RoBERTa-Base for GaLore across different GLUE tasks?

The hyperparameters used for fine-tuning RoBERTa-Base for GaLore across GLUE tasks include a training duration of 30 epochs, a maximum sequence length of 512, and a batch size of 32 f

Sources:

• Galore > D. Fine-Tuning Experiments > D.1. Details of Fine-Tuning on GLUE

• Galore > Epochs

• Galore > 5. Experiments

AI-Verified Evidence List:

• Galore - Table 7: Hyperparameters of fine-tuning RoBERTa base for GaLore (Galore/tables/fileoutpart157.png)

Cross Document Reasoning Question: Both GaLore and CLIP-CITE address the challenge of efficient model training. Compare GaLore’s gradient projection approach with CLIP-CITE’s fine-tuning strategy in terms of memory efficiency and knowledge preservation.

Memory Efficiency

GaLore: Focuses on reducing the memory footprint of optimizer states (e.g., Adam) by projecting gradients into a low-rank subspace. By tracking only low-rank gradient statistics, it avoids storing full-rank matrices. It is more memory-efficient than LoRA

..contd

Sources:

• Galore > 4. GaLore for Memory-Efficient Training > 4.2. Memory-Efficient Optimization

• Galore > 5. Experiments > 5.4. Memory-Efficient Fine-Tuning

• CLIP > 4 Experiments > 4.3 Further Analysis

AI-Verified Evidence List:

• Galore - Table 1: Comparison between GaLore and LoRA (Galore/tables/fileoutpart39.png)

• CLIP - Table 5: Comparison performances and training efficiency (CLIP/tables/fileoutpart18.png)

Visual Query: Describe the VectorFusion pipeline stages: how is the raster image first sampled, then converted to SVG, and finally fine-tuned using latent score distillation

The VectorFusion pipeline generates SVGs through three main stages:

Raster Sampling: A raster image is sampled from a pretrained text-to-image diffusion model (e.g., Stable Diffusion) using a Runge-Kutta solver. To ensure iconographic aesthetics, the input text is appended with a prompt suffix

..contd

Sources:

• VectorFusion > 4. Method: VectorFusion

• VectorFusion > 4. Method: VectorFusion > 4.1. A baseline: text-to-image-to-vector

• VectorFusion > 4. Method: VectorFusion > 4.2. Sampling vector graphics by optimization

AI-Verified Evidence List:

• VectorFusion - Figure 3: VectorFusion generates SVGs in three stages. (VectorFusion/figures/fileoutpart29.png)

• VectorFusion - Figure 5: Overview of VectorFusion’s latent score distillation optimization procedure. (VectorFusion/figures/fileoutpart33.png)

Visual Question: What games are implemented in Nemobot, and how are they categorized according to Shannon’s game taxonomy?

Nemobot implements a variety of games categorized according to Shannon’s game taxonomy, which classifies machines based on their decision-making logic:

Category 1: Dictionary-Based Machines

Tic Tac Toe: Uses a dictionary-based system where the LLM agent dynamically generates optimal moves for game states, effectively compressing the state-action dictionary into its inference capabilities.

..contd

Sources:

• NemoBot > II. GAME PLAYING WITH LARGE LANGUAGE MODELS > A. Shannon’s Taxonomy of AI Games with LLMs

• NemoBot > IV. EVALUATION OF NEMOBOT GAMES > A. Dictionary-Based Game: Tic Tac Toe

• NemoBot > I. INTRODUCTION

AI-Verified Evidence List:

• NemoBot - Table I: Nemobot games categorized according to Shannon’s game taxonomy (NemoBot/tables/fileoutpart5.png)

Edge Cases & Design Trade-offs

LLM non-determinism

Since the image selection is performed by the LLM, even with temperature = 0.0, repeated runs of the same query may surface slightly different images. Depending on our preference, we may feel some are more relevant than others.

Child-node figures

Specific queries (e.g., What is Vision Distillation Loss?) are likely to find sections with the particular concept and display the precise formula and figures much better than broad ones (e.g., Compare the VectorFusion pipeline with VectorPainter). Broad queries are likely to retrieve header-level nodes (sections), while the relevant figures may reside within child nodes that don’t fit within the k=5 context window. However, asking about either pipeline individually would work fine, since all 5 slots go to one paper, bringing enough child nodes, and therefore the relevant figures, into context.

Detached image paths

This approach assumes the image path physically exists within the retrieved section. If a figure is referenced in the text but stored in a separate section (say, an Appendix) that isn’t retrieved, it won’t be surfaced. A practical workaround is to name image files in a way that can be derived, such as `table_1.jpg` or `figure_3.png`, so the synthesizer can construct the path from the reference, rather than relying on generic extractor names like `fileoutpart1.png`. Regardless of approach, the core principle holds: no multimodal embedding or visual interpretation is required. Full section context is sufficient for the LLM to make intelligent image selections.
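The naming workaround could look roughly like this. It is a sketch under stated assumptions: the regular expression, the function name, and the fixed extension per artifact type (mirroring the `table_1.jpg` and `figure_3.png` examples) are illustrative, and a real corpus may need a different convention.

```python
import re

# Matches in-text references such as "Table 1", "Figure 3" or "Fig. 3".
REFERENCE = re.compile(r"\b(Table|Figure|Fig\.)\s*(\d+)", re.IGNORECASE)
# Assumed convention, mirroring the examples table_1.jpg and figure_3.png.
EXTENSIONS = {"table": "jpg", "figure": "png"}


def derive_paths(text: str) -> list[str]:
    """Build derivable file names from figure/table references in the text."""
    paths = []
    for kind, number in REFERENCE.findall(text):
        kind = "figure" if kind.lower().startswith("fig") else "table"
        path = f"{kind}_{number}.{EXTENSIONS[kind]}"
        if path not in paths:
            paths.append(path)
    return paths
```

With names like these, a reference in a retrieved section can be resolved to a file even when the image itself was stored under a section that was not retrieved.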

Open-Source Repository

Proxy-Pointer is fully open-source (MIT License) and can be accessed at the Proxy-Pointer GitHub repository. The multimodal pipeline is being added to the same repo alongside the existing text-only version.

It’s designed for a 5-minute quickstart:

    MultiModal/
    ├── src/
    │   ├── config.py               # Model selection (Gemini 3.1 Flash Lite)
    │   ├── agent/
    │   │   └── mm_rag_bot.py       # MultiModal RAG logic
    │   ├── indexing/
    │   │   ├── md_tree_builder.py  # Structure tree generator
    │   │   └── build_md_index.py   # Vector index builder
    │   └── extraction/
    │       └── extract_pdf.py      # Adobe PDF extraction to MD logic
    ├── data/                       # Unified data hub
    │   ├── extracted_papers/       # Processed Markdown & figures
    │   └── pdf/                    # Original source PDFs
    ├── results/                    # Benchmarking hub
    │   ├── test_log.json           # 20-query results & metrics
    │   └── test_queries.json       # Benchmark questions
    ├── app.py                      # Streamlit multimodal UI
    └── run_test_suite.py           # Automated benchmark runner

Key Takeaways

- Multimodal RAG is not primarily a vision problem; it’s a retrieval alignment problem. The challenge is not extracting or embedding images, but confidently associating them with the right semantic context.

- Chunk-based retrieval breaks visual coherence. Sliding-window chunking fragments captions and disconnects images from their true semantic units, making reliable selection difficult.

- Multimodal embeddings introduce ambiguity, not clarity. Visually similar artifacts (e.g., tables, diagrams) are difficult to tell apart in the same vector space, making it hard to determine relevance without structural grounding.

- Structure is the missing layer. Treating documents as hierarchical semantic units allows images to inherit meaning from their section, enabling confident selection.

- Proxy-Pointer reframes the problem. Instead of searching for images directly, it retrieves sections and selects images conditionally based on full context, turning a hard retrieval problem into a simpler filtering task.

- Accuracy matters more for visuals than text. Showing an incorrect image can be more damaging than omitting one entirely, making precision critical for enterprise use cases.

Conclusion

Multimodal responses have long been seen as the next step in the evolution of RAG systems. Yet, despite advances in vision models and multimodal embeddings, reliably returning relevant images alongside text remains an unsolved problem.

The reason is subtle but fundamental: traditional RAG pipelines operate on fragmented chunks, while meaning, especially visual meaning, lives at the level of full document structure. Without aligning retrieval to semantic units, even the most advanced models struggle to make the right visual associations.

Proxy-Pointer MultiModal RAG addresses this gap by upgrading the foundation from flat chunks to structured context. By retrieving full sections and treating image paths as pointers to artifacts within them, it enables accurate, scalable, and cost-efficient multimodal responses without relying on expensive multimodal embeddings.

The result is a practical step forward: chatbots that don’t just narrate, but show precise evidence, always grounded in the right context.

Clone the repo. Try your own documents. Let me know your thoughts.

Connect with me and share your comments at www.linkedin.com/in/partha-sarkar-lets-talk-AI

All research papers used in this article are available at CLIP, Nemobot, GaLore, VectorFusion and VectorPainter with CC-BY license. Code and benchmark results are open-source under the MIT License. Images used in this article are generated using Google Gemini.