understand the difference between raisins, green peppers and a salt shaker? More importantly, how can they figure out how to fold a t-shirt?

That’s the magic of Vision-Language-Action (VLA) models.

This article is a concise summary of modern vision-language-action models (VLAs), distilled from a meta-analysis of the latest “frontrunning” models, along with the related mathematical concepts.

You’ll learn:

- Useful conjectures

- The mathematical fundamentals

- Real-world neural architectures

- How VLAs are trained

Preliminaries

If any of the following concepts are foreign to you, it’s worthwhile to spend some time learning them: they cover key elements of modern data-driven multimodal robot control (especially VLAs).

- Transformers: the dominant architecture pattern of today’s VLAs comprises a Vision Language Model (VLM) backbone, which is a transformer-based vision+language encoder

- Representation Learning: the advances in VLAs are strongly driven by optimizing learned representations, or projections to latent space, for control policies

- Imitation Learning: learning action policies from demonstration data generated by human movement or teleoperated robot trajectories

- Policy Optimization: high-performing robot control policies often combine imitation learning and policy optimization, creating a stochastic policy capable of generalizing to new domains and tasks.

Useful Conjectures

These are by no means absolute laws. In my opinion, these conjectures are helpful for understanding (and building) agents which interact with the world.

💭Latent representation learning may be foundational to intelligence

While unproven, and vastly oversimplified here, I believe this to be true given the following:

- LLMs, or other transformer models, don’t learn the grammar of the English language, or any language. They learn an embedding: a map which geometrically projects tokens, or quantized observations, into semantically similar representations in N-dimensional latent space.

- Some leading AI researchers, such as Yann LeCun (with his Joint Embedding Predictive Architecture, or JEPA), argue that human-level AI requires “World Models” (LeCun, “A Path Towards Autonomous Machine Intelligence”). A world model rarely predicts in pixel space, but predicts in latent space, making causal reasoning and prediction abstract and tractable. This gives a robot a sense of “If I drop the glass, it will break.”

- From biology: neuroscientists and the “free energy principle” (Karl Friston, “The Free-Energy Principle: A Unified Brain Theory?”). This is a deeply complex topic with many branches, but at a high level it posits that the brain makes predictions and minimizes error (variational free energy) based on internal “latent” models. When I say latent, I’m also drawing on the neural manifold hypothesis (Gallego et al., “A Unifying Perspective on Neural Manifolds and Circuits for Cognition”) as applied to this space.

I realize that this is a very profound and complex conjecture which is up for debate. However, it would be hard to argue against learning representation theory given that all the latest VLAs use latent space projections as a core building block of their architectures.

💭Imitation is fundamental to energy efficient, robust robot locomotion

Why did it take so long to get walking right? No human [expert] priors. Here’s an example of locomotion, as demonstrated by Google DeepMind vs. DeepMimic, a very impactful paper which demonstrated the unreasonable effectiveness of training with expert demonstrations. While energy wasn’t explicitly measured, comparing the two shows the effect of imitation on efficient humanoid locomotion.

Example 1: From DeepMind’s “Emergence of Locomotion Behaviours in Rich Environments” (Heess et al., 2017)

Example 2: DeepMimic: Example-Guided Deep Reinforcement Learning of Physics-Based Character Skills (Peng et al., 2018)

On Teleoperation (Teleop)

Teleoperation is clearly evident in the training of the latest humanoids, and even in the latest demonstrations of robot control.

But teleop isn’t a dirty word. In fact, it’s necessary. Here’s how teleoperation can help policy formation and optimization.

Instead of the robot attempting to generate control outputs from scratch (e.g. the awkward, jerky movements from the first successful humanoid control policies), we can augment our policy optimization with samples from a nice smooth dataset, which represents the correct action trajectory, as performed by a human in teleoperation.

This means that, as a robot learns internal representations of visual observations, an expert can provide precision control data. So when I prompt “move x to y”, a robot can not only learn a robust stochastic policy through policy optimization methods, but also clone expert behavior through imitation priors.

Although the reference data wasn’t teleoperated movement, human motion priors and imitation learning are employed by Figure AI in their latest VLA: Helix 02: A Unified Whole-Body Loco-Manipulation VLA. It contains an additional system (S0), which was trained on joint-retargeted human movement priors and is used for stable, whole-body locomotion.

Job postings by the company, including this one for Humanoid Robot Pilot, strengthen the argument.

Understanding latent representations and generating rich, expert-driven trajectory data are both extremely useful in the space of modern robot control.

The Mathematical Fundamentals

Again, this isn’t an exhaustive summary of every foundational lemma and proof, but enough to satiate your appetite, with links to deep dive if you so choose.

Even though a VLA seems complex, at its core a VLA model reduces down to a simple conditioned policy learning problem. By that I mean we want a function, commonly denoted in the policy form $\pi_\theta(a_t \mid o_t, \ell)$, which maps what a robot sees and hears (in natural language) to what it should do.

This function gives an action output (over all the actions that the robot can perform) for every observation (what it sees and hears) at every timestep. For modern VLAs, this breaks down into action sequences at control rates of around 50 Hz.

How do we get this output?

Formally, consider a robot operating in a partially observable Markov decision process (POMDP). At each timestep $t$:

- The robot receives an observation $o_t$, typically an RGB image (or set of images or video frame) plus the internal proprioceptive state (joint angles, gripper state).

- It’s given a language instruction $\ell$: a natural-language string like “pick up the coke can and move it to the left.”

- It must produce an action $a_t$, usually a vector of end-effector deltas and a gripper command.

The VLA’s job is to learn a policy:

$$\pi_\theta(a_t \mid o_t, \ell)$$

that maximizes the probability of task success across diverse environments, instructions, and embodiments. Some formulations condition on the observation history $o_{1:t}$ rather than a single frame, but most modern VLAs operate on the current observation (or a short window) together with goal tokens and the robot’s current proprioceptive state, and rely on action chunking (more on that shortly) for temporal coherence.

Here, the stochastic policy is learned via policy optimization. Refer back to the preliminaries.

The action space

Understanding how robots perceive and interact with the environment is the foundation for learning more complex topics.

Here, I describe the action space for a simple robot, but the same framework extends seamlessly to more advanced humanoid systems.

A typical single-arm robot manipulator has 7 DoF (degrees of freedom) with a 1 DoF gripper.

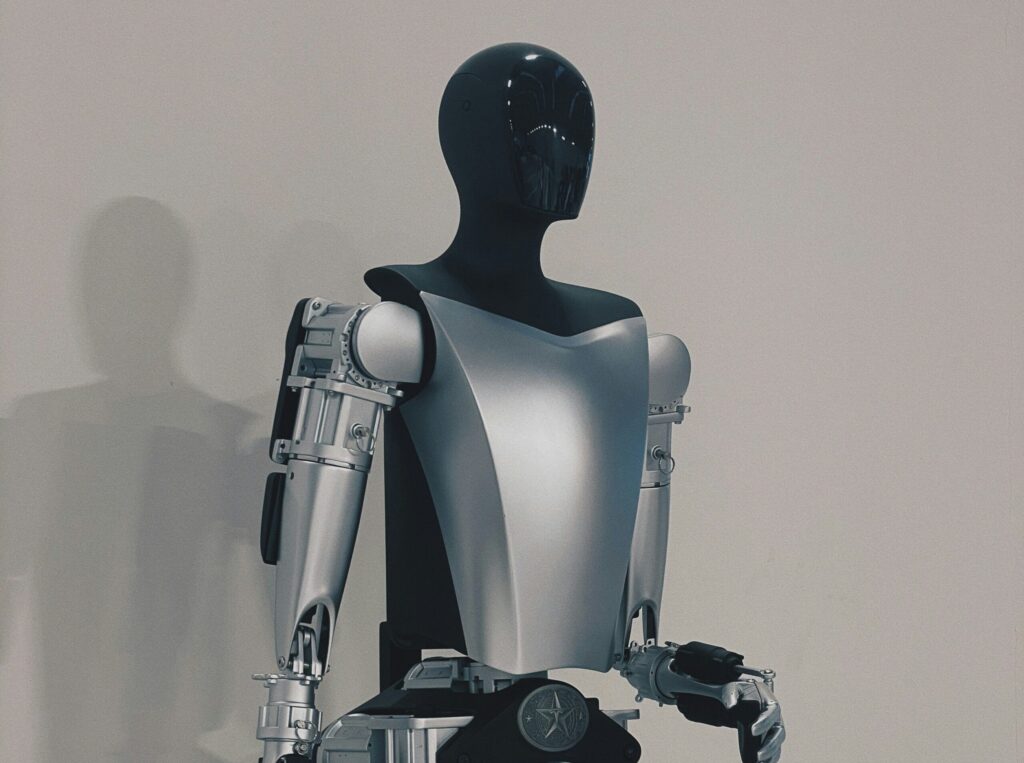

As expressed, this is a simplified control system. For example, the mobile robots used in $\pi_{0.5}$ have up to 19 DoF, while humanoid robots, such as Tesla’s Optimus and Boston Dynamics, have up to 65+ DoF, with 22 in the “hands” alone.

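Vectorizing a single robot configuration (typically expressed as angles) gives us:

$$q = (\theta_1, \theta_2, \dots, \theta_7, g) \in \mathbb{R}^8$$

This gives us an 8-dimensional space representing all possible poses for our arm.

A control command is expressed in deltas, e.g. increase angle $\theta_i$ by $\Delta\theta_i$, plus a gripper state. This gives us

$$a_t = (\Delta\theta_1, \Delta\theta_2, \dots, \Delta\theta_7, g) \in \mathbb{R}^8$$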

Why is this important?

The state vector and the control command vector are both continuous.

Producing output actions ($a_t$) from internal representations is one of the most consequential design decisions in active VLA research. Modern models use one of the following three strategies.

Strategy #1: Action Tokenization

The idea is relatively simple: discretize each action dimension into $K$ uniform bins (typically $K = 256$). Each bin index becomes a token appended to the language model’s vocabulary.

An action vector becomes a sequence of tokens, and the model predicts them autoregressively, just like training GPT.

$$\pi_\theta(a_t \mid o_t, \ell) = \prod_{i=1}^{D} p_\theta\left(a_t^i \mid a_t^{<i}, o_t, \ell\right)$$

where $a_t = (a_t^1, \dots, a_t^D)$ and each (quantized) $a_t^i$ is a bin index in $\{0, \dots, K-1\}$

So each control command is a “word” in a space of possible “words”, the “vocabulary”, and the model is trained almost exactly like GPT: predict the next token given the sequence of tokens.

This approach is used quite effectively in RT-2 and OpenVLA, some of the earliest examples of successful VLAs.

Unfortunately, for precision control tasks, the discretization leaves us with a “quantization” error which cannot easily be recovered. That means that when translating continuous -> discrete, we lose precision. This can result in jerky, awkward control policies which break down for tasks like “pick up this tiny screw.”
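To make the quantization error concrete, here is a minimal NumPy sketch of uniform binning and its inverse. The function names, the per-dimension `low`/`high` limits, and the choice to decode to bin centers are my own assumptions for illustration, not the exact scheme of RT-2 or OpenVLA.

```python
import numpy as np

def tokenize_action(action, low, high, num_bins=256):
    """Map each continuous action dimension to a bin index in [0, num_bins - 1]."""
    action = np.clip(action, low, high)
    scaled = (action - low) / (high - low)           # now in [0, 1]
    tokens = (scaled * num_bins).astype(np.int64)    # floor into a bin
    return np.minimum(tokens, num_bins - 1)          # the upper edge falls in the last bin

def detokenize_action(tokens, low, high, num_bins=256):
    """Map bin indices back to continuous values (assumed here: the bin centers)."""
    centers = (tokens + 0.5) / num_bins
    return low + centers * (high - low)

# Hypothetical 8-D command: 7 joint deltas plus a gripper value, all in [-1, 1].
low, high = np.full(8, -1.0), np.full(8, 1.0)
action = np.array([0.013, -0.402, 0.250, 0.999, -1.0, 0.5, 0.0, 1.0])

tokens = tokenize_action(action, low, high)
recovered = detokenize_action(tokens, low, high)

# The round trip is lossy: the error is bounded by half a bin width.
print("tokens:", tokens)
print("max round-trip error:", np.max(np.abs(recovered - action)))
print("half bin width:", (high[0] - low[0]) / 256 / 2)
```

With 256 bins over a range of width 2, no decoded value can land closer than about 0.004 to an arbitrary target, which is exactly the kind of error that hurts on a tiny screw.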

Strategy #2: Diffusion-Based Action Heads

Rather than discretizing, you can keep actions continuous and model the conditional distribution $p(a_t \mid o_t, \ell)$ using a denoising diffusion process.

This diffusion process was sampled from Octo (Octo: An Open-Source Generalist Robot Policy), but similar processes are applied across various architectures, such as GR00T.

Run a forward pass of the transformer backbone to obtain the trained “representation.” This single latent vector is a representation of the visual field, the instruction tokens, and goal tokens at any state. We denote this as $e$.

Run the diffusion process, which can be summarized with the following steps:

Sample an initial latent $x^K \sim \mathcal{N}(0, I)$ (noise)

Run $K$ denoising steps using a learned network; here $\epsilon_\theta$ is a learned diffusion model.

Each update: $x^{k-1} = \alpha\left(x^k - \gamma\,\epsilon_\theta(x^k, e, k) + \mathcal{N}(0, \sigma^2 I)\right)$

$x^k$: current noisy action

$\epsilon_\theta(x^k, e, k)$: predicts the noise to remove, conditioned on the representation $e$ from our transformer backbone and timestep $k$

$\gamma$: scales the denoising correction

added $\mathcal{N}(0, \sigma^2 I)$: reintroduces controlled noise (stochasticity)

$\alpha$: rescales according to the noise schedule

I’m slightly mangling the standard notation for Denoising Diffusion Probabilistic Models (DDPM). The abstraction is correct.

This process, iteratively performed with the trained diffusion model, produces a stochastic and continuous action sample. Because this action sample is conditioned on the encoder output $e$, our trained diffusion model only generates actions relevant to the input context.
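The denoising loop can be sketched in a few lines of NumPy. This is a sketch under stated assumptions, not Octo's implementation: it uses the standard DDPM ancestral sampling update with a linear beta schedule (my choice), and since we have no trained network, `make_oracle_eps_model` is a hypothetical stand-in that returns the exact noise for a single known target action. A real noise predictor would be a network conditioned on the backbone representation `e`.

```python
import numpy as np

def make_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Linear beta schedule (an assumption; other schedules are common)."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    alphas = 1.0 - betas
    alpha_bars = np.cumprod(alphas)
    return betas, alphas, alpha_bars

def sample_action(eps_model, e, action_dim, num_steps, rng):
    """Start from Gaussian noise and run num_steps denoising updates."""
    betas, alphas, alpha_bars = make_schedule(num_steps)
    x = rng.standard_normal(action_dim)                  # initial noisy action
    for k in reversed(range(num_steps)):
        eps_hat = eps_model(x, e, k)                     # predicted noise to remove
        gamma = betas[k] / np.sqrt(1.0 - alpha_bars[k])  # scales the correction
        rescale = 1.0 / np.sqrt(alphas[k])               # noise-schedule rescaling
        # Re-inject noise on every step except the last one.
        noise = rng.standard_normal(action_dim) if k > 0 else np.zeros(action_dim)
        x = rescale * (x - gamma * eps_hat) + np.sqrt(betas[k]) * noise
    return x

def make_oracle_eps_model(target_action, num_steps):
    """Hypothetical stand-in for a trained network: exact noise for one known target."""
    _, _, alpha_bars = make_schedule(num_steps)
    def eps_model(x, e, k):
        # `e` is ignored here; a trained model would condition on it.
        return (x - np.sqrt(alpha_bars[k]) * target_action) / np.sqrt(1.0 - alpha_bars[k])
    return eps_model

rng = np.random.default_rng(0)
target = np.array([0.10, -0.20, 0.30])   # made-up 3-D action
model = make_oracle_eps_model(target, num_steps=20)
print(sample_action(model, e=None, action_dim=3, num_steps=20, rng=rng))
```

With the oracle, the loop lands on the target action, which is a handy sanity check on the update rule before swapping in a learned model.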

Diffusion heads shine when the action distribution is multimodal: there might be multiple valid ways to grasp an object, and a unimodal Gaussian (or a single discrete token) can’t capture that well, due to the limitations of quantization discussed above.

Strategy #3: Flow matching

The successor of diffusion has also found a home in robot control.

Instead of stochastic denoising, a flow matching model elegantly learns a velocity field, which determines how to move a sample from noise to the target distribution.

This velocity field can be summarized by:

At every point in space and time, which direction should x move, and how fast?

How do we learn this velocity field in practice, especially in the domain of continuous control?

The flow matching process described below was taken from π0: A Vision-Language-Action Flow Model for General Robot Control

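Begin with a valid action sequence $A_t$

Corrupt with noise, creating $A_t^\tau = \tau A_t + (1 - \tau)\,\epsilon$

where $\epsilon \sim \mathcal{N}(0, I)$ and $\tau \in [0, 1]$

$\tau = 0$ = pure noise, $\tau = 1$ = target

Learn the vector field $v_\theta$ with the loss function:

$$L(\theta) = \mathbb{E}\left[\left\lVert v_\theta(A_t^\tau, o_t) - u(A_t^\tau \mid A_t) \right\rVert^2\right]$$

Here, the target vector field $u(A_t^\tau \mid A_t) = A_t - \epsilon$ is simply the mathematical derivative of this path with respect to time $\tau$.

It represents the exact direction and speed you need to move to get from the noise to the true action. We have this, because we did the noising! Simply calculate the difference (ground truth action – noise sample) for each training example.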

Now the elegant piece. At inference, we have no ground truth of actions, but we do have our trained vector field model.

Because our vector field model, $v_\theta$, now accurately predicts continuous outputs over noised samples, we can use the forward Euler integration rule, as specified here:

$$A_t^{\tau + \delta} = A_t^\tau + \delta\, v_\theta(A_t^\tau, o_t)$$

to move us incrementally from noise to clean continuous action samples with $\delta = 0.1$. We use the simple Euler method over 10 integration steps [as used in $\pi_0$] for latency.

At step 0, we’ve got mostly noise. At step 10, we’ve got a chunk of actions which are accurate and precise for continuous control.
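Both halves of this recipe, building the training target and integrating at inference, fit in a short NumPy sketch. I'm assuming the linear path from noise at τ = 0 to target at τ = 1, so the target velocity is (action minus noise). The `make_oracle_velocity_model` helper is a hypothetical stand-in for a trained network: it returns the exact velocity field for a single known action chunk, which lets us check the Euler loop without training anything.

```python
import numpy as np

def make_training_pair(actions, noise, tau):
    """Noised sample on the straight path and the velocity the model should predict."""
    noised = tau * actions + (1.0 - tau) * noise
    target_velocity = actions - noise   # d/d(tau) of the path above
    return noised, target_velocity

def flow_matching_loss(predicted_velocity, target_velocity):
    """Mean squared error between predicted and target velocities."""
    return float(np.mean((predicted_velocity - target_velocity) ** 2))

def euler_sample(velocity_model, obs, noise, num_steps=10):
    """Integrate from tau = 0 (noise) to tau = 1 (actions) with forward Euler."""
    delta = 1.0 / num_steps
    x = noise.copy()
    for k in range(num_steps):
        tau = k * delta
        x = x + delta * velocity_model(x, obs, tau)
    return x

def make_oracle_velocity_model(target_actions):
    """Hypothetical stand-in for a trained model: exact field for one known chunk."""
    def velocity_model(x, obs, tau):
        # `obs` is ignored here; a trained model would condition on it.
        return (target_actions - x) / (1.0 - tau)
    return velocity_model

rng = np.random.default_rng(0)
chunk = rng.standard_normal((50, 8))   # made-up chunk: 50 timesteps x 8 action dims
noise = rng.standard_normal((50, 8))

noised, target_velocity = make_training_pair(chunk, noise, tau=0.3)
print("loss of a zero prediction:", flow_matching_loss(np.zeros_like(noised), target_velocity))

sampled = euler_sample(make_oracle_velocity_model(chunk), obs=None, noise=noise)
print("max error after 10 Euler steps:", np.max(np.abs(sampled - chunk)))
```

Note that the loop never evaluates the field at τ = 1, so the oracle's division by (1 − τ) stays well defined.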

If flow matching still evades you, this article, which visually animates flow matching with a toy problem, is very helpful.

Real-world Neural Architectures

This summary architecture is synthesized from OpenVLA, NVIDIA’s GR00T, π0.5, and Figure’s Helix 02, which are some of the latest state-of-the-art VLAs.

There are differences, some subtle and some not so subtle, but the core building blocks are very similar across each.

Input Encoding

First we need to encode what the robot sees into a latent representation $e$, which is the foundation for learning flow, diffusion, etc.

Images

Raw images are processed by a pretrained vision encoder. For example, π0 via PaliGemma uses SigLIP, and GR00T uses ViT (Vision Transformers).

These encoders convert our sequence of raw image pixels, sampled at ~5-10 Hz, into a sequence of visual tokens.

Language

The command “fold the socks in the laundry basket” gets tokenized using the LLM’s tokenizer, typically a SentencePiece or BPE tokenizer, producing a sequence of token embeddings. In some cases, like Gemma (π0) or Llama 2 (OpenVLA), these embeddings share a latent space with our visual tokens.

Again, there are architectural differences. The main takeaway here is that images + language are encoded into semantically similar sequences in latent space, so that they can be consumed by a pretrained VLM.

Structuring the observation space with the VLM backbone

The visual tokens and language tokens are concatenated into a single sequence, which is fed through the pretrained language model backbone acting as a multimodal reasoner.
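Shape-wise, the concatenation looks like the NumPy sketch below. Everything here is a made-up stand-in: the sizes, the random "vision encoder" features, the linear projection into the language model's embedding width, and the token ids. Real models differ in how they project and order the tokens.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes, chosen only to keep the example small.
NUM_PATCHES, VISION_DIM = 16, 32   # visual tokens coming out of the vision encoder
VOCAB_SIZE, MODEL_DIM = 100, 64    # language model vocabulary and embedding width

def project_visual_tokens(patch_features, projection):
    """Map vision-encoder features into the language model's embedding space."""
    return patch_features @ projection

def embed_language_tokens(token_ids, embedding_table):
    """Look up one embedding vector per token id."""
    return embedding_table[token_ids]

def build_input_sequence(patch_features, token_ids, projection, embedding_table):
    """Concatenate visual tokens and language tokens into one sequence."""
    visual = project_visual_tokens(patch_features, projection)
    language = embed_language_tokens(token_ids, embedding_table)
    return np.concatenate([visual, language], axis=0)

patch_features = rng.standard_normal((NUM_PATCHES, VISION_DIM))
projection = rng.standard_normal((VISION_DIM, MODEL_DIM))
embedding_table = rng.standard_normal((VOCAB_SIZE, MODEL_DIM))
token_ids = np.array([5, 17, 42, 8])   # stand-in for real tokenizer output

sequence = build_input_sequence(patch_features, token_ids, projection, embedding_table)
print(sequence.shape)   # (20, 64): 16 visual tokens followed by 4 language tokens
```

The backbone then sees one sequence of same-width vectors and does not need to know which rows started life as pixels and which as text.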

VLM backbones often have multimodal outputs, like bounding boxes for object detection, captions on images, language-based subtasks, etc., but the primary purpose of using a pretrained VLM is producing intermediate representations with semantic meaning.

- GR00T N1 extracts embeddings from intermediate layers of the LLM (Eagle)

- OpenVLA fine-tunes the VLM to predict discrete actions directly; the output tokens of the LLM (Llama 2) are then projected to continuous actions

- π0.5 also fine-tunes the VLM (SigLIP + Gemma) to output discrete action tokens, which are then used by an action expert to generate continuous actions

Action Heads

As covered in depth above, the fused representation is decoded into actions via one of three strategies: action tokenization (OpenVLA), diffusion (GR00T N1), or flow matching (π0, π0.5). The decoded actions are typically action chunks, a short horizon of future actions (e.g., the next 16–50 timesteps) predicted simultaneously. The robot executes these actions open-loop or re-plans at each chunk boundary.

Action chunking is critical for smoothness. Without it, per-step action prediction introduces noise because each prediction is independent. By using a coherent trajectory, the model amortizes its planning over a window, producing smoother, more consistent motion.
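The execute-then-re-plan loop is easy to sketch. The code below is a toy, not any model's controller: `toy_chunk_policy` is a hypothetical stand-in for an action head that returns a chunk of equal deltas toward a goal, and the "environment" simply adds each delta to the state. The `execute_steps` parameter (my naming) controls how much of each chunk runs open-loop before the policy is queried again.

```python
import numpy as np

def toy_chunk_policy(obs, goal, horizon):
    """Hypothetical action head: `horizon` equal deltas that would carry obs to goal."""
    step = (goal - obs) / horizon
    return np.tile(step, (horizon, 1))      # shape (horizon, action_dim)

def rollout_with_chunks(obs, goal, total_steps, horizon, execute_steps):
    """Execute the first `execute_steps` actions of each chunk, then re-plan."""
    policy_calls = 0
    t = 0
    while t < total_steps:
        chunk = toy_chunk_policy(obs, goal, horizon)   # one (expensive) model call
        policy_calls += 1
        for action in chunk[:execute_steps]:           # open-loop execution
            if t >= total_steps:
                break
            obs = obs + action                         # toy dynamics: state += delta
            t += 1
    return obs, policy_calls

start = np.zeros(2)
goal = np.array([1.0, 2.0])

# Run a full 16-step chunk open-loop: one model call.
final_full, calls_full = rollout_with_chunks(start, goal, total_steps=16, horizon=16, execute_steps=16)

# Re-plan every 8 steps: two model calls for the same 16 control steps.
final_half, calls_half = rollout_with_chunks(start, goal, total_steps=16, horizon=16, execute_steps=8)

print(calls_full, final_full)
print(calls_half, final_half)
```

The trade-off shows up in the call counts: executing more of each chunk means fewer model calls per control step, at the cost of reacting more slowly to new observations.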

How VLAs are trained

Modern VLAs don’t train from scratch. They inherit billions of parameters’ worth of prior knowledge:

- The vision encoder (e.g., SigLIP, DINOv2) is pretrained on internet-scale image-text datasets (hundreds of millions to billions of image-text pairs). This gives the robot’s “eyes” a rich understanding of objects, spatial relationships, and semantics before it ever sees a robot arm.

- The language model backbones (e.g., Llama 2, Gemma) are pretrained on trillions of tokens of text, giving them broad reasoning, instruction following, and common sense knowledge.

These pretrained components are essential to generalization, allowing the robot to understand what a cup is, what a t-shirt is, etc. without needing to train from scratch.

Multiple Training Phases

VLAs use multiple training phases. Phase 1 typically consists of fine-tuning using diverse data collected from real robot control tasks and/or synthetically generated data, and Phase 2 focuses on embodiment-specific training and action head specialization.

Phase 1: Pretraining

The VLA is trained on large-scale robot demonstration datasets. Some examples of training data:

- OpenVLA was trained on the Open X-Embodiment dataset, a community-aggregated collection of ~1M+ robot trajectories across 22 robot embodiments and 160,000+ tasks.

- π0 was trained on over 10,000 hours of dexterous manipulation data collected across multiple Physical Intelligence robot platforms.

- Proprietary models like GR00T N1 and Helix also leverage large in-house datasets, often supplemented with simulation data.

The goal of pretraining is to learn the foundational mapping from multimodal observations (vision, language, proprioception) to action-relevant representations that transfer across tasks, environments and robot embodiments. This includes:

- Latent representation learning

- Alignment of actions to visual + language tokens

- Object detection and localization

Pretraining typically doesn’t produce a successful robot policy. It provides the policy a general foundation which can be specialized with targeted post-training. This allows the pretraining phase to use robot trajectories that don’t match the target robot platform, or even simulated human interaction data.

Phase 2: Post-training

The goal of post-training is to specialize the general pretrained model into a task- and embodiment-specific policy that can operate in real-world environments.

Pretraining gives us general representations and priors; post-training aligns and refines the policy with precise requirements and objectives, including:

- Embodiment: mapping the predicted action trajectories to the precise joint and actuator commands required by the robot platform

- Task specialization: refining the policy for specific tasks, e.g. the tasks required by a factory robot or a house-cleaning robot

- Refinement: obtaining high-precision continuous trajectories enabling fine motor control and dynamics

Post-training provides the real control policy, which has been trained on data matched for deployment. The end result is a policy that retains the generalization and adds the precision required for the real world [we hope].

Wrapping up

Vision-Language-Action (VLA) models matter because they unify perception, reasoning, and control into a single learned system. Instead of building separate pipelines for vision, planning, and actuation, a VLA directly maps what a robot sees and is told into what it should do.

An aside on possible futures

Embodied intelligence argues that cognition is not separate from action or environment. Perception, reasoning, and action generation are tightly coupled. Intelligence itself may require some kind of physical “vessel” which can reason with its environment.

VLAs can be interpreted as an early realization of this idea. They remove boundaries between perception and control by learning a direct mapping from multimodal observations to actions. In doing so, they shift robotics away from explicit symbolic pipelines and toward systems that operate over shared latent representations grounded in the physical world. Where they take us from here is still mysterious and thought-provoking 🙂

References

- Heess, N. et al. (2017). Emergence of Locomotion Behaviours in Rich Environments. DeepMind.

- Peng, X. B. et al. (2018). DeepMimic: Example-Guided Deep Reinforcement Learning of Physics-Based Character Skills. ACM Transactions on Graphics.

- Octo Model Team (2024). Octo: An Open-Source Generalist Robot Policy.

- Brohan, A. et al. (2023). RT-2: Vision-Language-Action Models Transfer Web Knowledge to Robotic Control. Google DeepMind.

- OpenVLA Project (2024). OpenVLA: Open Vision-Language-Action Models. https://openvla.github.io/

- Team, Physical Intelligence (2024). π0: A Vision-Language-Action Flow Model for General Robot Control.

- Physical Intelligence (2025). π0.5: Improved Vision-Language-Action Flow Models for Robot Control.

- NVIDIA Research (2024). GR00T: Generalist Robot Policies.

- Karl Friston (2010). The Free-Energy Principle: A Unified Brain Theory? Nature Reviews Neuroscience.

- Gallego, J. et al. (2021). A Unifying Perspective on Neural Manifolds and Circuits for Cognition. Current Opinion in Neurobiology.