This may be one of the most interesting discoveries (and topics) in artificial intelligence, setting aside the debate about whether this is intelligence at all.

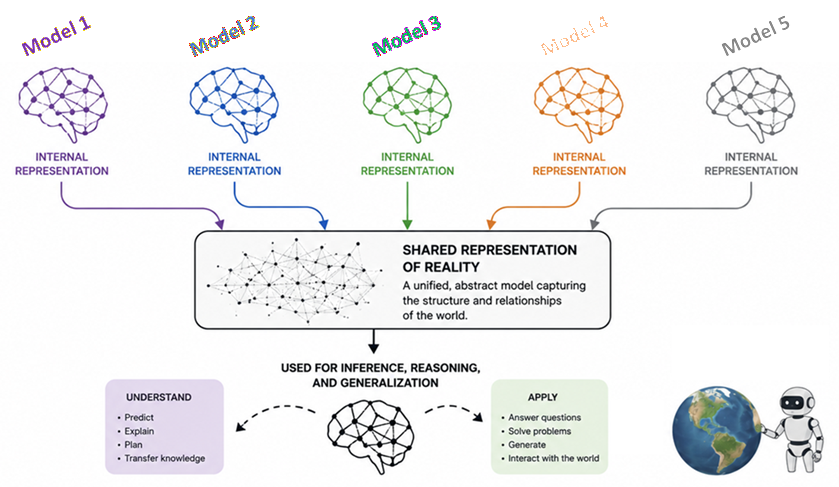

We (at least I!) assume that if you trained one AI model purely on, say, images and another purely on text, they would develop completely different ways of "thinking" (without getting into what exactly that means). Our intuition would be that they use completely different architectures and process completely different data, so they should, by all logic, have completely different "brains", even if both are good at their respective tasks with images and text.

But according to some exciting research from various groups, that is not the case at all!

Back in 2024, researchers at MIT presented solid evidence that major AI models are secretly converging toward the same "thinking core" (or brain, or whatever you want to call it). As these models get bigger and more powerful, they all arrive at very similar conclusions about how the world is structured. Maybe this wasn't obvious with early models, because they were bad at reasoning; but it becomes more and more evident as they get better. And arguably, I would say, the reason is that if they are all correct, then they MUST be developing a very similar representation of reality.

The allegory of the (AI) cave

To understand why this is happening, some researchers looked back 2,400 years to Plato's "Allegory of the Cave", which led to some interesting preprint titles built on ideas such as the "Platonic Representation Hypothesis". Essentially, Plato believed that we humans are like prisoners in a cave, watching shadows flicker on a wall. We think the shadows (our perceptions) are "reality", but they are really just projections of a deeper, hidden, more complex reality that exists outside the cave.

One of the many papers I read to prepare this post (links at the end) argues that AI models are doing exactly the same thing, and that in doing so they converge to the same model of how the world works in order to make sense of the input shadows.

The billions of lines of text, the trillions of pixels in images, the endless audio files used to train our modern AI models are just their perception ("shadows") of our world. These powerful models look at these different shadows of human data and, completely independently, discover the same underlying structure of the universe in order to make sense of it.
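To make the allegory a bit more concrete, here is a toy sketch of my own (not code from the papers). It assumes a hidden "reality" of 60 objects living in a tiny 3-dimensional latent space, and two "modalities" that each see that reality only through a different random linear projection, standing in for an image model and a text model. All names (`random_projection`, `geometry_agreement`, `image_encoder`, `text_encoder`) are hypothetical. If both shadows come from the same reality, the pattern of pairwise distances between objects should agree strongly across the two views, while an unrelated point cloud should not.

```python
import math
import random


def random_projection(in_dim, out_dim, rng):
    """Gaussian random matrix standing in for a hypothetical modality 'encoder'."""
    return [[rng.gauss(0.0, 1.0) for _ in range(in_dim)] for _ in range(out_dim)]


def project(matrix, vec):
    """Apply a projection matrix to one latent vector, producing its 'shadow'."""
    return [sum(w * v for w, v in zip(row, vec)) for row in matrix]


def pairwise_distances(points):
    """Euclidean distance for every unordered pair (i, j) with i < j, in a fixed order."""
    out = []
    for i in range(len(points)):
        for j in range(i + 1, len(points)):
            out.append(math.dist(points[i], points[j]))
    return out


def pearson(xs, ys):
    """Plain Pearson correlation between two equal-length lists of numbers."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


def geometry_agreement(points_a, points_b):
    """Correlate the distance structure two views assign to the same items."""
    return pearson(pairwise_distances(points_a), pairwise_distances(points_b))


rng = random.Random(0)
LATENT_DIM, N_ITEMS = 3, 60

# Hypothetical hidden 'reality': N_ITEMS objects in a small latent space.
reality = [[rng.gauss(0.0, 1.0) for _ in range(LATENT_DIM)] for _ in range(N_ITEMS)]

# Two different 'shadows' of that same reality (think 'images' and 'text').
image_encoder = random_projection(LATENT_DIM, 64, rng)
text_encoder = random_projection(LATENT_DIM, 32, rng)
image_view = [project(image_encoder, z) for z in reality]
text_view = [project(text_encoder, z) for z in reality]

# Control: a point cloud that has nothing to do with 'reality'.
unrelated_view = [[rng.gauss(0.0, 1.0) for _ in range(32)] for _ in range(N_ITEMS)]

aligned = geometry_agreement(image_view, text_view)
control = geometry_agreement(image_view, unrelated_view)
print(f"same reality: {aligned:.2f}, unrelated: {control:.2f}")
```

Real models are of course not random linear projections, and real data is far richer than three numbers per object; the sketch only shows why two views of one underlying structure can end up with closely matching geometry even though their coordinates look nothing alike.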

Different eyes, same vision

Here is the mind-blowing part, to me at least: a model that only "sees" images and a model that only "reads" text end up measuring the distance between concepts in very much the same way (provided they are both good enough).

If you ask a vision model to map the "distance" between a picture of a "dog" and one of a "wolf", and then ask a language model to map the distance between the word "dog" and the word "wolf", the mathematical structures they build become more and more similar as the models get better at telling the two animals apart.

In other words, it seems that as these models scale up and get better, they stop being a mess of random connections. Research shows that they tend to align, and in particular that as vision models and language models get larger, the way they represent data becomes more and more alike. Amazing, don't you think?
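How do researchers actually compare two such "distance maps"? As far as I understand it, the Platonic Representation Hypothesis paper uses a mutual nearest-neighbor metric: for each item, take its k nearest neighbors in one model's representation and in the other's, and measure how much the two neighbor sets overlap. Below is a minimal sketch of that idea under my own simplifying assumptions: plain Euclidean distance instead of the paper's kernels, tiny hand-made vectors instead of real model embeddings, and hypothetical function names (`knn_indices`, `mutual_knn_alignment`). It is not the authors' implementation.

```python
import math


def knn_indices(points, i, k):
    """Indices of the k nearest neighbors of item i (excluding i itself)."""
    others = [j for j in range(len(points)) if j != i]
    others.sort(key=lambda j: math.dist(points[i], points[j]))
    return set(others[:k])


def mutual_knn_alignment(points_a, points_b, k):
    """Average fraction of k-nearest neighbors that both representations share."""
    assert len(points_a) == len(points_b), "both models must embed the same items"
    n = len(points_a)
    overlap = 0.0
    for i in range(n):
        shared = knn_indices(points_a, i, k) & knn_indices(points_b, i, k)
        overlap += len(shared) / k
    return overlap / n


# Hypothetical toy embeddings for the same four concepts, in this order.
items = ["dog", "wolf", "car", "truck"]
vision = [(0, 0), (1, 0), (10, 10), (11, 10)]               # a 2-D 'vision model'
language = [(5, 5, 5), (5, 6, 5), (-3, 0, 2), (-3, 0, 3)]   # a 3-D 'language model'
scrambled = [(0, 0), (10, 10), (0, 1), (10, 11)]            # dog sits next to car here

print(mutual_knn_alignment(vision, language, k=1))   # → 1.0
print(mutual_knn_alignment(vision, scrambled, k=1))  # → 0.0
```

The coordinates of the "vision" and "language" toys have nothing in common (they do not even have the same number of dimensions), yet both put dog next to wolf and car next to truck, so they score a perfect 1.0; the scrambled model, which pairs dog with car, scores 0.0. With real models one would compare many paired items (for example images and their captions) with a larger k, and the interesting finding is how this kind of score grows as models get more capable.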

Why scale changes everything

According to the available research, all this seems to be fairly universal: it happens with modern models from all companies, trained on different sources, as long as the model itself is capable enough. In fact, as a model gets larger, whatever it is, it undergoes a "phase change" in its internal thinking. Research seems to indicate that these models stop merely memorizing their specific tasks and instead start to build up a statistical model of reality itself.

And apparently, this happens because of some "selective pressures" acting on the models:

- Task generality: If you want an AI to be good at everything, there is only one "best" way to represent the world such that it doesn't overfit yet can still make predictions. Since there is only ONE best way, they must all get to it!

- Capacity: Large models have the "room" to find the most elegant, simple solution. But having ample room, in terms of architecture or number of parameters, must be balanced against the need to avoid overfitting.

- Simplicity bias: Deep networks actually prefer simple solutions over complex ones, again especially if overfitting is avoided.

One important point is that different AI models may adapt to these selective pressures at different speeds (or with different levels of efficiency); but they are all heading toward the same final state of maximal understanding, reached through the same internal representation of how the world works.

The latest research on "knowledge mechanisms"

If I were 25 years younger and had to choose a career now, I would probably choose something like computer science mixed with psychology, because to me this is where the most exciting part of the AI world lies. Read on to see why!

A recent survey on "knowledge mechanisms" in LLMs adds another layer to everything described above. It suggests that knowledge in these models isn't just scattered randomly; rather, it evolves from simple memorization to complex "comprehension and application". There would then be some kind of "dynamic intelligence" at play. The trend for knowledge and capability to converge into the same representation spaces seems to hold across the whole family of artificial neural models, regardless of data, modality, or objective. Even knowledge that we humans haven't quite grasped yet (or that only domain experts grasp, say, how to structure a piece of music for a composer, or why and how photons become entangled for a physicist) is being mapped by these models as they find patterns (in music or quantum physics, to follow the example) that our biological brains can't process as quickly.

Why this is so cool, and an analogy with how we humans learn

This is one of those rare moments where math, computer science, and philosophy collide. It seems to me that the models are building a unified notion of reality in the only way they can ingest it: as words, images, and sounds. Not that different from how babies learn, although perhaps in a different order, with additional inputs including physical ones (touch, bumps, etc.), and of course coupled to physical outputs (crying, laughing, moving limbs, walking, …).

Inside our brains, after all, multimodality is also fully integrated and works under the same global understanding (which, yes, can be tricked with illusions, but that's a topic for another day!). In other words, our brains also map everything to a single reality, which is our personal interpretation of how the world works. If I take a photo of an apple, write the word "apple", or record the sound of someone biting an apple, these are three different "shadows", but they are all projected from the same actual, physical reality of the apple. Even if the apple is one color or another, or even artificially painted, or if I write the word in other languages, "manzana" or "pomme" will, if I speak Spanish and French respectively, cast more or less the same shadow.

World representation of reality + physical inputs + physical outputs –> robots

Everything above, including the analogy with babies and humans, can then be extrapolated further and further. Or at least I like to think so.

Add physical input and output capabilities to a well-working AI model that holds a world representation of reality, and we have a robot that can learn to interpret and interact with the world. Hard-wire into it an "instinct" to survive, and well… who knows where this could go. But we aren't too different from that construction, don't you think?

I'll leave it here so as not to enter that debate, but please discuss it with me!

Let's close with what the research increasingly shows: we are no longer just building tools to help us write emails, summarize things, write code, or edit and generate photos. We are building digital mirrors of the universe. And we are watching, in real time, as silicon and code independently uncover the inner workings of the world we live in.

References

To write this post I went through these two super-interesting works in detail: a study released as a preprint in 2024, and a more recent survey on knowledge mechanisms in LLMs:

The Platonic Representation Hypothesis: https://arxiv.org/html/2405.07987v5

Knowledge Mechanisms in Large Language Models: A Survey and Perspective: https://aclanthology.org/2024.findings-emnlp.416/