Faster, smarter, more responsive AI applications: that's what your users expect. But when large language models (LLMs) are slow to respond, user experience suffers. Every millisecond counts.

With Cerebras' high-speed inference endpoints, you can reduce latency, speed up model responses, and maintain quality at scale with models like Llama 3.1-70B. By following a few simple steps, you'll be able to customize and deploy your own LLMs, giving you the control to optimize for both speed and quality.

In this blog, we'll walk you through how to:

- Set up Llama 3.1-70B in the DataRobot LLM Playground.

- Generate and apply an API key to leverage Cerebras for inference.

- Customize and deploy smarter, faster applications.

By the end, you'll be ready to deploy LLMs that deliver speed, precision, and real-time responsiveness.

Prototype, customize, and test LLMs in one place

Prototyping and testing generative AI models often requires a patchwork of disconnected tools. But with a unified, integrated environment for LLMs, retrieval techniques, and evaluation metrics, you can move from idea to working prototype faster and with fewer roadblocks.

This streamlined process means you can focus on building effective, high-impact AI applications without the hassle of piecing together tools from different platforms.

Let's walk through a use case to see how you can leverage these capabilities to develop smarter, faster AI applications.

Use case: Speeding up LLM inference without sacrificing quality

Low latency is essential for building fast, responsive AI applications. But accelerated responses don't have to come at the cost of quality.

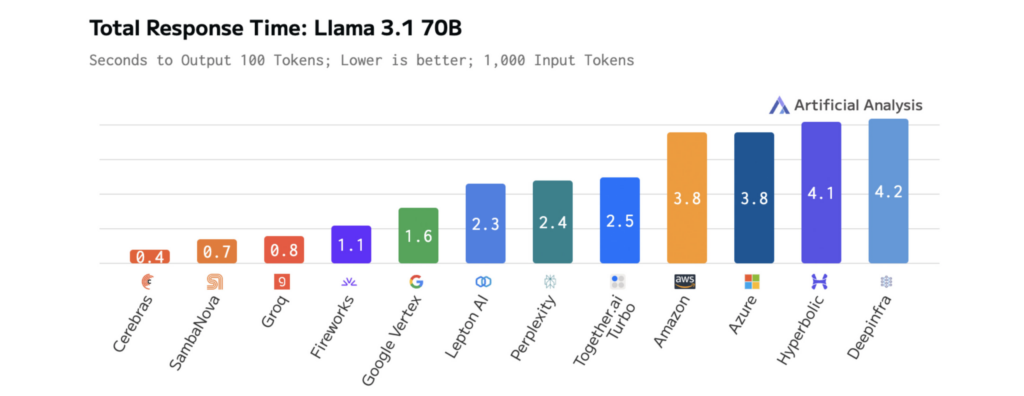

The speed of Cerebras Inference outperforms other platforms, enabling developers to build applications that feel smooth, responsive, and intelligent.

When combined with an intuitive development experience, you can:

- Reduce LLM latency for faster user interactions.

- Experiment more efficiently with new models and workflows.

- Deploy applications that respond instantly to user actions.

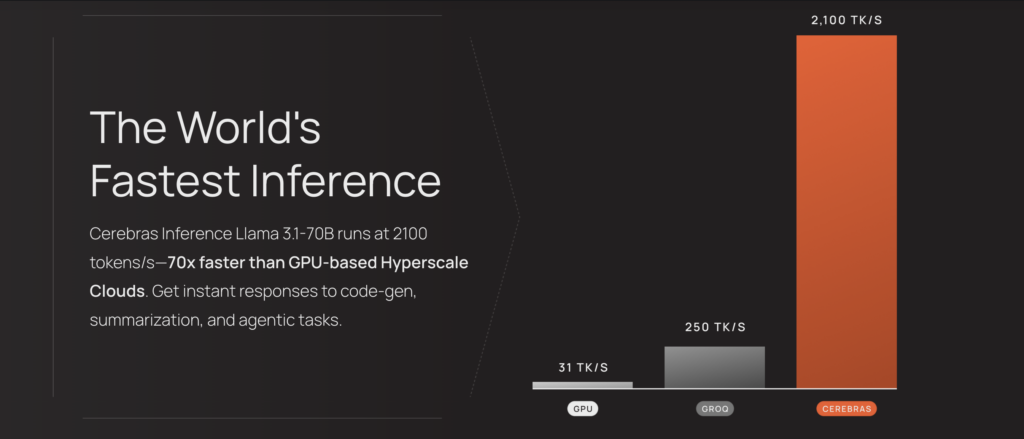

The accompanying diagrams show Cerebras' performance on Llama 3.1-70B, illustrating faster response times and lower latency than other platforms. This enables rapid iteration during development and real-time performance in production.

How model size impacts LLM speed and performance

As LLMs grow larger and more complex, their outputs become more relevant and comprehensive, but this comes at a cost: increased latency. Cerebras tackles this challenge with optimized computations, streamlined data transfer, and intelligent decoding designed for speed.

These speed improvements are already transforming AI applications in industries like pharmaceuticals and voice AI. For example:

- GlaxoSmithKline (GSK) uses Cerebras Inference to accelerate drug discovery, driving higher productivity.

- LiveKit has boosted the performance of ChatGPT's voice mode pipeline, achieving faster response times than traditional inference solutions.

The results are measurable. On Llama 3.1-70B, Cerebras delivers 70x faster inference than vanilla GPUs, enabling smoother, real-time interactions and faster experimentation cycles.
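To check how numbers like these translate to your own workload, it helps to time requests yourself. The following is a minimal sketch and not part of any Cerebras or DataRobot tooling: `measure_latency` and `tokens_per_second` are hypothetical helper names, and `call` stands in for whatever function sends your prompt to an endpoint.

```python
import statistics
import time
from typing import Callable, Dict, List


def measure_latency(call: Callable[[], object], runs: int = 5) -> Dict[str, float]:
    """Run `call` several times and summarize wall-clock latency in seconds."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    samples: List[float] = []
    for _ in range(runs):
        start = time.perf_counter()
        call()  # e.g. a function that sends one prompt and waits for the reply
        samples.append(time.perf_counter() - start)
    return {
        "min": min(samples),
        "median": statistics.median(samples),
        "max": max(samples),
    }


def tokens_per_second(completion_tokens: int, elapsed_seconds: float) -> float:
    """Convert a token count and an elapsed time into a throughput figure."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return completion_tokens / elapsed_seconds
```

Running the same prompt through two endpoints and comparing the median values gives a rough, workload-specific view of the latency difference. The median is used here because a single slow request can distort the mean.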

This performance is powered by Cerebras' third-generation Wafer-Scale Engine (WSE-3), a custom processor designed to optimize the tensor-based, sparse linear algebra operations that drive LLM inference.

By prioritizing performance, efficiency, and flexibility, the WSE-3 ensures faster, more consistent model performance.

Cerebras Inference's speed reduces the latency of AI applications powered by their models, enabling deeper reasoning and more responsive user experiences. Accessing these optimized models is simple: they're hosted on Cerebras and accessible via a single endpoint, so you can start leveraging them with minimal setup.
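As a rough illustration of what calling a single hosted endpoint can look like, here is a minimal sketch using only the Python standard library. It assumes an OpenAI-style chat completions API; the URL, the model id `llama3.1-70b`, the `CEREBRAS_API_KEY` environment variable, and the helper names are all assumptions for illustration, so check the Cerebras documentation for the current values.

```python
import json
import os
import urllib.request
from typing import Any, Dict

# Assumed values, not taken from this article: verify against the Cerebras docs.
CEREBRAS_CHAT_URL = "https://api.cerebras.ai/v1/chat/completions"
MODEL_ID = "llama3.1-70b"


def build_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build a POST request carrying a single user message."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        CEREBRAS_CHAT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
            # Explicit user agent; some gateways may reject the urllib default.
            "User-Agent": "llm-endpoint-sketch/0.1",
        },
        method="POST",
    )


def extract_text(response_body: Dict[str, Any]) -> str:
    """Pull the reply text out of an OpenAI-style response body."""
    return response_body["choices"][0]["message"]["content"]


def chat(prompt: str, timeout: float = 30.0) -> str:
    """Send one prompt and return the reply (performs a network call)."""
    api_key = os.environ["CEREBRAS_API_KEY"]  # hypothetical variable name
    request = build_request(prompt, api_key)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return extract_text(json.loads(response.read().decode("utf-8")))
```

With a valid key exported in the environment, `chat("Summarize wafer-scale inference in one sentence.")` would return the model's reply as a string. Request building and response parsing are kept separate from the network call so each piece can be checked on its own.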

Step-by-step: How to customize and deploy Llama 3.1-70B for low-latency AI

Integrating LLMs like Llama 3.1-70B from Cerebras into DataRobot allows you to customize, test, and deploy AI models in just a few steps. This process supports faster development, interactive testing, and greater control over LLM customization.

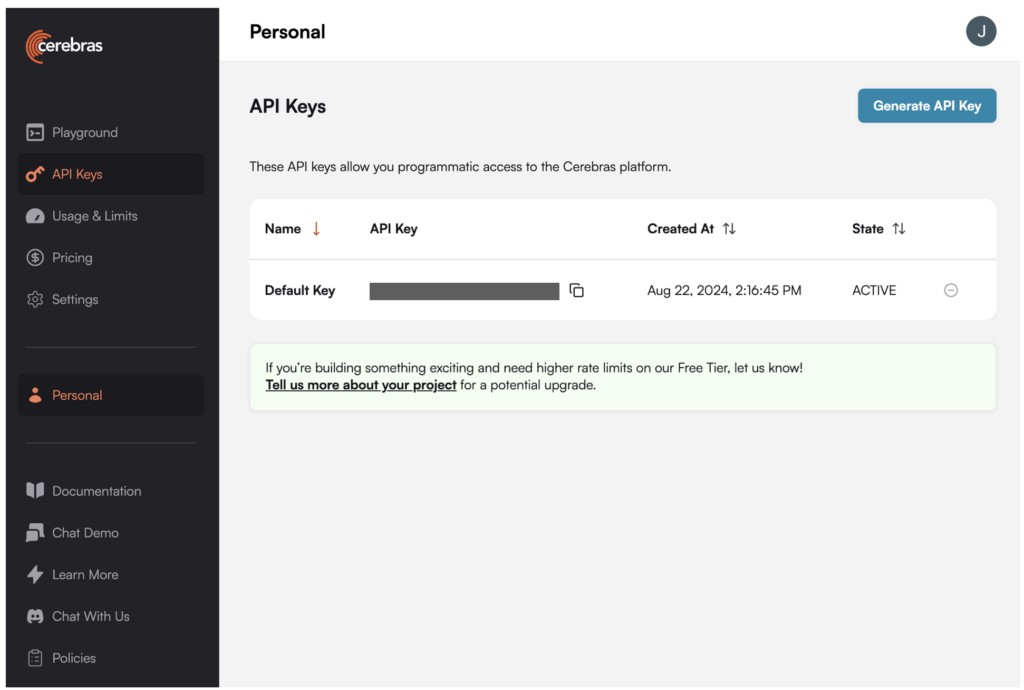

1. Generate an API key for Llama 3.1-70B in the Cerebras platform.

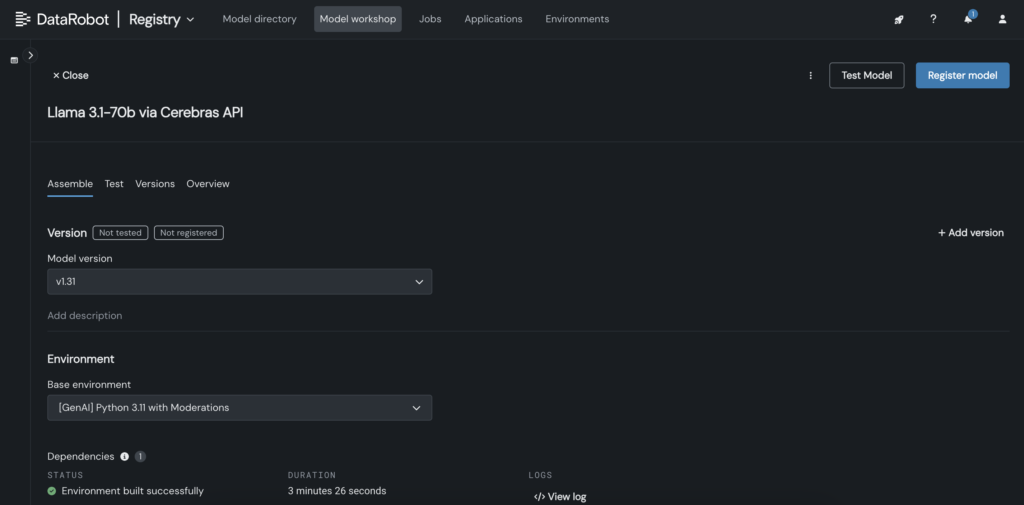

2. In DataRobot, create a custom model in the Model Workshop that calls out to the Cerebras endpoint where Llama 3.1-70B is hosted.

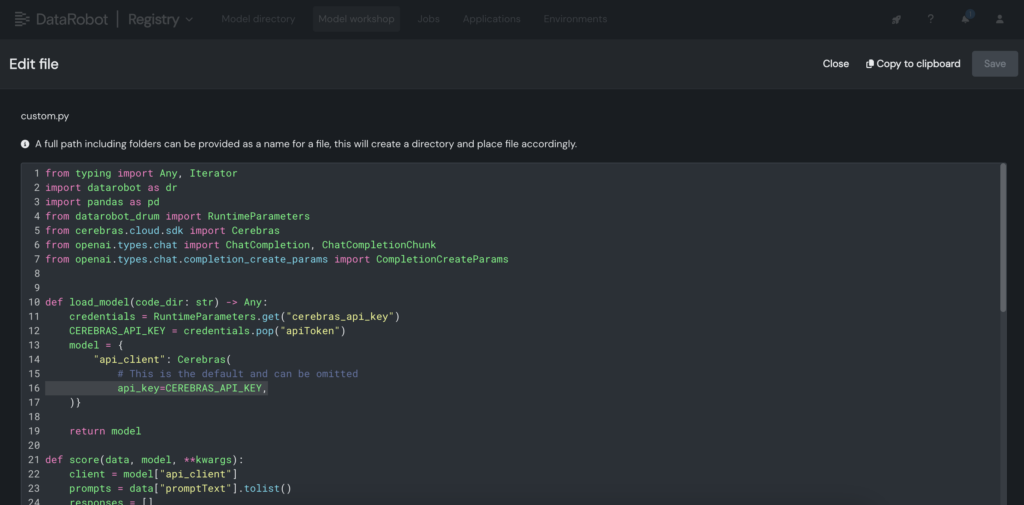

3. Within the custom model, place the Cerebras API key within the custom.py file.

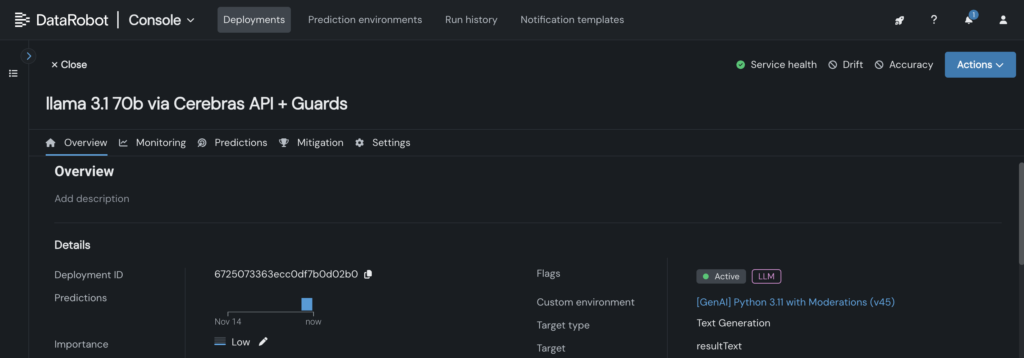

4. Deploy the custom model to an endpoint in the DataRobot Console, enabling LLM blueprints to leverage it for inference.

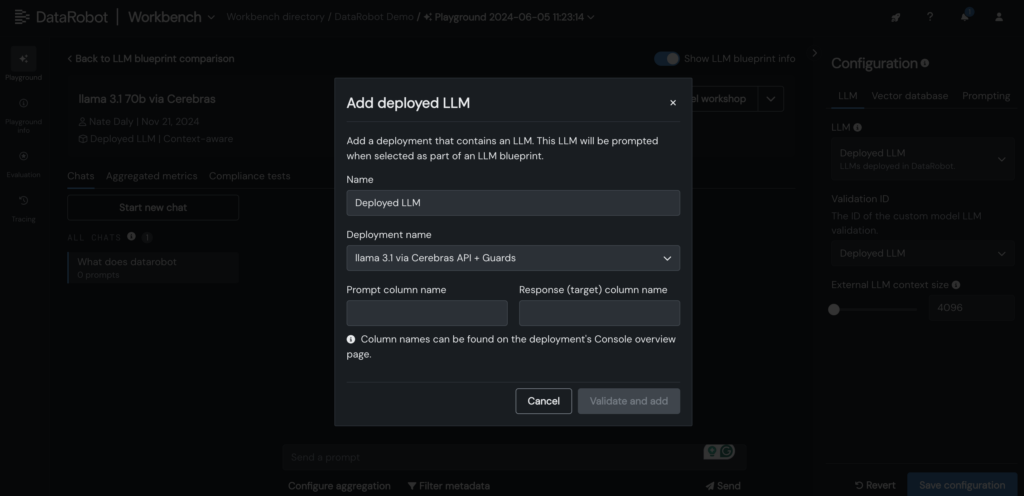

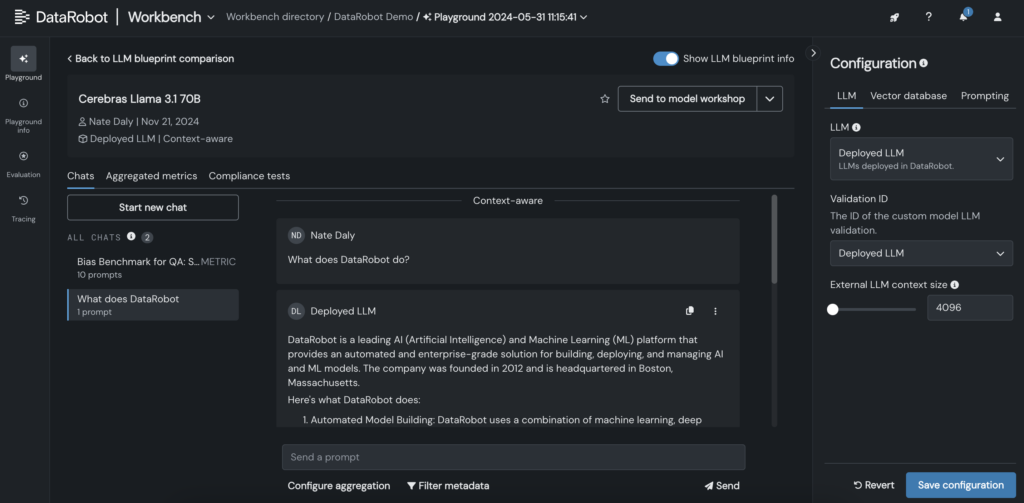

5. Add your deployed Cerebras LLM to the LLM blueprint in the DataRobot LLM Playground to start chatting with Llama 3.1-70B.

6. Once the LLM is added to the blueprint, test responses by adjusting prompting and retrieval parameters, and compare outputs with other LLMs directly in the DataRobot GUI.
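For steps 2 and 3 above, a custom.py file might look roughly like the sketch below. This is an illustration under stated assumptions, not the exact file used in this walkthrough: it assumes DataRobot's `load_model` and `score` custom model hooks, the column names `promptText` and `resultText`, an OpenAI-style Cerebras endpoint, and the model id `llama3.1-70b`. It also reads the key from a hypothetical `CEREBRAS_API_KEY` environment variable instead of writing it into the file, which is a substitution on our part.

```python
import json
import os
import urllib.request
from typing import Callable, List

# Assumed values for illustration: verify each against the relevant docs.
CEREBRAS_CHAT_URL = "https://api.cerebras.ai/v1/chat/completions"
MODEL_ID = "llama3.1-70b"
PROMPT_COLUMN = "promptText"   # assumed input column name
TARGET_COLUMN = "resultText"   # assumed output column name


def make_client(api_key: str, timeout: float = 30.0) -> Callable[[str], str]:
    """Return a function that sends one prompt and returns the reply text."""

    def complete(prompt: str) -> str:
        body = json.dumps(
            {"model": MODEL_ID, "messages": [{"role": "user", "content": prompt}]}
        ).encode("utf-8")
        request = urllib.request.Request(
            CEREBRAS_CHAT_URL,
            data=body,
            headers={
                "Authorization": f"Bearer {api_key}",
                "Content-Type": "application/json",
            },
            method="POST",
        )
        with urllib.request.urlopen(request, timeout=timeout) as response:
            parsed = json.loads(response.read().decode("utf-8"))
        return parsed["choices"][0]["message"]["content"]

    return complete


def complete_prompts(prompts: List[str], client: Callable[[str], str]) -> List[str]:
    """Run every prompt through the client, preserving order."""
    return [client(str(prompt)) for prompt in prompts]


def load_model(code_dir: str) -> Callable[[str], str]:
    """Hook called once at startup: build the client that score() will reuse."""
    return make_client(os.environ["CEREBRAS_API_KEY"])


def score(data, model, **kwargs):
    """Hook called per request: map each prompt row to a completion row."""
    import pandas as pd  # imported here so the helpers above work without pandas

    completions = complete_prompts(data[PROMPT_COLUMN].tolist(), model)
    return pd.DataFrame({TARGET_COLUMN: completions})
```

Because `complete_prompts` accepts any callable, you can exercise the scoring logic locally with a stand-in such as `lambda p: p.upper()` before deploying the model and spending real inference calls.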

Expand the limits of LLM inference for your AI applications

Deploying LLMs like Llama 3.1-70B with low latency and real-time responsiveness is no small task. But with the right tools and workflows, you can achieve both.

By integrating LLMs into DataRobot's LLM Playground and leveraging Cerebras' optimized inference, you can simplify customization, speed up testing, and reduce complexity, all while maintaining the performance your users expect.

As LLMs grow larger and more powerful, having a streamlined process for testing, customization, and integration will be essential for teams looking to stay ahead.

Explore it yourself. Access Cerebras Inference, generate your API key, and start building AI applications in DataRobot.

About the authors

Kumar Venkateswar is VP of Product, Platform and Ecosystem at DataRobot. He leads product management for DataRobot's foundational services and ecosystem partnerships, bridging the gaps between efficient infrastructure and integrations that maximize AI outcomes. Prior to DataRobot, Kumar worked at Amazon and Microsoft, including leading product management teams for Amazon SageMaker and Amazon Q Business.

Nathaniel Daly is a Senior Product Manager at DataRobot focusing on AutoML and time series products. He's focused on bringing advances in data science to users so that they can leverage this value to solve real-world business problems. He holds a degree in Mathematics from the University of California, Berkeley.