Many cultures share the idea of a figurative third eye that enhances perception. For autonomous vehicles, the idea is quite literal: radar, working alongside cameras and LiDAR. These systems are typically onboard the vehicle, actively gathering data on its surroundings as it moves. Now, researchers at Rice University, led by Kun Woo Cho, have developed an off-vehicle radar system, EyeDAR, which they say can significantly improve vehicles' sensing accuracy by communicating critical traffic data to onboard systems.

Autonomous vehicles “see” their surroundings with a combination of three complementary systems: radar, LiDAR, and cameras. Cameras, the optical sensors we all know and love, enable visual perception by identifying pedestrians, vehicles, and traffic control devices. Next is LiDAR (Light Detection and Ranging), an active sensing technology that emits laser pulses and measures the time they take to return to generate a high-resolution 3D point cloud. LiDAR fills key gaps in spatial perception left by radar and vision systems. It also provides accurate depth perception, but like cameras, it is susceptible to weather conditions.

Finally, there is radar. Similar to bats' echolocation, this radio-frequency technology works by emitting radio waves in predetermined directions. Objects within the radar's path reflect some of the waves back to the radar emitter. Onboard processors then analyze the characteristics of the returning waves to determine the distance, position, and other information about the reflecting objects. Unlike cameras and LiDAR, radar is largely unaffected by lighting and weather conditions.
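Both LiDAR and radar turn a measured round-trip delay into a distance. Because the wave travels out to the object and back, the one-way distance is half the total path: d = c·t/2, where c is the speed of light. The sketch below shows only that relationship; automotive radars usually use frequency-modulated continuous waves rather than simple pulses and recover the delay from a frequency difference, but the geometry is the same. The function name is illustrative, not taken from any real radar API.

```python
# Speed of light in a vacuum, in metres per second (close enough for air in a sketch).
SPEED_OF_LIGHT = 299_792_458.0


def range_from_round_trip(delay_s: float) -> float:
    """Distance to a reflector from the round-trip echo delay.

    The wave travels to the object and back, so the one-way distance
    is half the total path length: d = c * t / 2.
    """
    if delay_s < 0:
        raise ValueError("delay must be non-negative")
    return SPEED_OF_LIGHT * delay_s / 2.0


if __name__ == "__main__":
    # An echo that arrives 200 nanoseconds after transmission.
    print(f"{range_from_round_trip(200e-9):.2f} m")  # prints 29.98 m
```

A 200 ns echo therefore corresponds to an object about 30 m away, which is why radar and LiDAR electronics must time returns with nanosecond precision.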

Jared Jones/Rice University

However, one key limitation of radar lies in its very mode of operation. The technology relies on reflected waves to obtain data, but these reflections are not always efficient. In practice, objects in a radar's path often return only a fraction of the transmitted signal, with most of the waves scattered away instead. For autonomous vehicles, this means the onboard radar frequently receives incomplete information.
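The textbook monostatic radar equation, a general result rather than anything specific to EyeDAR, makes the weakness of these returns concrete. The received power $P_r$ depends on the transmit power $P_t$, the transmit and receive antenna gains $G_t$ and $G_r$, the wavelength $\lambda$, the target's radar cross-section $\sigma$ (small when a surface scatters most energy away from the receiver), and the range $R$:

$$
P_r = \frac{P_t \, G_t \, G_r \, \lambda^2 \, \sigma}{(4\pi)^3 \, R^4}
$$

Because power falls with the fourth power of range, doubling the distance to a target cuts the echo by a factor of $2^4 = 16$, on top of whatever energy the target's shape deflects elsewhere.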

As a result, traffic elements such as road users around corners, pedestrians behind large objects, and other obscured hazards can easily go unnoticed. Even when a radar system detects the presence of something, the weak return signal can make precise identification difficult. For example, a stop sign might just as well be a tall, slender man in a big red hat!

As autonomous vehicles, from freight trucks to delivery robots, become increasingly commonplace, safety demands are rising as well. Sensor limitations that were once accepted are now viewed as serious vulnerabilities.

To address these detection lapses, the researchers want to use EyeDAR to extend sensing beyond in-vehicle systems to road infrastructure. The device is a low-power millimeter-wave radar sensor that could improve vehicle sensing accuracy by supplying in-vehicle systems with critical data on surrounding traffic.

Jared Jones/Rice University

Mounted on roadside infrastructure such as traffic lights, road signs, and billboards, EyeDAR enhances sensing by capturing much of the reflected signal that would otherwise scatter away, giving autonomous vehicles a far more complete picture of surrounding traffic.

“It’s like adding another set of eyes for automotive radar systems,” says Cho.

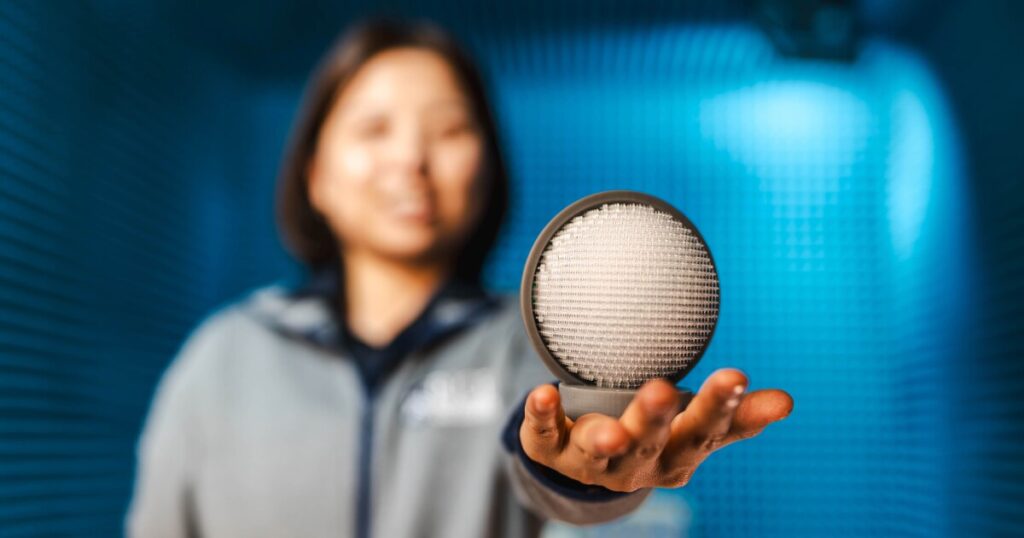

The device itself is an orange-sized sensor made of two main components that function much like the lens and retina of an eye. The first is a Luneburg metamaterial lens, 3D-printed from resin. The lens focuses signals arriving from different directions onto corresponding focal points, which brings us to the second component: an antenna array lined up behind the lens. The array receives the focused signals, detects their spatial information, and then relays this information back to the automotive radar.
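A classic Luneburg lens is a sphere whose refractive index falls smoothly from √2 at the center to 1 at the surface, following n(r) = √(2 − (r/R)²); this grading is what lets it bring waves from any direction to a focus on the opposite side. The article does not give EyeDAR's actual profile, so the sketch below uses the ideal formula and approximates each concentric shell with a resin/air mix. The linear mixing rule, the resin permittivity of 2.8, and the 40 mm radius are hypothetical placeholders, not measured values for EyeDAR.

```python
import math


def luneburg_index(r: float, radius: float) -> float:
    """Ideal Luneburg profile n(r) = sqrt(2 - (r/R)^2) for 0 <= r <= R."""
    if not 0.0 <= r <= radius:
        raise ValueError("r must lie inside the lens")
    return math.sqrt(2.0 - (r / radius) ** 2)


def fill_fraction(n: float, eps_host: float = 2.8) -> float:
    """Resin volume fraction needed for a unit cell to reach index n.

    Assumes a non-magnetic material (eps_r = n**2) and a crude linear
    mixing rule between air (eps = 1) and resin (eps = eps_host).
    eps_host = 2.8 is a hypothetical placeholder value.
    """
    eps_target = n ** 2
    return (eps_target - 1.0) / (eps_host - 1.0)


def shell_profile(radius: float, shells: int) -> list[tuple[float, float, float]]:
    """Sample the lens at the midpoint of each concentric shell.

    Returns (r_mid, n, resin_fraction) for each shell, centre to edge.
    """
    width = radius / shells
    rows = []
    for i in range(shells):
        r_mid = (i + 0.5) * width
        n = luneburg_index(r_mid, radius)
        rows.append((r_mid, n, fill_fraction(n)))
    return rows


if __name__ == "__main__":
    # Hypothetical 40 mm radius, roughly orange-sized.
    for r, n, f in shell_profile(radius=40.0, shells=5):
        print(f"r={r:5.1f} mm  n={n:.3f}  resin fraction={f:.2f}")
```

Each shell needs a slightly lower resin fraction than the one inside it; a printed metamaterial lens realizes that gradient with many small cells of varying geometry rather than with smooth shells.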

Two standout features make EyeDAR attractive: its compact design and its metamaterial-based signal processing. Whereas traditional radar systems use large antenna arrays and complex computational algorithms to interpret data, in EyeDAR the physical design itself performs the processing.

“Our lens consists of over 8,000 uniquely shaped, extremely small elements with a varying refractive index,” Cho says.

The placement of each element allows the lens to resolve the exact direction and intensity of incoming signals purely through its physical shape. This engineered structure is what makes it a metamaterial. Because these thousands of tiny structures are designed to bend and focus waves as they pass through, the material itself acts as a hardwired analog processor. It essentially “pre-calculates” spatial data at the speed of light, eliminating the heavy, power-hungry digital computing typically required to make sense of a chaotic traffic environment.
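The idea that the lens “pre-calculates” direction can be illustrated with a toy model. If each incoming direction is focused onto a different spot behind the lens, and antennas sit at known angles along that focal surface, then the direction of a target is simply the look angle of whichever antenna receives the most power, with no digital beamforming transform needed. The sketch below assumes a hypothetical 13-element arc spanning 120° and models each focal spot as a Gaussian; neither is EyeDAR's actual geometry or readout.

```python
import math


def element_angles(n_elements: int, span_deg: float) -> list[float]:
    """Look directions (degrees) of antennas spread evenly behind the lens.

    Hypothetical layout: elements cover -span/2 .. +span/2.
    """
    if n_elements == 1:
        return [0.0]
    step = span_deg / (n_elements - 1)
    return [-span_deg / 2 + i * step for i in range(n_elements)]


def element_powers(source_deg: float, looks: list[float], beamwidth_deg: float) -> list[float]:
    """Relative power seen by each element for one far-field source.

    Models each focal spot as a Gaussian around the element's look
    direction; a simplification, not a measured EyeDAR pattern.
    """
    sigma = beamwidth_deg / 2.355  # convert full width at half maximum to std dev
    return [math.exp(-0.5 * ((source_deg - a) / sigma) ** 2) for a in looks]


def estimate_direction(powers: list[float], looks: list[float]) -> float:
    """Direction estimate = look angle of the brightest element."""
    best = max(range(len(powers)), key=powers.__getitem__)
    return looks[best]


if __name__ == "__main__":
    looks = element_angles(n_elements=13, span_deg=120.0)  # 10-degree spacing
    powers = element_powers(source_deg=23.0, looks=looks, beamwidth_deg=10.0)
    print(estimate_direction(powers, looks))  # prints 20.0
```

In this toy layout the resolution is limited to the 10° element spacing; a real system could interpolate between neighbouring elements, but the core step remains a simple comparison of received powers rather than a heavy digital computation.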

Testing showed that EyeDAR can resolve target directions more than 200 times faster than conventional radar designs, a significant advance for analog processing over digital.

Another distinctive feature of the device is that it does not generate new waves. Instead, it gathers and processes waves scattered from target objects and reflects the cleaned-up signals back to the in-vehicle radar system.

Cho believes that EyeDAR's combination of a compact, low-cost, simple architecture with ultrafast analog processing makes widespread deployment across roads and highways feasible.

Jared Jones/Rice University

However, Emeka Moronu, a manufacturing industry expert, has doubts about its widespread adoption. “Even if the math is perfect, the manufacturing precision required is staggering,” says Moronu. “We’re asking a 3D printer to perfectly execute thousands of microscopic geometries that must remain flawless while baked in the sun or frozen in a storm. Scaling that level of metamaterial complexity from a controlled lab to a rugged, mass-produced roadside product is the ultimate hurdle.”

Regardless, EyeDAR has real potential. A network of these devices would let vehicles see far beyond the range of onboard radar systems. The technology could also be adapted for drones, robotics, and surveillance.

Supply: Rice University