TLDR

- Agentic AI systems in healthcare typically output binary decisions such as disease or no disease, which by themselves can’t produce a meaningful AUC.

- AUC is still the usual choice for evaluating risk and detection models in medicine, and it requires continuous scores that let us rank patients by risk.

- This post describes several practical methods for converting agentic outputs into continuous scores so that AUC-based comparisons with traditional models remain valid and fair.

Agent and Area Under the Curve Disconnect

Agentic AI systems are becoming increasingly common as they lower the barrier to entry for AI solutions. They accomplish this by leveraging foundation models, so that resources don’t always have to be spent on training a custom model from the ground up or on multiple rounds of fine-tuning.

I noticed that roughly 20–25% of the papers at NeurIPS 2025 were focused on agentic solutions. Agents for medical applications are rising in parallel and gaining popularity. These systems include LLM-driven pipelines, retrieval-augmented agents, and multi-step decision frameworks. They can synthesize heterogeneous data, reason step by step, and produce contextual recommendations or decisions.

Most of these systems are built to answer questions like “Does this patient have the disease” or “Should we order this test” instead of “What is the probability that this patient has the disease.” In other words, they tend to produce hard decisions and explanations, not calibrated probabilities.

In contrast, traditional medical risk and detection models are usually evaluated with the area under the receiver operating characteristic curve, or AUC. AUC is deeply embedded in clinical prediction work and is the default metric for comparing models in many imaging, risk, and screening studies.

This creates a gap. If our new models are agentic and decision focused, but our evaluation standards are probability based, we need methods that connect the two. The rest of this post focuses on what AUC actually needs, why binary outputs are not enough, and how to derive continuous scores from agentic frameworks so that AUC remains usable.

Why AUC Matters and Why Binary Outputs Fail

AUC is often considered the gold standard metric in medical applications because it handles the imbalance between cases and controls better than simple accuracy, especially in datasets that reflect real-world prevalence.

Accuracy can be a misleading metric when disease prevalence is low. For example, breast cancer prevalence in a screening population is roughly 5 in 1000. A model that predicts “no cancer” for every case would still reach about 99.5% accuracy, but it would miss every cancer, a false negative rate of 100%. In a real clinical context, this is clearly a bad model, despite its accuracy.

AUC measures how well a model separates positive cases from negative cases. It does this by looking at a continuous score for each individual and asking how well these scores rank positives above negatives. This ranking-based view is why AUC remains useful even when classes are highly imbalanced.

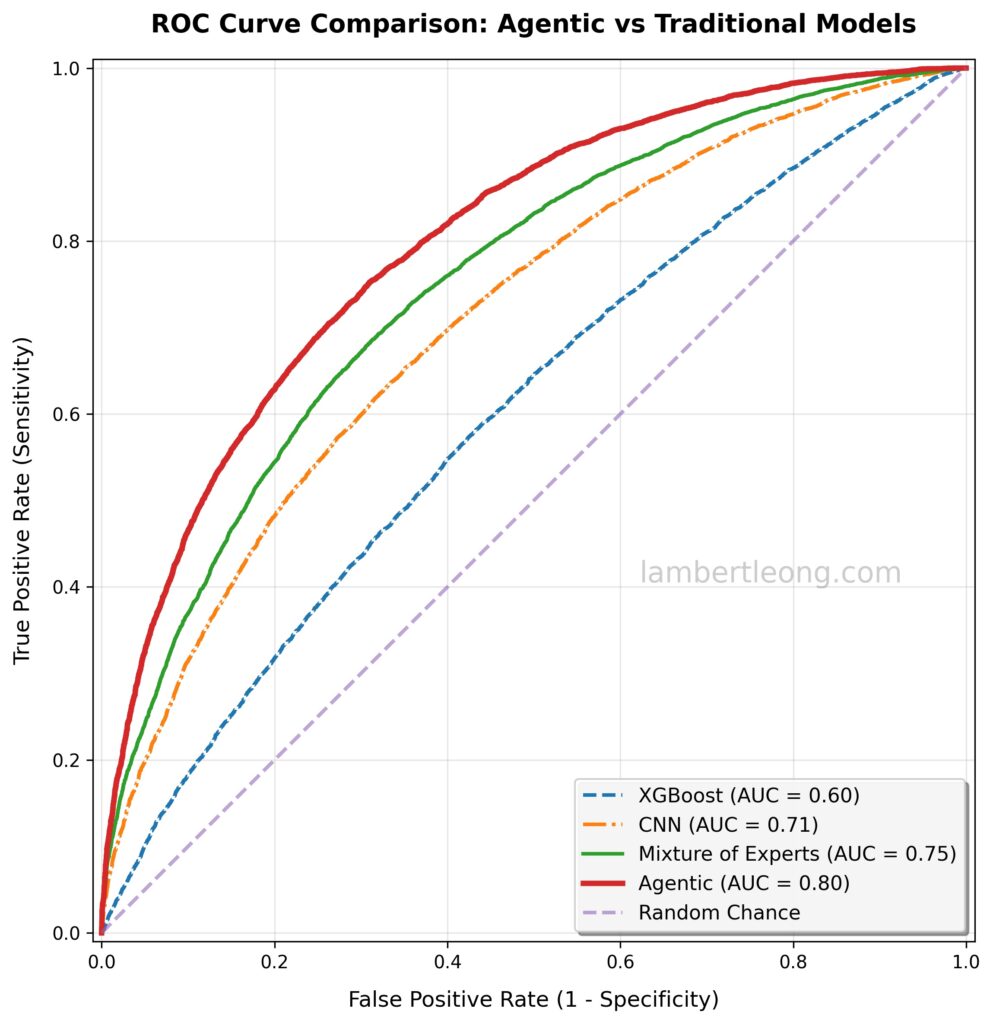

While I noticed great innovative work at the intersection of agents and health at NeurIPS, I didn’t see many papers that reported an AUC. I also didn’t see many that compared a new agentic approach to an existing or established conventional machine learning or deep learning model using standard metrics. Without this, it’s difficult to calibrate and understand how much better these agentic solutions actually are, if at all.

Most current agentic outputs don’t lend themselves naturally to obtaining AUCs. With this article, the goal is to propose methods to obtain AUC for agentic systems so that we can start a concrete conversation about performance gains compared to previous and existing solutions.

How AUC is computed

To fully understand the gap and appreciate attempts at a solution, we should review how AUCs are calculated.

Let

- y ∈ {0, 1} be the true label

- s ∈ ℝ be the model score for each individual

The ROC curve is constructed by sweeping a threshold t across the full range of scores and computing

- Sensitivity at every threshold

- Specificity at every threshold

AUC can then be interpreted as

The probability that a randomly chosen positive case has a higher score than a randomly chosen negative case.

This interpretation only makes sense if the scores contain enough granularity to induce a ranking across individuals. In practice, that means we need continuous or at least finely ordered values, not just zeros and ones.
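The ranking interpretation above can be checked directly with a few lines of code. The following is a minimal sketch, not a replacement for a tested library routine: the function name `pairwise_auc` and the toy labels and scores are my own illustrative assumptions. It compares every positive case with every negative case and counts ties as half a win.

```python
from itertools import product


def pairwise_auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    Ties between a positive and a negative score count as half a win.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative case")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p, n in product(pos, neg)
    )
    return wins / (len(pos) * len(neg))


# Toy data (illustrative only): 3 positives, 4 negatives.
labels = [1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.7, 0.4, 0.6, 0.3, 0.2, 0.1]
auc = pairwise_auc(labels, scores)
print(f"AUC = {auc:.4f}")
```

Here 11 of the 12 positive-negative pairs are ordered correctly, so the AUC is 11/12. The pairwise loop is quadratic in the number of patients, so for real datasets a library implementation is the more sensible choice.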

Why binary agentic outputs break AUC

Agentic systems often output only a binary decision. For example:

- “disease” mapped to 1

- “no disease” mapped to 0

If these are the only possible outputs, then there are only two unique scores. When we sweep thresholds over this set, the ROC curve collapses to at most one nontrivial point plus the trivial endpoints. There is no rich set of thresholds and no meaningful ranking.

In this case, the AUC becomes degenerate: with straight-line interpolation between the endpoints, it reduces to the average of sensitivity and specificity at the agent’s single operating point. It also can’t be fairly compared to AUC values from traditional models that output continuous probabilities.
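To make the collapse concrete, here is a small sketch with made-up labels and decisions (both are assumptions for illustration). It computes the area under the three-point ROC curve that a binary output produces, and shows that this area is nothing more than the mean of sensitivity and specificity.

```python
def single_point_auc(labels, decisions):
    """Area under the ROC curve through (0, 0), one operating point, and (1, 1)."""
    pairs = list(zip(labels, decisions))
    tp = sum(1 for y, d in pairs if y == 1 and d == 1)
    fn = sum(1 for y, d in pairs if y == 1 and d == 0)
    tn = sum(1 for y, d in pairs if y == 0 and d == 0)
    fp = sum(1 for y, d in pairs if y == 0 and d == 1)
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    fpr = 1.0 - specificity
    # Triangle from (0, 0) to the operating point, then a trapezoid up to (1, 1).
    area = fpr * sensitivity / 2.0 + (1.0 - fpr) * (sensitivity + 1.0) / 2.0
    return sensitivity, specificity, area


# Hypothetical binary agent decisions for 4 cases and 6 controls.
labels = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
decisions = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]
sensitivity, specificity, area = single_point_auc(labels, decisions)
print(f"sensitivity={sensitivity:.3f} specificity={specificity:.3f} area={area:.3f}")
```

The printed area equals (sensitivity + specificity) / 2, so it summarizes a single operating point and carries no information about ranking.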

To evaluate agentic solutions using AUC, we must create a continuous score that captures how strongly the agent believes that a case is positive.

What we need

To compute an AUC for an agentic system, we need a continuous score that reflects its underlying risk assessment, confidence, or ranking. The score does not have to be a perfectly calibrated probability. It only needs to provide an ordering across patients that is consistent with the agent’s internal notion of risk.

Below is a list of practical methods for transforming agentic outputs into such scores.

Methods To Derive Continuous Scores From Agentic Systems

- Extract internal model log probabilities.

- Ask the agent to output an explicit probability.

- Use Monte Carlo repeated sampling to estimate a probability.

- Convert retrieval similarity scores into risk scores.

- Train a calibration model on top of agent outputs.

- Sweep a tunable threshold or configuration inside the agent to approximate an ROC curve.

Comparison Table

| Method | Pros | Cons |
|---|---|---|
| Log probabilities | Continuous, stable signal that aligns with model reasoning and ranking | Requires access to logits and can be sensitive to prompt format |
| Explicit probability output | Simple, intuitive, and easy to communicate to clinicians and reviewers | Calibration quality depends on prompting and model behavior |
| Monte Carlo sampling | Captures the agent’s decision uncertainty without internal access | Computationally more expensive and requires multiple runs per patient |
| Retrieval similarity | Ideal for retrieval-based systems and simple to compute | May not fully reflect downstream decision logic or overall reasoning |
| Calibration model | Converts structured or categorical outputs into smooth risk scores and can improve calibration | Requires labeled data and adds a secondary model to the pipeline |
| Threshold sweeping | Works even when the agent only exposes binary outputs and a tunable parameter | Produces an approximate AUC that depends on how the parameter affects decisions |

In the next section, each method is described in more detail, including why it works, when it is most appropriate, and what limitations to keep in mind.

Method 1. Extract internal model log probabilities

I typically lean toward this method whenever I can access the model’s final output layer or token-level log probabilities. Not all APIs expose this information, but when they do, it tends to provide the most reliable and stable ranking signal. In my experience, using internal log probabilities often yields behavior closest to that of conventional classifiers, making downstream ROC analysis both straightforward and robust.

Idea

Many agentic systems rely on a large language model or other differentiable model internally. During decoding, these models compute token-level log probabilities. Even if the final output is a binary label, the model still evaluates how likely each option is.

If the agent decides between “disease” and “no disease” as its final outcome, we can extract:

- log p(disease)

- log p(no disease)

and define a continuous score such as:

- s = log p(disease) − log p(no disease)

This score is higher when the model favors the disease label and lower when it favors the no disease label.
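As a rough sketch of this score, suppose the two label log probabilities have already been pulled out of whatever API or model is in use. The dictionary layout, the label strings, and the function names below are assumptions for illustration, not any particular provider’s response format. If a label spans several tokens, its log probability would be the sum of the token-level values.

```python
import math


def log_odds_score(label_logprobs, positive="disease", negative="no disease"):
    """s = log p(positive) - log p(negative); higher means more disease-like."""
    return label_logprobs[positive] - label_logprobs[negative]


def two_label_probability(label_logprobs, positive="disease", negative="no disease"):
    """Renormalize over the two labels only (a sigmoid of the log-odds)."""
    s = log_odds_score(label_logprobs, positive, negative)
    return 1.0 / (1.0 + math.exp(-s))


# Hypothetical log probabilities for one patient; the remaining probability
# mass sits on other tokens, so these two need not sum to 1.
patient_logprobs = {"disease": math.log(0.6), "no disease": math.log(0.2)}

score = log_odds_score(patient_logprobs)
prob = two_label_probability(patient_logprobs)
print(f"log-odds score = {score:.4f}, two-label probability = {prob:.2f}")
```

Because the sigmoid is monotonic, the log-odds score and the renormalized probability rank patients identically, so either one gives the same AUC.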

Why this works

- Log probabilities are continuous and provide a smooth ranking signal.

- They directly encode the model’s preference between outcomes.

- They are a natural fit for ROC analysis, since AUC only needs ranking, not perfect calibration.

Best for

- Agentic frameworks that are clearly LLM based.

- Situations where you have access to token-level log probabilities through the model or API.

- Experiments where you care about precise ranking quality.

Warning

- Not all APIs expose log probabilities.

- The values can be sensitive to prompt formatting and output template choices, so it is important to keep these consistent across patients and models.

Method 2. Ask the agent to output a probability

This is the method I use most often in practice, and the one I see adopted most frequently in applied agentic systems. It works with standard APIs and does not require access to model internals. However, I have repeatedly encountered calibration issues. Even when agents are instructed to output probabilities between 0 and 1 (or 0 and 100), the resulting values are often still pseudo-binary, clustering near extremes such as above 90% or below 10%, with little representation in between. Meaningful calibration typically requires providing explicit reference examples, such as illustrating what 0%, 10%, or 20% risk looks like. This, however, adds extra prompt complexity and makes the approach slightly more fragile.

Idea

If the agent already produces step-by-step reasoning, we can extend the final step to include an estimated probability. For example, you can instruct the system:

After completing your reasoning, output a line of the form:

risk_probability:

that represents the probability that this patient has or will develop the disease.

The numeric value on this line becomes the continuous score.
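Pulling that number out of free text is worth doing defensively. The sketch below assumes the agent writes either a decimal between 0 and 1 or a percentage after `risk_probability:`; that numeric format, the regular expression, and the function name are my assumptions, so adapt them to whatever template you actually fix in the prompt.

```python
import re

# Matches e.g. "risk_probability: 0.27" or "risk_probability: 85%".
_RISK_LINE = re.compile(r"risk_probability:\s*([0-9]*\.?[0-9]+)\s*(%?)", re.IGNORECASE)


def parse_risk_probability(agent_output):
    """Return the last reported risk as a float in [0, 1], or None if unusable."""
    matches = _RISK_LINE.findall(agent_output)
    if not matches:
        return None  # The agent did not follow the output template.
    value_text, percent_sign = matches[-1]
    value = float(value_text)
    if percent_sign:
        value /= 100.0
    if not 0.0 <= value <= 1.0:
        return None  # Out-of-range values are treated as parse failures.
    return value


example = "Reasoning: dense tissue, prior biopsy...\nrisk_probability: 0.27"
print(parse_risk_probability(example))
```

Returning `None` instead of guessing keeps malformed outputs visible, so you can count and report them rather than silently scoring them as 0.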

Why this works

- It generates a direct continuous scalar output for each patient.

- It does not require low-level access to logits or internal layers.

- It is easy to explain to clinicians, collaborators, or reviewers who expect a numeric probability.

Best for

- Evaluation pipelines where interpretability and communication are important.

- Settings where you can modify prompts but not the underlying model internals.

- Early-stage experiments and prototypes.

Warning

- The returned probability may not be well calibrated without further adjustment.

- Small prompt changes can shift the distribution of probabilities, so prompt design should be fixed before serious evaluation.

Method 3. Use Monte Carlo repeated sampling

This is another method I have used to construct a prediction distribution and derive a probability estimate. When enough samples are generated per input, it works well and provides a tangible sense of uncertainty. The main drawback is cost: repeated sampling quickly becomes expensive in both time and compute. In practice, I have used this approach in combination with Method 2: first run repeated sampling to generate an empirical distribution and calibration examples, then switch to direct probability outputs (Method 2) once that range is better established.

Idea

Many agentic systems use stochastic sampling when they reason, retrieve information, or generate text. This randomness can be exploited to estimate an empirical probability.

For each patient:

- Run the agent on the same input N times.

- Count how many times it predicts disease.

- Define the score as

- s = (number of disease predictions) / N

This frequency behaves like an estimated probability of disease according to the agent.
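The steps above can be sketched as follows. The real agent call is replaced by `toy_agent`, a hypothetical stand-in that says “disease” with a fixed hidden probability; in practice `run_agent` would wrap one full stochastic run of your pipeline at a nonzero temperature.

```python
import random


def toy_agent(patient_risk, rng):
    """Hypothetical stand-in for one stochastic agent run: 1 = disease, 0 = no disease."""
    return 1 if rng.random() < patient_risk else 0


def monte_carlo_score(run_agent, patient, n_runs, rng):
    """s = (number of disease predictions) / N over repeated runs on the same input."""
    if n_runs <= 0:
        raise ValueError("n_runs must be positive")
    votes = sum(run_agent(patient, rng) for _ in range(n_runs))
    return votes / n_runs


rng = random.Random(0)  # Fixed seed so the sketch is reproducible.
score = monte_carlo_score(toy_agent, patient=0.3, n_runs=200, rng=rng)
print(f"estimated probability of disease: {score:.3f}")
```

With N runs the score can only take N + 1 distinct values, so very small N produces many ties between patients and a coarse ROC curve.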

Why this works

- It turns discrete yes or no predictions into a continuous probability estimate.

- It captures the agent’s internal uncertainty, as reflected in its sampling behavior.

- It does not require log probabilities or special access to the model.

Best for

- Stochastic LLM agents that produce different outputs when you change the random seed or temperature.

- Agentic pipelines that incorporate random choices in retrieval or planning.

- Scenarios where you want a conceptually simple probability estimate.

Warning

- Running N repeated inferences per patient increases computation time.

- The variance of the estimate decreases with N, so you need to choose N large enough for stability but small enough to remain efficient.
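One way to reason about the choice of N is the binomial standard error. This assumes the repeated runs are independent and each predicts disease with the same probability p, which is an idealization of real agent behavior; the function names are illustrative.

```python
import math


def standard_error(p, n_runs):
    """Standard error of a frequency estimate from n_runs independent yes/no runs."""
    return math.sqrt(p * (1.0 - p) / n_runs)


def runs_needed(target_se, p=0.5):
    """Smallest N whose standard error is at most target_se (p=0.5 is the worst case)."""
    exact = p * (1.0 - p) / target_se**2
    return math.ceil(exact - 1e-9)  # Small guard against floating-point round-off.


print(standard_error(0.5, 100))  # about 0.05
print(runs_needed(0.05))         # runs for a worst-case standard error of 0.05
print(runs_needed(0.01))         # a tighter target costs far more runs
```

Halving the standard error requires roughly four times as many runs, which is why the cost of this method grows quickly.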

Method 4. Convert retrieval similarity scores into risk scores

Idea

Retrieval-augmented agents typically query a vector database of past patients, clinical notes, or imaging-derived embeddings. The retrieval stage produces similarity scores between the current patient and stored exemplars.

If you have a set of high-risk or positive exemplars, you can define a score such as

- s = max_j similarity(x, e_j)

where x is the embedding of the current patient, e_j is the embedding of the j-th known positive case, and similarity is something like cosine similarity.

The more similar the patient is to previously seen positive cases, the higher the score.
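A minimal sketch of this score, using plain Python lists as embeddings and cosine similarity as the similarity function. The two-dimensional vectors are toy values chosen for illustration; a real system would reuse the similarity scores its vector database already returns.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length, nonzero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def retrieval_risk_score(patient_embedding, positive_exemplars):
    """s = max_j similarity(x, e_j) over embeddings of known positive cases."""
    return max(cosine_similarity(patient_embedding, e) for e in positive_exemplars)


# Toy exemplar embeddings for two known positive cases.
positive_exemplars = [[1.0, 0.0], [0.0, 1.0]]

score_close = retrieval_risk_score([2.0, 0.0], positive_exemplars)
score_far = retrieval_risk_score([-1.0, -1.0], positive_exemplars)
print(f"close patient: {score_close:.3f}, distant patient: {score_far:.3f}")
```

Taking the maximum makes the score depend on the single nearest positive exemplar. Averaging the top few similarities is a common alternative when individual exemplars are noisy.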

Why this works

- Similarity scores are naturally continuous and often well structured.

- Retrieval quality tends to track disease patterns if the exemplar set is chosen carefully.

- The scoring step exists even if the downstream agent logic makes only a binary decision.

Best for

- Retrieval-augmented-generation (RAG) agents.

- Systems that are explicitly prototype based.

- Situations where embedding and retrieval components are already well tuned.

Warning

- Retrieval similarity may capture only part of the reasoning that leads to the final decision.

- Biases in the embedding space can distort the score distribution and should be monitored.

Method 5. Train a calibration model on top of agent outputs

Idea

Some agentic systems output structured categories such as low, medium, or high risk, or generate explanations that follow a consistent template. These categorical or structured outputs can be converted to continuous scores using a small calibration model.

For example:

- Encode categories as features.

- Optionally embed textual explanations into vectors.

- Train logistic regression, isotonic regression, or another simple model to map these features to a risk probability.

The calibration model learns how to assign continuous scores based on how the agent’s outputs correlate with true labels.
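As a deliberately simple stand-in for logistic or isotonic regression, the sketch below maps each category to its smoothed observed event rate in a labeled set. This is not the regression approach named above, just the smallest calibrator that shows the idea; the function name, the smoothing parameter, and the toy data are all assumptions.

```python
from collections import defaultdict


def fit_category_calibrator(categories, labels, prior_strength=1.0):
    """Map each agent category to a smoothed observed rate of positive outcomes.

    Each category's rate is pulled toward the overall prevalence, with
    prior_strength acting like that many extra pseudo-observations.
    """
    overall_rate = sum(labels) / len(labels)
    counts = defaultdict(lambda: [0, 0])  # category -> [positives, total]
    for category, label in zip(categories, labels):
        counts[category][0] += label
        counts[category][1] += 1
    return {
        category: (positives + prior_strength * overall_rate) / (total + prior_strength)
        for category, (positives, total) in counts.items()
    }


# Toy labeled data: agent category and true outcome for 12 patients.
categories = ["low"] * 4 + ["medium"] * 4 + ["high"] * 4
labels = [0, 0, 0, 0, 0, 1, 0, 0, 1, 1, 1, 0]

calibrator = fit_category_calibrator(categories, labels)
risk_scores = [calibrator[c] for c in ["high", "low", "medium"]]
print(calibrator)
```

The calibrator should be fit on labeled data that is separate from the data used to compute the final AUC; fitting and evaluating on the same patients would make the result look better than it is.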

Why this works

- It converts coarse or discrete outputs into smooth, usable scores.

- It can improve calibration by aligning scores with observed outcome frequencies.

- It is aligned with established practice, such as mapping BI-RADS categories to breast cancer risk.

Best for

- Agents that output risk categories, scores on an internal scale, or structured explanations.

- Clinical workflows where calibrated probabilities are needed for decision support or shared decision making.

- Settings where labeled outcome data is available for fitting the calibration model.

Warning

- This approach introduces a second model that must be documented and maintained.

- It requires enough labeled data to train and validate the calibration step.

Method 6. Sweep a tunable threshold or configuration inside the agent

Idea

Some agentic systems expose configuration parameters that control how aggressive or conservative they are. Examples include:

- A sensitivity or risk tolerance setting.

- The number of retrieved documents.

- The number of reasoning steps to perform before making a decision.

If the agent remains strictly binary at each setting, you can treat the configuration parameter as a pseudo threshold:

- Choose several parameter values that range from conservative to aggressive.

- For each value, run the agent on all patients and record sensitivity and specificity.

- Plot these operating points to form an approximate ROC curve.

- Compute the area under this curve as an approximate AUC.
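Once the operating points have been recorded, the last two steps reduce to a trapezoidal integration. The sketch below assumes you already have one (sensitivity, specificity) pair per configuration; the three pairs shown are invented for illustration, and the function name is my own.

```python
def approximate_auc(operating_points):
    """Trapezoidal area under the ROC curve through the given operating points.

    operating_points is a list of (sensitivity, specificity) pairs, one per
    agent configuration. The trivial endpoints (0, 0) and (1, 1) are added.
    """
    roc = {(1.0 - spec, sens) for sens, spec in operating_points}
    roc |= {(0.0, 0.0), (1.0, 1.0)}
    points = sorted(roc)  # Sort by false positive rate, then sensitivity.
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2.0
    return area


# Hypothetical results from conservative, default, and aggressive settings.
operating_points = [(0.50, 0.90), (0.80, 0.70), (0.95, 0.40)]
auc_estimate = approximate_auc(operating_points)
print(f"approximate AUC = {auc_estimate:.4f}")
```

With only a handful of configurations, straight-line interpolation between points tends to underestimate the area of a concave ROC curve, so it helps to report the number of operating points alongside the estimate.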

Why this works

- It converts a rigid binary decision system into a collection of operating points.

- The resulting curve can be interpreted similarly to a conventional ROC curve, although the x axis is controlled indirectly through the configuration parameter rather than a direct score threshold.

- It is reminiscent of decision curve analysis, which also examines performance across a range of decision thresholds.

Best for

- Rule-based or deterministic agents with tunable configuration parameters.

- Systems where probabilities and logits are inaccessible.

- Scenarios where you care about trade-offs between sensitivity and specificity at different operating modes.

Warning

- The resulting AUC is approximate and based on parameter sweeps rather than direct score thresholds.

- Interpretation depends on understanding how the parameter affects the underlying decision logic.

Final Thoughts

Agentic systems are becoming central to AI, including medical use cases, but their tendency to output hard decisions conflicts with how we traditionally evaluate risk and detection models. AUC is still a standard reference point in many clinical and research settings, and AUC requires continuous scores that allow meaningful ranking of patients.

The methods in this post provide practical ways to bridge the gap. By extracting log probabilities, asking the agent for explicit probabilities, using repeated sampling, exploiting retrieval similarity, training a small calibration model, or sweeping configuration thresholds, we can construct continuous scores that respect the agent’s internal behavior and still support rigorous AUC-based comparisons.

This keeps new agentic solutions grounded against established baselines and allows us to evaluate them using the same language and methods that clinicians, statisticians, and reviewers already understand. With an AUC, we can truly evaluate whether the agentic system is adding value.


Originally published at https://www.lambertleong.com on December 20, 2025.