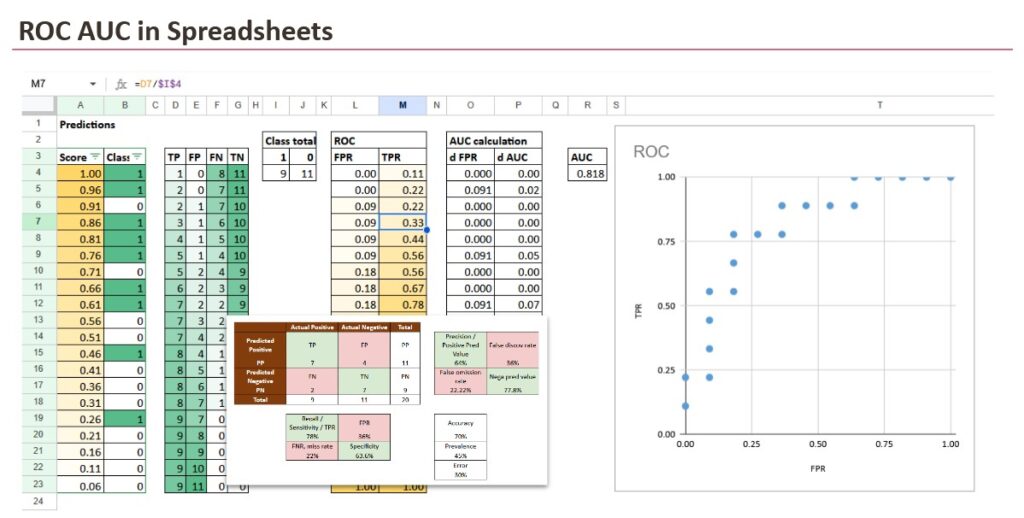

In this article, we’ll implement AUC in Excel.

AUC is often used as a performance metric for classification tasks.

But we start with a confusion matrix, because that’s where everybody starts in practice. Then we’ll see why a single confusion matrix is not enough.

And we will also answer these questions:

- AUC means Area Under the Curve, but under which curve?

- Where does that curve come from?

- Why is the area meaningful?

- Is AUC a probability? (Yes, it has a probabilistic interpretation)

1. Why a confusion matrix is not enough

1.1 Scores from models

A classifier will usually give us scores, not final decisions. The decision comes later, when we choose a threshold.

If you read the previous “Advent Calendar” articles, you have already seen that “score” can mean different things depending on the model family:

- Distance-based models (such as k-NN) often compute the proportion of neighbors for a given class (or a distance-based confidence), which becomes a score.

- Density-based models compute a likelihood under each class, then normalize to get a final (posterior) probability.

- Classification tree-based models often output the proportion of a given class among the training samples inside the leaf (that is why many points share the same score).

- Weight-based models (linear models, kernels, neural networks) compute a weighted sum or a non-linear score, and sometimes apply a calibration step (sigmoid, softmax, Platt scaling, etc.) to map it to a probability.

So no matter the approach, we end up with the same situation: a score per observation.

Then, in practice, we pick a threshold, often 0.5, and we convert scores into predicted classes.

And this is exactly where the confusion matrix enters the story.
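As a minimal sketch of that conversion step, the snippet below turns scores into predicted classes. The score values are made up for illustration, and the convention that a score exactly equal to the threshold counts as positive is an assumption, not something the article specifies.

```python
# Hypothetical scores for five observations (illustrative values only).
scores = [0.91, 0.62, 0.50, 0.38, 0.07]
threshold = 0.5

# Assumed convention: a score at or above the threshold is predicted positive.
predicted = [1 if s >= threshold else 0 for s in scores]

print(predicted)  # [1, 1, 1, 0, 0]
```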

1.2 The confusion matrix at one threshold

Once a threshold is chosen, every observation becomes a binary decision:

- predicted positive (1) or predicted negative (0)

From that, we can count four numbers:

- TP (True Positives): predicted 1 and actually 1

- TN (True Negatives): predicted 0 and actually 0

- FP (False Positives): predicted 1 but actually 0

- FN (False Negatives): predicted 0 but actually 1

This 2×2 counting table is the confusion matrix.

Then we typically compute ratios such as:

- Precision = TP / (TP + FP)

- Recall (TPR) = TP / (TP + FN)

- Specificity = TN / (TN + FP)

- FPR = FP / (FP + TN)

- Accuracy = (TP + TN) / Total

So far, everything is clean and intuitive.

But there is a hidden limitation: all these values depend on the threshold. So the confusion matrix evaluates the model at one operating point, not the model itself.
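To make the threshold dependence concrete, here is a small sketch that counts TP, FP, FN and TN and then applies the ratios listed above. The labels, the scores, and the helper names `confusion_counts`, `ratio` and `metrics` are all hypothetical, and the `>=` convention is an assumption.

```python
def confusion_counts(y_true, scores, threshold):
    """Count TP, FP, FN, TN when scores >= threshold are predicted positive."""
    tp = fp = fn = tn = 0
    for y, s in zip(y_true, scores):
        pred = 1 if s >= threshold else 0
        if pred == 1 and y == 1:
            tp += 1
        elif pred == 1 and y == 0:
            fp += 1
        elif pred == 0 and y == 1:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn


def ratio(num, den):
    """Return num / den, or None when the denominator is zero."""
    return num / den if den else None


def metrics(tp, fp, fn, tn):
    """Apply the ratio definitions from the list above."""
    return {
        "precision": ratio(tp, tp + fp),
        "recall": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
        "fpr": ratio(fp, fp + tn),
        "accuracy": ratio(tp + tn, tp + fp + fn + tn),
    }


# Hypothetical labels and scores, for illustration only.
y_true = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.1]

# Same model, same scores, two thresholds: the counts and ratios change.
for threshold in (0.5, 0.35):
    counts = confusion_counts(y_true, scores, threshold)
    print(threshold, counts, metrics(*counts))
```

With these made-up values, lowering the threshold from 0.5 to 0.35 moves one positive from FN to TP, so recall changes even though the scores are identical.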

1.3 When one threshold breaks everything

This is a strange example, but it still makes the point very clearly.

Imagine that your threshold is set to 0.50, and all scores are below 0.50.

Then the classifier predicts:

- Predicted Positive: none

- Predicted Negative: everyone

So you get:

- TP = 0, FP = 0

- FN = 10, TN = 10

This is a perfectly valid confusion matrix. It also creates a very strange feeling:

- Precision becomes `#DIV/0!` because there are no predicted positives.

- Recall is 0% because you did not capture any positive.

- Accuracy is 50%, which sounds “not too bad”, even though the model found nothing.

Nothing is wrong with the confusion matrix. The issue is the question we asked it to answer.

A confusion matrix answers: “How good is the model at this specific threshold?”

If the threshold is poorly chosen, the confusion matrix can make a model look useless, even when the scores contain real separation.

And in your table, the separation is visible: positives often have scores around 0.49, negatives are more around 0.20 or 0.10. The model is not random. Your threshold is simply too strict.

That is why a single threshold is not enough.

What we need instead is a way to evaluate the model across thresholds, not at a single one.
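The situation above can be reproduced with a short sketch. The data below is a hypothetical reconstruction: 10 positives at 0.49 and 10 negatives at 0.20 or 0.10, chosen only to match the counts in the example. The exact values and the helper name `confusion_counts` are assumptions.

```python
# Hypothetical data shaped like the example above (exact values are assumptions).
y_true = [1] * 10 + [0] * 10
scores = [0.49] * 10 + [0.20] * 5 + [0.10] * 5


def confusion_counts(y_true, scores, threshold):
    """Return (TP, FP, FN, TN), predicting positive when score >= threshold."""
    preds = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for y, p in zip(y_true, preds) if y == 1 and p == 1)
    fp = sum(1 for y, p in zip(y_true, preds) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(y_true, preds) if y == 1 and p == 0)
    tn = sum(1 for y, p in zip(y_true, preds) if y == 0 and p == 0)
    return tp, fp, fn, tn


for threshold in (0.50, 0.30):
    tp, fp, fn, tn = confusion_counts(y_true, scores, threshold)
    # Precision is undefined (Excel's #DIV/0!) when nothing is predicted positive.
    precision = tp / (tp + fp) if (tp + fp) else None
    recall = tp / (tp + fn)
    accuracy = (tp + tn) / len(y_true)
    print(threshold, (tp, fp, fn, tn), precision, recall, accuracy)
```

At 0.50 the sketch gives the degenerate matrix (TP = 0, FP = 0, FN = 10, TN = 10). At 0.30, with these assumed scores, every observation is classified correctly, which shows that the separation was in the scores all along.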

2. ROC

First we have to build the curve. AUC stands for Area Under the Curve, so we have to understand this curve.

2.1 What ROC means (and what it is)

Because the first question everyone should ask is: AUC under which curve?

The answer is:

AUC is the area under the ROC curve.

But this raises another question.

What is the ROC curve, and where does it come from?

ROC stands for Receiver Operating Characteristic. The name is historical (early signal detection), but the idea is modern and simple: it describes what happens when you change the decision threshold.

The ROC curve is a plot with:

- x-axis: FPR (False Positive Rate)

FPR = FP / (FP + TN)

- y-axis: TPR (True Positive Rate), also called Recall or Sensitivity

TPR = TP / (TP + FN)

Each threshold gives one point (FPR, TPR). When you connect all points, you get the ROC curve.

At this stage, one detail matters: the ROC curve is not directly observed; it is constructed by sweeping the threshold over the score ordering.

2.2 Building the ROC curve from scores

For each score, we can use it as a threshold (and of course, we could also define customized thresholds).

For every threshold:

- we compute TP, FP, FN, TN from the confusion matrix

- then we calculate FPR and TPR

So the ROC curve is simply the collection of all these (FPR, TPR) pairs, ordered from strict thresholds to permissive thresholds.

This is exactly what we’ll implement in Excel.
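The same sweep can be sketched outside the spreadsheet. The function below uses each distinct score as a threshold, recomputes TP and FP, and collects the (FPR, TPR) pairs from strict to permissive. The name `roc_points`, the data, and the `>=` convention are assumptions, and the sketch assumes both classes are present so that neither denominator is zero.

```python
def roc_points(y_true, scores):
    """Return (FPR, TPR) pairs, from the strictest threshold to the most permissive."""
    n_pos = sum(y_true)
    n_neg = len(y_true) - n_pos
    # A threshold above every score predicts no positives: the curve starts at (0, 0).
    points = [(0.0, 0.0)]
    for threshold in sorted(set(scores), reverse=True):
        tp = sum(1 for y, s in zip(y_true, scores) if y == 1 and s >= threshold)
        fp = sum(1 for y, s in zip(y_true, scores) if y == 0 and s >= threshold)
        points.append((fp / n_neg, tp / n_pos))
    return points


# Hypothetical labels and scores, for illustration only.
y_true = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.1]

for fpr, tpr in roc_points(y_true, scores):
    print(f"FPR={fpr:.2f}  TPR={tpr:.2f}")
```

Recomputing the counts for every threshold is deliberately naive here: it mirrors the "one confusion matrix per threshold" description rather than aiming for efficiency.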

At this point, it is important to notice something that feels almost too simple. When we build the ROC curve, the actual numeric values of the scores do not matter. What matters is the order.

If one model outputs scores between 0 and 1, another outputs scores between -12 and +5, and a third outputs only two distinct values, ROC works the same way. As long as higher scores tend to correspond to the positive class, the threshold sweep depends only on the ordering, so any rescaling that preserves the order creates the same sequence of decisions.

That is why the first step in Excel is always the same: sort by score from highest to lowest. Once the rows are in the right order, the rest is just counting.
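A quick way to check the "only the order matters" claim is to rescale the scores with order-preserving transforms and confirm that the sorted sequence of labels does not change. The data, the helper name `labels_in_score_order`, and the two transforms are hypothetical choices for illustration.

```python
import math

# Hypothetical labels and scores, for illustration only.
y_true = [0, 1, 1, 0, 1, 0]
scores = [0.30, 0.90, 0.40, 0.70, 0.80, 0.10]


def labels_in_score_order(y_true, scores):
    """Sort rows by score, highest first, and return the labels in that order."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return [y_true[i] for i in order]


# Two order-preserving rescalings of the same scores.
shifted = [17 * s - 12 for s in scores]            # linear map of [0, 1] onto [-12, +5]
logits = [math.log(s / (1 - s)) for s in scores]   # log-odds

print(labels_in_score_order(y_true, scores))   # [1, 1, 0, 1, 0, 0]
print(labels_in_score_order(y_true, shifted))  # [1, 1, 0, 1, 0, 0]
print(labels_in_score_order(y_true, logits))   # [1, 1, 0, 1, 0, 0]
```

Since the ROC construction only walks down this sorted list of labels, all three versions of the scores lead to the same curve.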

2.3 Reading the ROC curve

In the Excel sheet, the construction becomes very concrete.

You sort observations by Score, from highest to lowest. Then you walk down the list. At each row, you act as if the threshold is set to that score, meaning: everything at or above it is predicted positive.

That lets Excel compute cumulative counts:

- how many positives you have accepted so far

- how many negatives you have accepted so far

From these cumulative counts and the dataset totals, we compute TPR and FPR.

Now each row is one ROC level.

Why the ROC curve looks like a staircase

- When the next accepted row is a positive, TP increases, so TPR increases while FPR stays flat.

- When the next accepted row is a negative, FP increases, so FPR increases while TPR stays flat.

That is why, with real finite data, the ROC curve is a staircase. Excel makes this visible.
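The cumulative-count columns and the resulting staircase can be sketched as follows. The labels are hypothetical and assumed to be already sorted by score from highest to lowest, with no tied scores (a tie between a positive and a negative would produce a diagonal step instead).

```python
from itertools import accumulate

# Hypothetical labels, already sorted by score from highest to lowest.
y_sorted = [1, 1, 0, 1, 0, 0]

# Cumulative counts, like two running-sum columns in a spreadsheet.
cum_pos = list(accumulate(y_sorted))
cum_neg = list(accumulate(1 - y for y in y_sorted))
n_pos, n_neg = cum_pos[-1], cum_neg[-1]

moves = []
prev_tpr = 0.0
for label, cp, cn in zip(y_sorted, cum_pos, cum_neg):
    tpr, fpr = cp / n_pos, cn / n_neg
    # A positive row raises TPR (step up); a negative row raises FPR (step right).
    move = "up" if tpr > prev_tpr else "right"
    moves.append(move)
    print(f"label={label}  TPR={tpr:.2f}  FPR={fpr:.2f}  step={move}")
    prev_tpr = tpr

print(moves)  # ['up', 'up', 'right', 'up', 'right', 'right']
```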

2.4 Reference cases you should recognize

A few reference cases help you read the curve immediately:

- Perfect classification: the curve goes straight up (TPR reaches 1 while FPR stays 0), then goes right along the top.

- Random classifier: the curve stays close to the diagonal line from (0,0) to (1,1).

- Inverted ranking: the curve falls “below” the diagonal, and the AUC becomes smaller than 0.5. In that case, the fix is to replace each score with 1 - score. In theory, we can imagine this as a hypothetical case. In practice, it usually happens when scores are interpreted in the wrong direction or class labels are swapped.

These are not just theory. They are visual anchors. Once you have them, you can interpret any real ROC curve quickly.

3. ROC AUC

Now, with the curve, what can we do?

3.1 Computing the area

Once the ROC curve exists as a list of points (FPR, TPR), the AUC is pure geometry.

Between two consecutive points, the area added is the area of a trapezoid:

- width = change in FPR

- height = average TPR of the two points

In Excel, this becomes a “delta column” approach:

- compute dFPR between consecutive rows

- multiply by the average TPR

- sum everything

Different cases:

- perfect classification: AUC = 1

- random ranking: AUC ≈ 0.5

- inverted ranking: AUC < 0.5

So the AUC is literally a summary of the whole ROC staircase.
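The delta-column computation can be sketched in a few lines. The helper names `roc_points` and `trapezoid_auc` and all the data are hypothetical. The "constant score" case stands in for a model with no ranking information: its curve is the diagonal itself, so the area is 0.5.

```python
def roc_points(y_true, scores):
    """(FPR, TPR) pairs from strict to permissive thresholds, starting at (0, 0)."""
    n_pos = sum(y_true)
    n_neg = len(y_true) - n_pos
    points = [(0.0, 0.0)]
    for threshold in sorted(set(scores), reverse=True):
        tp = sum(1 for y, s in zip(y_true, scores) if y == 1 and s >= threshold)
        fp = sum(1 for y, s in zip(y_true, scores) if y == 0 and s >= threshold)
        points.append((fp / n_neg, tp / n_pos))
    return points


def trapezoid_auc(points):
    """Sum dFPR * average TPR over consecutive ROC points."""
    area = 0.0
    for (fpr0, tpr0), (fpr1, tpr1) in zip(points, points[1:]):
        d_fpr = fpr1 - fpr0
        area += d_fpr * (tpr0 + tpr1) / 2
    return area


# Hypothetical scores and label patterns, for illustration only.
scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.1]
cases = {
    "perfect": ([1, 1, 1, 0, 0, 0], scores),
    "mixed": ([1, 1, 0, 1, 0, 0], scores),
    "inverted": ([0, 0, 0, 1, 1, 1], scores),
    "constant score": ([1, 1, 0, 1, 0, 0], [0.5] * 6),
}

results = {name: trapezoid_auc(roc_points(y, s)) for name, (y, s) in cases.items()}
for name, auc in results.items():
    print(f"{name}: AUC = {auc:.3f}")
# perfect: AUC = 1.000
# mixed: AUC = 0.889
# inverted: AUC = 0.000
# constant score: AUC = 0.500
```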

3.2 AUC as a probability

AUC is not about choosing a threshold.

It answers a much simpler question:

If I randomly pick one positive example and one negative example, what is the probability that the model assigns a higher score to the positive one?

That’s all.

- AUC = 1.0 means perfect ranking (the positive always gets a higher score)

- AUC = 0.5 means random ranking (it is basically a coin flip)

- AUC < 0.5 means the ranking is inverted (negatives tend to get higher scores)

This interpretation is extremely useful, because it explains once again this important point:

AUC only depends on score ordering, not on the absolute values.

This is why ROC AUC works even when the “scores” are not perfectly calibrated probabilities. They can be raw scores, margins, leaf proportions, or any monotonic confidence value. As long as higher means “more likely positive”, AUC can evaluate the ranking quality.
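The probabilistic reading can be checked directly by enumerating every (positive, negative) pair. In this sketch, the name `pairwise_auc` and the data are hypothetical, and counting a tied pair as half a win is the usual convention assumed here to stay consistent with the trapezoid area.

```python
from itertools import product


def pairwise_auc(y_true, scores):
    """Fraction of (positive, negative) pairs where the positive scores higher."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    wins = 0.0
    for p, n in product(pos, neg):
        if p > n:
            wins += 1.0
        elif p == n:
            wins += 0.5  # assumed convention: a tie counts as half a win
    return wins / (len(pos) * len(neg))


# Hypothetical labels and scores, for illustration only.
y_true = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.1]

# An order-preserving rescaling to "raw" uncalibrated values.
raw_margins = [17 * s - 12 for s in scores]

auc_scores = pairwise_auc(y_true, scores)
auc_margins = pairwise_auc(y_true, raw_margins)
print(f"{auc_scores:.3f} {auc_margins:.3f}")  # 0.889 0.889
```

Here 8 of the 9 positive-negative pairs are ranked correctly, and the rescaled scores give the same value because only the comparisons `p > n` enter the computation.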

Conclusion

A confusion matrix evaluates a model at one threshold, but classifiers produce scores, not decisions.

ROC and AUC evaluate the model across all thresholds by focusing on ranking, not calibration.

In the end, AUC answers a simple question: how often does a positive example receive a higher score than a negative one?

Seen this way, ROC AUC is an intuitive metric, and a spreadsheet is enough to make every step explicit.