It's exciting to see the GenAI industry starting to move towards standardisation. We might be witnessing something similar to the early days of the internet, when HTTP (HyperText Transfer Protocol) first emerged. When Tim Berners-Lee developed HTTP in 1990, it provided a simple yet extensible protocol that transformed the internet from a specialised research network into the globally accessible World Wide Web. By 1993, web browsers like Mosaic had made HTTP so popular that web traffic quickly outpaced other systems.

One promising step in this direction is MCP (Model Context Protocol), developed by Anthropic. MCP is gaining popularity with its attempts to standardise the interactions between LLMs and external tools or data sources. More recently (the first commit is dated April 2025), a new protocol called ACP (Agent Communication Protocol) appeared. It complements MCP by defining the ways in which agents can communicate with each other.

In this article, I would like to discuss what ACP is, why it can be useful and how it can be used in practice. We will build a multi-agent AI system for interacting with data.

ACP overview

Before jumping into practice, let’s take a moment to understand the theory behind ACP and how it works under the hood.

ACP (Agent Communication Protocol) is an open protocol designed to address the growing challenge of connecting AI agents, applications, and humans. The current GenAI industry is quite fragmented, with different teams building agents in isolation using various, often incompatible frameworks and technologies. This fragmentation slows down innovation and makes it difficult for agents to collaborate effectively.

To address this challenge, ACP aims to standardise communication between agents via RESTful APIs. The protocol is framework- and technology-agnostic, meaning it can be used with any agentic framework, such as LangChain, CrewAI, smolagents, or others. This flexibility makes it easier to build interoperable systems where agents can seamlessly work together, regardless of how they were originally developed.
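To make the REST-based design more concrete, here is a minimal sketch of what a request asking an agent to run might look like. The `/runs` path, the JSON field names (`agent_name`, `mode`, `input`) and the helper `build_run_request` are assumptions for illustration based on my reading of the ACP specification, so check the official spec before relying on them. The request is only built, not sent, so the snippet runs without a server.

```python
import json
import urllib.request


def build_run_request(base_url: str, agent_name: str, text: str) -> urllib.request.Request:
    """Build (but do not send) an HTTP request that asks an agent to run.

    The endpoint path and JSON field names are assumptions, not verified
    against a live ACP server.
    """
    payload = {
        "agent_name": agent_name,
        "mode": "sync",
        "input": [
            {"parts": [{"content": text, "content_type": "text/plain"}]}
        ],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/runs",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_run_request("http://localhost:8001", "db_agent", "select 1 as test")
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

Because the exchange is plain HTTP with a JSON body, any language or framework that can issue such a request could in principle talk to an agent, which is the point of the framework-agnostic design.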

This protocol has been developed as an open standard under the Linux Foundation, alongside BeeAI (its reference implementation). One of the key points the team emphasises is that ACP is openly governed and shaped by the community, rather than a group of vendors.

What benefits can ACP bring?

- Easily replaceable agents. With the current pace of innovation in the GenAI space, new cutting-edge technologies are emerging all the time. ACP enables agents to be swapped in production seamlessly, reducing maintenance costs and making it easier to adopt the most advanced tools when they become available.

- Enabling collaboration between multiple agents built on different frameworks. As we know from people management, specialisation often leads to better results. The same applies to agentic systems. A group of agents, each focused on a specific task (like writing Python code or researching the web), can often outperform a single agent trying to do everything. ACP makes it possible for such specialised agents to communicate and work together, even if they are built using different frameworks or technologies.

- New opportunities for partnerships. With a unified standard for agents’ communication, it would be easier for agents to collaborate, sparking new partnerships between different teams within the company or even different companies. Imagine a world where your smart home agent notices the temperature dropping unusually, determines that the heating system has failed, and checks with your utility provider’s agent to confirm there are no planned outages. Finally, it books a technician, coordinating the visit with your Google Calendar agent to make sure you’re home. It may sound futuristic, but with ACP, it can be pretty close.

We’ve covered what ACP is and why it matters. The protocol looks quite promising. So, let’s put it to the test and see how it works in practice.

ACP in practice

Let’s try ACP in a classic “talk to data” use case. To take advantage of ACP being framework-agnostic, we will build ACP agents with different frameworks:

- SQL Agent with CrewAI to compose SQL queries

- DB Agent with HuggingFace smolagents to execute these queries.

I won’t go into the details of each framework here, but if you’re curious, I’ve written in-depth articles on both of them:

– “Multi AI Agent Systems 101” about CrewAI,

– “Code Agents: The Future of Agentic AI” about smolagents.

Building the DB agent

Let’s start with the DB agent. As I mentioned earlier, ACP can be complemented by MCP. So, I will use tools from my analytics toolbox via the MCP server. You can find the MCP server implementation on GitHub. For a deeper dive and step-by-step instructions, check my previous article, where I covered MCP in detail.

The code itself is pretty straightforward: we initialise the ACP server and use the @server.agent() decorator to define our agent function. This function expects a list of messages as input and returns a generator.

from collections.abc import AsyncGenerator
from acp_sdk.models import Message, MessagePart
from acp_sdk.server import Context, RunYield, RunYieldResume, Server
from smolagents import LiteLLMModel, ToolCallingAgent, ToolCollection
import logging
from dotenv import load_dotenv
from mcp import StdioServerParameters

load_dotenv()

# initialise ACP server
server = Server()

# initialise LLM
model = LiteLLMModel(
    model_id="openai/gpt-4o-mini",
    max_tokens=2048
)

# define config for MCP server to connect
server_parameters = StdioServerParameters(
    command="uv",
    args=[
        "--directory",
        "/Users/marie/Documents/github/mcp-analyst-toolkit/src/mcp_server",
        "run",
        "server.py"
    ],
    env=None
)

@server.agent()
async def db_agent(input: list[Message], context: Context) -> AsyncGenerator[RunYield, RunYieldResume]:
    "This is a CodeAgent that can execute SQL queries against the ClickHouse database."
    with ToolCollection.from_mcp(server_parameters, trust_remote_code=True) as tool_collection:
        agent = ToolCallingAgent(tools=[*tool_collection.tools], model=model)
        # the question is the first part of the first incoming message
        question = input[0].parts[0].content
        response = agent.run(question)
    yield Message(parts=[MessagePart(content=str(response))])

if __name__ == "__main__":
    server.run(port=8001)

We will also need to set up a Python environment. I will be using the uv package manager for this.

uv init --name acp-sql-agent
uv venv
source .venv/bin/activate
uv add acp-sdk "smolagents[litellm]" python-dotenv mcp "smolagents[mcp]" ipykernel

Then, we can run the agent using the following command.

uv run db_agent.py

If everything is set up correctly, you will see a server running on port 8001. We will need an ACP client to verify that it’s working as expected. Bear with me, we will test it shortly.
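The contract of the agent function (a list of messages in, an async generator of messages out) can be illustrated without the SDK. The sketch below uses only the standard library; `Part`, `Msg`, `echo_agent` and `collect` are hypothetical stand-ins, not acp_sdk classes or functions.

```python
import asyncio
from collections.abc import AsyncGenerator
from dataclasses import dataclass, field


@dataclass
class Part:  # hypothetical stand-in for a message part
    content: str


@dataclass
class Msg:  # hypothetical stand-in for a message made of parts
    parts: list[Part] = field(default_factory=list)


async def echo_agent(input: list[Msg]) -> AsyncGenerator[Msg, None]:
    """Read the first part of the first message and yield a single reply."""
    question = input[0].parts[0].content
    yield Msg(parts=[Part(content=f"received: {question}")])


async def collect(question: str) -> list[str]:
    """Drive the generator and gather the text of every yielded message."""
    replies = []
    async for message in echo_agent([Msg(parts=[Part(content=question)])]):
        replies.append(message.parts[0].content)
    return replies


print(asyncio.run(collect("select 1 as test")))
```

Returning a generator rather than a single value leaves room for an agent to yield several messages over the course of one run, even though the DB agent above yields only one.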

Building the SQL agent

Before that, let’s build a SQL agent that can compose queries. We will use the CrewAI framework for this. Our agent will reference the knowledge base of questions and queries to generate answers. So, we will equip it with a RAG (Retrieval Augmented Generation) tool.

First, we will initialise the RAG tool and load the reference file clickhouse_queries.txt. Next, we will create a CrewAI agent by specifying its role, goal and backstory. Finally, we’ll create a task and package everything together into a Crew object.

from crewai import Crew, Task, Agent, LLM
from crewai.tools import BaseTool
from crewai_tools import RagTool
from collections.abc import AsyncGenerator
from acp_sdk.models import Message, MessagePart
from acp_sdk.server import RunYield, RunYieldResume, Server
import json
import os
from datetime import datetime
from typing import Type
from pydantic import BaseModel, Field
import nest_asyncio

nest_asyncio.apply()

# config for RAG tool
config = {
    "llm": {
        "provider": "openai",
        "config": {
            "model": "gpt-4o-mini",
        }
    },
    "embedding_model": {
        "provider": "openai",
        "config": {
            "model": "text-embedding-ada-002"
        }
    }
}

# initialise tool
rag_tool = RagTool(
    config=config,
    chunk_size=1200,
    chunk_overlap=200)
rag_tool.add("clickhouse_queries.txt")

# initialise ACP server
server = Server()

# initialise LLM
llm = LLM(model="openai/gpt-4o-mini", max_tokens=2048)

@server.agent()
async def sql_agent(input: list[Message]) -> AsyncGenerator[RunYield, RunYieldResume]:
    "This agent knows the database schema and can return SQL queries to answer questions about the data."

    # create agent
    sql_agent = Agent(
        role="Senior SQL analyst",
        goal="Write SQL queries to answer questions about the e-commerce analytics database.",
        backstory="""
You are an expert in ClickHouse SQL queries with over 10 years of experience. You are familiar with the e-commerce analytics database schema and can write optimized queries to extract insights.

## Database Schema

You are working with an e-commerce analytics database containing the following tables:

### Table: ecommerce.users
**Description:** Customer information for the online shop
**Primary Key:** user_id
**Fields:**
- user_id (Int64) - Unique customer identifier (e.g., 1000004, 3000004)
- country (String) - Customer's country of residence (e.g., "Netherlands", "United Kingdom")
- is_active (Int8) - Customer status: 1 = active, 0 = inactive
- age (Int32) - Customer age in full years (e.g., 31, 72)

### Table: ecommerce.sessions
**Description:** User session data and transaction records
**Primary Key:** session_id
**Foreign Key:** user_id (references ecommerce.users.user_id)
**Fields:**
- user_id (Int64) - Customer identifier linking to users table (e.g., 1000004, 3000004)
- session_id (Int64) - Unique session identifier (e.g., 106, 1023)
- action_date (Date) - Session start date (e.g., "2021-01-03", "2024-12-02")
- session_duration (Int32) - Session duration in seconds (e.g., 125, 49)
- os (String) - Operating system used (e.g., "Windows", "Android", "iOS", "MacOS")
- browser (String) - Browser used (e.g., "Chrome", "Safari", "Firefox", "Edge")
- is_fraud (Int8) - Fraud indicator: 1 = fraudulent session, 0 = legitimate
- revenue (Float64) - Purchase amount in USD (0.0 for non-purchase sessions, >0 for purchases)

## ClickHouse-Specific Guidelines

1. **Use ClickHouse-optimized functions:**
- uniqExact() for precise unique counts
- uniqExactIf() for conditional unique counts
- quantile() functions for percentiles
- Date functions: toStartOfMonth(), toStartOfYear(), today()

2. **Query formatting requirements:**
- Always end queries with "format TabSeparatedWithNames"
- Use meaningful column aliases
- Use proper JOIN syntax when combining tables
- Wrap date literals in quotes (e.g., '2024-01-01')

3. **Performance considerations:**
- Use appropriate WHERE clauses to filter data
- Consider using HAVING for post-aggregation filtering
- Use LIMIT when finding top/bottom results

4. **Data interpretation:**
- revenue > 0 indicates a purchase session
- revenue = 0 indicates a browsing session without purchase
- is_fraud = 1 sessions should typically be excluded from business metrics unless specifically analyzing fraud

## Response Format

Provide only the SQL query as your answer. Include brief reasoning in comments if the query logic is complex.
""",
        verbose=True,
        allow_delegation=False,
        llm=llm,
        tools=[rag_tool],
        max_retry_limit=5
    )

    # create task
    task1 = Task(
        description=input[0].parts[0].content,
        expected_output="Reliable SQL query that answers the question based on the e-commerce analytics database schema.",
        agent=sql_agent
    )

    # create crew
    crew = Crew(agents=[sql_agent], tasks=[task1], verbose=True)

    # execute agent
    task_output = await crew.kickoff_async()
    yield Message(parts=[MessagePart(content=str(task_output))])

if __name__ == "__main__":
    server.run(port=8002)

We will also need to add any missing packages to uv before running the server.

uv add crewai crewai_tools nest-asyncio
uv run sql_agent.py

Now, the second agent is running on port 8002. With both servers up and running, it’s time to check whether they’re working properly.
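The chunk_size=1200 and chunk_overlap=200 settings passed to the RAG tool control how the reference file is split before it is embedded. The sketch below illustrates the general idea of fixed-size chunking with overlap; `chunk_text` is a hypothetical helper and is not a description of how RagTool splits text internally.

```python
def chunk_text(text: str, chunk_size: int, chunk_overlap: int) -> list[str]:
    """Split text into windows of chunk_size characters.

    Consecutive windows share chunk_overlap characters, so a query example
    that straddles a boundary still appears whole in at least one chunk
    (as long as it is shorter than the overlap).
    """
    if chunk_size <= 0 or not 0 <= chunk_overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= chunk_overlap < chunk_size")
    step = chunk_size - chunk_overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last window already reaches the end of the text
    return chunks


# A 3000-character document with the same settings as the RAG tool config.
chunks = chunk_text("x" * 3000, chunk_size=1200, chunk_overlap=200)
print([len(chunk) for chunk in chunks])  # [1200, 1200, 1000]
```

Larger chunks keep each question and its query together, while the overlap reduces the chance that a relevant example is cut in half at a boundary.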

Calling an ACP agent with a client

Now that we’re ready to test our agents, we’ll use the ACP client to run them synchronously. For that, we need to initialise a Client with the server URL and use the run_sync function specifying the agent’s name and input.

import os
import nest_asyncio
nest_asyncio.apply()
from acp_sdk.client import Client
import asyncio

# Set your OpenAI API key here (or use environment variable)
# os.environ["OPENAI_API_KEY"] = "your-api-key-here"

async def example() -> None:
    async with Client(base_url="http://localhost:8001") as client1:
        run1 = await client1.run_sync(
            agent="db_agent", input="select 1 as test"
        )
        print(' DB agent response:')
        print(run1.output[0].parts[0].content)

    async with Client(base_url="http://localhost:8002") as client2:
        run2 = await client2.run_sync(
            agent="sql_agent", input="How many customers did we have in May 2024?"
        )
        print(' SQL agent response:')
        print(run2.output[0].parts[0].content)

if __name__ == "__main__":
    asyncio.run(example())

# DB agent response:
# 1
# SQL agent response:
# ```
# SELECT COUNT(DISTINCT user_id) AS total_customers
# FROM ecommerce.users
# WHERE is_active = 1
# AND user_id IN (
#     SELECT DISTINCT user_id
#     FROM ecommerce.sessions
#     WHERE action_date >= '2024-05-01' AND action_date < '2024-06-01'
# )
# format TabSeparatedWithNames

We got the expected results from both servers, so it looks like everything is working as intended.

💡Tip: You can check the full execution logs in the terminal where each server is running.

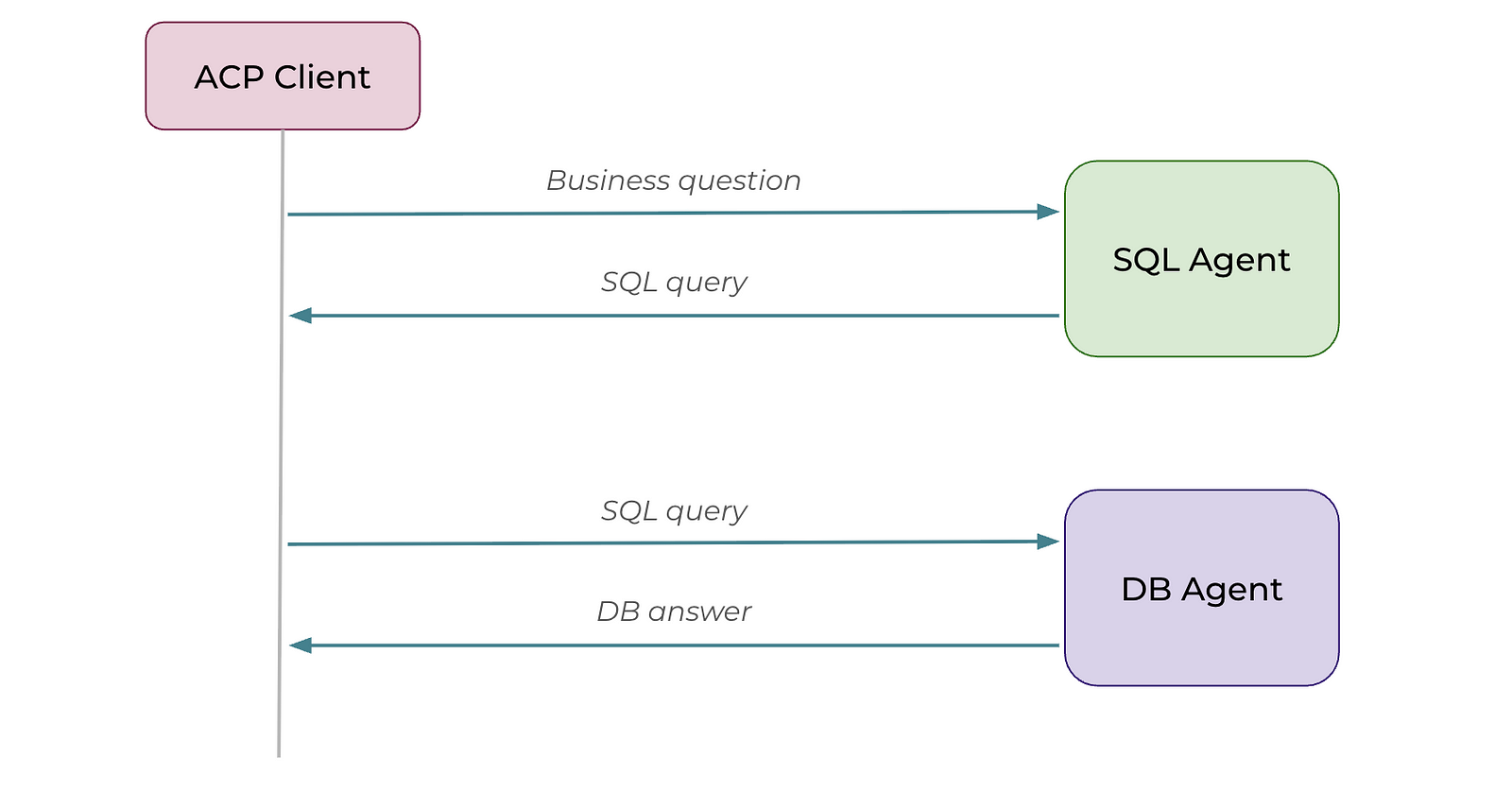

Chaining agents sequentially

To answer actual questions from customers, we need both agents to work together. Let’s chain them one after the other. So, we will first call the SQL agent and then pass the generated SQL query to the DB agent for execution.
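Before the real client code, the data flow can be sketched with stand-in functions. In the earlier test, the SQL agent's reply began with a code fence, so the sketch also shows one optional way to pull out just the query before handing it over. All names here (`extract_sql`, `fake_sql_agent`, `fake_db_agent`, `chain`) are hypothetical and not part of acp_sdk.

```python
import re

FENCE = "`" * 3  # three backticks, built this way to keep the example readable


def extract_sql(reply: str) -> str:
    """Return the body of the first fenced block, or the whole reply if none exists."""
    pattern = re.compile(FENCE + r"(?:sql)?[ \t]*\n(.*?)" + FENCE, re.DOTALL | re.IGNORECASE)
    match = pattern.search(reply)
    return (match.group(1) if match else reply).strip()


def fake_sql_agent(question: str) -> str:
    """Hypothetical stand-in for the SQL agent: reasoning text plus a fenced query."""
    return f"Thought: answering '{question}'\n{FENCE}sql\nSELECT 1 AS test\n{FENCE}"


def fake_db_agent(query: str) -> str:
    """Hypothetical stand-in for the DB agent: echoes the query it would execute."""
    return f"executed: {query}"


def chain(question: str) -> str:
    """Call the first agent, clean its reply, and hand the result to the second."""
    return fake_db_agent(extract_sql(fake_sql_agent(question)))


print(chain("How many customers did we have in May 2024?"))
```

Whether this cleaning step is needed depends on the receiving agent. An LLM-based DB agent can usually cope with surrounding text, so treat the extraction as a defensive option, not a requirement.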

Here’s the code to chain the agents. It’s quite similar to what we used earlier to test each server individually. The main difference is that we now pass the output from the SQL agent directly into the DB agent.

async def example() -> None:
    async with Client(base_url="http://localhost:8001") as db_agent, Client(base_url="http://localhost:8002") as sql_agent:
        question = 'How many customers did we have in May 2024?'
        sql_query = await sql_agent.run_sync(
            agent="sql_agent", input=question
        )
        print('SQL query generated by SQL agent:')
        print(sql_query.output[0].parts[0].content)

        # pass the SQL agent's output straight to the DB agent
        answer = await db_agent.run_sync(
            agent="db_agent", input=sql_query.output[0].parts[0].content
        )
        print('Answer from DB agent:')
        print(answer.output[0].parts[0].content)

asyncio.run(example())

Everything worked smoothly, and we got the expected output.

SQL query generated by SQL agent:
Thought: I need to craft a SQL query to count the number of unique customers
who were active in May 2024 based on their sessions.
```sql
SELECT COUNT(DISTINCT u.user_id) AS active_customers
FROM ecommerce.users AS u
JOIN ecommerce.sessions AS s ON u.user_id = s.user_id
WHERE u.is_active = 1
AND s.action_date >= '2024-05-01'
AND s.action_date < '2024-06-01'
FORMAT TabSeparatedWithNames
```
Answer from DB agent:
234544

Router pattern

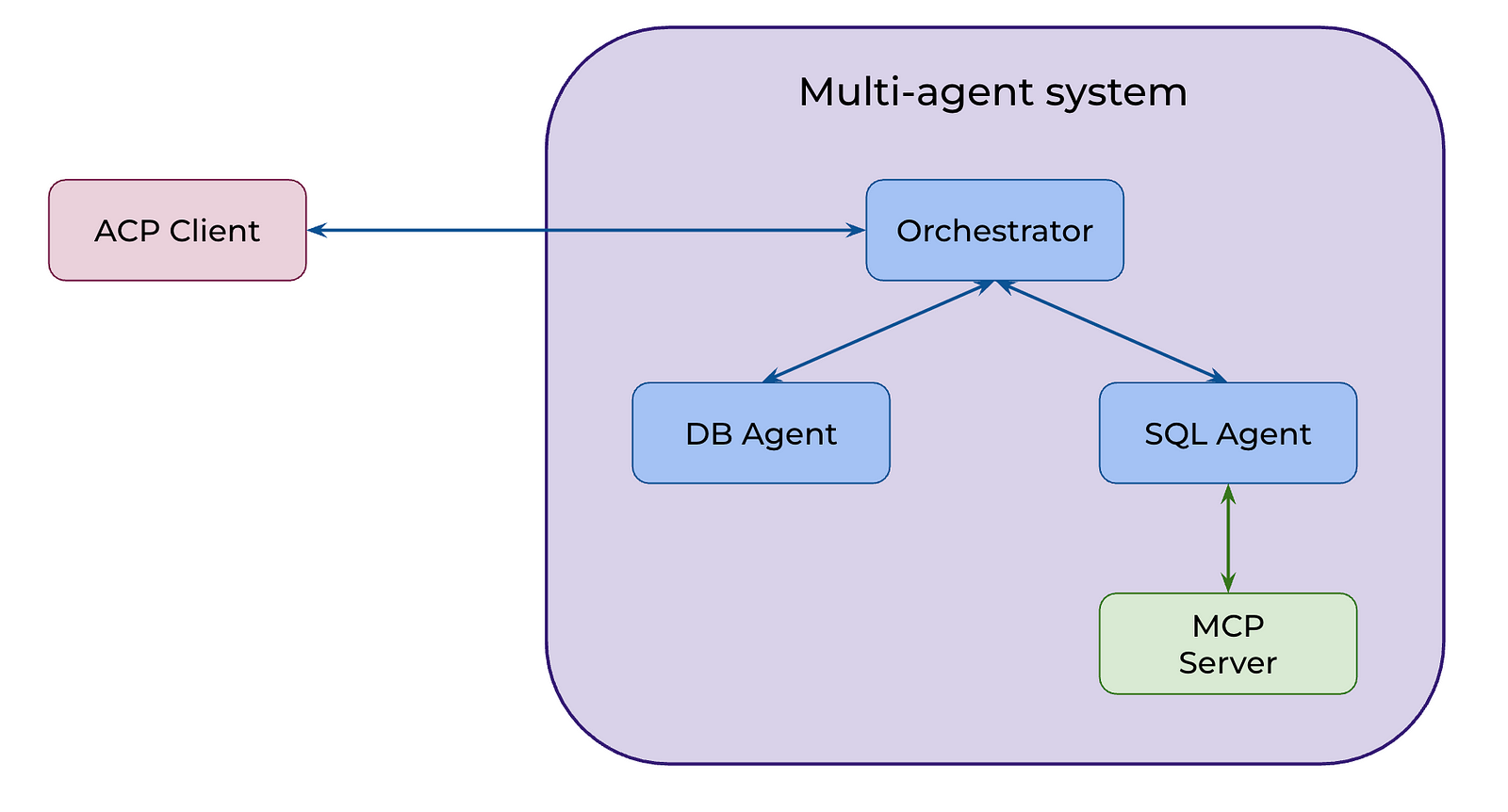

In some use cases, the path is static and well-defined, and we can chain agents directly as we did earlier. However, more often we expect LLM agents to reason independently and decide which tools or agents to use to achieve a goal. To solve for such cases, we will implement a router pattern using ACP. We will create a new agent (the orchestrator) that can delegate tasks to DB and SQL agents.
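Stripped of any framework, the router pattern is a loop: a decision step picks the next tool, the tool runs, and its output feeds the next decision. The sketch below is a minimal illustration using only the standard library. The scripted `scripted_decide` function stands in for the LLM's reasoning, and every name in it is hypothetical, not part of ACP or BeeAI.

```python
from typing import Callable, Optional

History = list[tuple[str, str]]          # (step name, output) pairs
Decision = Optional[tuple[str, str]]     # (tool name, tool input), or None when done


def sql_tool(question: str) -> str:
    """Hypothetical stand-in for the SQL agent."""
    return "SELECT 1 AS test"


def db_tool(query: str) -> str:
    """Hypothetical stand-in for the DB agent."""
    return f"rows for: {query}"


TOOLS: dict[str, Callable[[str], str]] = {"sql_tool": sql_tool, "db_tool": db_tool}


def scripted_decide(history: History) -> Decision:
    """Stand-in for the LLM: choose the next tool from the latest step."""
    last_step, last_output = history[-1]
    if last_step == "question":
        return ("sql_tool", last_output)
    if last_step == "sql_tool":
        return ("db_tool", last_output)
    return None  # nothing left to do


def run_router(question: str, tools: dict[str, Callable[[str], str]],
               decide: Callable[[History], Decision], max_steps: int = 5) -> History:
    """Loop until the decision step says stop or the step budget runs out."""
    history: History = [("question", question)]
    for _ in range(max_steps):
        decision = decide(history)
        if decision is None:
            break
        tool_name, tool_input = decision
        history.append((tool_name, tools[tool_name](tool_input)))
    return history


for step, output in run_router("How many customers?", TOOLS, scripted_decide):
    print(step, "->", output)
```

In a real orchestrator the decision step is an LLM call, which is what lets the route vary per question instead of being fixed in code. The max_steps cap is a simple guard against a decision step that never stops.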

We will start by adding the reference implementation, beeai_framework, to the package manager.

uv add beeai_framework

To enable our orchestrator to call the SQL and DB agents, we will wrap them as tools. This way, the orchestrator can treat them like any other tool and invoke them when needed.

Let’s start with the SQL agent. It’s mainly boilerplate code: we define the input and output fields using Pydantic and then call the agent in the _run function.

from pydantic import BaseModel, Field
from acp_sdk import Message
from acp_sdk.client import Client
from acp_sdk.models import MessagePart
from beeai_framework.tools.tool import Tool
from beeai_framework.tools.types import ToolRunOptions
from beeai_framework.context import RunContext
from beeai_framework.emitter import Emitter
from beeai_framework.tools import ToolOutput
from beeai_framework.utils.strings import to_json

# helper function
async def run_agent(agent: str, input: str) -> list[Message]:
    async with Client(base_url="http://localhost:8002") as client:
        run = await client.run_sync(
            agent=agent, input=[Message(parts=[MessagePart(content=input, content_type="text/plain")])]
        )
    return run.output

class SqlQueryToolInput(BaseModel):
    question: str = Field(description="The question to answer using SQL queries against the e-commerce analytics database")

class SqlQueryToolResult(BaseModel):
    sql_query: str = Field(description="The SQL query that answers the question")

class SqlQueryToolOutput(ToolOutput):
    result: SqlQueryToolResult = Field(description="SQL query result")

    def get_text_content(self) -> str:
        return to_json(self.result)

    def is_empty(self) -> bool:
        return self.result.sql_query.strip() == ""

    def __init__(self, result: SqlQueryToolResult) -> None:
        super().__init__()
        self.result = result

class SqlQueryTool(Tool[SqlQueryToolInput, ToolRunOptions, SqlQueryToolOutput]):
    name = "SQL Query Generator"
    description = "Generate SQL queries to answer questions about the e-commerce analytics database"
    input_schema = SqlQueryToolInput

    def _create_emitter(self) -> Emitter:
        return Emitter.root().child(
            namespace=["tool", "sql_query"],
            creator=self,
        )

    async def _run(self, input: SqlQueryToolInput, options: ToolRunOptions | None, context: RunContext) -> SqlQueryToolOutput:
        result = await run_agent("sql_agent", input.question)
        return SqlQueryToolOutput(result=SqlQueryToolResult(sql_query=str(result[0])))

Let’s follow the same approach with the DB agent.

from pydantic import BaseModel, Field
from acp_sdk import Message
from acp_sdk.client import Client
from acp_sdk.models import MessagePart
from beeai_framework.tools.tool import Tool
from beeai_framework.tools.types import ToolRunOptions
from beeai_framework.context import RunContext
from beeai_framework.emitter import Emitter
from beeai_framework.tools import ToolOutput
from beeai_framework.utils.strings import to_json

async def run_agent(agent: str, input: str) -> list[Message]:
    async with Client(base_url="http://localhost:8001") as client:
        run = await client.run_sync(
            agent=agent, input=[Message(parts=[MessagePart(content=input, content_type="text/plain")])]
        )
    return run.output

class DatabaseQueryToolInput(BaseModel):
    query: str = Field(description="The SQL query or question to execute against the ClickHouse database")

class DatabaseQueryToolResult(BaseModel):
    result: str = Field(description="The result of the database query execution")

class DatabaseQueryToolOutput(ToolOutput):
    result: DatabaseQueryToolResult = Field(description="Database query execution result")

    def get_text_content(self) -> str:
        return to_json(self.result)

    def is_empty(self) -> bool:
        return self.result.result.strip() == ""

    def __init__(self, result: DatabaseQueryToolResult) -> None:
        super().__init__()
        self.result = result

class DatabaseQueryTool(Tool[DatabaseQueryToolInput, ToolRunOptions, DatabaseQueryToolOutput]):
    name = "Database Query Executor"
    description = "Execute SQL queries and questions against the ClickHouse database"
    input_schema = DatabaseQueryToolInput

    def _create_emitter(self) -> Emitter:
        return Emitter.root().child(
            namespace=["tool", "database_query"],
            creator=self,
        )

    async def _run(self, input: DatabaseQueryToolInput, options: ToolRunOptions | None, context: RunContext) -> DatabaseQueryToolOutput:
        result = await run_agent("db_agent", input.query)
        return DatabaseQueryToolOutput(result=DatabaseQueryToolResult(result=str(result[0])))

Now let’s put together the main agent that will be orchestrating the others as tools. We will use the ReAct agent implementation from the BeeAI framework for the orchestrator. I’ve also added some extra logging to the tool wrappers around our DB and SQL agents, so that we can see all the information about the calls.

from collections.abc import AsyncGenerator
from acp_sdk import Message
from acp_sdk.models import MessagePart
from acp_sdk.server import Context, Server
from beeai_framework.backend.chat import ChatModel
from beeai_framework.agents.react import ReActAgent
from beeai_framework.memory import TokenMemory
from beeai_framework.utils.dicts import exclude_none
from sql_tool import SqlQueryTool
from db_tool import DatabaseQueryTool
import os
import logging

# Configure logging
logger = logging.getLogger(__name__)
logger.setLevel(logging.INFO)

# Only add handler if it doesn't already exist
if not logger.handlers:
    handler = logging.StreamHandler()
    handler.setLevel(logging.INFO)
    formatter = logging.Formatter('ORCHESTRATOR - %(levelname)s - %(message)s')
    handler.setFormatter(formatter)
    logger.addHandler(handler)

# Prevent propagation to avoid duplicate messages
logger.propagate = False

# Wrap our tools with additional logging for traceability
class LoggingSqlQueryTool(SqlQueryTool):
    async def _run(self, input, options, context):
        logger.info(f"🔍 SQL Tool Request: {input.question}")
        result = await super()._run(input, options, context)
        logger.info(f"📝 SQL Tool Response: {result.result.sql_query}")
        return result

class LoggingDatabaseQueryTool(DatabaseQueryTool):
    async def _run(self, input, options, context):
        logger.info(f"🗄️ Database Tool Request: {input.query}")
        result = await super()._run(input, options, context)
        logger.info(f"📊 Database Tool Response: {result.result.result}...")
        return result

server = Server()

@server.agent(name="orchestrator")
async def orchestrator(input: list[Message], context: Context) -> AsyncGenerator:
    logger.info(f"🚀 Orchestrator started with input: {input[0].parts[0].content}")

    llm = ChatModel.from_name("openai:gpt-4o-mini")

    agent = ReActAgent(
        llm=llm,
        tools=[LoggingSqlQueryTool(), LoggingDatabaseQueryTool()],
        templates={
            "system": lambda template: template.update(
                defaults=exclude_none({
                    "instructions": """
You are an expert data analyst assistant that helps users analyze e-commerce data.

You have access to two tools:
1. SqlQueryTool - Use this to generate SQL queries from natural language questions about the e-commerce database
2. DatabaseQueryTool - Use this to execute SQL queries directly against the ClickHouse database

The database contains two main tables:
- ecommerce.users (customer information)
- ecommerce.sessions (user sessions and transactions)

When a user asks a question:
1. First, use SqlQueryTool to generate the appropriate SQL query
2. Then, use DatabaseQueryTool to execute that query and get the results
3. Present the results in a clear, understandable format

Always provide context about what the data shows and any insights you can derive.
""",
                    "role": "system"
                })
            )
        }, memory=TokenMemory(llm))

    prompt = (str(input[0]))
    logger.info(f"🤖 Running ReAct agent with prompt: {prompt}")

    response = await agent.run(prompt)

    logger.info(f"✅ Orchestrator completed. Response length: {len(response.result.text)} characters")
    logger.info(f"📤 Final response: {response.result.text}...")

    yield Message(parts=[MessagePart(content=response.result.text)])

if __name__ == "__main__":
    server.run(port=8003)

Now, just like before, we can run the orchestrator agent using the ACP client to see the result.

async def router_example() -> None:
    async with Client(base_url="http://localhost:8003") as orchestrator_client:
        question = 'How many customers did we have in May 2024?'
        response = await orchestrator_client.run_sync(
            agent="orchestrator", input=question
        )
        print('Orchestrator response:')

        # Debug: Print the response structure
        print(f"Response type: {type(response)}")
        print(f"Response output length: {len(response.output) if hasattr(response, 'output') else 'No output attribute'}")

        if response.output and len(response.output) > 0:
            print(response.output[0].parts[0].content)
        else:
            print("No response received from orchestrator")
            print(f"Full response: {response}")

asyncio.run(router_example())

# In May 2024, we had 234,544 unique active customers.

Our system worked well, and we got the expected result. Good job!

Let’s see how it worked under the hood by checking the logs from the orchestrator server. The router first invoked the SQL agent as a SQL tool. Then, it used the returned query to call the DB agent. Finally, it produced the final answer.

ORCHESTRATOR - INFO - 🚀 Orchestrator started with input: How many customers did we have in May 2024?
ORCHESTRATOR - INFO - 🤖 Running ReAct agent with prompt: How many customers did we have in May 2024?
ORCHESTRATOR - INFO - 🔍 SQL Tool Request: How many customers did we have in May 2024?
ORCHESTRATOR - INFO - 📝 SQL Tool Response:
SELECT COUNT(uniqExact(u.user_id)) AS active_customers
FROM ecommerce.users AS u
JOIN ecommerce.sessions AS s ON u.user_id = s.user_id
WHERE u.is_active = 1
AND s.action_date >= '2024-05-01'
AND s.action_date < '2024-06-01'
FORMAT TabSeparatedWithNames
ORCHESTRATOR - INFO - 🗄️ Database Tool Request:
SELECT COUNT(uniqExact(u.user_id)) AS active_customers
FROM ecommerce.users AS u
JOIN ecommerce.sessions AS s ON u.user_id = s.user_id
WHERE u.is_active = 1
AND s.action_date >= '2024-05-01'
AND s.action_date < '2024-06-01'
FORMAT TabSeparatedWithNames
ORCHESTRATOR - INFO - 📊 Database Tool Response: 234544...
ORCHESTRATOR - INFO - ✅ Orchestrator completed. Response length: 52 characters
ORCHESTRATOR - INFO - 📤 Final response: In May 2024, we had 234,544 unique active customers....

Thanks to the extra logging we added, we can now trace all the calls made by the orchestrator.

You can find the full code on GitHub.

Summary

In this article, we’ve explored the ACP protocol and its capabilities. Here’s the quick recap of the key points:

- ACP (Agent Communication Protocol) is an open protocol that aims to standardise communication between agents. It complements MCP, which handles interactions between agents and external tools and data sources.

- ACP follows a client-server architecture and uses RESTful APIs.

- The protocol is technology- and framework-agnostic, allowing you to build interoperable systems and create new collaborations between agents seamlessly.

- With ACP, you can implement a wide range of agent interactions, from simple chaining in well-defined workflows to the router pattern, where an orchestrator can delegate tasks dynamically to other agents.

Thank you for reading. I hope this article was insightful. Remember Einstein’s advice: “The important thing is not to stop questioning. Curiosity has its own reason for existing.” May your curiosity lead you to your next great insight.

Reference

This article is inspired by the “ACP: Agent Communication Protocol” short course from DeepLearning.AI.