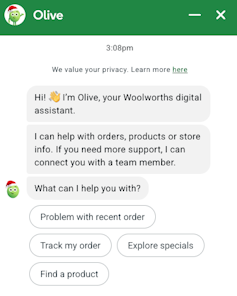

Woolworths has announced a partnership with Google to incorporate agentic artificial intelligence into its “Olive” chatbot, starting in Australia later this year.

Until now, Olive has largely answered questions, resolved problems and directed shoppers to information.

Soon, Olive will be able to do more: planning meals, interpreting handwritten recipes, applying loyalty discounts and placing suggested items directly into a customer’s online shopping basket.

Woolworths says Olive won’t complete purchases automatically, and customers will still need to approve and pay for orders.

This distinction is important, but it risks understating what is actually changing. By the time a shopper reaches the checkout, many of the substantive decisions about what to buy may already have been shaped by the system.

From helper to decision maker

The most significant change for shoppers is how decisions will be made during the shopping process, and who makes them.

Google describes its new system as a “proactive digital concierge” that understands customer intent, reasons through multi-step tasks, and executes actions.

Major United States retailers, including Walmart, Kroger and Lowe’s, are adopting the same technology. The move forms part of a broader strategy by Google to promote agent-based commerce across retail.

In practical terms, if Woolworths shoppers give their permission, the new Google Gemini version of Olive will increasingly assemble shopping baskets autonomously.

For example, a customer who uploads a photo of a handwritten recipe could receive a completed list of ingredients, reflecting product availability and discounts.

Alternatively, a customer who asks for a meal plan could receive a ready-made basket based on past preferences, current promotions and local stock levels.

This fundamentally changes the role of the consumer.

Instead of actively selecting products through browsing and comparison, shoppers will increasingly review and approve selections made for them. Decision-making shifts away from the individual towards the system.

This delegation may seem minor when considered in isolation. Over time, however, repeated delegation shapes habits, preferences and spending patterns. That is why this change deserves careful scrutiny.

Nudging by design

Woolworths presents Olive’s expanded role as a practical convenience that saves time and effort while increasing personalisation. These claims are not wrong, but they obscure an important point.

Agent-based shopping systems are designed to nudge behaviour in ways that differ markedly from traditional advertising.

When Olive highlights discounted products or promotional offers for a shopper, it does not rely on neutral criteria. Instead, its priorities reflect pricing strategies, promotional priorities and commercial relationships, not an objective assessment of the consumer’s interests.

Once such judgements are embedded within an AI system that guides shopping decisions, nudging becomes part of the structure of choice, rather than a visible layer placed on top of it.

This is a particularly powerful form of influence. Traditional advertising is recognisable. Shoppers know when they are being persuaded and can discount or ignore it.

Algorithmic nudging, by contrast, operates upstream. It shapes which options are surfaced, combined, or omitted before the shopper encounters them. Over time, this influence becomes routine and difficult to detect.

Agent-based shopping also means AI does the searching, comparing prices and weighing options for us. Shoppers are increasingly presented with curated outcomes that invite acceptance, rather than deliberation.

As fewer options are made visible and fewer trade-offs are explicitly presented, convenience begins to replace informed choice.

For these reasons, it would be mistaken to treat agent-led shopping as value neutral. Systems designed to increase loyalty and revenue should not automatically be assumed to act in the best interests of consumers, even when they deliver genuine convenience.

Unresolved data privacy questions

Data privacy is an even bigger concern.

Grocery shopping reveals far more than brand preference. Meal planning can disclose health conditions, dietary restrictions, cultural practices, religious observance, family composition and financial pressures. When an AI system manages these tasks, domestic life becomes legible to the platform that supports it.

Google has said customer data used in its system is not used to train models and that strict security requirements apply.

These assurances are important, but they do not resolve all concerns. It is not yet clear how long household data is retained, how it is aggregated, or how insights from such data are used elsewhere.

Consent offers limited protection in this context. It is typically granted once, while profiling and optimisation continue over time. Even without direct data sharing, inferences drawn from household behaviour can shape system performance and design.

These privacy risks do not depend on misuse or data breaches. They arise from the growing intimacy of data used to shape behaviour, rather than merely record it.

Convenience shouldn’t end the conversation

For many households, Olive’s expanded capabilities will save time, reduce friction and improve the shopping experience.

But when AI moves from assistance to action, it reshapes how choices are made and how much agency people give up.

This shift should prompt a broader discussion about where convenience ends and consumer autonomy begins. When AI systems start making everyday decisions, we must ask whether consumers retain meaningful control over their choices.

Transparency about how recommendations are generated, limits on commercial incentives shaping agent behaviour, and limits on household data use should be treated as baseline expectations, not optional safeguards.

Without such scrutiny, agent-led shopping risks quietly reconfiguring consumer behaviour in ways that are difficult to detect, and even harder to reverse.

- Uri Gal, Professor in Business Information Systems, University of Sydney

This article is republished from The Conversation under a Creative Commons license. Read the original article.