how I’ve built a self-hosted, end-to-end platform that gives every user a personal, agentic chatbot that can autonomously search through only the files that the user explicitly allows it to access.

In other words: full control, 100% private, all the benefits of LLMs without the privacy leaks, token costs, or external dependencies.

Intro

Over the past week, I challenged myself to build something that has been on my mind for a while:

How can I supercharge an LLM with my personal data without sacrificing privacy to big tech companies?

That led to this week’s challenge:

Build an agentic chatbot equipped with tools to access a user’s personal notes securely, without compromising privacy.

As an extra challenge, I wanted the system to support multiple users. Not a shared assistant but a private agent for every user, where each user has full control over which files their agent can read and reason about.

We’ll build the system in the following steps:

- Architecture

- How do we create an agent and provide it with tools?

- Flow 1: User file management: What happens when we submit a file?

- Flow 2: How do we embed documents and store files?

- Flow 3: What happens when we chat with our agentic assistant?

- Demonstration

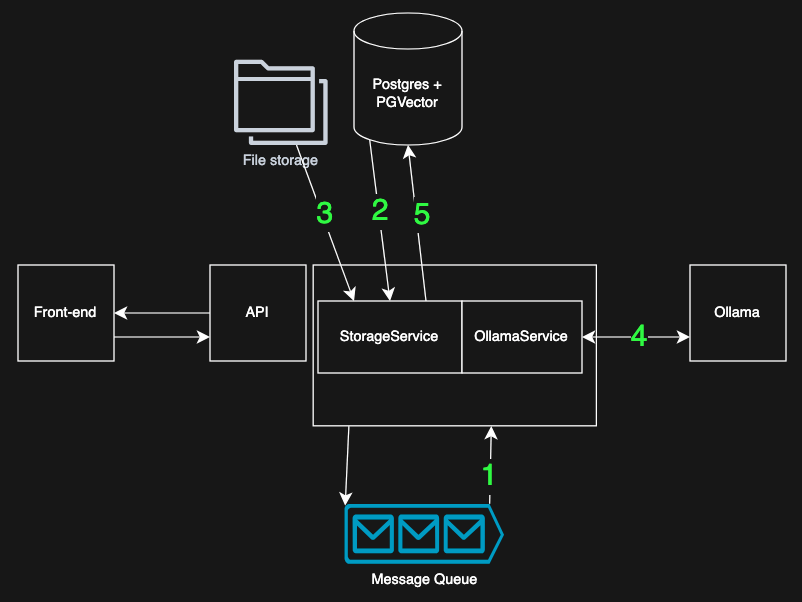

1) Architecture

I’ve defined three main “flows” that the system must support:

A) User file management

Users authenticate through the front-end, upload or delete files and assign each file to specific groups that determine which users’ agents may access it.

B) Embedding and storing files

Uploaded files are chunked, embedded and stored in the database in a way that ensures only authorized users can retrieve or search those embeddings.

C) Chat

A user chats with their own agent. The agent is equipped with tools, including a semantic vector-search tool, and can only search documents the user has permission to access.

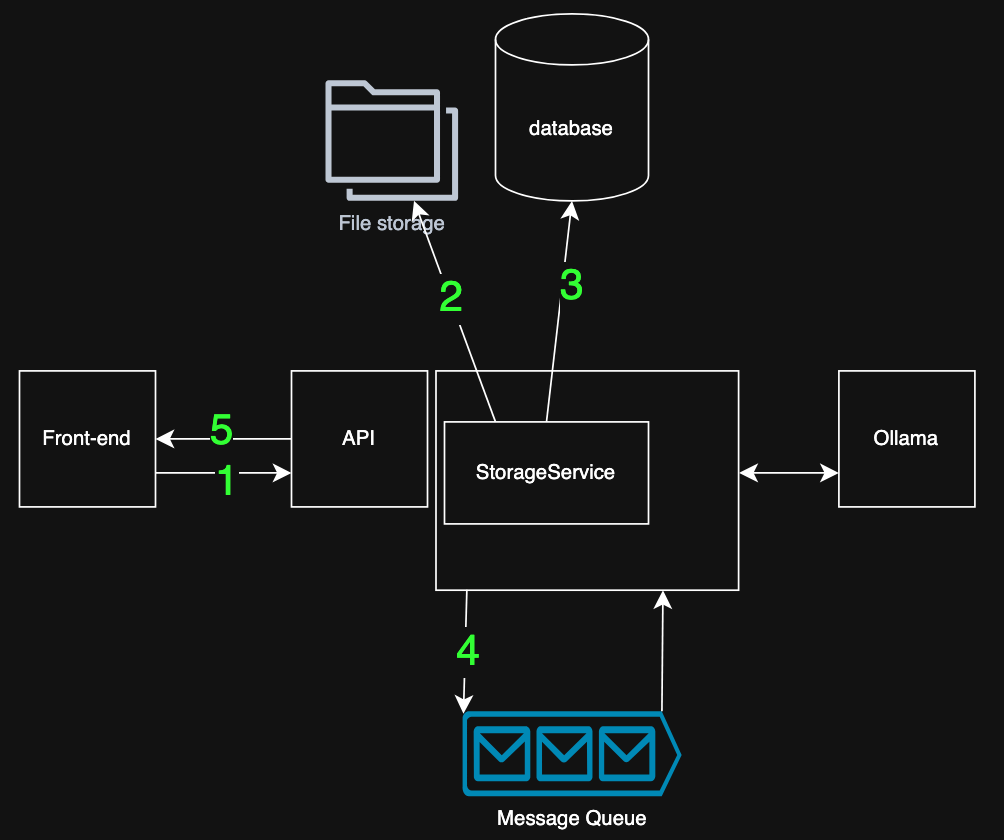

To support these flows, the system consists of six key components:

App

A Python application that is the heart of the system. It exposes API endpoints for the front-end and listens for messages coming from the message queue.

Front-End

Normally I’d use Angular, but for this prototype I went with Streamlit. It was very fast and easy to build with. This ease of use of course came with the downside of not being able to do everything I wanted. I’m planning on replacing this component with my go-to Angular, but in my opinion Streamlit was very nice for prototyping.

Blob storage

This container runs MinIO, an open-source, high-performance, distributed object storage system. Definitely overkill for my prototype, but it was very easy to use and integrates well with Python, so I have no regrets.

(Vector) Database

Postgres handles all the relational data like document metadata, users, user groups and text chunks. Additionally, Postgres offers an extension (pgvector) that I use to store vector data like the embeddings we’re aiming to create. This is very convenient for my use case since I can run a vector search on a table while joining that table to the users table, ensuring that each user can only see their own data.
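As a rough illustration of that idea, here is a minimal sketch of a permission-aware vector search query. The table and column names (`chunks`, `file_groups`, `user_groups`, `chunk_text`, `embedding`) are hypothetical and not taken from the actual project; `<=>` is pgvector’s cosine-distance operator. The query is only built here, not executed.

```python
def build_search_query(top_k: int = 5) -> str:
    """Return a parameterized SQL query for permission-aware vector search.

    All table and column names are hypothetical placeholders.
    %(query_vec)s is the embedded question, %(user_id)s the calling user.
    """
    if top_k < 1:
        raise ValueError("top_k must be at least 1")
    return f"""
        SELECT c.chunk_text,
               c.embedding <=> %(query_vec)s::vector AS distance
        FROM chunks AS c
        WHERE EXISTS (
            -- Keep a chunk only if its file shares a group with the user.
            SELECT 1
            FROM file_groups AS fg
            JOIN user_groups AS ug ON ug.group_id = fg.group_id
            WHERE fg.file_id = c.file_id
              AND ug.user_id = %(user_id)s
        )
        ORDER BY distance
        LIMIT {int(top_k)}
    """


query = build_search_query(top_k=3)
```

Because the access check lives in the same statement as the similarity ranking, a chunk the user may not see never leaves the database.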

Ollama

Ollama hosts two local models: one for embeddings and one for chat. The models are quite lightweight but can easily be upgraded, depending on available hardware.

Message Queue

RabbitMQ makes the system responsive. Users don’t have to wait while large files are chunked and embedded. Instead, I return immediately and process the embedding in the background. It also gives me horizontal scalability: multiple workers can process files concurrently.

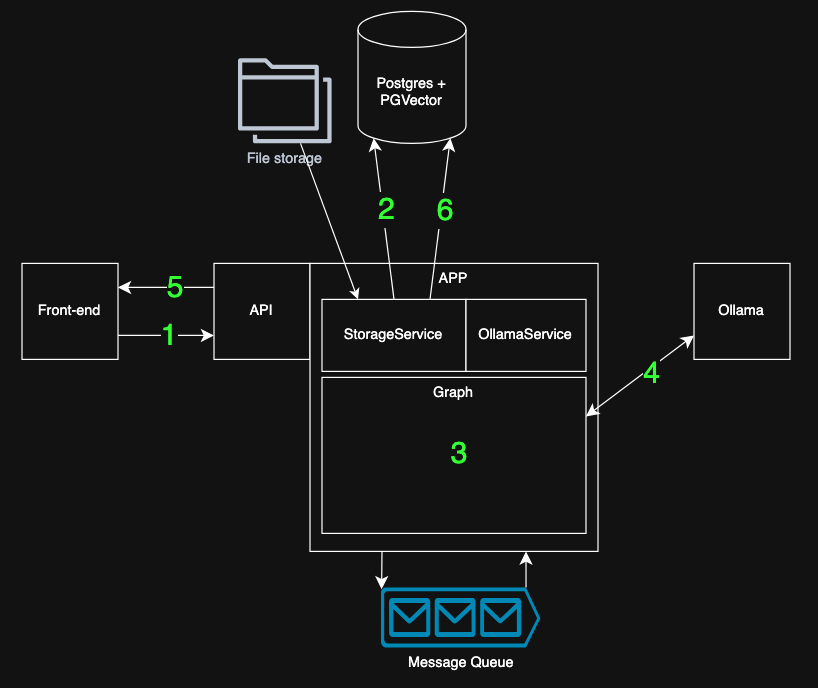

2) Building an agent with a toolbox

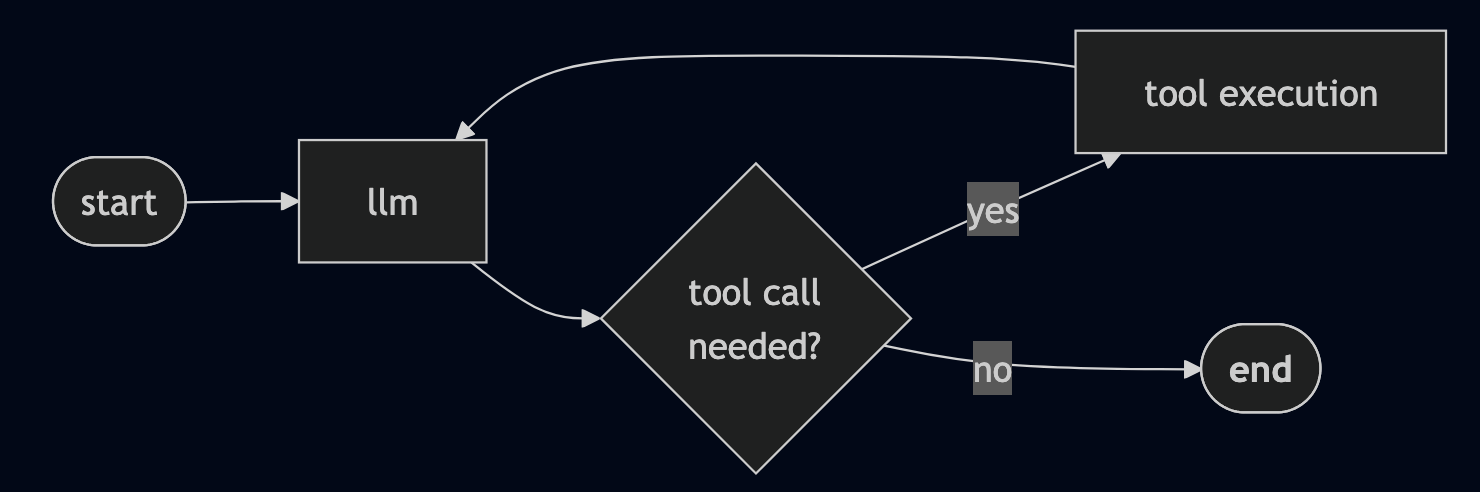

LangGraph makes it easy to define an agent: what steps it can take, how it should reason and which tools it’s allowed to use. This agent can then autonomously inspect the available tools, read their descriptions and decide whether calling one of them will help answer the user’s question.

The workflow is described as a graph. Think of this as the blueprint for the agent’s behavior. In this prototype the graph is intentionally simple:

The LLM checks which tools are available and decides whether a tool call (like vector search) is necessary. The graph loops through the tool node and back to the LLM node until no more tools are needed and the agent has enough information to respond.
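To make the loop concrete, here is a minimal plain-Python sketch of the same control flow. It deliberately does not use the LangGraph API; `fake_llm`, `vector_search`, `TOOLS` and `run_agent` are hypothetical stand-ins that only illustrate how the LLM node and tool node alternate until no tool call is requested.

```python
from typing import Callable


def vector_search(query: str) -> str:
    """Stand-in for the real vector-search tool (assumption)."""
    return f"(chunks matching '{query}')"


# Hypothetical tool registry: name -> (description, function).
TOOLS: dict[str, tuple[str, Callable[[str], str]]] = {
    "vector_search": ("Search the user's permitted documents.", vector_search),
}


def fake_llm(messages: list[dict]) -> dict:
    """Stand-in for the chat model: request one tool call, then answer."""
    if not any(m["role"] == "tool" for m in messages):
        question = messages[-1]["content"]
        return {
            "role": "assistant",
            "content": "",
            "tool_call": {"name": "vector_search", "args": question},
        }
    return {"role": "assistant", "content": "Answer based on retrieved chunks."}


def run_agent(question: str, llm=fake_llm, max_steps: int = 5) -> list[dict]:
    """Alternate between the LLM node and the tool node until the LLM is done."""
    messages = [{"role": "user", "content": question}]
    for _ in range(max_steps):  # guard against endless tool loops
        reply = llm(messages)  # LLM node
        messages.append(reply)
        call = reply.get("tool_call")
        if call is None:  # no tool requested: the agent can respond
            break
        _, tool_fn = TOOLS[call["name"]]  # tool node
        messages.append({"role": "tool", "content": tool_fn(call["args"])})
    return messages


history = run_agent("Who is Gert?")
```

The `max_steps` cap is a design choice of this sketch: it keeps a misbehaving model from calling tools forever.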

3) Flow 1: Submitting a File

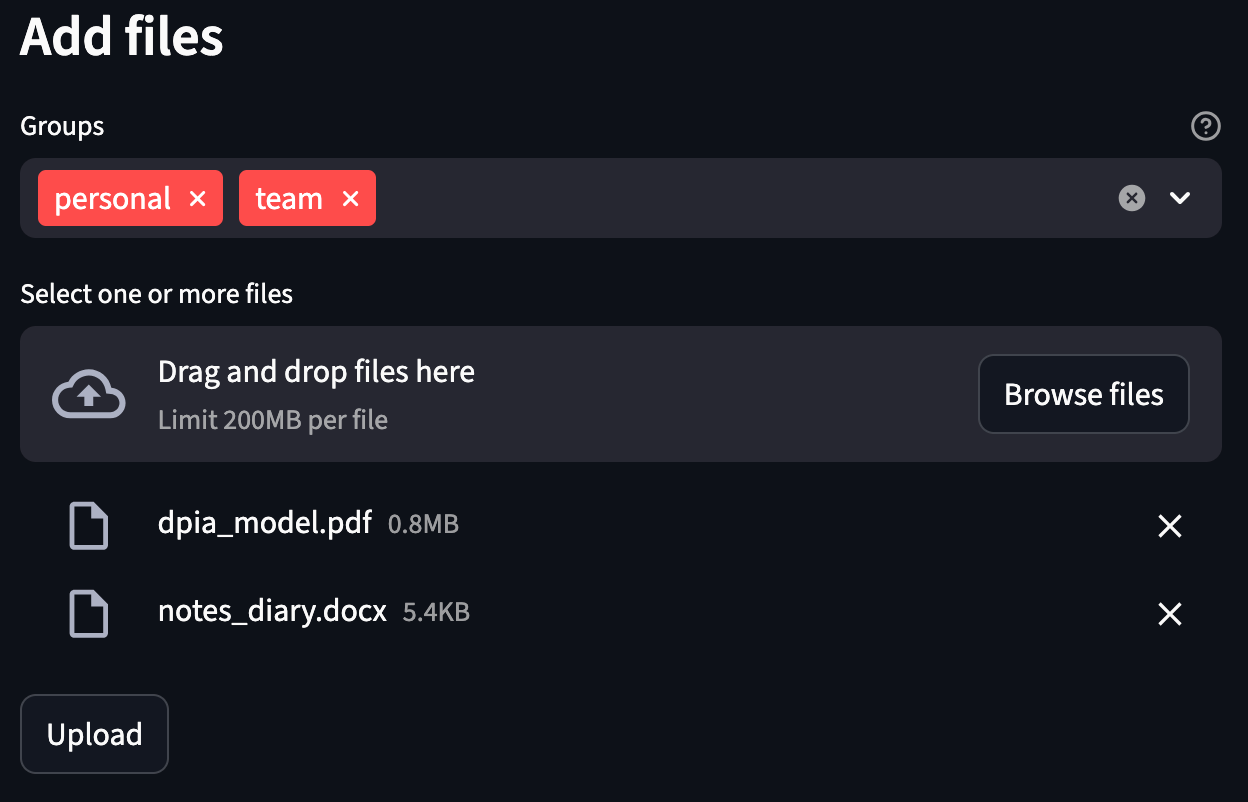

This part describes what happens when a user submits one or more files. First, a user has to log in to the front-end, receiving a token that is used to authenticate API calls.

After that they can upload files and assign those files to one or more groups. Any user in those groups will be allowed to access the file through their agent.

In the screenshot above the user selected two files, a PDF and a Word document, and assigned them to two groups. Behind the scenes, this is how the system processes an upload like this:

- The file and groups are sent to the API, which validates the user with the token.

- The file is saved in the blob storage, returning the storage location.

- The file’s metadata and storage location are saved in the database, returning the `file_id`.

- The `file_id` is published to a message queue.

- The request is completed; the user can continue using the front-end. Heavy processing (chunking, embedding) happens later in the background.
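The upload steps can be sketched as follows. This is a simplified illustration, not the project’s actual code: the in-memory dictionaries and `queue.Queue` stand in for MinIO, Postgres and RabbitMQ, and `TOKENS`, `handle_upload` and the field names are hypothetical.

```python
import queue
import uuid

# In-memory stand-ins (assumptions); the real system uses MinIO, Postgres and RabbitMQ.
BLOBS: dict[str, bytes] = {}
FILES: dict[str, dict] = {}
EMBED_QUEUE: "queue.Queue[str]" = queue.Queue()
TOKENS = {"secret-token": "alice"}  # hypothetical token -> user mapping


def handle_upload(token: str, filename: str, content: bytes, groups: list[str]) -> str:
    """Validate the user, store the file, record metadata, enqueue the file_id."""
    user = TOKENS.get(token)
    if user is None:
        raise PermissionError("invalid token")

    # 1. Save the raw file in blob storage and remember where it lives.
    location = f"uploads/{uuid.uuid4()}/{filename}"
    BLOBS[location] = content

    # 2. Save metadata plus storage location; this yields the file_id.
    file_id = str(uuid.uuid4())
    FILES[file_id] = {
        "owner": user,
        "groups": list(groups),
        "storage_location": location,
        "embedded": False,
    }

    # 3. Publish only the small file_id; chunking and embedding happen later.
    EMBED_QUEUE.put(file_id)
    return file_id


file_id = handle_upload("secret-token", "notes.docx", b"...", ["team-a", "family"])
```

The function returns as soon as the `file_id` is queued, which is what keeps the request short regardless of file size.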

This flow ensures the upload experience stays fast and responsive, even for large files.

4) Flow 2: Embedding and storing Files

Once a document is submitted, the next step is to make it searchable. In order to do this we need to embed our documents. This means we convert the text from the document into numerical vectors that capture semantic meaning.
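To give a feel for what “capturing semantic meaning” means in practice, here is a small sketch that compares vectors with cosine similarity, a common way to measure how close two embeddings are. The three-dimensional vectors are made up for illustration; a real embedding model produces vectors with hundreds of dimensions.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: close to 1.0 means similar direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (norm_a * norm_b)


# Made-up toy "embeddings" (assumption, not output of a real model).
python_note = [0.9, 0.1, 0.0]
coding_question = [0.8, 0.2, 0.1]
recipe = [0.0, 0.1, 0.9]

related = cosine_similarity(python_note, coding_question)
unrelated = cosine_similarity(python_note, recipe)
```

Here `related` comes out much higher than `unrelated`, which is the property vector search relies on: texts about similar topics end up pointing in similar directions.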

In the previous flow we submitted a message to the queue. This message only contains a `file_id` and thus is very small. This means the system stays fast even when a user uploads dozens or hundreds of files.

The message queue also gives us two important benefits:

- it smooths out load by processing documents one by one instead of all at once

- it future-proofs our system by allowing horizontal scaling; multiple workers can listen to the same queue and process files in parallel.

Here’s what happens when the embedding worker receives a message:

- Take a message from the queue; the message contains a `file_id`.

- Use the `file_id` to retrieve document metadata (filtering by user and allowed groups).

- Use the `storage_location` from the metadata to download the file.

- The file is read, text-extracted and split into smaller chunks. Each chunk is embedded: it’s sent to the local Ollama instance to generate an embedding.

- The chunks and their vectors are written to the database, alongside the file’s access-control information.
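The worker steps above can be sketched as follows. This is an illustration under stated assumptions: `chunk_text`, `fake_embed` and `process_message` are hypothetical names, the dictionaries stand in for Postgres and blob storage, text extraction is reduced to UTF-8 decoding, and `fake_embed` replaces the call to the local embedding model.

```python
def chunk_text(text: str, size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into fixed-size character chunks that overlap slightly."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    step = size - overlap
    chunks = []
    # Stop early enough that the last chunk is not just a repeat of the overlap.
    for start in range(0, max(len(text) - overlap, 1), step):
        chunk = text[start:start + size]
        if chunk:
            chunks.append(chunk)
    return chunks


def fake_embed(chunk: str) -> list[float]:
    """Stand-in for a call to the local embedding model (assumption)."""
    return [float(len(chunk)), float(sum(map(ord, chunk)) % 97)]


def process_message(file_id, files, blobs, chunk_rows, embed=fake_embed) -> int:
    """Handle one queue message: load, chunk, embed and store a file."""
    meta = files[file_id]  # metadata lookup by file_id
    raw = blobs[meta["storage_location"]]  # download from blob storage
    text = raw.decode("utf-8")  # real PDF/Word extraction is out of scope here
    count = 0
    for index, chunk in enumerate(chunk_text(text)):
        chunk_rows.append({
            "file_id": file_id,
            "chunk_index": index,
            "text": chunk,
            "embedding": embed(chunk),
            "groups": list(meta["groups"]),  # access control travels with the chunk
        })
        count += 1
    meta["embedded"] = True
    return count


# Tiny end-to-end example with in-memory stand-ins.
files = {"f1": {"storage_location": "uploads/f1.txt", "groups": ["team-a"], "embedded": False}}
blobs = {"uploads/f1.txt": ("x" * 450).encode("utf-8")}
rows: list[dict] = []
written = process_message("f1", files, blobs, rows)
```

Overlapping chunks are a common choice so that a sentence cut at a chunk boundary still appears whole in at least one chunk; the sizes used here are arbitrary.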

At this point, the document becomes fully searchable by the agent through vector search, but only for users who have been granted access.

5) Flow 3: Chatting with our Agent

With all components in place, we can start chatting with the agent.

When a user types a message, the system orchestrates several steps behind the scenes to deliver a fast and context-aware response:

- The user sends a prompt to the API and is authenticated, since only authorized users can interact with their private agent.

- The app optionally retrieves previous messages so that the agent has a “memory” of the current conversation. This ensures that it can respond in the context of the ongoing conversation.

- The compiled LangGraph agent is invoked.

- The LLM (running in Ollama) reasons and optionally uses tools. If needed, it calls the vector-search tool that we’ve defined in the graph to find relevant document chunks the user is allowed to access. The agent then incorporates these findings into its reasoning and decides whether it has enough information to provide an adequate response.

- The agent’s answer is generated incrementally and streamed back to the user for a smooth, real-time chat experience.
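The chat steps above can be sketched with a Python generator that streams the answer piece by piece. Everything here is a hypothetical stand-in: `TOKENS` replaces real authentication, `HISTORY` replaces stored conversation memory, and `fake_agent` replaces the compiled agent.

```python
from typing import Iterator

TOKENS = {"secret-token": "alice"}  # hypothetical token -> user mapping
HISTORY: dict[str, list[dict]] = {}  # per-user conversation memory


def fake_agent(user: str, messages: list[dict]) -> Iterator[str]:
    """Stand-in for the compiled agent: yields the answer word by word."""
    answer = f"Hello {user}, you asked: {messages[-1]['content']}"
    for word in answer.split(" "):
        yield word + " "


def handle_chat(token: str, prompt: str, agent=fake_agent) -> Iterator[str]:
    """Authenticate, load history, run the agent and stream its answer.

    This is a generator, so authentication runs when iteration starts.
    """
    user = TOKENS.get(token)
    if user is None:
        raise PermissionError("invalid token")

    messages = HISTORY.setdefault(user, [])  # earlier turns act as memory
    messages.append({"role": "user", "content": prompt})

    parts = []
    for piece in agent(user, messages):
        parts.append(piece)
        yield piece  # stream each piece to the client immediately

    # Store the full answer so the next turn can refer back to it.
    messages.append({"role": "assistant", "content": "".join(parts)})


reply = "".join(handle_chat("secret-token", "hi"))
```

Keying the history by user keeps one user’s conversation out of another user’s context, in line with the private-agent-per-user goal.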

At this point, the user is chatting with their own private, fully local agent that is equipped with the ability to semantically search through their personal notes.

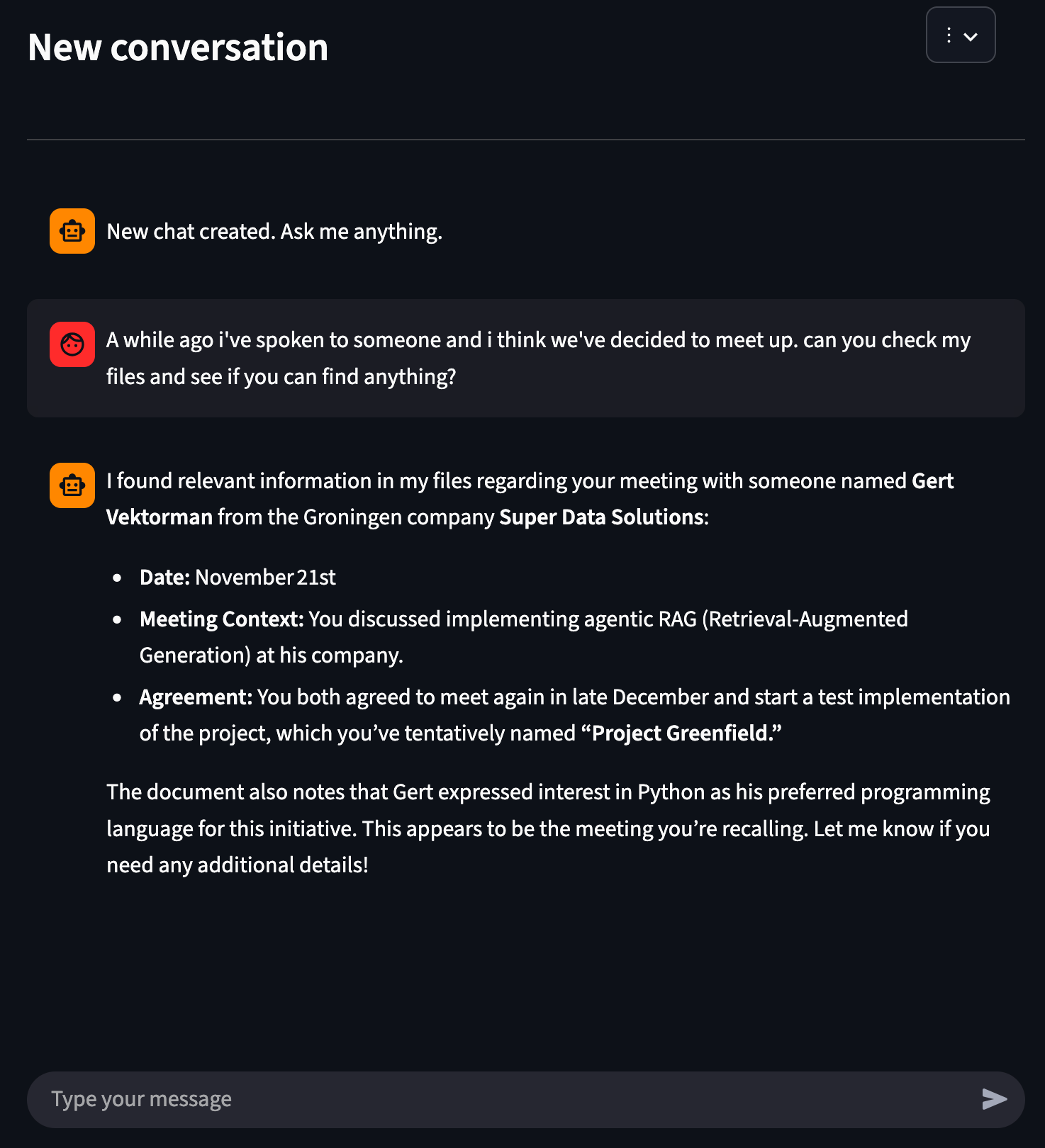

6) Demonstration

Let’s see what this looks like in practice.

I’ve uploaded a Word document with the following content:

Notes

On the 21st of November I spoke with a guy named “Gert Vektorman” who turned out to be a developer at a Groningen company called “super data solutions”. Turns out that he was very interested in implementing agentic RAG at his company. We’ve agreed to meet some time at the end of December.

Edit: I’ve asked Gert what his favorite programming language was; he likes using Python.

Edit: we’ve met and agreed to create a test implementation. We’ll call this project “project greenfield”.

I’ll go to the front-end and upload this file.

After uploading, I can see in the front-end that:

- the document is stored in the database

- it has been embedded

- my agent has access to it

Now, let’s chat.

As you can see, the agent is able to respond with the information from our file. It’s also surprisingly fast; this question was answered in a few seconds.

Conclusion

I love challenges that allow me to experiment with new tech and work across the whole stack, from database to agent graphs and from front-end to Docker images. Designing the system and choosing a working architecture is something I always enjoy. It allows me to convert our goals into requirements, flows, architecture, components, code and eventually a working product.

This week’s challenge was exactly that: exploring and experimenting with private, multi-user, agentic RAG. I’ve built a working, expandable, reusable, scalable prototype that can be improved upon in the future. Most of all, I’ve found that local, 100% private, agentic LLMs are possible.

Technical learnings

- Postgres + pgvector is powerful. Storing embeddings alongside relational metadata kept everything clean, consistent and easy to query since there was no need for an extra vector database.

- LangGraph makes it surprisingly easy to define an agent workflow, equip it with tools and let the agent decide when to use them.

- Private, local, self-hosted agents are feasible. With Ollama running two lightweight models (one for chat, one for embeddings), everything runs on my MacBook with impressive speed.

- Building a multi-tenant system with strict data isolation was a lot easier once the architecture was clear and responsibilities were separated across components.

- Loose coupling makes it easier to replace and scale components.

Next steps

This system is ready for upgrades:

- Incremental re-embedding for documents that change over time (so I can plug in my Obsidian vault seamlessly).

- Citations that point the user to the exact files/pages/chunks the LLM used to answer my question, improving trust and explainability.

- More tools for the agent, from structured summarizers to SQL access. Maybe even ontologies or user profiles?

- A richer front-end with better file management and user experience.

I hope this article was as clear as I meant it to be, but if this isn’t the case please let me know what I can do to clarify further. In the meantime, check out my other articles on all kinds of programming-related topics.

Happy coding!

— Mike

P.S.: Like what I’m doing? Follow me!