Australia's online regulator eSafety has sent notices to the makers of some of the world's most popular online games, over concerns that the platforms are being used by sexual predators to groom children and by extremists to radicalise them.

The legally enforceable transparency reporting notices require Roblox, Fortnite, Minecraft and Steam to explain how they are identifying, preventing and responding to grooming, sexual extortion and radicalisation, as well as cyberbullying and online hate.

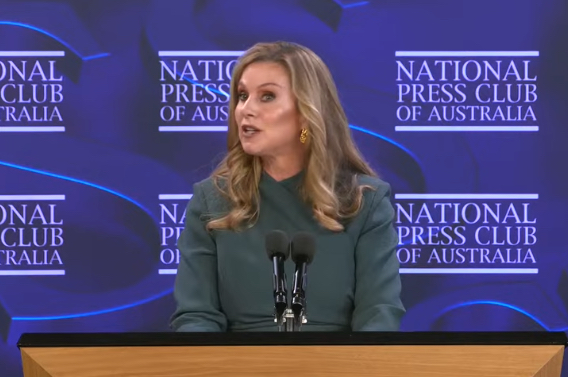

eSafety Commissioner Julie Inman Grant wants to know how closely their systems align with the government's Basic Online Safety Expectations.

“What we often see is that after these offenders make contact with children in online game environments, they then move children to private messaging services,” she said.

“Gaming platforms are among the online spaces most heavily used by Australian children, functioning not only as places to play, but also as places to socialise and communicate.

“Predatory adults know this and target children by grooming them or by embedding terrorist and violent extremist narratives in gameplay, increasing the risks of contact offending, radicalisation and other off-platform harms.”

90% of kids game online

eSafety research into children and gaming found that around 90% of Australian kids aged 8 to 17 played online games. Inman Grant said there have been multiple reports of grooming on all four of these platforms, as well as terrorist and violent extremist-themed gameplay.

“This includes Islamic State-inspired games and recreations of mass shootings on Roblox, as well as far-right groups recreating fascist imagery in Minecraft,” she said.

“These companies must take meaningful steps to prevent their services becoming onramps to abuse, extremist violence, radicalisation or lifelong harm.”

Compliance with a transparency reporting notice is mandatory. If companies fail to respond, eSafety can seek financial penalties of up to $825,000 a day.

Companies found in breach of a direction to comply with a code such as the Online Safety Codes and Standards face penalties of up to $49.5 million per breach.

Earlier this year, Roblox committed to making several key changes to protect children, including more stringent age assurance, making accounts for under-16s private by default, and introducing tools to prevent adult users from contacting under-16s without parental consent.

eSafety said it will be testing the implementation of those commitments to validate their effectiveness.