Benchmarking large language models presents some unusual challenges. For one, the main purpose of many LLMs is to produce compelling text that's indistinguishable from human writing. And success in that task may not correlate with metrics traditionally used to judge processor performance, such as instruction execution rate.

But there are solid reasons to persevere in trying to gauge the performance of LLMs. Otherwise, it's impossible to know quantitatively how much better LLMs are getting over time, and to estimate when they might be capable of completing substantial and useful projects by themselves.

Large language models are more challenged by tasks that have a high “messiness” score. Credit: Model Evaluation & Threat Research

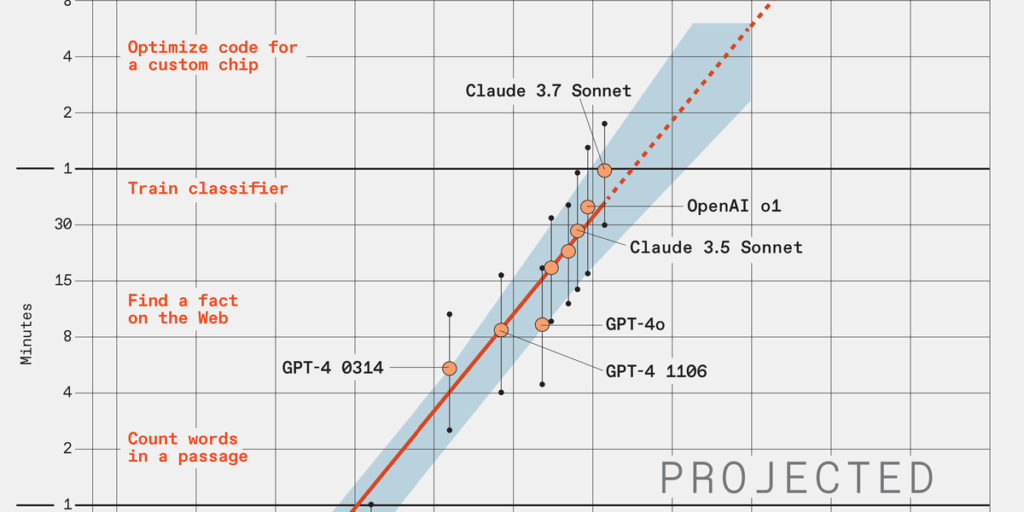

That was a key motivation behind work at Model Evaluation & Threat Research (METR). The organization, based in Berkeley, Calif., “researches, develops, and runs evaluations of frontier AI systems’ ability to complete complex tasks without human input.” In March, the group released a paper called Measuring AI Ability to Complete Long Tasks, which reached a startling conclusion: According to a metric it devised, the capabilities of key LLMs are doubling every seven months. This realization leads to a second conclusion, equally stunning: By 2030, the most advanced LLMs should be able to complete, with 50 percent reliability, a software-based task that takes humans a full month of 40-hour workweeks. And the LLMs would likely be able to do many of these tasks much more quickly than humans, taking only days, or even just hours.
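The 2030 projection is compound-doubling arithmetic. The minimal sketch below assumes a steady seven-month doubling period and computes how long a time horizon takes to grow from one value to another. The function names, the 1-hour starting horizon, and the 160-hour target (four 40-hour workweeks) are hypothetical inputs chosen for illustration, not figures from METR's paper.

```python
import math

# Assumption: the time horizon grows exponentially with a fixed doubling period.
DOUBLING_MONTHS = 7.0


def months_until_horizon(current_hours: float, target_hours: float,
                         doubling_months: float = DOUBLING_MONTHS) -> float:
    """Months of steady exponential growth needed to go from current_hours to target_hours."""
    if current_hours <= 0 or target_hours <= 0:
        raise ValueError("horizons must be positive")
    doublings = math.log2(target_hours / current_hours)  # number of doublings required
    return doublings * doubling_months


def projected_horizon(current_hours: float, months_ahead: float,
                      doubling_months: float = DOUBLING_MONTHS) -> float:
    """Time horizon after months_ahead months, if the doubling period holds."""
    return current_hours * 2 ** (months_ahead / doubling_months)


if __name__ == "__main__":
    # Hypothetical inputs: a 1-hour horizon today, a target of four 40-hour workweeks.
    target = 4 * 40.0
    print(f"{months_until_horizon(1.0, target):.1f} months")
```

With those hypothetical inputs, reaching 160 hours takes log2(160), or about 7.3 doublings, which at seven months each comes to roughly 51 months, a little over four years. The projection is only as good as the assumption that the doubling period stays constant.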

An LLM Might Write a Decent Novel by 2030

Such tasks might include starting up a company, writing a novel, or greatly improving an existing LLM. The availability of LLMs with that kind of capability “would come with enormous stakes, both in terms of potential benefits and potential risks,” AI researcher Zach Stein-Perlman wrote in a blog post.

At the heart of the METR work is a metric the researchers devised called “task-completion time horizon.” It’s the amount of time human programmers would take, on average, to do a task that an LLM can complete with some specified degree of reliability, such as 50 percent. A plot of this metric for some general-purpose LLMs going back several years [main illustration at top] shows clear exponential growth, with a doubling period of about seven months. The researchers also considered the “messiness” factor of the tasks, with “messy” tasks being those that more closely resembled ones in the “real world,” according to METR researcher Megan Kinniment. Messier tasks were more challenging for LLMs [smaller chart, above].

If the idea of LLMs improving themselves strikes you as having a certain singularity–robocalypse quality to it, Kinniment wouldn’t disagree with you. But she does add a caveat: “You could get acceleration that is quite intense and does make things meaningfully more difficult to control without it necessarily resulting in this massively explosive growth,” she says. It’s quite possible, she adds, that various factors could slow things down in practice. “Even if it were the case that we had very, very clever AIs, this pace of progress could still end up bottlenecked on things like hardware and robotics.”
