usually starts the same way. In a leadership meeting, someone says: “Let’s use AI!” Heads nod, enthusiasm builds, and before you know it, the room lands on the default conclusion: “Sure, we’ll build a chatbot.” That instinct is understandable. Large language models are powerful, ubiquitous, and fascinating. They promise intuitive access to universal knowledge and functionality.

The team walks away and starts building. Soon, demo time comes around. A polished chat interface appears, accompanied by confident arguments about why this time, it will be different. At that point, however, it usually hasn’t reached real users in real situations, and evaluation is biased and optimistic. Someone in the audience inevitably comes up with a custom question, irritating the bot. The developers promise to fix “it”, but usually, the underlying issue is systemic.

Once the chatbot hits the ground, initial optimism is often matched by user frustration. Here, things get a bit personal because over the past weeks, I was forced to spend some time talking to different chatbots. I tend to delay interactions with service providers until the situation becomes unsustainable, and a couple of these cases had piled up. Smiling chatbot widgets became my last hope before an eternal hotline call, but:

- After logging in to my car insurer’s site, I asked it to explain an unannounced price increase, only to realize the chatbot had no access to my pricing data. All it could offer was the hotline number. Ouch.

- After a flight was canceled at the last minute, I asked the airline’s chatbot for the reason. It politely apologized that, since the departure time was already in the past, it couldn’t help me. It was open to discussing all other topics, though.

- On a telco site, I asked why my mobile plan had suddenly expired. The chatbot confidently replied that it couldn’t comment on contractual matters and referred me to the FAQs. As expected, these were long but irrelevant.

These interactions didn’t bring me closer to a solution and left me at the opposite end of delight. The chatbots felt like foreign bodies. Sitting there, they consumed screen real estate, latency, and attention, but didn’t add value.

Let’s skip the debate on whether these are intentional dark patterns. The fact is, legacy systems such as the above carry a heavy burden of entropy. They come with tons of unique data, knowledge, and context. The moment you try to integrate them with a general-purpose LLM, you make two worlds clash. The model needs to ingest the context of your product so it can reason meaningfully about your domain. Proper context engineering requires skill and time for relentless evaluation and iteration. And before you even get to that point, your data needs to be ready, but in most organizations, data is noisy, fragmented, or just missing.

In this post, I’ll recap insights from my book The Art of AI Product Development and my recent talk at the Google Web AI Summit and share a more organic, incremental approach to integrating AI into existing products.

Using smaller models for low-risk, incremental AI integration

“When implementing AI, I see more organizations fail by starting too big than starting too small.” (Andrew Ng)

AI integration needs time:

- Your technical team needs to prepare the data and learn the available techniques and tools.

- You need to prototype and iterate to find the sweet spots of AI value in your product and market.

- Users need to calibrate their trust when moving to new probabilistic experiences.

To adapt to these learning curves, you shouldn’t rush to expose AI, especially open-ended chat functionality, to your users. AI introduces uncertainty and errors into the experience, which most people don’t like.

One effective way to pace your AI journey in the brownfield context is by using small language models (SLMs), which typically range from a few hundred million to a few billion parameters. They can integrate flexibly with your product’s existing data and infrastructure, rather than adding more technological overhead.

How SLMs are trained

Most SLMs are derived from larger models through knowledge distillation. In this setup, a large model acts as the teacher and a smaller one as the student. For example, Google’s Gemini served as the teacher for Gemma 2 and Gemma 3, while Meta’s Llama Behemoth trained its herd of smaller Llama 4 models. Just as a human teacher condenses years of study into clear explanations and structured lessons, the large model distills its vast parameter space into a smaller, denser representation that the student can absorb. The result is a compact model that retains much of the teacher’s competence but operates with far fewer parameters and dramatically lower computational cost.
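To make the teacher and student roles concrete, here is a minimal sketch of the classic soft-target distillation loss, where the student is penalized for diverging from the teacher’s temperature-softened output distribution. This is a generic textbook formulation, not the specific recipe behind Gemma or Llama 4; the logits and the temperature value are made up for illustration.

```python
import math


def softmax(logits, temperature=1.0):
    """Convert raw logits into probabilities, softened by a temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence between the softened teacher and student distributions."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


# Hypothetical logits over a three-token vocabulary.
teacher = [4.0, 1.0, 0.5]
close_student = [3.5, 1.2, 0.4]
far_student = [0.5, 1.0, 4.0]

print(distillation_loss(teacher, close_student))  # smaller: student agrees
print(distillation_loss(teacher, far_student))    # larger: student disagrees
```

In a real training loop, this loss would be minimized over many examples, often combined with a regular loss on ground-truth labels.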

Using SLMs

One of the key advantages of SLMs is their deployment flexibility. Unlike LLMs, which are mostly used through external APIs, smaller models can be run locally, either on your organization’s infrastructure or directly on the user’s device:

- Local deployment: You can host SLMs on your own servers or within your cloud environment, keeping full control over data, latency, and compliance. This setup is ideal for enterprise applications where sensitive information or regulatory constraints make third-party APIs impractical.

📈 Local deployment also offers you flexible fine-tuning opportunities as you collect more data and need to respond to growing user expectations.

- On-device deployment via the browser: Modern browsers have built-in AI capabilities that you can rely on. For instance, Chrome integrates Gemini Nano via the built-in AI APIs, while Microsoft Edge includes Phi-4 (see Prompt API documentation). Running models directly in the browser enables low-latency, privacy-preserving use cases such as smart text suggestions, form autofill, or contextual help.

If you would like to learn more about the technicalities of SLMs, here are a couple of useful resources:

Let’s now move on and see what you can build with SLMs to provide user value and make steady progress in your AI integration.

Product opportunities for SLMs

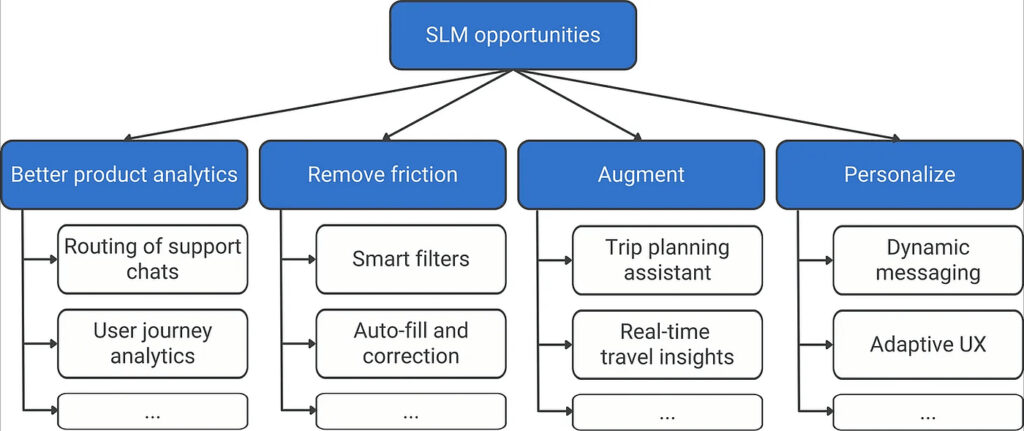

SLMs shine in focused, well-defined tasks where the context and data are already known: the kinds of use cases that live deep inside existing products. You can think of them as specialized, embedded intelligence rather than general-purpose assistants. Let’s walk through the main buckets of opportunity they unlock in the brownfield, as illustrated in the accompanying opportunity tree.

1. Better product analytics

Before exposing AI features to users, look for ways to improve your product from the inside. Most products already generate a continuous stream of unstructured text: support chats, help requests, in-app feedback. SLMs can analyze this data in real time and surface insights that inform both product decisions and the immediate user experience. Here are some examples:

- Tag and route support chats as they happen, directing technical issues to the right teams.

- Flag churn signals during a session, prompting timely interventions.

- Suggest relevant content or actions based on the user’s current context.

- Detect repeated friction points while the user is still in the flow, not weeks later in a retrospective.

These internal enablers keep risk low while adding value and giving your team time to learn. They strengthen your data foundation and prepare you for more visible, user-facing AI features down the road.
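As a rough illustration of the first example, the sketch below tags an incoming chat message and routes it to a team. Everything here is hypothetical: the `ROUTES` table, the team names, and `keyword_classifier`, which is only a placeholder for a call to a locally hosted SLM. The point is the shape of the integration: the model returns a label, and plain code validates it and decides what happens next.

```python
from typing import Callable

# Hypothetical tag-to-team routing table.
ROUTES = {"technical": "engineering", "billing": "finance", "other": "support"}


def keyword_classifier(message: str) -> str:
    """Placeholder for an SLM call; returns one of the ROUTES keys."""
    text = message.lower()
    if any(word in text for word in ("error", "crash", "bug")):
        return "technical"
    if any(word in text for word in ("invoice", "price", "refund")):
        return "billing"
    return "other"


def route_message(
    message: str, classify: Callable[[str], str] = keyword_classifier
) -> dict:
    """Tag a chat message and pick the team that should handle it."""
    tag = classify(message)
    if tag not in ROUTES:  # never trust an unexpected model label
        tag = "other"
    return {"tag": tag, "team": ROUTES[tag]}


print(route_message("The app crashes when I upload a photo"))
print(route_message("Why did my price go up?"))
```

Keeping the classifier behind a plain function signature means the keyword stub can later be swapped for a real model without touching the routing logic.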

2. Remove friction

Next, take a step back and audit the UX debt that’s already there. In the brownfield, most products aren’t exactly a designer’s dream. They were designed under the technical and architectural constraints of their time. With AI, we now have an opportunity to lift some of those constraints, reducing friction and creating faster, more intuitive experiences.

A good example is the smart filters on search-based websites like Booking.com. Traditionally, these pages use long lists of checkboxes and categories that try to cover every possible user preference. They’re cumbersome to design and use, and in the end, many users can’t find the setting that matters to them.

Language-based filtering changes this. Instead of navigating a complex taxonomy, users simply type what they want (for example “pet-friendly hotels near the beach”), and the model translates it into a structured query behind the scenes.
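One possible shape for that translation step is sketched below. It assumes the model has been prompted to answer with JSON; the model call itself is left out. The `HotelFilter` type, the field names, and the allowed values are all hypothetical stand-ins for whatever filter vocabulary your search backend already has. The code only shows the validation side: anything the model returns that is not a known filter is dropped instead of trusted.

```python
import json
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical filter vocabulary of the existing search backend.
ALLOWED_AMENITIES = {"pet_friendly", "pool", "free_wifi", "parking"}
ALLOWED_LOCATIONS = {"beach", "city_center", "airport"}


@dataclass
class HotelFilter:
    amenities: List[str] = field(default_factory=list)
    near: Optional[str] = None


def parse_filter_response(raw: str) -> HotelFilter:
    """Turn the model's JSON reply into a validated structured query."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return HotelFilter()  # fall back to an unfiltered search
    if not isinstance(data, dict):
        return HotelFilter()

    raw_amenities = data.get("amenities")
    if not isinstance(raw_amenities, list):
        raw_amenities = []
    # Keep only amenities the backend actually knows about.
    amenities = [
        a for a in raw_amenities if isinstance(a, str) and a in ALLOWED_AMENITIES
    ]

    near = data.get("near")
    if not (isinstance(near, str) and near in ALLOWED_LOCATIONS):
        near = None
    return HotelFilter(amenities=amenities, near=near)


# A reply a model might give for "pet-friendly hotels near the beach" (made up).
reply = '{"amenities": ["pet_friendly", "jacuzzi"], "near": "beach"}'
print(parse_filter_response(reply))  # the unknown "jacuzzi" is dropped
```

Constraining the output to an allow-list keeps the existing search logic in charge, so a wrong model answer degrades to a broader search instead of a broken one.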

More broadly, look for areas in your product where users need to apply your internal logic (your categories, structures, or terminology) and replace that with natural language interaction. Whenever users can express intent directly, you remove a layer of cognitive friction and make the product smarter and friendlier.

3. Augment

With your user experience decluttered, it’s time to think about augmentation: adding small, useful AI capabilities to your product. Instead of reinventing the core experience, look at what users are already doing around your product, such as the side tasks, workarounds, or external tools they rely on to reach their goal. Can focused AI models help them do it faster or smarter?

For example, a travel app could integrate a contextual trip note generator that summarizes itinerary details or drafts messages for co-travelers. A productivity tool could include a meeting recap generator that summarizes discussions or action items from text notes, without sending data to the cloud.

These features grow organically from real user behavior and extend your product’s context instead of redefining it.

4. Personalize

Successful personalization is the holy grail of AI. It flips the traditional dynamic: instead of asking users to learn and adapt to your product, your product now adapts to them like a well-fitting glove.

When you start, keep ambition at bay: you don’t need a fully adaptive assistant. Rather, introduce small, low-risk adjustments in what users see, how information is phrased, or which options appear first. On the content level, AI can adapt tone and style, like using concise wording for experts and more explanatory phrasing for newcomers. On the experience level, it can create adaptive interfaces. For instance, a project-management tool could surface the most relevant actions (“create task,” “share update,” “generate summary”) based on the user’s past workflows.
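The project-management example can start very simply. In the sketch below, a plain frequency count stands in for whatever model would eventually do the ranking; the function name, the default action list, and the sample history are all hypothetical. It shows the kind of small, low-risk adjustment meant here: the same actions are always available, only their order changes.

```python
from collections import Counter

DEFAULT_ACTIONS = ["create task", "share update", "generate summary"]


def rank_actions(history, defaults=DEFAULT_ACTIONS, limit=3):
    """Order actions by how often the user performed them, most frequent first.

    Actions the user has never used keep their default relative order.
    """
    counts = Counter(a for a in history if a in defaults)
    # sorted() is stable, so ties fall back to the default order.
    ranked = sorted(defaults, key=lambda a: -counts[a])
    return ranked[:limit]


# Hypothetical action log for one user.
history = [
    "share update", "generate summary", "share update",
    "create task", "share update", "generate summary",
]
print(rank_actions(history))
```

Because a new user with an empty history simply sees the default order, the feature fails safe when there is not yet enough data to personalize.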

⚠️ When personalization goes wrong, it quickly erodes trust. Users sense that they’ve traded personal data for an experience that doesn’t feel better. Thus, introduce personalization only once your data is ready to support it.

Why “small” wins over time

Each successful AI feature, be it an analytics improvement, a frictionless UX touchpoint, or a personalized step in a larger flow, strengthens your data foundation and builds your team’s iteration muscle and AI literacy. It also lays the groundwork for larger, more complex applications later. When your “small” features work reliably, they become reusable components in bigger workflows or modular agent systems (cf. Nvidia’s paper Small Language Models are the Future of Agentic AI).

To summarize:

✅ Start small: favor gradual improvement over disruption.

✅ Experiment fast: smaller models mean lower cost and faster feedback loops.

✅ Be cautious: start internally; introduce user-facing AI once you’ve validated it.

✅ Build your iteration muscle: steady, compound progress beats headline projects.

Originally published at https://jannalipenkova.substack.com.