rewrite of the same system prompt.

You MUST return ONLY valid JSON. No markdown. No code fences. No explanation. JUST the JSON object.

I had written MUST in all-caps. To a language model. As if emphasis would work on something that doesn’t have feelings or, apparently, a consistent definition of “valid JSON.”

It didn’t work. Here’s what did.

How GPT-4 Ended Up in a Nightly Batch Job

Our team ingests research documents, such as PDFs and plain text, and occasionally those pesky semi-structured reports that some vendor clearly exported from a spreadsheet they were very proud of. Part of that pipeline classifies them and extracts structured fields before anything touches the data warehouse: methodology type, dataset source, key metrics.

This sounds like a solved problem. It usually is, until there are about forty methodology types and the documents stop looking anything like the ones you trained on.

For a while, we handled this using regex, rule-based extractors, and a fine-tuned BERT model. It worked, but maintaining it felt like fixing a CSS file from 2015, where you touch one rule and something unrelated breaks on a page you haven’t visited in months.

So when GPT-4 came along, we tried it.

Not going to lie, it was kind of incredible. Edge cases that had been driving BERT crazy for months, formats we’d never seen before, and documents with inconsistent sections were all handled cleanly by GPT-4.

The team demo went well. I mean, someone even said “wow” out loud.

I sent my manager a text that evening: “think we’ve cracked the extraction problem.” Sent with confidence.

Two weeks after we deployed it, the failures began.

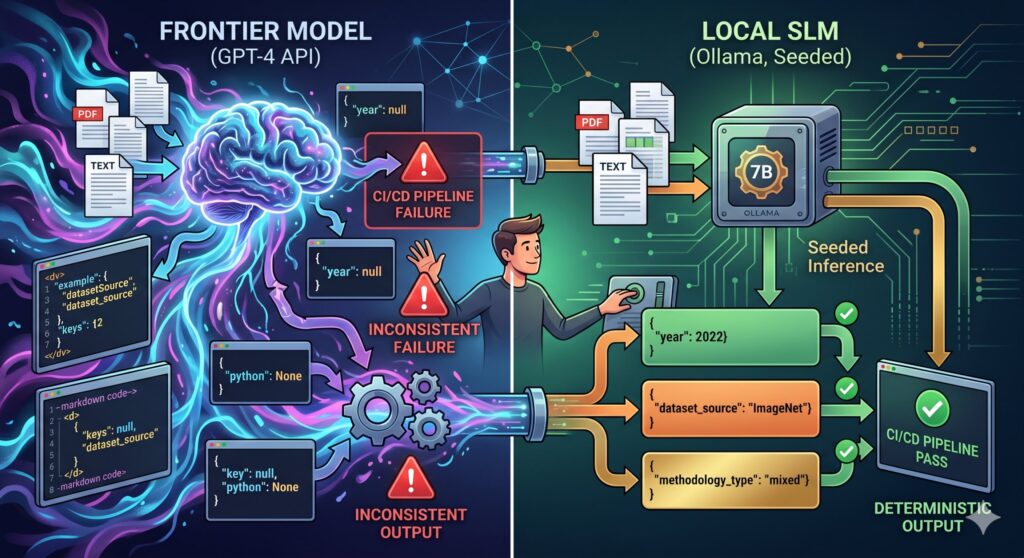

The Problem With “Mostly Consistent”

From my experience, GPT-4 is capable and non-deterministic.

For a lot of use cases, the non-determinism doesn’t matter. For a nightly batch pipeline feeding a data warehouse, it matters a lot.

At temperature=0 you get mostly consistent outputs. In a CI/CD context, “mostly” means “it’ll break on a Friday.”

The failures weren’t dramatic either; if they had been, they would’ve been easier to debug. GPT-4 was not hallucinating fields or returning garbage.

It was doing subtle things. "dataset_source" one night, "datasetSource" the next, "source_dataset" the night after. Markdown code fences around the JSON even though we’d told it not to, across every version of the prompt. Numbers returned as strings. JSON null returned as the Python string "None"; I spent longer than I’d like to admit staring at that one.

Each failure followed the same ritual.

Pydantic catches it downstream, the pipeline fails, I confirm it’s another formatting quirk, then I re-run manually. It passes, and about three days later a different quirk shows up.

So I started keeping a log. Six weeks of it:

- 23 pipeline failures from GPT-4 output inconsistency

- ~18 minutes common to diagnose and re-run

- 0 actual bugs in the pipeline code

Zero real bugs. Every single failure was the model being subtly different from what it had been the night before. That’s the number that made me stop defending the setup.
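To put the log in terms of time, here is a small back-of-the-envelope sketch. The three inputs come straight from the log above; the function name `triage_cost` is just an illustration, and the 18 minutes is an approximate average, so treat the result as a rough estimate.

```python
# Figures taken from the six-week log above.
FAILURES = 23
MINUTES_PER_FAILURE = 18  # approximate average to diagnose and re-run
WEEKS = 6


def triage_cost(failures: int, minutes_each: float, weeks: int) -> dict:
    """Summarize how much manual time the failures consumed."""
    total_minutes = failures * minutes_each
    return {
        "total_hours": round(total_minutes / 60, 1),
        "failures_per_week": round(failures / weeks, 1),
    }


summary = triage_cost(FAILURES, MINUTES_PER_FAILURE, WEEKS)
print(summary)
```

That works out to roughly seven hours of manual triage over six weeks, none of it spent on a real bug.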

What I Tried Before Admitting the Real Problem

Prompting

Seven rewrites over two days.

I didn’t even realize it had gotten to that many. I just wanted a good fix. And it didn’t even stop there.

I tried all-caps instructions, few-shot examples, and counter-examples with a “Do NOT do this” header. I even tried adding a reminder at the very end of the user message as a last-ditch nudge, as if the model would get to that line and think oh right, JSON only, nearly forgot.

At one point, the same instruction appeared in three different places in the same prompt, and I genuinely thought that might help. Failures continued, unchanged.

A cleanup parser

When prompting failed, I wrote code to clean up any mess that GPT-4 threw back at me.

Strip markdown fences, find the JSON object if it was buried in text, and log the raw output when nothing worked.

This approach actually worked for about a week, which was enough time for me to feel good about it.

And then GPT-4 started returning structurally valid JSON with wrong key names, camelCase instead of snake_case. The parser passed it through fine, and the error surfaced three steps later.

I was playing whack-a-mole with a model that had infinite moles.
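A cleanup parser of the kind described above might look roughly like this. It is a reconstruction for illustration, not the original code: the name `clean_model_output` and the sample strings are assumptions, and logging is left out to keep it short.

```python
import json
import re

# Matches a leading ```json / ``` fence or a trailing ``` fence.
FENCE_RE = re.compile(r"^```(?:json)?\s*|\s*```$")


def clean_model_output(raw: str) -> dict:
    """Strip markdown fences, then fall back to the outermost {...} span."""
    text = FENCE_RE.sub("", raw.strip()).strip()
    try:
        return json.loads(text)
    except json.JSONDecodeError:
        start, end = text.find("{"), text.rfind("}")
        if start == -1 or end <= start:
            raise ValueError(f"No JSON object found in: {raw[:200]!r}")
        return json.loads(text[start:end + 1])


fenced = '```json\n{"dataset_source": "ImageNet", "year": 2022}\n```'
buried = 'Sure! Here is the result: {"datasetSource": "ImageNet", "year": "2022"} Hope it helps.'

print(clean_model_output(fenced))
print(clean_model_output(buried))
```

The second call is the failure mode in miniature: the output parses, so the cleanup step reports success, but the key is camelCase and the year is a string. Syntax repair cannot catch a schema problem.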

response_format + temperature=0

OpenAI’s response_format={"type": "json_object"} combined with temperature=0 brought failures from 23 down to about 9. Meaningful progress. Still not zero, and “9 random failures per six weeks” is not a pipeline property I could defend.

Function calling

This was the one that almost worked. Forcing output through a typed schema contract genuinely tightened things.

I stopped checking logs every morning, stopped bracing for the Slack notification. Told someone on the team it was stable. Which, if you work in software, you know is the fastest way to jinx something.

functions = [
    {
        "name": "extract_document_metadata",
        "parameters": {
            "type": "object",
            "properties": {
                "methodology_type": {
                    "type": "string",
                    "enum": ["experimental", "observational", "review", "simulation", "mixed"]
                },
                "dataset_source": {"type": "string"},
                "primary_metric": {"type": "string"},
                "year": {"type": "integer"},
                "confidence_score": {"type": "number", "minimum": 0, "maximum": 1}
            },
            "required": ["methodology_type", "dataset_source", "year"]
        }
    }
]

# Force the model to answer through the schema above
response = openai_client.chat.completions.create(
    model="gpt-4-turbo",
    messages=messages,
    functions=functions,
    function_call={"name": "extract_document_metadata"},
    temperature=0
)

Then one Tuesday, the OpenAI API had a 20-minute disruption. The pipeline failed hard; it wasn’t a model issue, just a network dependency we’d never properly reckoned with.

We couldn’t version-lock the model, couldn’t run offline, couldn’t answer “what changed between the run that worked and the run that didn’t?” because the model on the other end wasn’t ours.

Sitting there waiting for someone else’s API to recover, I finally asked the question I should have asked months earlier: Does this specific task really need a frontier model?

The Local Models Are Better Than I Expected

I went in fully expecting to spend a day confirming they weren’t good enough. I had a conclusion ready before I’d run a single test: tried it, quality wasn’t there, staying on GPT-4 with better retry logic. A story where the original choice was still defensible.

It took maybe three hours to realize I’d been thinking about this wrong.

Document extraction into a fixed schema isn’t actually a hard task for a language model. Not in the way that makes a frontier model necessary. There’s no reasoning involved, no synthesis, no need for the breadth of world knowledge that makes GPT-4 worth what it costs.

What it actually is: structured reading comprehension. The model reads a document and fills in fields. A well-trained 7B model does this fine. And with the right setup, specifically seeded inference, it does it identically every single run.

I ran four models against 50 documents I’d manually annotated:

- Phi-3-mini (3.8B): Better instruction following than I expected for a 3.8B model, but it fell apart on anything over about 3,000 tokens. I nearly picked this before I looked at the longer document results.

- Mistral 7B Instruct: Solid everywhere, no real surprises in either direction. The Toyota Camry of local models. You’d be fine with this.

- Qwen2.5-7B-Instruct: This one won clearly. Best structured output consistency of the four by a margin wide enough that it wasn’t a close call.

- Llama 3.2 3B Instruct: This is fast, but the extraction quality dropped off enough on edge cases that I wouldn’t run it on production data without a lot more validation work first.

Qwen2.5 and Mistral both hit 90–95% accuracy on the annotated set, lower than GPT-4 on genuinely ambiguous documents, yes.

But I ran Qwen2.5 on the same 20 documents three times and went back to diff the results. No diffs. Same JSON, same values, same field order every run.
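The repeat-and-diff check is easy to automate. The sketch below is one way to do it, assuming an `extract` callable that takes document text and returns a dict; the helper names and the two stub extractors are hypothetical and stand in for a real model call.

```python
import hashlib
import itertools
import json
from typing import Callable


def output_fingerprint(result: dict) -> str:
    """Hash the serialized output; key order is preserved, so reordering counts as a diff."""
    serialized = json.dumps(result, ensure_ascii=False)
    return hashlib.sha256(serialized.encode("utf-8")).hexdigest()


def find_unstable_docs(extract: Callable[[str], dict], docs: dict, runs: int = 3) -> list:
    """Return the ids of documents whose output differs across repeated runs."""
    unstable = []
    for doc_id, text in docs.items():
        fingerprints = {output_fingerprint(extract(text)) for _ in range(runs)}
        if len(fingerprints) > 1:
            unstable.append(doc_id)
    return unstable


# Stub extractors standing in for real model calls.
def stable_extract(text: str) -> dict:
    return {"dataset_source": text.strip(), "year": 2022}


_key_names = itertools.cycle(["dataset_source", "datasetSource"])


def flaky_extract(text: str) -> dict:
    return {next(_key_names): text.strip(), "year": 2022}


docs = {"doc-1": "ImageNet", "doc-2": "CIFAR-10"}
print(find_unstable_docs(stable_extract, docs))
print(find_unstable_docs(flaky_extract, docs))
```

Hashing the serialized JSON without sorting keys is deliberate: a change in field order shows up as a difference, which matches the "same field order every run" bar.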

After six weeks of failures I couldn’t predict, that felt almost too clean to be real.

Before and After

The GPT-4 extractor at its most stable (not the original prototype, but the version after months of defensive code had accumulated around it):

# extractor_gpt4.py
import os
import json

from openai import OpenAI

client = OpenAI(api_key=os.environ["OPENAI_API_KEY"])

SYSTEM_PROMPT = """You are a research document metadata extractor.
Given the text of a research document, extract the specified metadata fields.
Be precise. If you are unsure about a field, use your best judgment based on context."""

EXTRACTION_SCHEMA = {
    "name": "extract_document_metadata",
    "parameters": {
        "type": "object",
        "properties": {
            "methodology_type": {
                "type": "string",
                "enum": ["experimental", "observational", "review", "simulation", "mixed"]
            },
            "dataset_source": {"type": "string"},
            "primary_metric": {"type": "string"},
            "year": {"type": "integer"},
            "confidence_score": {"type": "number"}
        },
        "required": ["methodology_type", "dataset_source", "year"]
    }
}


def extract_metadata_gpt4(document_text: str) -> dict:
    response = client.chat.completions.create(
        model="gpt-4-turbo",
        messages=[
            {"role": "system", "content": SYSTEM_PROMPT},
            # Truncate long documents to keep the request bounded
            {"role": "user", "content": f"Extract metadata from this document:\n\n{document_text[:8000]}"}
        ],
        functions=[EXTRACTION_SCHEMA],
        function_call={"name": "extract_document_metadata"},
        temperature=0,
        timeout=30
    )
    try:
        # function_call is None if the model answered in plain text instead
        args = response.choices[0].message.function_call.arguments
        return json.loads(args)
    except (AttributeError, json.JSONDecodeError) as e:
        raise ValueError(f"Failed to parse GPT-4 response: {e}") from e

Latency: 3.5–5.8s per document. Cost: ~$0.04 per call. Failure rate: ~6% after all the fixes.

For the replacement, I chose Ollama. A colleague mentioned it offhandedly, and the docs looked reasonable at 11 pm, which is honestly how a lot of infrastructure decisions get made. The REST API is close enough to OpenAI’s that the swap took about an hour:

# extractor_slm.py
import json
import logging
import os
import re

import requests

logger = logging.getLogger(__name__)

OLLAMA_URL = os.getenv("OLLAMA_URL", "http://localhost:11434")
MODEL = os.getenv("MODEL_NAME", "qwen2.5:7b-instruct-q4_K_M")

# Tested empirically: 7B Q4 on a T4 takes ~8s on cold first token,
# then ~1.5s per doc chunk. 45s covers bad days.
_TIMEOUT = 45

_SYSTEM_PROMPT = """
You are a metadata extractor for research documents.
Return ONLY a JSON object: no explanation, no markdown, no surrounding text.

Fields to extract:
- methodology_type (required): one of experimental | observational | review | simulation | mixed
- dataset_source (required): where the data came from
- year (required): integer
- primary_metric: main eval metric if present
- confidence_score: your confidence 0.0–1.0

Output example:
{"methodology_type": "experimental", "dataset_source": "ImageNet", "year": 2022, "primary_metric": "top-1 accuracy", "confidence_score": 0.95}
"""


def call_ollama(doc_text: str) -> dict:
    payload = {
        "model": MODEL,
        "messages": [
            {"role": "system", "content": _SYSTEM_PROMPT},
            {"role": "user", "content": doc_text[:6000]},
        ],
        "stream": False,
        "options": {
            "temperature": 0,
            "seed": 42,  # determinism: this is the whole point
        },
    }
    try:
        resp = requests.post(f"{OLLAMA_URL}/api/chat", json=payload, timeout=_TIMEOUT)
        resp.raise_for_status()
    except requests.exceptions.Timeout:
        raise RuntimeError(f"Ollama timed out after {_TIMEOUT}s. Is the model loaded?")
    except requests.exceptions.ConnectionError:
        raise RuntimeError(f"Can't reach Ollama at {OLLAMA_URL}. Is the container running?")

    raw = resp.json()["message"]["content"].strip()
    # Strip markdown fences in case the model adds them anyway
    cleaned = re.sub(r"^```(?:json)?\s*|\s*```$", "", raw).strip()
    try:
        return json.loads(cleaned)
    except json.JSONDecodeError as e:
        logger.error("Failed to parse model output: %s\nRaw was: %s", e, raw[:400])
        raise

The seed: 42 in options is what actually delivers the determinism. Ollama supports seeded generation; same input, same seed, same output, every time, not approximately. temperature=0 with a hosted API implied this but never guaranteed it because you’re not controlling the runtime. Locally, you are.

Getting It Into GitHub Actions

Two things that aren’t obvious until they bite you. First: GitHub Actions service containers are network-isolated from the runner. You can’t docker exec into them; the model pull has to go through the REST API. Second: cache the model. A cold pull is 4.7GB and adds 3–4 minutes to every job.

# The non-obvious parts; the rest is standard Actions boilerplate
- name: Cache Ollama model
  uses: actions/cache@v4
  with:
    path: ~/.ollama/models
    key: ollama-qwen2.5-7b-q4

- name: Pull SLM model
  # Can't docker exec into service containers; use the API
  run: |
    curl -s http://localhost:11434/api/pull \
      -d '{"name": "qwen2.5:7b-instruct-q4_K_M"}' \
      --max-time 300

- name: Warm up model
  run: |
    curl -s http://localhost:11434/api/generate \
      -d '{"model": "qwen2.5:7b-instruct-q4_K_M", "prompt": "hello", "stream": false}' \
      > /dev/null

- name: Run ingestion pipeline
  run: python pipeline/run_ingestion.py
  env:
    OLLAMA_URL: "http://localhost:11434"
    MODEL_NAME: "qwen2.5:7b-instruct-q4_K_M"

The Capitalization Bug I Spent Too Long On

Qwen 2.5 occasionally returns "Experimental" with a capital E despite explicit instructions not to. The Literal type check rejects it, and you get a validation failure on a document the model actually extracted correctly.

I spent an embarrassingly long time on this because the error message just said “invalid value,” and my first instinct was to look at the document, then the extractor, before I finally looked at the validator and saw it.

A normalize_methodology validator with mode="before" fixes it before the type check runs:

# pipeline/validation.py
from typing import Literal, Optional

from pydantic import BaseModel, Field, field_validator


class DocMetadata(BaseModel):
    methodology_type: Literal["experimental", "observational", "review", "simulation", "mixed"]
    dataset_source: str = Field(min_length=3)
    year: int = Field(ge=1950, le=2030)
    primary_metric: Optional[str] = None
    confidence_score: Optional[float] = Field(default=None, ge=0.0, le=1.0)

    @field_validator("dataset_source")
    @classmethod
    def strip_whitespace(cls, v: str) -> str:
        return v.strip()

    # mode="before" runs this ahead of the Literal check
    @field_validator("methodology_type", mode="before")
    @classmethod
    def normalize_methodology(cls, v: str) -> str:
        # Qwen occasionally returns "Experimental" despite instructions
        return v.lower().strip() if isinstance(v, str) else v

I want to be straight about the tradeoffs because I hate articles that don’t.

GPT-4 is genuinely better on ambiguous documents, non-English text, anything requiring real inference. There’s a class of document in our corpus (older PDFs, reports mixing multiple languages, non-standard formats) where the SLM stumbles and GPT-4 wouldn’t.

We flag these separately and route them to a review queue. That’s not seamless, but it’s honest. The SLM does the 90% it’s good at and doesn’t get asked to do the rest.
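How the flagging works will depend on your corpus. As one possible shape, here is a minimal sketch that routes on the model's self-reported confidence_score. The threshold, the `route` function, and the queue names are all assumptions for illustration, and self-reported confidence is a weak signal, so in practice you would likely combine it with validation failures or document-level heuristics.

```python
from typing import Optional

REVIEW_THRESHOLD = 0.6  # hypothetical cut-off, tune against annotated data


def route(metadata: Optional[dict], threshold: float = REVIEW_THRESHOLD) -> str:
    """Return "warehouse" for confident extractions and "review" for everything else."""
    if metadata is None:  # extraction or validation failed upstream
        return "review"
    score = metadata.get("confidence_score")
    if score is None or score < threshold:
        return "review"
    return "warehouse"


print(route({"methodology_type": "review", "confidence_score": 0.95}))
print(route({"methodology_type": "mixed", "confidence_score": 0.3}))
print(route(None))
```

A missing score is treated the same as a low one, so a document only reaches the warehouse when there is positive evidence it was extracted cleanly.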

The setup is also more work than pip install openai. First-time configuration takes a real afternoon. And Ollama doesn’t auto-update models, so version management is my problem now. I’ve genuinely made peace with that; knowing exactly what model version ran last night and the night before is the whole point.

What I Think Now

The morning the pipeline first ran clean (no notification, no re-run, just a log file showing 312 documents processed in 8.4 minutes), I checked it twice, then ran it manually again.

I’d spent six weeks half-expecting an alert before I’d even finished my coffee. Watching it pass quietly felt genuinely strange.

The failure wasn’t using GPT-4. The failure was treating a probabilistic system like a deterministic function. temperature=0 reduces variance. It doesn’t eliminate it.

I understood this in theory the whole time. It took 23 failures to understand it in a way that changed how I build things.

If you’re running an LLM in an automated pipeline and things keep breaking in ways you can’t reproduce, it might not be your code or your prompts. It might be the nature of what you’re depending on.

A local model on hardware you control, seeded for determinism, is a genuinely different class of tool for this kind of job. Not better at everything, but better at being the same thing twice. And for a pipeline that runs unattended every night, that’s most of what actually matters.
