Make sure to also check out the previous parts:

👉Part 1: Precision@k, Recall@k, and F1@k

👉Part 2: Mean Reciprocal Rank (MRR) and Average Precision (AP)

In the previous parts of my post series on retrieval evaluation measures for RAG pipelines, we took a detailed look at the binary retrieval evaluation metrics. More specifically, in Part 1, we went over binary, order-unaware retrieval evaluation metrics, like HitRate@k, Recall@k, Precision@k, and F1@k. Binary, order-unaware retrieval evaluation metrics are essentially the most basic type of measures we can use for scoring the performance of our retrieval mechanism; they just classify a result either as relevant or irrelevant, and evaluate whether relevant results make it to the retrieved set.

Then, in Part 2, we reviewed binary, order-aware evaluation metrics like Mean Reciprocal Rank (MRR) and Average Precision (AP). Binary, order-aware measures categorise results either as relevant or irrelevant and check if they appear in the retrieval set, but on top of this, they also quantify how well the results are ranked. In other words, they also take into account the rank at which each result is retrieved, apart from whether it is retrieved or not in the first place.

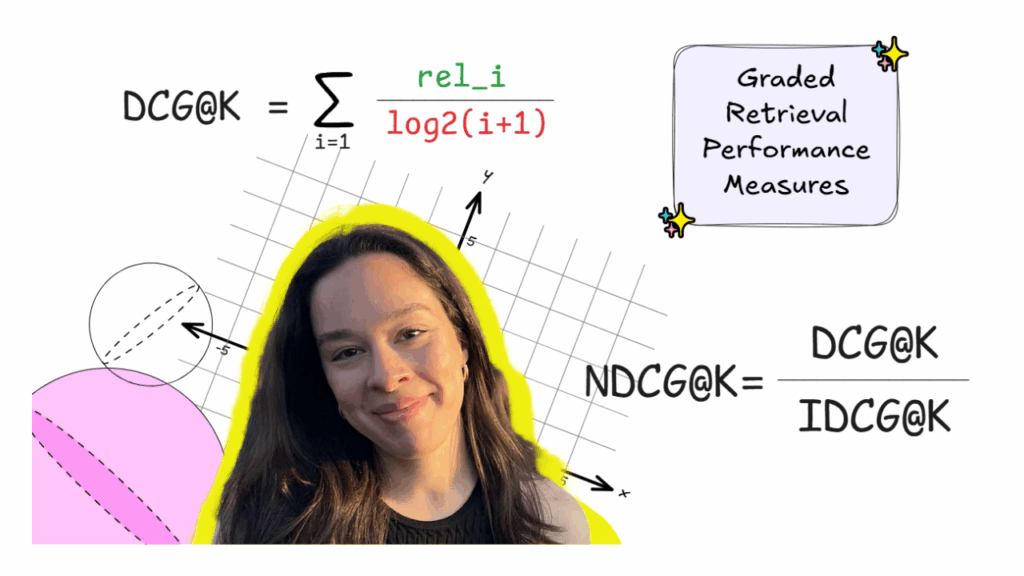

In this final part of the retrieval evaluation metrics post series, I'm going to further elaborate on the other large category of metrics, beyond binary metrics. That is, graded metrics. Unlike binary metrics, where results are either relevant or irrelevant, for graded metrics, relevance is rather a spectrum. In this way, a retrieved chunk can be more or less relevant to the user's query.

Two commonly used graded relevance metrics that we are going to be taking a look at in today's post are Discounted Cumulative Gain (DCG@k) and Normalized Discounted Cumulative Gain (NDCG@k).

Some graded measures

For graded retrieval measures, it is first of all important to understand the concept of graded relevance. That is, for graded measures, a retrieved item can be more or less relevant, as quantified by rel_i.

🎯 Discounted Cumulative Gain (DCG@k)

Discounted Cumulative Gain (DCG@k) is a graded, order-aware retrieval evaluation metric, allowing us to quantify how useful a retrieved result is, taking into account the rank at which it is retrieved. We can calculate it as follows:

$$\mathrm{DCG@}k = \sum_{i=1}^{k} \frac{rel_i}{\log_2(i + 1)}$$

Here, the numerator rel_i is the graded relevance of the retrieved result at rank i; essentially, it quantifies how relevant the retrieved text chunk is. The denominator is the base-2 logarithm of the rank plus one, log2(i + 1), so the top result is divided by 1 and every later rank by a progressively larger number. This allows us to penalize items that appear further down the retrieved set, emphasizing the idea that results appearing at the top are more important. Thus, the more relevant a result is, the higher the score, but the further down the ranking it appears, the less it contributes.

Let's further explore this with a simple example:

In any case, a major issue of DCG@k is that, as you can see, it is essentially a sum over all the retrieved items. Thus, a retrieved set with more items (a larger k) and/or more relevant items is inevitably going to result in a larger DCG@k. For instance, if in our example we just consider k = 4, we would end up with a DCG@4 = 28.19. Similarly, DCG@6 would be higher, and so on. As k increases, DCG@k typically increases, since we include more results, unless the additional items have zero relevance. However, this doesn't necessarily mean that the retrieval performance is superior. On the contrary, this causes a problem, because it doesn't allow us to compare retrieved sets with different k values based on DCG@k.
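To see this growth with k in numbers, here is a minimal sketch. The relevance list is made up for illustration (it is not the example discussed above), and I'm assuming the linear-gain, log2 discount form of DCG@k used throughout this post.

```python
import math

def dcg_at_k(relevance, k):
    """DCG@k with linear gain and a log2(rank + 1) discount."""
    k = min(k, len(relevance))
    return sum(rel / math.log2(rank + 1)
               for rank, rel in enumerate(relevance[:k], start=1))

# Hypothetical graded relevance scores, listed in retrieved order.
relevance = [3, 2, 3, 0, 1]

# DCG@1 ... DCG@5 for the same ranked list.
dcg_by_k = [dcg_at_k(relevance, k) for k in range(1, len(relevance) + 1)]
for k, score in enumerate(dcg_by_k, start=1):
    print(f"DCG@{k}: {score:.4f}")
```

The score never goes down as k grows, and it only stays flat where the newly included item has zero relevance (here, between k = 3 and k = 4). That is exactly why raw DCG values for different k are not comparable.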

This issue is effectively solved by the next graded measure we are going to discuss today: NDCG@k. But before that, we need to introduce IDCG@k, which is required for calculating NDCG@k.

🎯 Ideal Discounted Cumulative Gain (IDCG@k)

Ideal Discounted Cumulative Gain (IDCG@k), as its name suggests, is the DCG we would get in the ideal scenario where our retrieved set is perfectly ranked based on the retrieved results' relevance. Let's see what the IDCG for our example would be:

Clearly, for a fixed k, IDCG@k is always going to be equal to or larger than any DCG@k, as it represents the score for a perfect retrieval and ranking of results for that k.
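If you'd like to convince yourself of this, a quick brute-force check is possible for short lists. The sketch below is only an illustration under my own assumptions: the relevance list is hypothetical, and the ideal ranking is built by sorting the same retrieved scores in descending order.

```python
import math
from itertools import permutations

def dcg_at_k(relevance, k):
    """DCG@k with linear gain and a log2(rank + 1) discount."""
    k = min(k, len(relevance))
    return sum(rel / math.log2(rank + 1)
               for rank, rel in enumerate(relevance[:k], start=1))

def idcg_at_k(relevance, k):
    """DCG@k of the same scores sorted from most to least relevant."""
    return dcg_at_k(sorted(relevance, reverse=True), k)

relevance = [3, 2, 3, 0, 1]  # hypothetical graded scores
k = 3

idcg = idcg_at_k(relevance, k)
# Try every possible ordering of the same items and keep the best DCG@k.
all_dcgs = [dcg_at_k(list(order), k) for order in permutations(relevance)]
best_dcg = max(all_dcgs)

print(f"IDCG@{k}: {idcg:.4f}")
print(f"Best DCG@{k} over all orderings: {best_dcg:.4f}")
```

No ordering of the items beats the sorted one, so the two printed values coincide.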

Finally, we can now calculate Normalized Discounted Cumulative Gain (NDCG@k), using DCG@k and IDCG@k.

🎯 Normalized Discounted Cumulative Gain (NDCG@k)

Normalized Discounted Cumulative Gain (NDCG@k) is essentially a normalised expression of DCG@k, solving our initial problem and rendering it comparable for different retrieved set sizes k. We can calculate NDCG@k with this simple formula:

$$\mathrm{NDCG@}k = \frac{\mathrm{DCG@}k}{\mathrm{IDCG@}k}$$

Basically, NDCG@k allows us to quantify how close our current retrieval and ranking is to the ideal one, for a given k. This conveniently provides us with a number that is comparable for different values of k. In our example, NDCG@5 would be:

In general, NDCG@k can range from 0 to 1, with 1 representing a perfect retrieval and ranking of the results, and 0 indicating a complete mess.
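As a quick sanity check of that range, here is a small sketch with three made-up relevance lists. It assumes non-negative relevance scores, an ideal ranking built from the same retrieved items, and the convention of returning 0.0 when nothing relevant was retrieved.

```python
import math

def dcg_at_k(relevance, k):
    """DCG@k with linear gain and a log2(rank + 1) discount."""
    k = min(k, len(relevance))
    return sum(rel / math.log2(rank + 1)
               for rank, rel in enumerate(relevance[:k], start=1))

def ndcg_at_k(relevance, k):
    """DCG@k divided by the DCG@k of the ideally sorted list."""
    idcg = dcg_at_k(sorted(relevance, reverse=True), k)
    return dcg_at_k(relevance, k) / idcg if idcg > 0 else 0.0

# Hypothetical ranked lists of graded relevance scores.
perfect = [3, 2, 1, 0]         # already in the ideal order
reversed_order = [0, 1, 2, 3]  # same items, worst possible order
all_irrelevant = [0, 0, 0, 0]  # nothing useful retrieved

for name, rels in [("perfect", perfect),
                   ("reversed", reversed_order),
                   ("all irrelevant", all_irrelevant)]:
    print(f"{name}: NDCG@4 = {ndcg_at_k(rels, 4):.4f}")
```

The perfectly ordered list scores 1, the reversed one lands somewhere in between, and the all-zero list scores 0. Under these assumptions, a list that contains at least one relevant item always scores above 0, however badly it is ordered.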

So, how do we actually calculate DCG and NDCG in Python?

If you've read my other RAG tutorials, you know this is where the War and Peace example would usually come in. Nonetheless, that code example is getting too big to include in every post, so instead I'm just going to show you how to calculate DCG and NDCG in Python, doing my best to keep this post at a reasonable length.

To calculate these retrieval metrics, we first need to define a ground truth set, exactly as we did in Part 1 when calculating Precision@k and Recall@k. The difference here is that, instead of characterising each retrieved chunk as relevant or not, using binary relevances (0 or 1), we now assign to it a graded relevance score; for example, from completely irrelevant (0) to super relevant (5). Thus, our ground truth set would include the text chunks that have the highest graded relevance scores for each query.

For instance, for a query like “Who is Anna Pávlovna?”, a retrieved chunk that perfectly matches the answer might receive a score of 3, one that partially mentions the needed information might get a 2, and a completely unrelated chunk would get a relevance score equal to 0.
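One possible way to turn such a ground truth set into the ranked list of scores that the metrics need is sketched below. The chunk ids, the grades, and the `relevance_list` helper are all hypothetical, and treating chunks without a grade as 0 is an assumption of this sketch.

```python
# Hypothetical ground truth for one query: chunk id -> graded relevance.
ground_truth = {"chunk_12": 3, "chunk_07": 2, "chunk_31": 1}

# Hypothetical ranked output of the retriever for the same query.
retrieved_ids = ["chunk_12", "chunk_44", "chunk_07", "chunk_31"]

def relevance_list(retrieved_ids, ground_truth):
    """Map ranked chunk ids to graded scores; ungraded chunks count as 0."""
    return [ground_truth.get(chunk_id, 0) for chunk_id in retrieved_ids]

relevance = relevance_list(retrieved_ids, ground_truth)
print(relevance)  # [3, 0, 2, 1]
```

The order of the resulting list follows the order in which the chunks were retrieved, which is what makes the metrics order-aware.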

Using these graded relevance lists for a retrieved result set, we can then calculate DCG@k, IDCG@k, and NDCG@k. We'll use Python's math library to handle the logarithmic terms:

    import math

To begin with, we can define a function for calculating DCG@k as follows:

    # DCG@k
    def dcg_at_k(relevance, k):
        # Never look past the end of the retrieved list
        k = min(k, len(relevance))
        # Ranks start at 1, so the discount for rank i is log2(i + 1)
        return sum(rel / math.log2(i + 1) for i, rel in enumerate(relevance[:k], start=1))

We can also calculate IDCG@k by applying a similar logic. Essentially, IDCG@k is DCG@k for a perfect retrieval and ranking; thus, we can easily calculate it by computing DCG@k after sorting the results by descending relevance.

    # IDCG@k
    def idcg_at_k(relevance, k):
        # The ideal ranking lists the same scores from most to least relevant
        ideal_relevance = sorted(relevance, reverse=True)
        return dcg_at_k(ideal_relevance, k)

Finally, once we have calculated DCG@k and IDCG@k, we can also easily calculate NDCG@k as their ratio. More specifically:

    # NDCG@k
    def ndcg_at_k(relevance, k):
        dcg = dcg_at_k(relevance, k)
        idcg = idcg_at_k(relevance, k)
        # If every score is 0, IDCG is 0 too; return 0.0 instead of dividing by zero
        return dcg / idcg if idcg > 0 else 0.0

As explained, each of these functions takes as input a list of graded relevance scores for the retrieved chunks. For instance, let's suppose that for a specific query, ground truth set, and retrieval test, we end up with the following list:

    relevance = [3, 2, 3, 0, 1]

Then, we can calculate the graded retrieval metrics using our functions:

    print(f"DCG@5: {dcg_at_k(relevance, 5):.4f}")
    print(f"IDCG@5: {idcg_at_k(relevance, 5):.4f}")
    print(f"NDCG@5: {ndcg_at_k(relevance, 5):.4f}")

And that was that! This is how we get our graded retrieval performance measures for our RAG pipeline in Python.
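For anyone who wants to check the numbers by hand, the arithmetic for the list [3, 2, 3, 0, 1] works out roughly as follows. This is my own worked calculation, rounded to three decimals, using the log2(i + 1) discount from the code; the ideal ordering of the same scores is [3, 3, 2, 1, 0].

$$
\begin{aligned}
\mathrm{DCG@5} &= \frac{3}{\log_2 2} + \frac{2}{\log_2 3} + \frac{3}{\log_2 4} + \frac{0}{\log_2 5} + \frac{1}{\log_2 6} \approx 3 + 1.262 + 1.5 + 0 + 0.387 \approx 6.149 \\
\mathrm{IDCG@5} &= \frac{3}{\log_2 2} + \frac{3}{\log_2 3} + \frac{2}{\log_2 4} + \frac{1}{\log_2 5} + \frac{0}{\log_2 6} \approx 3 + 1.893 + 1 + 0.431 + 0 \approx 6.323 \\
\mathrm{NDCG@5} &= \frac{\mathrm{DCG@5}}{\mathrm{IDCG@5}} \approx \frac{6.149}{6.323} \approx 0.972
\end{aligned}
$$

The ranking is close to ideal: the only thing costing us points is that the second most relevant slot is taken by a chunk graded 2 instead of 3.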

Finally, similarly to all other retrieval performance metrics, we can also average the scores of a metric across different queries to get a more representative overall score.
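A minimal sketch of that averaging step could look like the following. The query names and relevance lists are made up, and a plain unweighted mean over queries is an assumption on my part; other weighting schemes are possible.

```python
import math

def dcg_at_k(relevance, k):
    """DCG@k with linear gain and a log2(rank + 1) discount."""
    k = min(k, len(relevance))
    return sum(rel / math.log2(rank + 1)
               for rank, rel in enumerate(relevance[:k], start=1))

def ndcg_at_k(relevance, k):
    """DCG@k divided by the DCG@k of the ideally sorted list."""
    idcg = dcg_at_k(sorted(relevance, reverse=True), k)
    return dcg_at_k(relevance, k) / idcg if idcg > 0 else 0.0

# Hypothetical graded relevance lists, one per query, in retrieved order.
relevance_per_query = {
    "query_1": [3, 2, 3, 0, 1],
    "query_2": [0, 1, 2],
    "query_3": [0, 0, 0],
}

k = 5
per_query = {q: ndcg_at_k(rels, k) for q, rels in relevance_per_query.items()}
# Unweighted mean across queries.
mean_ndcg = sum(per_query.values()) / len(per_query)

for query, score in per_query.items():
    print(f"{query}: NDCG@{k} = {score:.4f}")
print(f"Mean NDCG@{k}: {mean_ndcg:.4f}")
```

Keeping the per-query scores around, and not only the mean, makes it easier to spot the individual queries where retrieval goes wrong.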

On my mind

Today's post about graded relevance measures concludes my post series about the most commonly used metrics for evaluating the retrieval performance of RAG pipelines. In particular, throughout this post series, we explored binary measures, both order-unaware and order-aware, as well as graded measures, gaining a holistic view of how we approach this. Of course, there are plenty of other things that we can look at in order to evaluate the retrieval mechanism of a RAG pipeline, such as latency per query or context tokens sent. Nonetheless, the measures I went over in these posts cover the fundamentals for evaluating retrieval performance.

This allows us to quantify, evaluate, and ultimately improve the performance of the retrieval mechanism, paving the way for building an effective RAG pipeline that produces meaningful answers, grounded in the documents of our choice.
