says that “any sufficiently advanced technology is indistinguishable from magic”. That’s exactly how a lot of today’s AI frameworks feel. Tools like GitHub Copilot, Claude Desktop, OpenAI Operator, and Perplexity Comet are automating everyday tasks that would’ve seemed impossible to automate just five years ago. What’s even more remarkable is that with just a few lines of code, we can build our own sophisticated AI tools: ones that search through files, browse the web, click links, and even make purchases. It really does feel like magic.

Although I genuinely believe in data wizards, I don’t believe in magic. I find it exciting (and often helpful) to understand how things are actually built and what’s happening under the hood. That’s why I’ve decided to share a series of posts on agentic AI design concepts that’ll help you understand how all these magical tools actually work.

To gain a deep understanding, we’ll build a multi-AI agent system from scratch. We’ll avoid using frameworks like CrewAI or smolagents and instead work directly with the foundation model API. Along the way, we’ll explore the fundamental agentic design patterns: reflection, tool use, planning, and multi-agent setups. Then, we’ll combine all this knowledge to build a multi-AI agent system that can answer complex data-related questions.

As Richard Feynman put it, “What I cannot create, I do not understand.” So let’s start building! In this article, we’ll focus on the reflection design pattern. But first, let’s figure out what exactly reflection is.

What reflection is

Let’s reflect on how we (humans) usually work on tasks. Imagine I need to share the results of a recent feature launch with my PM. I’ll likely put together a quick draft and then read it once or twice from beginning to end, making sure that all parts are consistent, there’s enough information, and there are no typos.

Or let’s take another example: writing a SQL query. I’ll either write it step by step, checking the intermediate results along the way, or (if it’s simple enough) I’ll draft it all at once, execute it, look at the result (checking for errors or whether the result matches my expectations), and then tweak the query based on that feedback. I might rerun it, check the result, and iterate until it’s right.

So we rarely write long texts from top to bottom in one go. We usually circle back, review, and tweak as we go. These feedback loops are what help us improve the quality of our work.

LLMs use a different approach. If you ask an LLM a question, by default, it’ll generate an answer token by token, and the LLM won’t be able to review its result and fix any issues. But in an agentic AI setup, we can create feedback loops for LLMs too, either by asking the LLM to review and improve its own answer or by sharing external feedback with it (like the results of a SQL execution). And that’s the whole point of reflection. It sounds pretty simple, but it can yield significantly better results.
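To make the feedback loop concrete, here is a minimal sketch of it in plain Python. The names reflect, generate, critique and refine are hypothetical placeholders for LLM calls (they don't come from any library), and the toy functions below only imitate what a model might return.

```python
from typing import Callable, Optional

def reflect(task: str,
            generate: Callable[[str], str],
            critique: Callable[[str, str], Optional[str]],
            refine: Callable[[str, str, str], str],
            max_rounds: int = 2) -> str:
    """Draft an answer, then review and revise it up to max_rounds times."""
    answer = generate(task)
    for _ in range(max_rounds):
        feedback = critique(task, answer)  # None means "no issues found"
        if feedback is None:
            break
        answer = refine(task, answer, feedback)
    return answer

# Toy stand-ins for LLM calls (hypothetical, for illustration only)
def toy_generate(task):
    return 'select count() from flight_data'

def toy_critique(task, answer):
    if 'format' in answer.lower():
        return None
    return 'Output format is missing'

def toy_refine(task, answer, feedback):
    return answer + ' format TabSeparatedWithNames'

print(reflect('How many flights are there?', toy_generate, toy_critique, toy_refine))
```

The critique step can be the same model reviewing its own draft or an external signal such as a database error; the loop itself stays the same.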

There’s a substantial body of research showing the benefits of reflection:

- In “Reflexion: Language Agents with Verbal Reinforcement Learning”, Shinn et al. (2023) achieved 91% pass@1 accuracy on the HumanEval coding benchmark, surpassing the previous state-of-the-art GPT-4, which scored just 80%. They also found that Reflexion significantly outperforms all baseline approaches on the HotPotQA benchmark (a Wikipedia-based Q&A dataset that challenges agents to parse content and reason over multiple supporting documents).

Reflection is especially impactful in agentic systems because it can be used to course-correct at many steps of the process:

- When a user asks a question, the LLM can use reflection to evaluate whether the request is feasible.

- When the LLM puts together an initial plan, it can use reflection to double-check whether the plan makes sense and can help achieve the goal.

- After each execution step or tool call, the agent can evaluate whether it’s on track and whether it’s worth adjusting the plan.

- When the plan is fully executed, the agent can reflect to see whether it has actually accomplished the goal and solved the task.

It’s clear that reflection can significantly improve accuracy. However, there are trade-offs worth discussing. Reflection might require multiple additional calls to the LLM and potentially other systems, which can lead to increased latency and costs. So in business cases, it’s worth considering whether the quality improvements justify the expenses and delays in the user flow.
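A rough back-of-the-envelope estimate can help with that decision. The sketch below assumes each reflection round adds one more LLM call of about the same size as the first one, plus an optional external step such as a database round trip. The function name and all numbers are hypothetical.

```python
def reflection_overhead(base_latency_s: float, base_cost_usd: float,
                        reflection_rounds: int,
                        extra_latency_s: float = 0.0) -> dict:
    """Estimate per-request latency and cost when every reflection round
    adds one more LLM call of roughly the same size as the first one."""
    calls = 1 + reflection_rounds
    return {
        'llm_calls': calls,
        'latency_s': calls * base_latency_s + reflection_rounds * extra_latency_s,
        'cost_usd': calls * base_cost_usd,
    }

# Hypothetical numbers: 2 s and $0.002 per LLM call, 0.5 s per database round trip
estimate = reflection_overhead(2.0, 0.002, reflection_rounds=1, extra_latency_s=0.5)
print(estimate)
```

With these made-up inputs, a single reflection round doubles the number of LLM calls and more than doubles the latency, which is the kind of figure worth weighing against the expected accuracy gain.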

Reflection in frameworks

Since there’s no doubt that reflection brings value to AI agents, it’s widely used in popular frameworks. Let’s look at some examples.

The idea of reflection was first proposed in the paper “ReAct: Synergizing Reasoning and Acting in Language Models” by Yao et al. (2022). ReAct is a framework that interleaves stages of Reasoning (reflection through explicit thought traces) and Acting (task-relevant actions in an environment). In this framework, reasoning guides the choice of actions, and actions produce new observations that inform further reasoning. The reasoning stage itself is a combination of reflection and planning.

This framework became quite popular, so there are now several off-the-shelf implementations, such as:

- The DSPy framework by Databricks has a ReAct class,

- In LangGraph, you can use the create_react_agent function,

- Code agents in the smolagents library by HuggingFace are also based on the ReAct architecture.

Reflection from scratch

Now that we’ve learned the theory and explored existing implementations, it’s time to get our hands dirty and build something ourselves. In the ReAct approach, agents use reflection at each step, combining planning with reflection. However, to understand the impact of reflection more clearly, we’ll look at it in isolation.

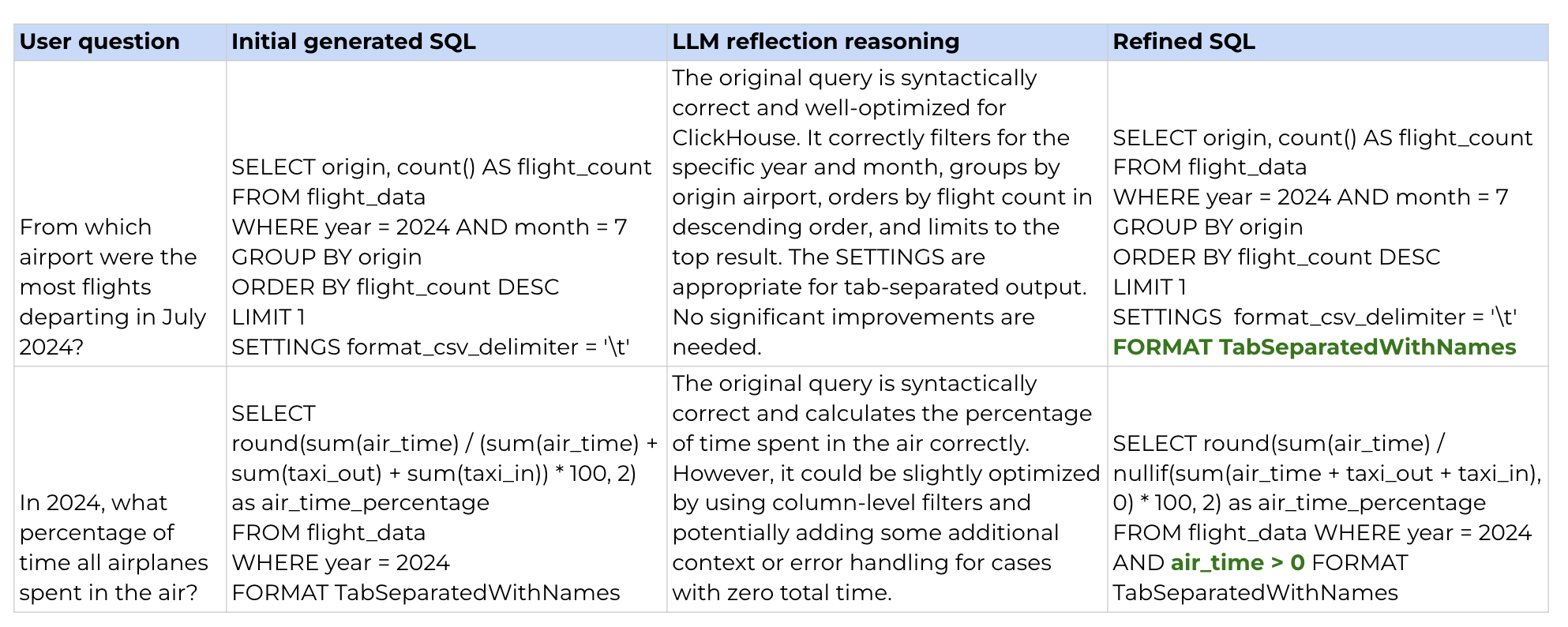

As an example, we’ll use text-to-SQL: we’ll give an LLM a question and expect it to return a valid SQL query. We’ll be working with a flight delay dataset and the ClickHouse SQL dialect.

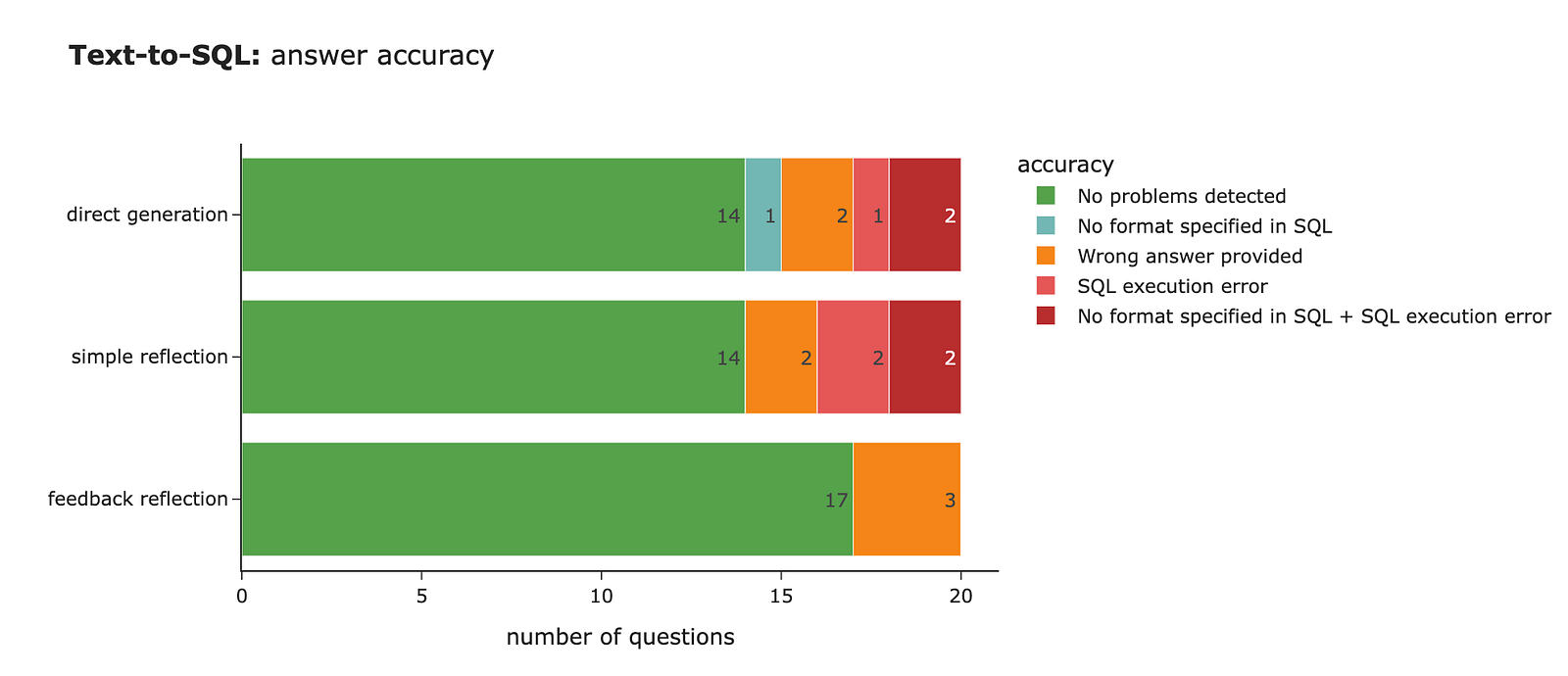

We’ll start by using direct generation without any reflection as our baseline. Then, we’ll try using reflection by asking the model to critique and improve the SQL, or by providing it with additional feedback. After that, we’ll measure the quality of our answers to see whether reflection actually leads to better results.

Direct generation

We’ll begin with the most straightforward approach, direct generation, where we ask the LLM to generate SQL that answers a user query.

    pip install anthropic

We need to specify the API key for the Anthropic API.

    import os

    # config is assumed to be a dict of settings loaded earlier (not shown here)
    os.environ['ANTHROPIC_API_KEY'] = config['ANTHROPIC_API_KEY']

The next step is to initialise the client, and we’re all set.

    import anthropic

    client = anthropic.Anthropic()

Now we can use this client to send messages to the LLM. Let’s put together a function to generate SQL based on a user query. I’ve specified the system prompt with basic instructions and detailed information about the data schema. I’ve also created a function to send the system prompt and user query to the LLM.

    base_sql_system_prompt = '''
    You are a senior SQL developer and your task is to help generate a SQL query based on user requirements.
    You are working with ClickHouse database. Specify the format (Tab Separated With Names) in the SQL query output to ensure that column names are included in the output.
    Do not use count(*) in your queries since it's a bad practice with columnar databases, prefer using count().
    Ensure that the query is syntactically correct and optimized for performance, taking into account ClickHouse specific features (i.e. that ClickHouse is a columnar database and supports functions like ARRAY JOIN, SAMPLE, etc.).
    Return only the SQL query without any additional explanations or comments.

    You will be working with flight_data table which has the following schema:

    Column Name | Data Type | Null % | Example Value | Description
    --- | --- | --- | --- | ---
    year | Int64 | 0.0 | 2024 | Year of flight
    month | Int64 | 0.0 | 1 | Month of flight (1–12)
    day_of_month | Int64 | 0.0 | 1 | Day of the month
    day_of_week | Int64 | 0.0 | 1 | Day of week (1=Monday … 7=Sunday)
    fl_date | datetime64[ns] | 0.0 | 2024-01-01 00:00:00 | Flight date (YYYY-MM-DD)
    op_unique_carrier | object | 0.0 | 9E | Unique carrier code
    op_carrier_fl_num | float64 | 0.0 | 4814.0 | Flight number for reporting airline
    origin | object | 0.0 | JFK | Origin airport code
    origin_city_name | object | 0.0 | "New York, NY" | Origin city name
    origin_state_nm | object | 0.0 | New York | Origin state name
    dest | object | 0.0 | DTW | Destination airport code
    dest_city_name | object | 0.0 | "Detroit, MI" | Destination city name
    dest_state_nm | object | 0.0 | Michigan | Destination state name
    crs_dep_time | Int64 | 0.0 | 1252 | Scheduled departure time (local, hhmm)
    dep_time | float64 | 1.31 | 1247.0 | Actual departure time (local, hhmm)
    dep_delay | float64 | 1.31 | -5.0 | Departure delay in minutes (negative if early)
    taxi_out | float64 | 1.35 | 31.0 | Taxi out time in minutes
    wheels_off | float64 | 1.35 | 1318.0 | Wheels-off time (local, hhmm)
    wheels_on | float64 | 1.38 | 1442.0 | Wheels-on time (local, hhmm)
    taxi_in | float64 | 1.38 | 7.0 | Taxi in time in minutes
    crs_arr_time | Int64 | 0.0 | 1508 | Scheduled arrival time (local, hhmm)
    arr_time | float64 | 1.38 | 1449.0 | Actual arrival time (local, hhmm)
    arr_delay | float64 | 1.61 | -19.0 | Arrival delay in minutes (negative if early)
    cancelled | int64 | 0.0 | 0 | Cancelled flight indicator (0=No, 1=Yes)
    cancellation_code | object | 98.64 | B | Reason for cancellation (if cancelled)
    diverted | int64 | 0.0 | 0 | Diverted flight indicator (0=No, 1=Yes)
    crs_elapsed_time | float64 | 0.0 | 136.0 | Scheduled elapsed time in minutes
    actual_elapsed_time | float64 | 1.61 | 122.0 | Actual elapsed time in minutes
    air_time | float64 | 1.61 | 84.0 | Flight time in minutes
    distance | float64 | 0.0 | 509.0 | Distance between origin and destination (miles)
    carrier_delay | int64 | 0.0 | 0 | Carrier-related delay in minutes
    weather_delay | int64 | 0.0 | 0 | Weather-related delay in minutes
    nas_delay | int64 | 0.0 | 0 | National Air System delay in minutes
    security_delay | int64 | 0.0 | 0 | Security delay in minutes
    late_aircraft_delay | int64 | 0.0 | 0 | Late aircraft delay in minutes
    '''

    def generate_direct_sql(rec):
        # making an LLM call
        message = client.messages.create(
            model = "claude-3-5-haiku-latest",
            # I chose a smaller model so that it's easier for us to see the impact
            max_tokens = 8192,
            system = base_sql_system_prompt,
            messages = [
                {'role': 'user', 'content': rec['question']}
            ]
        )

        sql = message.content[0].text.strip()

        # cleaning the output: drop a wrapping markdown code fence, if any
        if sql.endswith('```'):
            sql = sql[:-3]
        if sql.startswith('```sql'):
            sql = sql[6:]
        return sql.strip()

That’s it. Now let’s test our text-to-SQL solution. I’ve created a small evaluation set of 20 question-and-answer pairs that we can use to check whether our system is working well. Here’s one example:

    {
        'question': 'What was the highest speed in mph?',
        'answer': '''
            select max(distance / (air_time / 60)) as max_speed
            from flight_data
            where air_time > 0
            format TabSeparatedWithNames'''
    }

Let’s use our text-to-SQL function to generate SQL for all user queries in the test set.

    import json
    import pandas as pd
    import tqdm

    # load evaluation set
    with open('./data/flight_data_qa_pairs.json', 'r') as f:
        qa_pairs = json.load(f)
    qa_pairs_df = pd.DataFrame(qa_pairs)

    tmp = []
    # executing LLM for each question in our eval set
    for rec in tqdm.tqdm(qa_pairs_df.to_dict('records')):
        llm_sql = generate_direct_sql(rec)
        tmp.append(
            {
                'id': rec['id'],
                'llm_direct_sql': llm_sql
            }
        )

    llm_direct_df = pd.DataFrame(tmp)
    direct_result_df = qa_pairs_df.merge(llm_direct_df, on = 'id')

Now we have our answers, and the next step is to measure the quality.
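As a side note, the fence-cleaning step inside generate_direct_sql can be factored into a small helper, since the same clean-up is needed for every LLM response. The sketch below is one possible version, not the article's code: strip_code_fence is a hypothetical name, and it assumes that any markdown fence sits on its own line.

```python
def strip_code_fence(text: str) -> str:
    """Remove a wrapping markdown code fence (with or without a language tag).

    Assumes the opening and closing fences, if present, are on their own lines.
    """
    lines = text.strip().splitlines()
    if lines and lines[0].startswith('```'):
        lines = lines[1:]   # drop the opening fence line, e.g. ```sql
    if lines and lines[-1].strip() == '```':
        lines = lines[:-1]  # drop the closing fence line
    return '\n'.join(lines).strip()

print(strip_code_fence('```sql\nselect count() from flight_data\n```'))
```

Unfenced responses pass through unchanged (apart from trimming whitespace), so the helper is safe to apply to every response.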

Measuring quality

Unfortunately, there’s no single correct answer in this situation, so we can’t just compare the SQL generated by the LLM to a reference answer. We need to come up with a way to measure quality.

There are some aspects of quality that we can check with objective criteria, but to check whether the LLM returned the right answer, we’ll need to use an LLM. So I’ll use a combination of approaches:

- First, we’ll use objective criteria to check whether the correct format was specified in the SQL (we instructed the LLM to use TabSeparatedWithNames).

- Second, we can execute the generated query and see whether ClickHouse returns an execution error.

- Finally, we can create an LLM judge that compares the output from the generated query to our reference answer and checks whether they differ.

Let’s start by executing the SQL. It’s worth noting that our get_clickhouse_data function doesn’t throw an exception. Instead, it returns text explaining the error, which can be handled by the LLM later.

    import requests
    import pandas as pd
    import tqdm

    CH_HOST = 'http://localhost:8123' # default address

    # function to execute SQL query
    def get_clickhouse_data(query, host = CH_HOST, connection_timeout = 1500):
        r = requests.post(host, params = {'query': query},
            timeout = connection_timeout)
        if r.status_code == 200:
            return r.text
        else:
            return 'Database returned the following error:\n' + r.text

    # getting the results of SQL execution
    direct_result_df['llm_direct_output'] = direct_result_df['llm_direct_sql'].apply(get_clickhouse_data)
    direct_result_df['answer_output'] = direct_result_df['answer'].apply(get_clickhouse_data)

The next step is to create an LLM judge. For this, I’m using a chain-of-thought approach that prompts the LLM to provide its reasoning before giving the final answer. This gives the model time to think through the problem, which improves response quality.

    llm_judge_system_prompt = '''
    You are a senior analyst and your task is to compare two SQL query results and determine if they are equivalent.
    Focus only on the data returned by the queries, ignoring any formatting differences.
    Take into account the initial user query and information needed to answer it. For example, if user asked for the average distance, and both queries return the same average value but in one of them there's also a count of records, you should consider them equivalent, since both provide the same requested information.

    Answer with a JSON of the following structure:
    {
    'reasoning': '',
    'equivalence':
    }
    Ensure that ONLY JSON is in the output.

    You will be working with flight_data table which has the following schema:

    Column Name | Data Type | Null % | Example Value | Description
    --- | --- | --- | --- | ---
    year | Int64 | 0.0 | 2024 | Year of flight
    month | Int64 | 0.0 | 1 | Month of flight (1–12)
    day_of_month | Int64 | 0.0 | 1 | Day of the month
    day_of_week | Int64 | 0.0 | 1 | Day of week (1=Monday … 7=Sunday)
    fl_date | datetime64[ns] | 0.0 | 2024-01-01 00:00:00 | Flight date (YYYY-MM-DD)
    op_unique_carrier | object | 0.0 | 9E | Unique carrier code
    op_carrier_fl_num | float64 | 0.0 | 4814.0 | Flight number for reporting airline
    origin | object | 0.0 | JFK | Origin airport code
    origin_city_name | object | 0.0 | "New York, NY" | Origin city name
    origin_state_nm | object | 0.0 | New York | Origin state name
    dest | object | 0.0 | DTW | Destination airport code
    dest_city_name | object | 0.0 | "Detroit, MI" | Destination city name
    dest_state_nm | object | 0.0 | Michigan | Destination state name
    crs_dep_time | Int64 | 0.0 | 1252 | Scheduled departure time (local, hhmm)
    dep_time | float64 | 1.31 | 1247.0 | Actual departure time (local, hhmm)
    dep_delay | float64 | 1.31 | -5.0 | Departure delay in minutes (negative if early)
    taxi_out | float64 | 1.35 | 31.0 | Taxi out time in minutes
    wheels_off | float64 | 1.35 | 1318.0 | Wheels-off time (local, hhmm)
    wheels_on | float64 | 1.38 | 1442.0 | Wheels-on time (local, hhmm)
    taxi_in | float64 | 1.38 | 7.0 | Taxi in time in minutes
    crs_arr_time | Int64 | 0.0 | 1508 | Scheduled arrival time (local, hhmm)
    arr_time | float64 | 1.38 | 1449.0 | Actual arrival time (local, hhmm)
    arr_delay | float64 | 1.61 | -19.0 | Arrival delay in minutes (negative if early)
    cancelled | int64 | 0.0 | 0 | Cancelled flight indicator (0=No, 1=Yes)
    cancellation_code | object | 98.64 | B | Reason for cancellation (if cancelled)
    diverted | int64 | 0.0 | 0 | Diverted flight indicator (0=No, 1=Yes)
    crs_elapsed_time | float64 | 0.0 | 136.0 | Scheduled elapsed time in minutes
    actual_elapsed_time | float64 | 1.61 | 122.0 | Actual elapsed time in minutes
    air_time | float64 | 1.61 | 84.0 | Flight time in minutes
    distance | float64 | 0.0 | 509.0 | Distance between origin and destination (miles)
    carrier_delay | int64 | 0.0 | 0 | Carrier-related delay in minutes
    weather_delay | int64 | 0.0 | 0 | Weather-related delay in minutes
    nas_delay | int64 | 0.0 | 0 | National Air System delay in minutes
    security_delay | int64 | 0.0 | 0 | Security delay in minutes
    late_aircraft_delay | int64 | 0.0 | 0 | Late aircraft delay in minutes
    '''

    llm_judge_user_prompt_template = '''
    Here is the initial user query:
    {user_query}

    Here is the SQL query generated by the first analyst:
    SQL:
    {sql1}

    Database output:
    {result1}

    Here is the SQL query generated by the second analyst:
    SQL:
    {sql2}

    Database output:
    {result2}
    '''

    def llm_judge(rec, field_to_check):
        # construct the user prompt
        user_prompt = llm_judge_user_prompt_template.format(
            user_query = rec['question'],
            sql1 = rec['answer'],
            result1 = rec['answer_output'],
            sql2 = rec[field_to_check + '_sql'],
            result2 = rec[field_to_check + '_output']
        )

        # make an LLM call
        message = client.messages.create(
            model = "claude-sonnet-4-5",
            max_tokens = 8192,
            temperature = 0.1,
            system = llm_judge_system_prompt,
            messages = [
                {'role': 'user', 'content': user_prompt}
            ]
        )
        data = message.content[0].text

        # strip markdown code blocks
        data = data.strip()
        if data.startswith('```json'):
            data = data[7:]
        elif data.startswith('```'):
            data = data[3:]
        if data.endswith('```'):
            data = data[:-3]
        data = data.strip()
        return json.loads(data)

Now, let’s run the LLM judge to get the results.

    tmp = []
    for rec in tqdm.tqdm(direct_result_df.to_dict('records')):
        try:
            judgment = llm_judge(rec, 'llm_direct')
        except Exception as e:
            # skip records where the judge call or JSON parsing failed
            print(f"Error processing record {rec['id']}: {e}")
            continue
        tmp.append(
            {
                'id': rec['id'],
                'llm_judge_reasoning': judgment['reasoning'],
                'llm_judge_equivalence': judgment['equivalence']
            }
        )

    judge_df = pd.DataFrame(tmp)
    direct_result_df = direct_result_df.merge(judge_df, on = 'id')

Let’s look at one example to see how the LLM judge works.

    # user query
    In 2024, what percentage of time all airplanes spent in the air?

    # correct answer
    select (sum(air_time) / sum(actual_elapsed_time)) * 100 as percentage_in_air
    where year = 2024
    from flight_data
    format TabSeparatedWithNames

    percentage_in_air
    81.43582596894757

    # generated by LLM answer
    SELECT
        round(sum(air_time) / (sum(air_time) + sum(taxi_out) + sum(taxi_in)) * 100, 2) as air_time_percentage
    FROM flight_data
    WHERE year = 2024
    FORMAT TabSeparatedWithNames

    air_time_percentage
    81.39

    # LLM judge response
    {
      'reasoning': 'Both queries calculate the percentage of time airplanes
        spent in the air, but use different denominators. The first query
        uses actual_elapsed_time (which includes air_time + taxi_out + taxi_in
        + any ground delays), while the second uses only (air_time + taxi_out
        + taxi_in). The second query's approach is more accurate for answering
        "time airplanes spent in the air" as it excludes ground delays.
        However, the results are very close (81.44% vs 81.39%), suggesting minimal
        impact. These are materially different approaches that happen to yield
        similar results',
      'equivalence': FALSE
    }

The reasoning makes sense, so we can trust our judge. Now, let’s check all LLM-generated queries.

    def get_llm_accuracy(sql, output, equivalence):
        problems = []
        # objective check: the output format must be specified
        if 'format tabseparatedwithnames' not in sql.lower():
            problems.append('No format specified in SQL')
        # objective check: the database must not return an error
        if 'Database returned the following error' in output:
            problems.append('SQL execution error')
        # LLM judge verdict (only meaningful if the query executed)
        if not equivalence and ('SQL execution error' not in problems):
            problems.append('Incorrect answer provided')
        if len(problems) == 0:
            return 'No problems detected'
        else:
            return ' + '.join(problems)

    direct_result_df['llm_direct_sql_quality_heuristics'] = direct_result_df.apply(
        lambda row: get_llm_accuracy(row['llm_direct_sql'], row['llm_direct_output'], row['llm_judge_equivalence']), axis=1)

The LLM returned the correct answer in 70% of cases, which isn’t bad. But there’s definitely room for improvement, as it often either provides the wrong answer or fails to specify the format correctly (sometimes causing SQL execution errors).
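One way to get from per-query labels to a single accuracy figure is sketched below, using only the standard library. The summarise_quality helper is a hypothetical name, and the breakdown of failure types in the sample data is made up for illustration; only the 14-out-of-20 total matches the result above.

```python
from collections import Counter

def summarise_quality(labels):
    """Count each quality label and compute the share of clean answers."""
    counts = Counter(labels)
    accuracy = counts['No problems detected'] / len(labels) if labels else 0.0
    return counts, accuracy

# Hypothetical labels for a 20-question eval set (illustrative breakdown)
labels = (['No problems detected'] * 14
          + ['Incorrect answer provided'] * 4
          + ['No format specified in SQL + SQL execution error'] * 2)

counts, accuracy = summarise_quality(labels)
print(f'{accuracy:.0%}')
for label, n in counts.most_common():
    print(n, label)
```

Looking at the counts per label, rather than accuracy alone, shows whether errors are mostly formatting issues or wrong answers, which matters when deciding what kind of feedback to give the model.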

Adding a reflection step

To improve the quality of our solution, let’s try adding a reflection step where we ask the model to review and refine its answer.

For a reflection call, I’ll keep the same system prompt since it contains all the necessary information about SQL and the data schema. But I’ll tweak the user message to share the initial user query and the generated SQL, asking the LLM to critique and improve it.

    simple_reflection_user_prompt_template = '''
    Your task is to assess the SQL query generated by another analyst and propose improvements if necessary.
    Check whether the query is syntactically correct and optimized for performance.
    Pay attention to nuances in data (especially time stamps types, whether to use total elapsed time or time in the air, etc).
    Ensure that the query answers the initial user question accurately.
    As the result return the following JSON:
    {{
    'reasoning': '',
    'refined_sql': ''
    }}
    Ensure that ONLY JSON is in the output and nothing else. Ensure that the output JSON is valid.

    Here is the initial user query:
    {user_query}

    Here is the SQL query generated by another analyst:
    {sql}
    '''

    def simple_reflection(rec) -> dict:
        # constructing a user prompt
        user_prompt = simple_reflection_user_prompt_template.format(
            user_query = rec['question'],
            sql = rec['llm_direct_sql']
        )

        # making an LLM call
        message = client.messages.create(
            model = "claude-3-5-haiku-latest",
            max_tokens = 8192,
            system = base_sql_system_prompt,
            messages = [
                {'role': 'user', 'content': user_prompt}
            ]
        )
        data = message.content[0].text

        # strip markdown code blocks
        data = data.strip()
        if data.startswith('```json'):
            data = data[7:]
        elif data.startswith('```'):
            data = data[3:]
        if data.endswith('```'):
            data = data[:-3]
        data = data.strip()
        # newlines inside the SQL string would break JSON parsing
        return json.loads(data.replace('\n', ' '))

Let’s refine the queries with reflection and measure the accuracy. We don’t see much improvement in the final quality. We’re still at 70% correct answers.

Let’s look at specific examples to understand what happened. First, there are a couple of cases where the LLM managed to fix the problem, either by correcting the format or by adding missing logic to handle zero values.

However, there are also cases where the LLM overcomplicated the answer. The initial SQL was correct (matching the golden set answer), but then the LLM decided to ‘improve’ it. Some of these improvements are reasonable (e.g., accounting for nulls or excluding cancelled flights). Still, for some reason, it decided to use ClickHouse sampling, even though we don’t have much data and our table doesn’t support sampling. As a result, the refined query returned an execution error: Database returned the following error: Code: 141. DB::Exception: Storage default.flight_data doesn't support sampling. (SAMPLING_NOT_SUPPORTED).
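One possible safeguard against this kind of regression (not something used in this article's experiment) is to keep the original query whenever the refined one fails to execute but the original ran fine. The sketch below assumes an executor that returns error text with a known prefix, as get_clickhouse_data does; pick_sql and fake_run are hypothetical names, and fake_run merely imitates the database.

```python
ERROR_PREFIX = 'Database returned the following error'

def pick_sql(original_sql, refined_sql, run_query):
    """Prefer the refined query unless it fails where the original succeeded."""
    original_ok = not run_query(original_sql).startswith(ERROR_PREFIX)
    refined_ok = not run_query(refined_sql).startswith(ERROR_PREFIX)
    if original_ok and not refined_ok:
        return original_sql
    return refined_sql

# Toy executor standing in for the database (hypothetical behaviour)
def fake_run(sql):
    if 'sample' in sql.lower():
        return ERROR_PREFIX + ':\nCode: 141. Sampling is not supported.'
    return 'max_speed\n600\n'

print(pick_sql('select 1', 'select 1 from t sample 0.1', fake_run))
```

This only protects against execution errors; a refined query that runs but changes the logic for the worse would still get through.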

Reflection with external feedback

Reflection didn’t improve accuracy much. This is likely because we didn’t provide any additional information that would help the model generate a better result. Let’s try sharing external feedback with the model:

- The result of our check on whether the format is specified correctly

- The output from the database (either data or an error message)

Let’s put together a prompt for this and generate a new version of the SQL.

    feedback_reflection_user_prompt_template = '''
    Your task is to assess the SQL query generated by another analyst and propose improvements if necessary.
    Check whether the query is syntactically correct and optimized for performance.
    Pay attention to nuances in data (especially time stamps types, whether to use total elapsed time or time in the air, etc).
    Ensure that the query answers the initial user question accurately.
    As the result return the following JSON:
    {{
    'reasoning': '',
    'refined_sql': ''
    }}
    Ensure that ONLY JSON is in the output and nothing else. Ensure that the output JSON is valid.

    Here is the initial user query:
    {user_query}

    Here is the SQL query generated by another analyst:
    {sql}

    Here is the database output of this query:
    {output}

    We ran an automatic check on the SQL query to check whether it has formatting issues. Here's the output:
    {formatting}
    '''

    def feedback_reflection(rec) -> dict:
        # define message for formatting
        if 'No format specified in SQL' in rec['llm_direct_sql_quality_heuristics']:
            formatting = 'SQL missing formatting. Specify "format TabSeparatedWithNames" to ensure that column names are also returned'
        else:
            formatting = 'Formatting is correct'

        # constructing a user prompt
        user_prompt = feedback_reflection_user_prompt_template.format(
            user_query = rec['question'],
            sql = rec['llm_direct_sql'],
            output = rec['llm_direct_output'],
            formatting = formatting
        )

        # making an LLM call
        message = client.messages.create(
            model = "claude-3-5-haiku-latest",
            max_tokens = 8192,
            system = base_sql_system_prompt,
            messages = [
                {'role': 'user', 'content': user_prompt}
            ]
        )
        data = message.content[0].text

        # strip markdown code blocks
        data = data.strip()
        if data.startswith('```json'):
            data = data[7:]
        elif data.startswith('```'):
            data = data[3:]
        if data.endswith('```'):
            data = data[:-3]
        data = data.strip()
        # newlines inside the SQL string would break JSON parsing
        return json.loads(data.replace('\n', ' '))

After running our accuracy measurements, we can see that accuracy has improved significantly: 17 correct answers (85% accuracy) compared to 14 (70% accuracy).

If we check the cases where the LLM fixed the issues, we can see that it was able to correct the format, address SQL execution errors, and even revise the business logic (e.g., using air time for calculating speed).

Let’s also do some error analysis to examine the cases where the LLM made mistakes. In the table below, we can see that the LLM struggled with defining certain timestamps, incorrectly calculating total time, or using total time instead of air time for speed calculations. However, some of the discrepancies are a bit tricky:

- In the last query, the time period wasn’t explicitly defined, so it’s reasonable for the LLM to use 2010–2023. I wouldn’t consider this an error, and I’d adjust the evaluation instead.

- Another example is how to define airline speed: avg(distance/time) or sum(distance)/sum(time). Both options are valid since nothing was specified in the user query or system prompt (assuming we don’t have a predefined calculation methodology).
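The two speed definitions really do give different numbers, which is why the judge can flag them as non-equivalent. A small worked example with two made-up flights shows the gap:

```python
# Two hypothetical flights: (distance in miles, air time in hours)
flights = [(100.0, 1.0), (600.0, 2.0)]

# avg(distance / time): every flight counts equally
avg_of_ratios = sum(d / t for d, t in flights) / len(flights)

# sum(distance) / sum(time): longer flights weigh more
ratio_of_sums = sum(d for d, _ in flights) / sum(t for _, t in flights)

print(avg_of_ratios)            # (100 + 300) / 2 = 200.0
print(round(ratio_of_sums, 2))  # 700 / 3 = 233.33
```

The first treats each flight as one observation, while the second is effectively a time-weighted average, so the choice depends on what "speed" is supposed to mean in the business context.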

Overall, I think we achieved a pretty good result. Our final 85% accuracy represents a significant 15 percentage point improvement. You could potentially go beyond one iteration and run 2–3 rounds of reflection, but it’s worth assessing when you hit diminishing returns in your specific case, since each iteration comes with increased cost and latency.
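A simple way to reason about diminishing returns is to measure accuracy after each round on the eval set and stop at the first round whose gain falls below a threshold. The sketch below illustrates this; rounds_worth_running and the 5-point threshold are hypothetical, and only the first two accuracy values (70% and 85%) come from the experiment above, while the later ones are invented.

```python
def rounds_worth_running(accuracy_by_round, min_gain=0.05):
    """Return how many reflection rounds to keep: stop at the first round
    whose accuracy gain over the previous one is below min_gain."""
    rounds = 0
    for prev, curr in zip(accuracy_by_round, accuracy_by_round[1:]):
        if curr - prev < min_gain:
            break
        rounds += 1
    return rounds

# Index 0 is the baseline without reflection; values after 0.85 are hypothetical
print(rounds_worth_running([0.70, 0.85, 0.87, 0.87]))  # 1
```

With a 20-question eval set, one question is worth 5 percentage points, so small differences between rounds may be noise; a larger eval set would make this comparison more reliable.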

You can find the full code on GitHub.

Summary

It’s time to wrap things up. In this article, we started our journey into understanding how the magic of agentic AI systems works. To figure it out, we’ll implement a multi-agent text-to-data tool using only API calls to foundation models. Along the way, we’ll walk through the key design patterns step by step: starting today with reflection, and moving on to tool use, planning, and multi-agent coordination.

In this article, we started with the most fundamental pattern: reflection. Reflection is at the core of any agentic flow, since the LLM needs to reflect on its progress toward achieving the end goal.

Reflection is a relatively simple pattern. We simply ask the same or a different model to analyse the result and attempt to improve it. As we learned in practice, sharing external feedback with the model (like results from static checks or database output) significantly improves accuracy. Multiple research studies and our own experience with the text-to-SQL agent demonstrate the benefits of reflection. However, these accuracy gains come at a cost: more tokens spent and higher latency due to multiple API calls.

Thank you for reading. I hope this article was insightful. Remember Einstein’s advice: “The important thing is not to stop questioning. Curiosity has its own reason for existing.” May your curiosity lead you to your next great insight.

Reference

This article is inspired by the “Agentic AI” course by Andrew Ng from DeepLearning.AI.