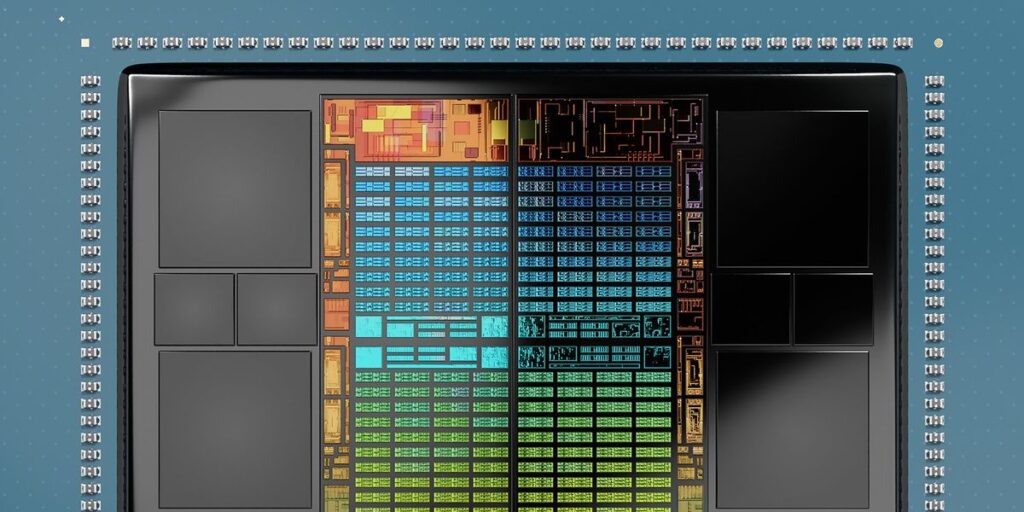

Peek inside the package of AMD’s or Nvidia’s most advanced AI products and you’ll find a familiar arrangement: The GPU is flanked on two sides by high-bandwidth memory (HBM), the most advanced memory chips available. These memory chips are placed as close as possible to the computing chips they serve in order to cut down on the biggest bottleneck in AI computing: the energy and delay in getting billions of bits per second from memory into logic. But what if you could bring computing and memory even closer together by stacking the HBM on top of the GPU?

Imec recently explored this scenario using advanced thermal simulations, and the answer, delivered in December at the 2025 IEEE International Electron Devices Meeting (IEDM), was a bit grim. 3D stacking doubles the operating temperature inside the GPU, rendering it inoperable. But the team, led by Imec’s James Myers, didn’t just give up. They identified several engineering optimizations that ultimately could whittle down the temperature difference to nearly zero.

Imec started with a thermal simulation of a GPU and four HBM dies as you’d find them today, inside what’s called a 2.5D package. That is, both the GPU and the HBM sit on a substrate called an interposer, with minimal distance between them. The two types of chips are linked by thousands of micrometer-scale copper interconnects built into the interposer’s surface. In this configuration, the model GPU consumes 414 watts and reaches a peak temperature of just under 70 °C, which is typical for a processor. The memory chips consume an additional 40 W or so and get somewhat less hot. The heat is removed from the top of the package by the kind of liquid cooling that’s become common in new AI data centers.

“While this approach is currently used, it doesn’t scale well for the future, especially since it blocks two sides of the GPU, limiting future GPU-to-GPU connections inside the package,” Yukai Chen, a senior researcher at Imec, told engineers at IEDM. In contrast, “the 3D approach leads to higher bandwidth, lower latency… the most important improvement is the package footprint.”

Unfortunately, as Chen and his colleagues found, the most straightforward version of stacking (simply putting the HBM chips on top of the GPU and adding a block of blank silicon to fill in a gap at the center) shot temperatures in the GPU up to a scorching 140 °C, well past a typical GPU’s 80 °C limit.

System Technology Co-optimization

The Imec team set about trying a number of technology and system optimizations aimed at lowering the temperature. The first thing they tried was to throw out a layer of silicon that was now redundant. To understand why, you have to first get a grip on what HBM really is.

This type of memory is a stack of as many as 12 high-density DRAM dies. Each has been thinned down to tens of micrometers and is shot through with vertical connections. These thinned dies are stacked one atop another and connected by tiny balls of solder, and this stack of memory is vertically connected to another piece of silicon, called the base die. The base die is a logic chip designed to multiplex the data, that is, to pack it into the limited number of wires that can fit across the millimeter-scale gap to the GPU.

But with the HBM now on top of the GPU, there’s no need for such a data pump. Bits can flow directly into the processor without regard for how many wires happen to fit along the side of the chip. Of course, this change means moving the memory control circuits from the base die into the GPU and therefore changing the processor’s floorplan, says Myers. But there should be ample room, he suggests, because the GPU will no longer need the circuits used to demultiplex incoming memory data.
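To make the multiplexing idea concrete, here is a minimal sketch of packing a wide data word onto a narrower set of wires and unpacking it on the other side. The function names and the 16-bit and 4-wire widths are hypothetical values chosen for illustration; they are not real HBM interface parameters and not Imec's design.

```python
def multiplex(word_bits, wires):
    """Split a wide word into successive beats of `wires` bits each."""
    if len(word_bits) % wires != 0:
        raise ValueError("word width must be a multiple of the wire count")
    return [word_bits[i:i + wires] for i in range(0, len(word_bits), wires)]


def demultiplex(beats):
    """Reassemble the original word from the received beats."""
    return [bit for beat in beats for bit in beat]


# Hypothetical 16-bit word read from the memory stack.
word = [1, 0, 1, 1, 0, 0, 1, 0, 1, 1, 1, 0, 0, 1, 0, 1]

# Side-by-side case: only a few wires fit across the gap (assumed 4),
# so the word needs several transfer cycles.
narrow_beats = multiplex(word, wires=4)

# Stacked case: assume enough vertical connections for the whole word,
# so it moves in one cycle and no packing step is needed.
wide_beats = multiplex(word, wires=16)

print(len(narrow_beats), "cycles over 4 wires")
print(len(wide_beats), "cycle over 16 wires")
assert demultiplex(narrow_beats) == word
```

The fewer wires available, the more cycles each word takes, which is why removing the wire limit matters for bandwidth.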

Cutting out this memory middleman cooled things down by only a little less than 4 °C. But, importantly, it should massively increase the bandwidth between the memory and the processor, which is important for another optimization the team tried: slowing down the GPU.

That might seem contrary to the whole purpose of better AI computing, but in this case it’s an advantage. Large language models are what are called “memory bound” problems. That is, memory bandwidth is the main limiting factor. But Myers’ team estimated 3D stacking HBM on the GPU would boost bandwidth fourfold. With that added headroom, even slowing the GPU’s clock by 50 percent still leads to a performance win, while cooling everything down by more than 20 °C. In practice, the processor might not need to be slowed down quite that much. Increasing the clock frequency to 70 percent led to a GPU that was only 1.7 °C hotter, Myers says.
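One way to see why a slower clock can still be a net win is the standard roofline model, in which attainable throughput is the smaller of peak compute and memory bandwidth times arithmetic intensity. The sketch below is an illustration, not Imec's simulation: the fourfold bandwidth gain and the 50 and 70 percent clock settings come from the article, while the normalized units, the arithmetic-intensity values, and the assumption that peak compute scales linearly with clock are hypothetical.

```python
def attainable(peak_compute, bandwidth, intensity):
    """Roofline model: throughput is capped by compute or by memory traffic."""
    return min(peak_compute, bandwidth * intensity)


def speedup(clock_fraction, bandwidth_gain, intensity):
    """Throughput of the stacked design relative to a normalized baseline.

    Baseline: peak compute = 1.0 and bandwidth = 1.0 (arbitrary units).
    Assumption: peak compute scales linearly with clock frequency.
    """
    baseline = attainable(1.0, 1.0, intensity)
    stacked = attainable(clock_fraction, bandwidth_gain, intensity)
    return stacked / baseline


BANDWIDTH_GAIN = 4.0  # fourfold estimate reported in the article

# Hypothetical arithmetic intensities (operations per byte, normalized).
for intensity in (0.1, 0.2, 2.0):
    for clock in (0.5, 0.7):
        s = speedup(clock, BANDWIDTH_GAIN, intensity)
        print(f"intensity={intensity:<4} clock={clock:.0%} speedup={s:.2f}x")
```

With the low, memory-bound intensities, the stacked design comes out ahead even at half clock. With the high intensity, the workload is compute bound and the slower clock is a loss, so the benefit depends on the workload being memory bound.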

Optimized HBM

Another big drop in temperature came from making the HBM stack and the area around it more thermally conductive. That included merging the four stacks into two wider stacks, thereby eliminating a heat-trapping region; thinning out the top (usually thicker) die of the stack; and filling in more of the space around the HBM with blank pieces of silicon to conduct more heat.

With all of that, the stack now operated at about 88 °C. One final optimization brought things back to near 70 °C. Typically, some 95 percent of a chip’s heat is removed from the top of the package, where in this case water carries the heat away. But adding similar cooling to the bottom as well drove the stacked chips down a final 17 °C.
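The reported figures can be tallied in a few lines. This is only bookkeeping on the numbers quoted in the article, with rounded values where the article says "just under", "a little less than", or "more than". The final residual assumes the individual savings add linearly and that the 88 °C figure includes the 50 percent clock setting, neither of which the article states.

```python
# Temperatures and drops as quoted in the article (degrees Celsius, rounded).
BASELINE_2_5D_C = 70.0      # "just under 70" for the 2.5D package
NAIVE_3D_C = 140.0          # HBM stacked directly on the GPU
GPU_LIMIT_C = 80.0          # typical GPU limit cited in the article
AFTER_HBM_OPT_C = 88.0      # after the HBM and package optimizations
DOUBLE_SIDED_DROP_C = 17.0  # gain from adding bottom-side cooling

final_c = AFTER_HBM_OPT_C - DOUBLE_SIDED_DROP_C
gap_to_baseline_c = final_c - BASELINE_2_5D_C
within_limit = final_c <= GPU_LIMIT_C

# Rough attribution of the 140 -> 88 drop (assumes the savings simply add up).
BASE_DIE_DROP_C = 4.0     # "a little less than 4"
HALF_CLOCK_DROP_C = 20.0  # "more than 20" at a 50 percent clock
total_drop_c = NAIVE_3D_C - AFTER_HBM_OPT_C
unattributed_c = total_drop_c - (BASE_DIE_DROP_C + HALF_CLOCK_DROP_C)

print(f"final temperature: {final_c:.0f} C (limit {GPU_LIMIT_C:.0f} C)")
print(f"gap to 2.5D baseline: {gap_to_baseline_c:.0f} C")
print(f"drop left for HBM and package changes: about {unattributed_c:.0f} C")
```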

Though the research presented at IEDM shows it might be possible, HBM-on-GPU isn’t necessarily the best choice, Myers says. “We’re simulating other system configurations to help build confidence that this is or isn’t the best choice,” he says. “GPU-on-HBM is of interest to some in industry,” because it puts the GPU closer to the cooling. But it would likely be a more complex design, because the GPU’s power and data would have to flow vertically through the HBM to reach it.
