In the first two stories of this series [1], [2], we:

- Introduced the X-way interpretation of matrices

- Saw the physical meaning and special cases of matrix-vector multiplication

- Looked at the physical meaning of matrix-matrix multiplication

- Observed its behavior on several special cases of matrices

In this story, I want to share my thoughts about the transpose of a matrix, denoted as A^T, the operation that simply flips the content of the table around its main diagonal.

In contrast to many other operations on matrices, it is quite easy to transpose a given matrix 'A' on paper. However, the physical meaning of that operation often stays in the background. On top of that, it is not so clear why the following transpose-related formulas actually work:

- (AB)^T = B^T A^T,

- (y, Ax) = (x, A^T y),

- (A^T A)^T = A^T A.

In this story, I am going to give my interpretation of the transpose operation, which, among other things, will show why the mentioned formulas are the way they are. So let's dive in!

But first of all, let me recall the definitions that are used throughout the stories of this series:

- Matrices are denoted with uppercase (like 'A', 'B'), while vectors and scalars are denoted with lowercase (like 'x', 'y' or 'm', 'n').

- |x| – is the length of vector 'x',

- rows(A) – number of rows of matrix 'A',

- columns(A) – number of columns of matrix 'A',

- A^T – the transpose of matrix 'A',

- a^T_{i,j} – the value on the i-th row and j-th column of the transposed matrix A^T,

- (x, y) – dot product of vectors 'x' and 'y' (i.e. "x_1 y_1 + x_2 y_2 + … + x_n y_n").

Transpose vs. X-way interpretation

In part 1 of this series, "matrix-vector multiplication" [1], I introduced the X-way interpretation of matrices. Let's recall it with an example:

From there, we also remember that the left stack of the X-diagram of 'A' can be associated with the rows of matrix 'A', while its right stack can be associated with the columns.

At the same time, the values which arrive at the 2nd item from the top of the left stack are the values of the 2nd row of 'A' (highlighted in purple).

Now, if transposing a matrix is actually flipping the table around its main diagonal, it means that all the columns of 'A' become rows in 'A^T', and vice versa.

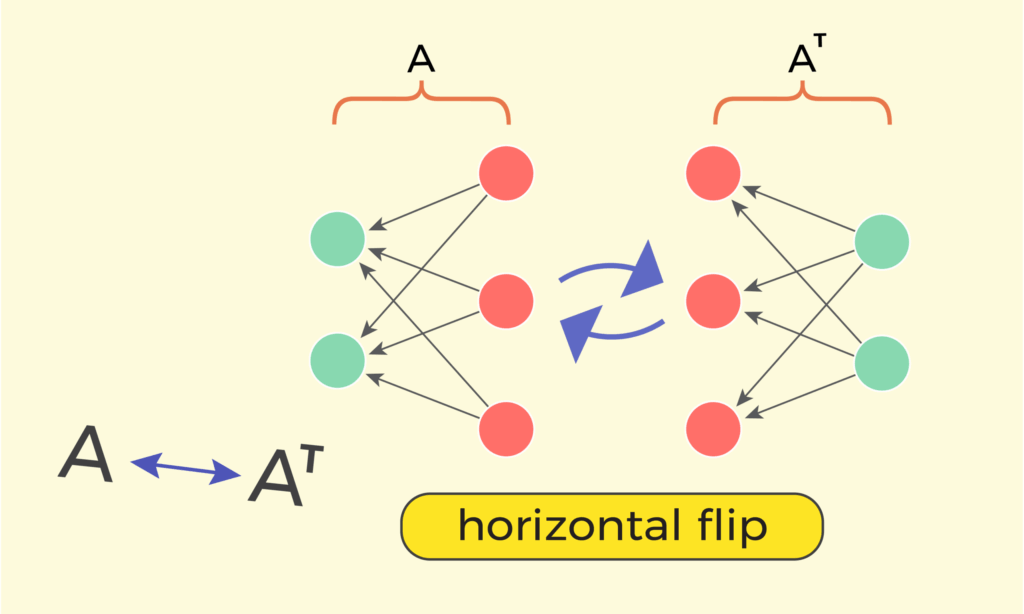

And if transposing means swapping the places of rows and columns, then perhaps we can do the same on the X-diagram? To swap rows and columns of the X-diagram, we should flip it horizontally:

Will the horizontally flipped X-diagram of 'A' represent the X-diagram of 'A^T'? We know that cell "a_{i,j}" is present in the X-diagram as the arrow starting from the j'th item of the right stack (the columns) and directed towards the i'th item of the left stack (the rows). After flipping horizontally, that same arrow connects the j'th item of the left stack with the i'th item of the right stack, so in the flipped diagram it takes the place of the cell at row 'j' and column 'i'.

Which means that the definition of transpose "a_{i,j} = a^T_{j,i}" does hold.

Concluding this chapter, we have seen that transposing matrix 'A' is the same as horizontally flipping its X-diagram.
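The index rule "a_{i,j} = a^T_{j,i}" can also be checked with a few lines of code. The following is only a minimal sketch in plain Python: matrices are assumed to be stored as lists of rows, the helper name `transpose` is chosen here for illustration, and indexes are 0-based rather than 1-based as in the text.

```python
def transpose(a):
    """Return the transpose of matrix `a`, given as a list of rows."""
    rows, cols = len(a), len(a[0])
    # Row j of the result collects column j of `a`,
    # so result[j][i] == a[i][j] for every cell.
    return [[a[i][j] for i in range(rows)] for j in range(cols)]


A = [[1, 2, 3],
     [4, 5, 6]]      # rows(A) = 2, columns(A) = 3
AT = transpose(A)    # rows(AT) = 3, columns(AT) = 2

# Every cell of A shows up in AT with its two indexes swapped.
assert all(A[i][j] == AT[j][i] for i in range(2) for j in range(3))
print(AT)  # [[1, 4], [2, 5], [3, 6]]
```

Note that the matrix does not have to be square: a 2x3 table becomes a 3x2 one.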

Transposing a sequence of matrices

Let's see how interpreting A^T as a horizontal flip of the X-diagram of 'A' helps us to uncover the physical meaning of some transpose-related formulas. Let's start with the following:

\begin{equation*}
(AB)^T = B^T A^T
\end{equation*}

which says that transposing the product "A*B" is the same as multiplying the transposes A^T and B^T, but in reverse order. Now, why does the order actually become reversed?

From part 2 of this series, "matrix-matrix multiplication" [2], we remember that the matrix multiplication "A*B" can be interpreted as a concatenation of the X-diagrams of 'A' and 'B'. Thus, having:

y = (AB)x = A*(Bx)

will force the input vector 'x' to go first through the transformation of matrix 'B', then the intermediate result will go through the transformation of matrix 'A', after which the output vector 'y' will be obtained.
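As a small numeric illustration of this two-step reading, the sketch below compares "(AB)x" with "A*(Bx)" in plain Python. The helpers `matvec` and `matmul` and the sample values are assumptions made for this example only; matrices are stored as lists of rows.

```python
def matvec(a, x):
    """Multiply matrix `a` (list of rows) by vector `x`."""
    assert len(a[0]) == len(x), "columns(a) must equal |x|"
    return [sum(a_ik * x_k for a_ik, x_k in zip(row, x)) for row in a]


def matmul(a, b):
    """Multiply matrix `a` by matrix `b`, both given as lists of rows."""
    assert len(a[0]) == len(b), "columns(a) must equal rows(b)"
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


A = [[1, 2], [3, 4], [5, 6]]   # 3x2
B = [[1, 0, 2], [-1, 3, 1]]    # 2x3
x = [1, 2, 3]

intermediate = matvec(B, x)             # 'x' goes through 'B' first
y_two_steps = matvec(A, intermediate)   # then the result goes through 'A'
y_one_step = matvec(matmul(A, B), x)    # same thing with the product A*B

assert y_two_steps == y_one_step
print(y_one_step)  # [23, 53, 83]
```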

And now the physical meaning of the formula "(AB)^T = B^T A^T" becomes clear: flipping the X-diagram of the product "A*B" horizontally will obviously flip the separate X-diagrams of 'A' and 'B', but it will also reverse their order:

In the previous story [2], we have also seen that a cell c_{i,j} of the product matrix 'C=A*B' describes all the possible ways in which x_j of the input vector 'x' can affect y_i of the output vector 'y = (AB)x'.

Now, when transposing the product "C=A*B", thus calculating matrix C^T, we want to have the mirroring effect, so that c^T_{j,i} will describe all possible ways by which y_i can affect x_j. And in order to get that, we should just flip the concatenation diagram:

Of course, this interpretation can be generalized to transposing the product of several matrices:

\begin{equation*}
(ABC)^T = C^T B^T A^T
\end{equation*}
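Both reversal formulas are easy to test numerically. Below is a minimal sketch in plain Python, assuming matrices stored as lists of rows; the helper names `transpose` and `matmul` and the sample matrices are illustrative choices, not something taken from the earlier stories. Integer entries are used so that the comparison is exact.

```python
def transpose(a):
    """Swap rows and columns of matrix `a` (list of rows)."""
    return [list(column) for column in zip(*a)]


def matmul(a, b):
    """Multiply matrix `a` by matrix `b`, both given as lists of rows."""
    assert len(a[0]) == len(b), "columns(a) must equal rows(b)"
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


A = [[1, 2], [3, 4], [5, 6]]     # 3x2
B = [[1, 0, 2], [-1, 3, 1]]      # 2x3
C = [[2, 1], [0, 1], [4, -2]]    # 3x2

# (AB)^T = B^T A^T: each factor is flipped and the order is reversed.
assert transpose(matmul(A, B)) == matmul(transpose(B), transpose(A))

# (ABC)^T = C^T B^T A^T: the same rule for a chain of three matrices.
abc_t = transpose(matmul(matmul(A, B), C))
ct_bt_at = matmul(matmul(transpose(C), transpose(B)), transpose(A))
assert abc_t == ct_bt_at
```

Keeping the original order would not even be possible with these shapes: A^T is 2x3 and B^T is 3x2, so "A^T*B^T" is a 2x2 matrix, while (AB)^T is 3x3.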

Why A^T A is always symmetrical, for any matrix A

A symmetrical matrix 'S' is an nxn square matrix, where for any indexes i, j ∈ [1..n], we have 's_{i,j} = s_{j,i}'. This means that it is symmetrical about its main diagonal.

We see that transposing a symmetrical matrix will have no effect. So, a matrix 'S' is symmetrical if and only if:

\begin{equation*}
S^T = S
\end{equation*}

Similarly, the X-diagram of a symmetrical matrix 'S' has the property that it is not changed by a horizontal flip. That is because for any arrow s_{i,j} we have an equal arrow s_{j,i} there:

In matrix analysis, we have a formula stating that for any matrix 'A' (not necessarily symmetrical), the product A^T A is always a symmetrical matrix. In other words:

\begin{equation*}
(A^T A)^T = A^T A
\end{equation*}

It is not easy to feel the correctness of this formula when looking at matrix multiplication in the traditional way. But its correctness becomes obvious when looking at matrix multiplication as the concatenation of X-diagrams:

What will happen if an arbitrary matrix 'A' is concatenated with its horizontal flip A^T? The result A^T A will be symmetrical, as after a horizontal flip, the right factor 'A' comes to the left side and is flipped, becoming A^T, while the left factor A^T comes to the right side and is also flipped, becoming 'A'.

This is why, for any matrix 'A', the product A^T A is always symmetrical.
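To see this on concrete numbers, here is a minimal sketch in plain Python. The matrix 'A' below is an arbitrary non-square example, and the helpers `transpose`, `matmul` and `is_symmetric` are names assumed for this illustration only.

```python
def transpose(a):
    """Swap rows and columns of matrix `a` (list of rows)."""
    return [list(column) for column in zip(*a)]


def matmul(a, b):
    """Multiply matrix `a` by matrix `b`, both given as lists of rows."""
    assert len(a[0]) == len(b), "columns(a) must equal rows(b)"
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


def is_symmetric(s):
    """A matrix is symmetrical if and only if S^T = S."""
    return transpose(s) == s


A = [[1, 2, 0],
     [3, -1, 4]]                  # 2x3, neither square nor symmetrical

G = matmul(transpose(A), A)       # A^T A is a 3x3 matrix
assert is_symmetric(G)
print(G)  # [[10, -1, 12], [-1, 5, -4], [12, -4, 16]]
```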

Understanding why (y, Ax) = (x, A^T y)

There is another formula in matrix analysis, stating that:

\begin{equation*}
(y, Ax) = (x, A^T y)
\end{equation*}

where "(u, v)" is the dot product of vectors 'u' and 'v':

\begin{equation*}
(u,v) = u_1 v_1 + u_2 v_2 + \dots + u_n v_n
\end{equation*}

The dot product can be calculated only for vectors of equal length. Also, the dot product is not a vector but a single number. If we try to illustrate the dot product "(u, v)" in a way similar to X-diagrams, we can draw something like this:

Now, what does the expression (y, Ax) actually mean? It is the dot product of vector 'y' with the vector "Ax" (that is, with vector 'x' after it went through the transformation of "A"). For the expression (y, Ax) to make sense, we should have:

|x| = columns(A), and

|y| = rows(A).

At first, let's calculate (y, Ax) formally. Here, every value y_i is multiplied by the i-th value of the vector Ax, denoted here as "(Ax)_i":

\begin{equation*}
(Ax)_i = a_{i,1}x_1 + a_{i,2}x_2 + \dots + a_{i,m}x_m
\end{equation*}

After one multiplication, we will have:

\begin{equation*}
y_i(Ax)_i = y_i a_{i,1}x_1 + y_i a_{i,2}x_2 + \dots + y_i a_{i,m}x_m
\end{equation*}

And after summing all the terms over "i ∈ [1, n]", we will have:

\begin{equation*}
\begin{split}
(y, Ax) = y_1(Ax)_1 + y_2(Ax)_2 + \dots + y_n(Ax)_n = \\
= y_1 a_{1,1}x_1 + y_1 a_{1,2}x_2 + &\dots + y_1 a_{1,m}x_m + \\
+ y_2 a_{2,1}x_1 + y_2 a_{2,2}x_2 + &\dots + y_2 a_{2,m}x_m + \\
&\vdots \\
+ y_n a_{n,1}x_1 + y_n a_{n,2}x_2 + &\dots + y_n a_{n,m}x_m
\end{split}
\end{equation*}

which clearly shows that in the product (y, Ax), every cell a_{i,j} of the matrix "A" participates exactly once, together with the factors y_i and x_j.
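The same expansion can be reproduced in code. The sketch below, in plain Python, computes (y, Ax) in two ways: directly, and as the sum of all triples "y_i*a_{i,j}*x_j". The helper names `dot` and `matvec` and the sample values are assumptions made for this example, and indexes are 0-based.

```python
def dot(u, v):
    """Dot product of two vectors of equal length."""
    assert len(u) == len(v), "vectors must have equal length"
    return sum(u_k * v_k for u_k, v_k in zip(u, v))


def matvec(a, x):
    """Multiply matrix `a` (list of rows) by vector `x`."""
    return [dot(row, x) for row in a]


A = [[1, 2, 3],
     [4, 5, 6]]       # n = rows(A) = 2, m = columns(A) = 3
x = [1, 0, -1]        # |x| = columns(A)
y = [2, 3]            # |y| = rows(A)

# Direct computation: first the vector Ax, then its dot product with y.
direct = dot(y, matvec(A, x))

# Expanded form: one term y_i * a_ij * x_j for every cell of A.
triples = sum(y[i] * A[i][j] * x[j]
              for i in range(len(A))
              for j in range(len(A[0])))

assert direct == triples
print(direct)  # -10
```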

Now let's move to X-diagrams. If we want to draw something like an X-diagram of vector "Ax", we can do it in the following way:

Next, if we want to draw the dot product (y, Ax), we can do it this way:

In this diagram, let's see how many ways there are to reach the left endpoint from the right one. The path from right to left can go through any arrow of the X-diagram of 'A'. If it passes through a certain arrow a_{i,j}, it will be the path composed of x_j, the arrow a_{i,j}, and y_i.

And this exactly matches the formal behavior of (y, Ax) derived a bit above, where (y, Ax) was the sum of all triples of the form "y_i*a_{i,j}*x_j". So we can conclude that when looking at (y, Ax) in the X-interpretation, it is equal to the sum of all possible paths from the right endpoint to the left one.

Now, what will happen if we flip this entire diagram horizontally?

From the algebraic perspective, the sum of all paths between the two endpoints will not change, as all participating terms remain the same. But from the geometrical perspective, the vector 'y' goes to the right part, the vector 'x' comes to the left part, and the matrix "A" is flipped horizontally; in other words, "A" is transposed. So the flipped X-diagram now corresponds to the dot product of vectors "x" and "A^T y", or has the value of (x, A^T y). We see that both (y, Ax) and (x, A^T y) represent the same sum, which proves that:

\begin{equation*}
(y, Ax) = (x, A^T y)
\end{equation*}
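A quick numeric check of this identity is sketched below in plain Python. The function `both_sides` and the other helper names are hypothetical and introduced only for this illustration; random integer matrices (with an arbitrary fixed seed) are used so that the two sides can be compared exactly.

```python
import random


def dot(u, v):
    """Dot product of two vectors of equal length."""
    assert len(u) == len(v), "vectors must have equal length"
    return sum(u_k * v_k for u_k, v_k in zip(u, v))


def matvec(a, x):
    """Multiply matrix `a` (list of rows) by vector `x`."""
    return [dot(row, x) for row in a]


def transpose(a):
    """Swap rows and columns of matrix `a` (list of rows)."""
    return [list(column) for column in zip(*a)]


def both_sides(a, x, y):
    """Return the pair ((y, Ax), (x, A^T y)) for the given inputs."""
    return dot(y, matvec(a, x)), dot(x, matvec(transpose(a), y))


# One fixed example: |x| = columns(A) = 3 and |y| = rows(A) = 2.
left, right = both_sides([[1, 2, 3], [4, 5, 6]], [1, 0, -1], [2, 3])
assert left == right

# The same check on random integer matrices of various shapes.
random.seed(0)
for trial in range(100):
    n, m = random.randint(1, 5), random.randint(1, 5)
    A = [[random.randint(-9, 9) for col in range(m)] for row in range(n)]
    x = [random.randint(-9, 9) for col in range(m)]
    y = [random.randint(-9, 9) for row in range(n)]
    left_r, right_r = both_sides(A, x, y)
    assert left_r == right_r
```

Such a test does not replace the argument above, but it is a handy sanity check when the formula is used inside larger computations.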

Conclusion

That is all I wanted to present in regard to the matrix transpose operation. I hope that the visual methods illustrated above will help all of us to gain a better grasp of various matrix operations.

In the next (and probably the last) story of this series, I will address inverting matrices, and how it can be visualized by the X-interpretation. We will see why formulas like "(AB)^{-1} = B^{-1} A^{-1}" are the way they are, and we will observe how the inverse works on several special types of matrices.

So see you in the next story!

My gratitude to:

– Asya Papyan, for the precise design of all the used illustrations (linkedin.com/in/asya-papyan-b0a1b0243/),

– Roza Galstyan, for careful review of the draft (linkedin.com/in/roza-galstyan-a54a8b352/).

If you enjoyed reading this story, feel free to follow me on LinkedIn, where, among other things, I will also post updates (linkedin.com/in/tigran-hayrapetyan-cs/).

All used images, unless otherwise noted, are designed by request of the author.

References

[1] – Understanding matrices | Part 1: matrix-vector multiplication – https://towardsdatascience.com/understanding-matrices-part-1-matrix-vector-multiplication/

[2] – Understanding matrices | Part 2: matrix-matrix multiplication – https://towardsdatascience.com/understanding-matrices-part-2-matrix-matrix-multiplication/