on the subject of algorithm-agnostic mannequin constructing. You’ll find the earlier two articles revealed on TDS beneath.

Algorithm-Agnostic Model Building with MLflow

Explainable Generic ML Pipeline with MLflow

After writing these two articles, I continued to develop the framework, and it regularly developed into one thing a lot bigger than I initially envisioned. Quite than squeezing every part into one other article, I made a decision to bundle it as an open-source Python library known as MLarena to share with fellow knowledge and ML practitioners. MLarena is an algorithm-agnostic machine studying toolkit that helps mannequin coaching, diagnostics, and optimization.

🔗You’ll find the total codebase on GitHub: MLarena repo 🧰

At its core, MLarena is carried out as a customized mlflow.pyfunc mannequin. This makes it absolutely suitable with the MLflow ecosystem, enabling sturdy experiment monitoring, mannequin versioning, and seamless deployment, no matter which underlying ML library you utilize, and allows easy migration between algorithms when crucial.

As well as, it additionally seeks to strike a stability between automation and professional perception in mannequin growth. Many instruments both summary away an excessive amount of, making it arduous to know what’s taking place beneath the hood, or require a lot boilerplate that they decelerate iteration. MLarena goals to bridge that hole: it automates routine machine studying duties utilizing greatest practices, whereas additionally offering instruments for professional customers to diagnose, interpret, and optimize their fashions extra successfully.

Within the sections that observe, we’ll have a look at how these concepts are mirrored within the toolkit’s design and stroll by sensible examples of the way it can assist real-world machine studying workflows.

1. A Light-weight Abstraction for Coaching and Analysis

One of many recurring ache factors in ML workflows is the quantity of boilerplate code required simply to get a working pipeline, particularly when switching between algorithms or frameworks. MLarena introduces a light-weight abstraction that standardizes this course of whereas remaining suitable with scikit-learn-style estimators.

Right here’s a easy instance of how the core MLPipeline object works:

from mlarena import MLPipeline, PreProcessor

# Outline the pipeline

mlpipeline_rf = MLPipeline(

mannequin = RandomForestClassifier(), # works with any sklearn model algorithm

preprocessor = PreProcessor()

)

# Match the pipeline

mlpipeline_rf.match(X_train,y_train)

# Predict on new knowledge and consider

outcomes = mlpipeline_rf.consider(X_test, y_test)This interface wraps collectively widespread preprocessing steps, mannequin coaching, and analysis. Internally, it auto-detects the duty sort (classification or regression), applies acceptable metrics, and generates a diagnostic report—all with out sacrificing flexibility in how fashions or preprocessors are outlined (extra on customization choices later).

Quite than abstracting every part away, MLarena focuses on surfacing significant defaults and insights. The consider methodology doesn’t simply return scores, it produces a full report tailor-made to the duty.

1.1 Diagnostic Reporting

For classification duties, the analysis report contains key metrics comparable to AUC, MCC, precision, recall, F1, and F-beta (when beta is specified). The visible outputs function a ROC-AUC curve (backside left), a confusion matrix (backside proper), and a precision–recall–threshold plot on the high. On this high plot, precision (blue), recall (purple), and F-beta (inexperienced, with β = 1 by default) are proven throughout totally different classification thresholds, with a vertical dotted line indicating the present threshold to spotlight the trade-off. These visualizations are helpful not just for technical diagnostics, but in addition for supporting discussions round threshold choice with area consultants (extra on threshold optimization later).

=== Classification Mannequin Analysis ===

1. Analysis Parameters

----------------------------------------

• Threshold: 0.500 (Classification cutoff)

• Beta: 1.000 (F-beta weight parameter)

2. Core Efficiency Metrics

----------------------------------------

• Accuracy: 0.805 (Total right predictions)

• AUC: 0.876 (Rating high quality)

• Log Loss: 0.464 (Confidence-weighted error)

• Precision: 0.838 (True positives / Predicted positives)

• Recall: 0.703 (True positives / Precise positives)

• F1 Rating: 0.765 (Harmonic imply of Precision & Recall)

• MCC: 0.608 (Matthews Correlation Coefficient)

3. Prediction Distribution

----------------------------------------

• Pos Fee: 0.378 (Fraction of optimistic predictions)

• Base Fee: 0.450 (Precise optimistic class price)For regression fashions, MLarena routinely adapts its analysis metrics and visualisations:

=== Regression Mannequin Analysis ===

1. Error Metrics

----------------------------------------

• RMSE: 0.460 (Root Imply Squared Error)

• MAE: 0.305 (Imply Absolute Error)

• Median AE: 0.200 (Median Absolute Error)

• NRMSE Imply: 22.4% (RMSE/imply)

• NRMSE Std: 40.2% (RMSE/std)

• NRMSE IQR: 32.0% (RMSE/IQR)

• MAPE: 17.7% (Imply Abs % Error, excl. zeros)

• SMAPE: 15.9% (Symmetric Imply Abs % Error)

2. Goodness of Match

----------------------------------------

• R²: 0.839 (Coefficient of Willpower)

• Adj. R²: 0.838 (Adjusted for # of options)

3. Enchancment over Baseline

----------------------------------------

• vs Imply: 59.8% (RMSE enchancment)

• vs Median: 60.9% (RMSE enchancment)

One hazard in speedy iteration of ML challenge is that some underlying points could go unnoticed. Due to this fact, along with the above metrics and plots, a Mannequin Analysis Diagnostics part will seem within the report when potential purple flags are detected:

Regression Diagnostics

⚠️ Pattern-to-feature ratio warnings: Alerts when n/ok < 10, indicating excessive overfitting danger

ℹ️ MAPE transparency: Reviews what number of observations had been excluded from MAPE on account of zero goal values

Classification Diagnostics

⚠️ Information leakage detection: Flags near-perfect AUC (>99%) that usually signifies leakage

⚠️ Overfitting alerts: Similar n/ok ratio warnings as regression

ℹ️ Class imbalance consciousness: Flags severely imbalanced class distributions

Under is an summary of MLarena’s analysis reviews for each classification and regression duties:

1.2 Explainability as a Constructed-In Layer

Explainability in machine studying initiatives is essential for a number of causes:

- Mannequin Choice

Explainability helps us select the very best mannequin by letting us consider the soundness of its reasoning. Even when two fashions present comparable efficiency metrics, analyzing the options they depend on with area consultants can reveal which mannequin’s logic aligns higher with real-world understanding. - Troubleshooting

Analyzing a mannequin’s reasoning is a robust troubleshooting technique for enchancment. As an illustration, by investigating why a classification mannequin confidently made a mistake, we will pinpoint the contributing options and proper its reasoning. - Mannequin Monitoring

Past typical efficiency and knowledge drift checks, monitoring mannequin reasoning is very informative. Getting alerted to vital shifts in the important thing options driving a manufacturing mannequin’s selections helps preserve its reliability and relevance. - Mannequin Implementation

Offering mannequin reasoning alongside predictions will be extremely invaluable to end-users. For instance, a customer support agent might use a churn rating together with the particular buyer options that result in that rating to higher retain a buyer.

To assist mannequin interpretability, the explain_model methodology provides you international explanations, revealing which options have probably the most vital affect in your mannequin’s predictions.

mlpipeline.explain_model(X_test)

The explain_case methodology gives native explanations for particular person circumstances, serving to us perceive how every function contributes to every particular prediction.

mlpipeline.explain_case(5)

1.3 Reproducibility and Deployment With out Further Overhead

One persistent problem in machine studying initiatives is guaranteeing that fashions are reproducible and production-ready—not simply as code, however as full artifacts that embody preprocessing, mannequin logic, and metadata. Typically, the trail from a working pocket book to a deployable mannequin entails manually wiring collectively a number of parts and remembering to trace all related configurations.

To scale back this friction, MLPipeline is carried out as a customized mlflow.pyfunc mannequin. This design selection permits all the pipeline ( together with the preprocessing steps and skilled mannequin), to be packaged as a single, transportable artifact.

When evaluating a pipeline, you possibly can allow MLflow logging by setting log_model=True:

outcomes = mlpipeline.consider(

X_test, y_test,

log_model=True # to log the pipeline with mlflow

)Behind the scenes, this triggers a collection of MLflow operations:

- Begins and manages an MLflow run

- Logs mannequin hyperparameters and analysis metrics

- Saves the whole pipeline object as a versioned artifact

- Routinely infers the mannequin signature to scale back deployment errors

This helps groups preserve experiment traceability and transfer from experimentation to deployment extra easily, with out duplicating monitoring or serialization code. The ensuing artifact is suitable with the MLflow Mannequin Registry and will be deployed by any of MLflow’s supported backends.

2. Tuning Fashions with Effectivity and Stability in Thoughts

Hyperparameter tuning is without doubt one of the most resource-intensive components of constructing machine studying fashions. Whereas search methods like grid or random search are widespread, they are often computationally costly and sometimes inefficient, particularly when utilized to giant or advanced search areas. One other massive concern in hyperparameter optimization is that it might produce unstable fashions that carry out effectively in growth however degrade in manufacturing.

To handle these points, MLarena features a tune methodology that simplifies the method of hyperparameter optimization whereas encouraging robustness and transparency. It builds on Bayesian optimization—an environment friendly search technique that adapts based mostly on earlier outcomes—and provides guardrails to keep away from widespread pitfalls like overfitting or incomplete search area protection.

2.1 Hyperparameter Optimization with Constructed-In Early Stopping and Variance Management

Right here’s an instance of the best way to run tuning utilizing LightGBM and a customized search area:

from mlarena import MLPipeline, PreProcessor

import lightgbm as lgb

lgb_param_ranges = {

'learning_rate': (0.01, 0.1),

'n_estimators': (100, 1000),

'num_leaves': (20, 100),

'max_depth': (5, 15),

'colsample_bytree': (0.6, 1.0),

'subsample': (0.6, 0.9)

}

# organising with default settings, see customization beneath

best_pipeline = MLPipeline.tune(

X_train,

y_train,

algorithm=lgb.LGBMClassifier, # works with any sklearn model algorithm

preprocessor=PreProcessor(),

param_ranges=lgb_param_ranges

)To keep away from pointless computation, the tuning course of contains assist for early stopping: you possibly can set a most variety of evaluations, and cease the method routinely if no enchancment is noticed after a specified variety of trials. This protects computation time whereas focusing the search on probably the most promising components of the search area.

best_pipeline = MLPipeline.tune(

...

max_evals=500, # most optimization iterations

early_stopping=50, # cease if no enchancment after 50 trials

n_startup_trials=5, # minimal trials earlier than early stopping kicks in

n_warmup_steps=0, # steps per trial earlier than pruning

)To make sure sturdy outcomes, MLarena applies cross-validation throughout hyperparameter tuning. Past optimizing for common efficiency, it additionally means that you can penalize excessive variance throughout folds utilizing the cv_variance_penalty parameter. That is notably invaluable in real-world eventualities the place mannequin stability will be simply as essential as uncooked accuracy.

best_pipeline = MLPipeline.tune(

...

cv=5, # variety of folds for cross-validation

cv_variance_penalty=0.3, # penalize excessive variance throughout folds

)For instance, between two fashions with similar imply AUC, the one with decrease variance throughout folds is usually extra dependable in manufacturing. Will probably be chosen by MLarena tuning on account of its higher efficient rating, which is mean_auc - std * cv_variance_penalty:

| Mannequin | Imply AUC | Std Dev | Efficient Rating |

|---|---|---|---|

| A | 0.85 | 0.02 | 0.85 – 0.02 * 0.3 (penalty) |

| B | 0.85 | 0.10 | 0.85 – 0.10 * 0.3 (penalty) |

2.2 Diagnosing Search House Design with Visible Suggestions

One other frequent bottleneck in tuning is designing an excellent search area. If the vary for a hyperparameter is simply too slender or too broad, the optimizer could waste iterations or miss high-performing areas solely.

To assist extra knowledgeable search design, MLarena features a parallel coordinates plot that visualizes how totally different hyperparameter values relate to mannequin efficiency:

- You may spot developments, comparable to which ranges of

learning_ratepersistently yield higher outcomes. - You may establish edge clustering, the place top-performing trials are bunched on the boundary of a parameter vary, usually an indication that the vary wants adjustment.

- You may see interactions throughout a number of hyperparameters, serving to refine your instinct or information additional exploration.

This type of visualization helps customers refine search areas iteratively, main to higher outcomes with fewer iterations.

best_pipeline = MLPipeline.tune(

...

# to indicate parallel coordinate plot:

visualize = True # default=True

)

2.3 Selecting the Proper Metric for the Drawback

The target of tuning isn’t all the time the identical: in some circumstances, you need to maximize AUC, in others, chances are you’ll care extra about minimizing RMSE or SMAPE. However totally different metrics additionally require totally different optimization instructions—and when mixed with cross-validation variance penalty, which both must be added to or subtracted from the CV imply relying on the optimization course, the mathematics can get tedious. 😅

MLarena simplifies this by supporting a variety of metrics for each classification and regression:

Classification metrics:

auc(default)f1accuracylog_lossmcc

Regression metrics:

rmse(default)maemedian_aesmapenrmse_mean,nrmse_iqr,nrmse_std

To modify metrics, merely move tune_metric to the tactic:

best_pipeline = MLPipeline.tune(

...

tune_metric = "f1"

)MLarena handles the remainder, routinely figuring out whether or not the metric needs to be maximized or minimized and making use of the variance penalty persistently.

3. Tackling Actual-World Preprocessing Challenges

Preprocessing is usually one of the crucial ignored steps in machine studying workflows, and likewise one of the crucial error-prone. Coping with lacking values, high-cardinality categoricals, irrelevant options, and inconsistent column naming can introduce refined bugs, degrade mannequin efficiency, or block manufacturing deployment altogether.

MLarena’s PreProcessor was designed to make this step extra sturdy and fewer advert hoc. It affords wise defaults for widespread use circumstances, whereas offering the pliability and tooling wanted for extra advanced eventualities.

Right here’s an instance of the default configuration:

from mlarena import PreProcessor

preprocessor = PreProcessor(

num_impute_strategy="median", # Numeric lacking worth imputation

cat_impute_strategy="most_frequent", # Categorical lacking worth imputation

target_encode_cols=None, # Columns for goal encoding (elective)

target_encode_smooth="auto", # Smoothing for goal encoding

drop="if_binary", # Drop technique for one-hot encoding

sanitize_feature_names=True # Clear up particular characters in column names

)

X_train_prep = preprocessor.fit_transform(X_train)

X_test_prep = preprocessor.remodel(X_test)These defaults are sometimes ample for fast iteration. However real-world datasets not often match neatly into defaults. So let’s discover a few of the extra nuanced preprocessing duties the PreProcessor helps.

3.1 Managing Excessive-Cardinality Categoricals with Goal Encoding

Excessive-cardinality categorical options pose a problem: conventional one-hot encoding may end up in tons of of sparse columns. Goal encoding affords a compact various, changing classes with smoothed averages of the goal variable. Nevertheless, tuning the smoothing parameter is hard: too little smoothing results in overfitting, whereas an excessive amount of dilutes helpful sign.

MLarena adopts the empirical Bayes-based strategy in SKLearn’s TargetEncoder to smoothing when target_encode_smooth="auto", and likewise permits customers to specify numeric values (see doc for sklearn TargetEncoder and Micci-Barrec, 2001).

preprocessor = PreProcessor(

target_encode_cols=['city'],

target_encode_smooth='auto'

)To assist information this selection, the plot_target_encoding_comparison methodology visualizes how totally different smoothing values have an effect on the encoding of uncommon classes. For instance:

PreProcessor.plot_target_encoding_comparison(

X_train, y_train,

target_encode_col='metropolis',

smooth_params=['auto', 10, 20]

)

That is particularly helpful for inspecting the impact on underrepresented classes (e.g., a metropolis like “Seattle” with solely 24 samples). The visualization exhibits that totally different smoothing parameters result in marked variations in Seattle’s encoded worth. Such clear visuals assist knowledge specialists and area consultants in having significant discussions and making knowledgeable selections on the very best encoding technique.

3.2 Figuring out and Eradicating Unhelpful Options

One other widespread problem is function overload: too many variables, not all of which contribute significant indicators. Choosing a cleaner subset can enhance each efficiency and interpretability.

The filter_feature_selection methodology helps filter out:

- Options with excessive missingness

- Options with just one distinctive worth

- Options with low mutual data with the goal

Right here’s the way it works:

filter_fs = PreProcessor.filter_feature_selection(

X_train,

y_train,

job='classification', # or 'regression'

missing_threshold=0.2, # drop options with > 20% lacking values

mi_threshold=0.05, # drop options with low mutual data

)This returns a abstract like:

Filter Characteristic Choice Abstract:

==========

Complete options analyzed: 7

1. Excessive lacking ratio (>20.0%): 0 columns

2. Single worth: 1 columns

Columns: occupation

3. Low mutual data (<0.05): 3 columns

Columns: age, tenure, occupation

Advisable drops: (3 columns in complete)The chosen options will be accessed programmatically:

selected_cols = fitler_fs['selected_cols']

X_train_selected = X_train[selected_cols]

This early filter step doesn’t exchange full function engineering or wrapper-based choice (which is on the roadmap), however helps cut back noise earlier than heavier modelling begins.

3.3 Stopping Downstream Errors with Column Identify Sanitization

When one-hot encoding is utilized to categorical options, column names can inherit particular characters, like 'age_60+' or 'income_<$30K'. These characters can break pipelines downstream, particularly throughout logging, deployment, or use with MLflow.

To scale back the danger of silent pipeline failures, MLarena routinely sanitizes function names by default:

preprocessor = PreProcessor(sanitize_feature_names=True)Characters like +, <, and % are changed with protected alternate options as proven within the desk beneath, enhancing compatibility with production-grade tooling. Customers preferring uncooked names can simply disable this habits by setting sanitize_feature_names=False.

4. Fixing On a regular basis Challenges in ML Follow

In real-world machine studying initiatives, success goes past mannequin accuracy. It usually depends upon how clearly we talk outcomes, how effectively our instruments assist stakeholder decision-making, and the way reliably our pipelines deal with imperfect knowledge. MLarena features a rising set of utilities designed to handle these sensible challenges. Under are only a few examples.

4.1 Threshold Evaluation for Classification Issues

Binary classification fashions usually output possibilities, however real-world selections require a tough threshold to separate positives from negatives. This selection impacts precision, recall, and in the end, enterprise outcomes. But in observe, thresholds are sometimes left on the default 0.5, even when that’s not aligned with area wants.

MLarena’s threshold_analysis methodology helps make this selection extra rigorous and tailor-made. We are able to:

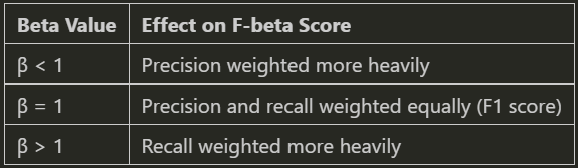

- Customise the precision-recall stability through the beta parameter within the F-beta rating

- Discover the optimum classification threshold based mostly on our enterprise targets by maximizing F-beta

- Use bootstrapping or stratified k-fold cross-validation for sturdy, dependable estimates

# Carry out threshold evaluation utilizing bootstrap methodology

outcomes = MLPipeline.threshold_analysis(

y_train, # True labels for coaching knowledge

y_pred_proba, # Predicted possibilities from mannequin

beta = 0.8, # F-beta rating parameter (weights precision greater than recall)

methodology = "bootstrap", # Use bootstrap resampling for sturdy outcomes

bootstrap_iterations=100) # Variety of bootstrap samples to generate

# make the most of the optimum threshold recognized on new knowledge

best_pipeline.consider(

X_test, y_test, beta=0.8,

threshold=outcomes['optimal_threshold']

)

This allows practitioners to tie mannequin selections extra carefully to area priorities, comparable to catching extra fraud circumstances (recall) or lowering false alarms in high quality management (precision).

4.2 Speaking with Readability By way of Visualization

Sturdy visualizations are important not only for EDA, however for participating stakeholders and validating findings. MLarena features a set of plotting utilities designed for interpretability and readability.

4.2.1 Evaluating Distributions Throughout Teams

When analyzing numerical knowledge throughout distinct classes comparable to areas, cohorts, or therapy teams, a complete understanding requires extra than simply central tendency metrics like imply or median. It’s essential to additionally grasp the information’s dispersion and establish any outliers. To handle this, the plot_box_scatter perform in Mlarena overlays boxplots with jittered scatter factors, offering wealthy distribution data inside a single, intuitive visualization.

Moreover, complementing visible insights with sturdy statistical evaluation usually proves invaluable. Due to this fact, the plotting perform optionally integrates statistical assessments comparable to ANOVA, Welch’s ANOVA, and Kruskal-Wallis, permitting us to annotate our plots with statistical take a look at outcomes, as demonstrated beneath.

import mlarena.utils.plot_utils as put

fig, ax, outcomes = put.plot_box_scatter(

knowledge=df,

x="merchandise",

y="worth",

title="Boxplot with Scatter Overlay (Demo for Crowded Information)",

point_size=2,

xlabel=" ",

stat_test="anova", # specify a statistical take a look at

show_stat_test=True

)

There are numerous methods to customise the plot — both by modifying the returned ax object or utilizing built-in perform parameters. For instance, you possibly can coloration the factors by one other variable utilizing the point_hue parameter.

fig, ax = put.plot_box_scatter(

knowledge=df,

x="group",

y="worth",

point_hue="supply", # coloration factors by supply

point_alpha=0.5,

title="Boxplot with Scatter Overlay (Demo for Level Hue)",

)

4.2.2 Visualizing Temporal Distribution

Information specialists and area consultants ceaselessly want to look at how the distribution of a steady variable evolves over time to identify essential shifts, rising developments, or anomalies.

This usually entails boilerplate duties like aggregating knowledge by desired time granularity (hourly, weekly, month-to-month, and many others.), guaranteeing right chronological order, and customizing appearances, comparable to coloring factors by a 3rd variable of curiosity. Our plot_distribution_over_time perform handles these complexities with ease.

# routinely group knowledge and format X-axis lable by specified granularity

fig, ax = put.plot_distribution_over_time(

knowledge=df,

x='timestamp',

y='heart_rate',

freq='h', # specify granularity

point_hue=None, # set a variable to paint factors if desired

title='Coronary heart Fee Distribution Over Time',

xlabel=' ',

ylabel='Coronary heart Fee (bpm)',

)

Extra demos of plotting capabilities and examples can be found within the plot_utils documentation🔗.

4.3 Information Utilities

When you’re like me, you in all probability spend numerous time cleansing and troubleshooting knowledge earlier than attending to the enjoyable components of machine studying. 😅 Actual-world knowledge is usually messy, inconsistent, and filled with surprises. That’s why MLarena features a rising assortment of data_utils capabilities to simplify and streamline our EDA and knowledge preparation course of.

4.3.1 Cleansing Up Inconsistent Date Codecs

Date columns don’t all the time arrive in clear, ISO codecs, and inconsistent casing or codecs is usually a actual headache. The transform_date_cols perform helps standardize date columns for downstream evaluation, even when values have irregular codecs like:

import mlarena.utils.data_utils as dut

df_raw = pd.DataFrame({

...

"date": ["25Aug2024", "15OCT2024", "01Dec2024"], # inconsistent casing

})

# reworked the required date columns

df_transformed = dut.transform_date_cols(df_raw, 'date', "%dpercentbpercentY")

df_transformed['date']

# 0 2024-08-25

# 1 2024-10-15

# 2 2024-12-01It routinely handles case variations and converts the column into correct datetime objects.

When you generally neglect the Python date format codes or combine them up with Spark’s, you’re not alone 😁. Simply test the perform’s docstring for a fast refresher.

?dut.transform_date_cols # test for docstringSignature:

----------

dut.transform_date_cols(

knowledge: pandas.core.body.DataFrame,

date_cols: Union[str, List[str]],

str_date_format: str = '%Ypercentmpercentd',

) -> pandas.core.body.DataFrame

Docstring:

Transforms specified columns in a Pandas DataFrame to datetime format.

Parameters

----------

knowledge : pd.DataFrame

The enter DataFrame.

date_cols : Union[str, List[str]]

A column identify or listing of column names to be reworked to dates.

str_date_format : str, default="%Ypercentmpercentd"

The string format of the dates, utilizing Python's `strftime`/`strptime` directives.

Widespread directives embody:

%d: Day of the month as a zero-padded decimal (e.g., 25)

%m: Month as a zero-padded decimal quantity (e.g., 08)

%b: Abbreviated month identify (e.g., Aug)

%B: Full month identify (e.g., August)

%Y: 4-digit yr (e.g., 2024)

%y: Two-digit yr (e.g., 24)4.3.2 Verifying Major Keys in Messy Information

Figuring out a sound main key will be difficult in real-world, messy datasets. Whereas a standard main key should inherently be distinctive throughout all rows and include no lacking values, potential key columns usually include nulls, notably within the early phases of an information pipeline.

The is_primary_key perform adopts a realistic strategy to this problem: it alerts person to any lacking values inside potential key columns after which verifies if the remaining non-null rows are uniquely identifiable.

That is helpful for:

– Information high quality evaluation: Shortly assess the completeness and uniqueness of our key fields.

– Be part of readiness: Establish dependable keys for merging datasets, even when some values are initially lacking.

– ETL validation: Confirm key constraints whereas accounting for real-world knowledge imperfections.

– Schema design: Inform sturdy database schema planning with insights derived from precise knowledge key traits.

As such, is_primary_key is especially invaluable for designing resilient knowledge pipelines in less-than-perfect knowledge environments. It helps each single and composite keys by accepting both a column identify or an inventory of columns.

df = pd.DataFrame({

# Single column main key

'id': [1, 2, 3, 4, 5],

# Column with duplicates

'class': ['A', 'B', 'A', 'B', 'C'],

# Date column with some duplicates

'date': ['2024-01-01', '2024-01-01', '2024-01-02', '2024-01-02', '2024-01-03'],

# Column with null values

'code': ['X1', None, 'X3', 'X4', 'X5'],

# Values column

'worth': [100, 200, 300, 400, 500]

})

print("nTest 1: Column with duplicates")

dut.is_primary_key(df, ['category']) # Ought to return False

print("nTest 2: Column with null values")

dut.is_primary_key(df, ['code','date']) # Ought to return TrueCheck 1: Column with duplicates

✅ There are not any lacking values in column 'class'.

ℹ️ Complete row rely after filtering out missings: 5

ℹ️ Distinctive row rely after filtering out missings: 3

❌ The column(s) 'class' don't type a main key.

Check 2: Column with null values

⚠️ There are 1 row(s) with lacking values in column 'code'.

✅ There are not any lacking values in column 'date'.

ℹ️ Complete row rely after filtering out missings: 4

ℹ️ Distinctive row rely after filtering out missings: 4

🔑 The column(s) 'code', 'date' type a main key after eradicating rows with lacking values.Past what we’ve coated, the data_utils module affords different useful utilities, together with a devoted set of three capabilities for the “Uncover → Examine → Resolve” deduplication workflow, the place is_primary_key mentioned above, serves because the preliminary step. Extra particulars can be found within the data_utils demo🔗.

And there you’ve it — an introduction to the MLarena bundle. My hope is that these instruments show as invaluable for streamlining your machine studying workflows as they’ve been for mine. That is an open-source, not-for-profit initiative. Please don’t hesitate to succeed in out when you have any questions or wish to request new options. I’d love to listen to from you! 🤗

Keep tuned, and observe me on Medium. 😁

💼LinkedIn | 😺GitHub | 🕊️Twitter/X

Except in any other case famous, all photographs are by the writer.