automotive stops abruptly. Worryingly, there is no such thing as a cease register sight. The engineers can solely make guesses as to why the automotive’s neural community turned confused. It could possibly be a tumbleweed rolling throughout the road, a automotive coming down the opposite lane or the pink billboard within the background. To seek out the true purpose, they flip to Grad-CAM [1].

Grad-CAM is an explainable AI (XAI) method that helps reveal why a convolutional neural community (CNN) made a selected resolution. The strategy produces a heatmap that highlights the areas in a picture which are an important for a prediction. For our self-driving automotive instance, this might present if the pixels from the weed, automotive or billboard brought on the automotive to cease.

Now, Grad-CAM is one in every of many XAI methods for Computer Vision. As a consequence of its pace, flexibility and reliability, it has rapidly change into some of the well-liked. It has additionally impressed many associated strategies. So, in case you are thinking about XAI, it’s price understanding precisely how this technique works. To do this, we will probably be implementing Grad-CAM from scratch utilizing Python.

Particularly, we will probably be counting on PyTorch Hooks. As you will note, these enable us to dynamically extract gradients and activations from a community throughout ahead and backwards passes. These are sensible abilities that won’t solely let you implement Grad-CAM but in addition any gradient-based XAI technique. See the total venture on GitHub.

The idea behind Grad-CAM

Earlier than we get to the code, it’s price referring to the idea behind Grad-CAM. If you’d like a deep dive, then try the video under. If you wish to study different strategies, then see this free XAI for Computer Vision course.

To summarise, when creating Grad-CAM heatmaps, we begin with a educated CNN. We then do a ahead move by this community with a single pattern picture. It will activate all convolutional layers within the community. We name these function maps ($A^okay$). They are going to be a set of 2D matrices that comprise totally different options detected within the pattern picture.

With Grad-CAM, we’re usually within the maps from the final convolutional layer of the community. Once we apply the strategy to VGG16, you will note that its ultimate layer has 512 function maps. We use these as they comprise options with probably the most detailed semantic data whereas nonetheless retaining spatial data. In different phrases, they inform us what was used for a prediction and the place within the picture it was taken from.

The issue is that these maps additionally comprise options which are necessary for different lessons. To mitigate this, we observe the method proven in Determine 1. As soon as now we have the function maps ($A^okay$), we weight them by how necessary they’re to the category of curiosity ($y_c$). We do that utilizing $a_k^c$ — the typical gradient of the rating for $y_c$ w.r.t. to the weather within the function map. We then do element-wise summation. For VGG16, you will note we go from 512 maps of 14×14 pixels to a single 14×14 map.

The gradients for a person component ($frac{partial y^c}{partial A_{ij}^okay}$) inform us how a lot the rating will change with a small change within the component. Because of this giant common gradients point out that the whole function map was necessary and will contribute extra to the ultimate heatmap. So, once we weight and sum the maps, those that comprise options for different lessons will possible contribute much less.

The ultimate steps are to use the ReLU activation perform to make sure all destructive parts could have a worth of zero. Then we upsample with interpolation so the heatmap has the identical dimensions because the pattern picture. The ultimate map is summarised by the method under. You would possibly recognise it from the Grad-CAM paper [1].

$$ L_{Grad-CAM}^c = ReLUleft( sum_{okay} a_k^c A^okay proper) $$

Grad-CAM from Scratch

Don’t fear if the idea is just not fully clear. We are going to stroll by it step-by-step as we apply the strategy from scratch. You’ll find the total venture on GitHub. To begin, now we have our imports under. These are all frequent imports for laptop imaginative and prescient issues.

import matplotlib.pyplot as plt

import numpy as np

import cv2

from PIL import Picture

import torch

import torch.nn.useful as F

from torchvision import fashions, transforms

import urllib.requestLoad pretrained mannequin from PyTorch

We’ll be making use of Grad-CAM to VGG16 pretrained on ImageNet. To assist, now we have the 2 features under. The primary will format a picture within the right approach for enter into the mannequin. The normalisation values used are the imply and commonplace deviation of the pictures in ImageNet. The 224×224 measurement can be commonplace for ImageNet fashions.

def preprocess_image(img_path):

"""Load and preprocess photographs for PyTorch fashions."""

img = Picture.open(img_path).convert("RGB")

#Transforms utilized by imagenet fashions

remodel = transforms.Compose([

transforms.Resize((224, 224)),

transforms.ToTensor(),

transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225]),

])

return remodel(img).unsqueeze(0)ImageNet has many lessons. The second perform will format the output of the mannequin so we show the lessons with the best predicted possibilities.

def display_output(output,n=5):

"""Show the highest n classes predicted by the mannequin."""

# Obtain the classes

url = "https://uncooked.githubusercontent.com/pytorch/hub/grasp/imagenet_classes.txt"

urllib.request.urlretrieve(url, "imagenet_classes.txt")

with open("imagenet_classes.txt", "r") as f:

classes = [s.strip() for s in f.readlines()]

# Present prime classes per picture

possibilities = torch.nn.useful.softmax(output[0], dim=0)

top_prob, top_catid = torch.topk(possibilities, n)

for i in vary(top_prob.measurement(0)):

print(classes[top_catid[i]], top_prob[i].merchandise())

return top_catid[0]We now load the pretrained VGG16 model (line 2), transfer it to a GPU (strains 5-8) and set it to analysis mode (line 11). You’ll be able to see a snippet of the mannequin output in Determine 2. VGG16 is product of 16 weighted layers. Right here, you possibly can see the final 2 of 13 convolutional layers and the three absolutely related layers.

# Load the pre-trained mannequin (e.g., VGG16)

mannequin = fashions.vgg16(pretrained=True)

# Set the mannequin to gpu

system = torch.system('mps' if torch.backends.mps.is_built()

else 'cuda' if torch.cuda.is_available()

else 'cpu')

mannequin.to(system)

# Set the mannequin to analysis mode

mannequin.eval()The names you see in Determine 2 are necessary. Later, we’ll use them to reference a particular layer within the community to entry its activations and gradients. Particularly, we’ll use mannequin.options[28]. That is the ultimate convolutional layer within the community. As you possibly can see within the snapshot, this layer incorporates 512 function maps.

Ahead move with pattern picture

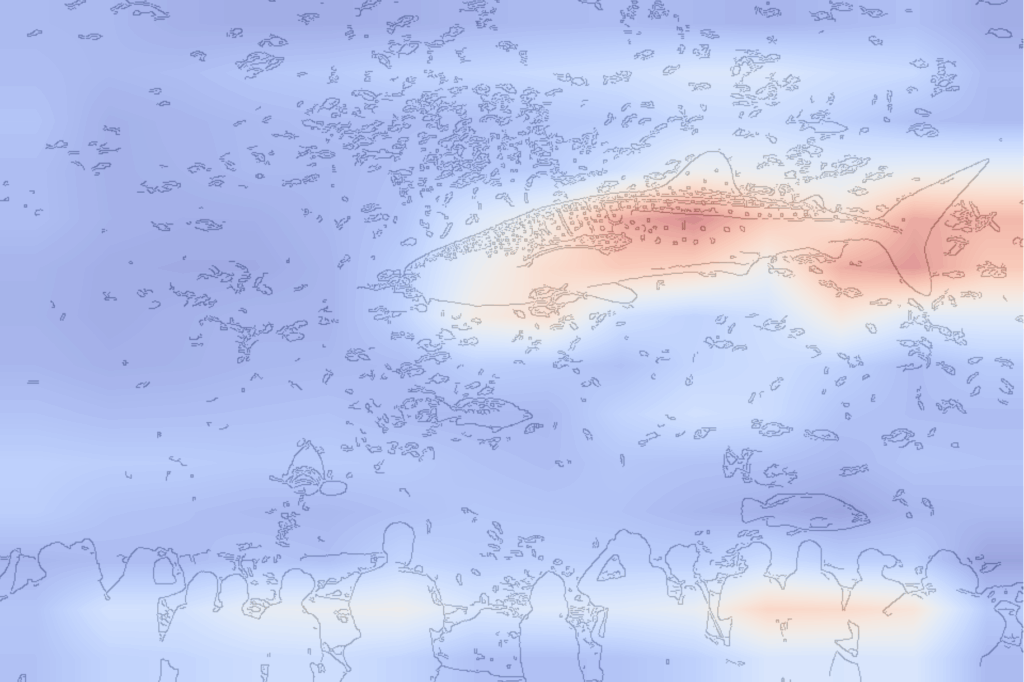

We will probably be explaining a prediction from this mannequin. To do that, we’d like a pattern picture that will probably be fed into the mannequin. We downloaded one from Wikipedia Commons (strains 2-3). We then load it (strains 5-6), crop it to have equal top and width (line 7) and show it (strains 9-10). In Determine 3, you possibly can see we’re utilizing a picture of a whale shark in an aquarium.

# Load a pattern picture from the net

img_url = "https://add.wikimedia.org/wikipedia/commons/thumb/a/a1/Male_whale_shark_at_Georgia_Aquarium.jpg/960px-Male_whale_shark_at_Georgia_Aquarium.jpg"

urllib.request.urlretrieve(img_url, "sample_image.jpg")[0]

img_path = "sample_image.jpg"

img = Picture.open(img_path).convert("RGB")

img = img.crop((320, 0, 960, 640)) # Crop to 640x640

plt.imshow(img)

plt.axis("off")

ImageNet has no devoted class for whale sharks, so it is going to be fascinating to see what the mannequin predicts. To do that, we begin by processing our picture (line 2) and shifting it to the GPU (line 3). We then do a ahead move to get a prediction (line 6) and show the highest 5 possibilities (line 7). You’ll be able to see these in Determine 4.

# Preprocess the picture

img_tensor = preprocess_image(img_path)

img_tensor = img_tensor.to(system)

# Ahead move

predictions = mannequin(img_tensor)

display_output(predictions,n=5)Given the out there lessons, these appear cheap. They’re all marine life and the highest two are sharks. Now, let’s see how we will clarify this prediction. We need to perceive what areas of the picture contribute probably the most to the best predicted class — hammerhead.

PyTorch hooks naming conventions

Grad-CAM heatmaps are created utilizing each activations from a ahead move and gradients from a backwards move. To entry these, we’ll use PyTorch hooks. These are features that let you save the inputs and outputs of a layer. We gained’t do it right here, however they even let you alter these facets. For instance, Guided Backpropagation may be utilized by guaranteeing solely constructive gradients are propagated utilizing a backwards hook.

You’ll be able to see some examples of those features under. A forwards_hook will probably be referred to as throughout a ahead move. Will probably be registered on a given module (i.e. layer). By default, the perform receives three arguments — the module, its enter and its output. Equally, a backwards_hook is triggered throughout a backwards move with the module and gradients of the enter and output.

# Instance of a forwards hook perform

def fowards_hook(module, enter, output):

"""Parameters:

module (nn.Module): The module the place the hook is utilized.

enter (tuple of Tensors): Enter to the module.

output (Tensor): Output of the module."""

...

# Instance of a backwards hook perform

def backwards_hook(module, grad_in, grad_out):

"""Parameters:

module (nn.Module): The module the place the hook is utilized.

grad_in (tuple of Tensors): Gradients w.r.t. the enter of the module.

grad_out (tuple of Tensors): Gradients w.r.t. the output of the module."""

...To keep away from confusion, let’s make clear the parameter names utilized by these features. Check out the overview of the usual backpropagation process for a convolutional layer in Determine 5. This layer consists of a set of kernels, $Ok$, and biases, $b$. The opposite components are the:

- enter – a set of function maps or a picture

- output – set of function maps

- grad_in is the gradient of the loss w.r.t. the layer’s enter.

- grad_out is the gradient of the loss w.r.t. the layer’s output.

Now we have labelled these utilizing the identical names of the arguments used to name the hook features that we apply later.

Take into account, we gained’t use the gradients in the identical approach as backpropagation. Often, we use the gradients of a batch of photographs to replace $Ok$ and $b$. Now, we’re solely thinking about grad_out of a single pattern picture. It will give us the gradients of the weather within the layer’s function maps. In different phrases, the gradients we use to weight the function maps.

Activations with PyTorch ahead hook

Our VGG16 community has been created utilizing ReLU with inplace=True. These modify tensors in reminiscence, so the unique values are misplaced. That’s, tensors used as enter are overwritten by the ReLU perform. This could result in issues when making use of hooks, as we might have the unique enter. So we use the code under to exchange all ReLU features with inplace=False ones. This won’t impression the output of the mannequin, however it’ll improve its reminiscence utilization.

# Change all in-place ReLU activations with out-of-place ones

def replace_relu(mannequin):

for identify, little one in mannequin.named_children():

if isinstance(little one, torch.nn.ReLU):

setattr(mannequin, identify, torch.nn.ReLU(inplace=False))

print(f"Changing ReLU activation in layer: {identify}")

else:

replace_relu(little one) # Recursively apply to submodules

# Apply the modification to the VGG16 mannequin

replace_relu(mannequin)Beneath now we have our first hook perform — save_activations. It will append the output from a module (line 6) to a listing of activations (line 2). In our case, we’ll solely register the hook onto one module (i.e. the final convolutional layer), so this record will solely comprise one component. Discover how we format the output (line 6). We detach it from the computational graph so the community is just not affected. We additionally format them as a numpy array and squeeze the batch dimension.

# Record to retailer activations

activations = []

# Operate to save lots of activations

def save_activations(module, enter, output):

activations.append(output.detach().cpu().numpy().squeeze())To make use of the hook perform, we register it on the final convolutional layer — mannequin.options[28]. That is accomplished utilizing the register_forward_hook perform.

# Register the hook to the final convolutional layer

hook = mannequin.options[28].register_forward_hook(save_activations)Now, once we do a ahead move (line 2), the save_activations hook perform will probably be referred to as for this layer. In different phrases, its output will probably be saved to the activations record.

# Ahead move by the mannequin to get activations

prediction = mannequin(img_tensor)

Lastly, it’s good observe to take away the hook perform when it’s now not wanted (line 2). This implies the ahead hook perform won’t be triggered if we do one other ahead move.

# Take away the hook after use

hook.take away() The form of those activations is (512, 14, 14). In different phrases, now we have 512 function maps and every map is 14×14 pixels. You’ll be able to see some examples of those in Determine 6. A few of these maps might comprise options necessary for different lessons or those who lower the likelihood of the anticipated class. So let’s see how we will discover gradients to assist establish an important maps.

act_shape = np.form(activations[0])

print(f"Form of activations: {act_shape}") # (512, 14, 14)

Gradients with PyTorch backwards hooks

To get gradients, we observe an analogous course of to earlier than. The important thing distinction is that we now use the register_full_backward_hook to register the save_gradients perform (line 7). It will be sure that it’s referred to as throughout a backwards move. Importantly, we do the backwards move (line 16) from the output for the category with the best rating (line 13). This successfully units the rating for this class to 1 and all different scores to 0. In different phrases, we get the gradients of the hammerhead class w.r.t. to the weather of the function maps.

gradients = []

def save_gradient(module, grad_in, grad_out):

gradients.append(grad_out[0].cpu().numpy().squeeze())

# Register the backward hook on a convolutional layer

hook = mannequin.options[28].register_full_backward_hook(save_gradient)

# Ahead move

output = mannequin(img_tensor)

# Decide the category with highest rating

rating = output[0].max()

# Backward move from the rating

rating.backward()

# Take away the hook after use

hook.take away()We could have a gradient for each component of the function maps. So, once more, the form is (512, 14, 14). Determine 7 visualises a few of these. You’ll be able to see some are inclined to have greater values. Nevertheless, we’re not so involved with the person gradients. Once we create a Grad-CAM heatmap, we’ll use the typical gradient of every function map.

grad_shape = np.form(gradients[0])

print(f"Form of gradients: {grad_shape}") # (512, 14, 14)

Lastly, earlier than we transfer on, it’s good observe to reset the mannequin’s gradients (line 2). That is notably necessary in case you plan to run the code for a number of photographs, as gradients may be accrued with every backwards move.

# Reset gradients

mannequin.zero_grad() Creating Grad-CAM heatmaps

First, we discover the imply gradients for every function map. There will probably be 512 of those common gradients. Plotting a histogram of them, you possibly can see most are typically round 0. In different phrases, these don’t have a lot impression on the anticipated rating. There are a number of that are inclined to have a destructive impression and a constructive impression. It’s these function maps we need to give extra weight to.

# Step 1: mixture the gradients

gradients_aggregated = np.imply(gradients[0], axis=(1, 2))

We mix all of the activations by doing element-wise summation (strains 2-4). Once we do that, we weight every function map by its common gradient (line 3). Ultimately, we could have one 14×14 array.

# Step 2: weight the activations by the aggregated gradients and sum them up

weighted_activations = np.sum(activations[0] *

gradients_aggregated[:, np.newaxis, np.newaxis],

axis=0)These weighted activations will comprise each constructive and destructive pixels. We are able to think about the destructive pixels to be suppressing the anticipated rating. In different phrases, a rise within the worth of those areas tends to lower the rating. Since we’re solely within the constructive contributions—areas that help the category prediction—we apply a ReLU activation to the ultimate heatmap (line 2). You’ll be able to see the distinction within the heatmaps in Determine 9.

# Step 3: ReLU summed activations

relu_weighted_activations = np.most(weighted_activations, 0)

You’ll be able to see the heatmap in Determine 9 is sort of coarse. It might be extra helpful if it had the size of the unique picture. This is the reason the final step for creating Grad-CAM heatmaps is to upsample to the dimension of the enter picture (strains 2-4). On this case, now we have a 224×224 picture.

#Step 4: Upsample the heatmap to the unique picture measurement

upsampled_heatmap = cv2.resize(relu_weighted_activations,

(img_tensor.measurement(3), img_tensor.measurement(2)),

interpolation=cv2.INTER_LINEAR)

print(np.form(upsampled_heatmap)) # Must be (224, 224)Determine 10 offers us our ultimate visualisation. We show the pattern picture (strains 5-7) subsequent to the heatmap (strains 10-15). For the latter, we create a transparent visualisation with the assistance of Canny Edge detection (line 10). This provides us an edge map (i.e. define) of the pattern picture. We are able to then overlay the heatmap on prime of this (line 14).

# Step 5: visualise the heatmap

fig, ax = plt.subplots(1, 2, figsize=(8, 8))

# Enter picture

resized_img = img.resize((224, 224))

ax[0].imshow(resized_img)

ax[0].axis("off")

# Edge map for the enter picture

edge_img = cv2.Canny(np.array(resized_img), 100, 200)

ax[1].imshow(255-edge_img, alpha=0.5, cmap='grey')

# Overlay the heatmap

ax[1].imshow(upsampled_heatmap, alpha=0.5, cmap='coolwarm')

ax[1].axis("off")our Grad-CAM heatmap, there’s some noise. Nevertheless, it seems the mannequin is counting on the tail fin and, to a lesser extent, the pectoral fin to make its predictions. It’s beginning to make sense why the mannequin labeled this shark as a hammerhead. Maybe each animals share these traits.

For some additional investigation, we apply the identical course of however now utilizing an precise picture of a hammerhead. On this case, the mannequin seems to be counting on the identical options. It is a bit regarding. Would we not count on the mannequin to make use of one of many shark’s defining options— the hammerhead? Finally, this may occasionally lead VGG16 to confuse several types of sharks.

With this instance, we see how Grad-CAM can spotlight potential flaws in our mannequin. We cannot solely get their predictions but in addition perceive how they made them. We are able to perceive if the options used will result in unexpected predictions down the road. This could doubtlessly save us numerous time, cash and within the case of extra consequential functions, lives!

If you wish to be taught extra about XAI for CV try one in every of these articles. Or see this Free XAI for CV course.

I hope you loved this text! See the course page for extra XAI programs. You can even discover me on Bluesky | Threads | YouTube | Medium

References

[1] Ramprasaath R Selvaraju, Michael Cogswell, Abhishek Das, Ramakrishna Vedantam, Devi Parikh, and Dhruv Batra. Grad-cam: Visible explanations from deep networks through gradient-based localization. In Proceedings of the IEEE worldwide convention on laptop imaginative and prescient, pages 618–626, 2017.