have a simple problem: they show you the forecast, but they don’t tell you when it actually changed.

That might sound trivial. It isn’t.

Modern numerical weather prediction (NWP) systems, like ECMWF IFS, produce remarkably accurate forecasts at ~9 km resolution, updated every few hours. The data is already excellent.

The problem is not the forecast.

The problem is attention: knowing when a change in that data is actually meaningful.

I didn’t learn that from software engineering. I learned it years earlier, studying chaos theory at the Instituto Balseiro. It was there, working through dynamical systems, that I first encountered a slightly unsettling idea:

A system can be completely deterministic and still be practically unpredictable.

That idea stayed with me. Years later, when I started building AI systems, I realized that many of them were ignoring it.

The problem with “vibe-based” deltas

When I started looking at how developers were building weather agents, I noticed a pattern:

- Fetch forecast data

- Feed it into an LLM

- Ask: “Did the weather change significantly?”

At first glance, this seems reasonable. From a physics perspective, it’s problematic, at least for problems where the decision boundary is already well defined, because it replaces a well-defined threshold with a probabilistic interpretation.

In a chaotic system, significance is not a linguistic judgment. It is a threshold defined on variables like temperature, precipitation, or wind speed, and it depends on magnitudes, context, and time horizons.

An LLM is a stochastic process. It is very good at generating language, but it is not designed to enforce deterministic boundaries on physical systems.

When you ask an LLM whether a forecast “changed significantly,” you are asking a probabilistic model to approximate a deterministic rule that you could have defined explicitly. That introduces variability exactly where you want consistency.

The failure modes are subtle:

- Trends inferred from phrasing rather than data

- Inconsistent decisions across similar inputs

- Outputs that cannot be tested or reproduced

In many applications, that might be acceptable. In agriculture, energy, and logistics, where a 3°C drop can be a phase transition for a crop, a non-linear spike in energy demand, or an operational disruption, it is not. These decisions need to be stable and explainable.

Which led me to a simple rule:

If you can write an assert statement for it, you probably shouldn’t be using a prompt.

My path to this problem

My career has looked less like a straight line and more like a trajectory in phase space: a Marie Curie PhD in climate dynamics, five years directing R&D at Uruguay’s national meteorology institute (forest fire prevention, seasonal forecasting, climate adaptation), then a shift to production ML at Microsoft and Mercado Libre.

That arc gave me something specific: I already understood the physics of the data, the skill horizons of the models, and what “significant change” actually means in a physical system. Not as a software abstraction, but as a measurable delta on a variable with known uncertainty bounds.

When I started building AI systems, the instinct was immediate: this is a threshold problem. Thresholds belong in code, not in prompts.

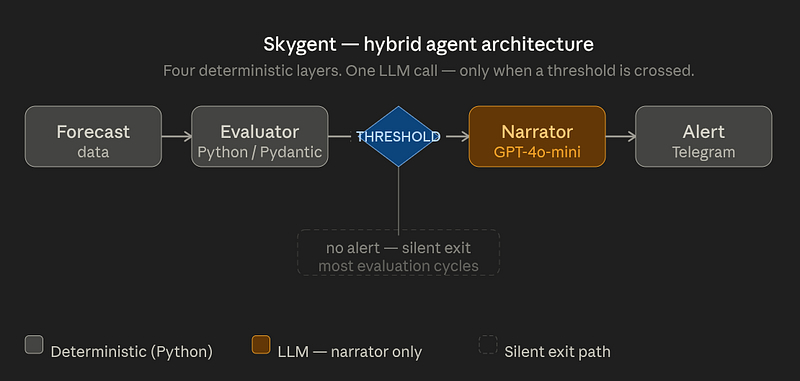

Skygent is one expression of that perspective: an agent designed not to display forecasts, but to detect meaningful changes in them.

The system runs continuously on real forecast data for user-defined events, evaluating changes every few hours and triggering alerts only when predefined conditions are met. In practice, most evaluation cycles result in no alert; only a small fraction of changes cross the significance threshold. That’s the point: signal, not noise.

The architecture

Skygent follows a clean separation across five layers:

Just one layer calls the LLM.

The Deterministic Gatekeeper

At the core is a Python evaluator. It doesn’t interpret; it calculates. It:

- Compares consecutive Pydantic-validated forecast snapshots

- Evaluates deltas against configurable thresholds

- Incorporates context: event type, variable sensitivity

- Accounts for forecast horizon using established NWP skill limits: a change in a 24-hour forecast doesn’t carry the same reliability as a change in a 10-day forecast

This is where decisions are made. Every alert has a traceable path: which variable changed, by how much, and which threshold was crossed. In a corporate or government environment, being able to explain why an alert fired, without saying “the model felt like it,” is not optional.
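A minimal sketch of such an evaluator follows. It assumes plain dataclasses as stand-ins for the Pydantic models, a hypothetical `THRESHOLDS` table, and hypothetical horizon cutoffs for the confidence label (3 and 7 days are illustrative, not calibrated NWP skill limits). Event-type context is left out for brevity. Each variable yields a record of what changed, by how much, and against which threshold, which is what keeps every decision traceable.

```python
from dataclasses import dataclass

# Hypothetical per-variable thresholds: absolute change that counts as significant.
# Illustrative defaults only; not Skygent's actual configuration.
THRESHOLDS: dict[str, float] = {
    "precipitation_probability_max": 20.0,  # percentage points
    "temperature_2m_max": 3.0,              # °C
    "wind_speed_10m_max": 15.0,             # km/h
}


@dataclass
class Snapshot:
    """Stand-in for a Pydantic-validated forecast snapshot."""
    values: dict[str, float]
    horizon_days: float  # lead time from forecast issue to the event window


@dataclass(frozen=True)
class ChangeRecord:
    """Traceable result: which variable, by how much, against which threshold."""
    variable: str
    from_value: float
    to_value: float
    delta: float
    threshold: float
    crossed: bool
    confidence: str


def horizon_confidence(horizon_days: float) -> str:
    """Map forecast lead time to a coarse confidence label (hypothetical cutoffs)."""
    if horizon_days <= 3:
        return "high"
    if horizon_days <= 7:
        return "medium"
    return "low"


def evaluate(previous: Snapshot, current: Snapshot) -> list[ChangeRecord]:
    """Compare two consecutive snapshots variable by variable against fixed thresholds."""
    confidence = horizon_confidence(current.horizon_days)
    records = []
    for variable, threshold in THRESHOLDS.items():
        if variable not in previous.values or variable not in current.values:
            continue  # skip variables missing from either snapshot
        before, after = previous.values[variable], current.values[variable]
        delta = after - before
        records.append(ChangeRecord(variable, before, after, delta, threshold,
                                    crossed=abs(delta) > threshold,
                                    confidence=confidence))
    return records
```

With a rain probability moving from 10 to 50 at a 5.2-day horizon, this yields a record with `delta=40.0`, `crossed=True`, and `confidence="medium"`, the same fields that appear in the Narrator’s JSON payload.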

The Trigger

An alert fires only if a threshold is crossed. If the delta doesn’t cross the boundary, nothing happens. It is a binary, testable condition, not a judgment call.
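The trigger itself can be sketched as a pure function. This assumes changes arrive as `(variable, delta, threshold)` tuples; `crossed_variables` and `should_alert` are hypothetical names used only for illustration.

```python
def crossed_variables(changes: list[tuple[str, float, float]]) -> list[str]:
    """Return the variables whose absolute delta strictly exceeds their threshold."""
    return [name for name, delta, threshold in changes if abs(delta) > threshold]


def should_alert(changes: list[tuple[str, float, float]]) -> bool:
    """Binary trigger: fire if and only if at least one threshold is crossed."""
    return bool(crossed_variables(changes))
```

The comparison is strict (`>`), matching a rule like “Δ > 20pp”: a delta exactly at the threshold does not fire. Keeping `crossed_variables` separate from the boolean preserves the “which threshold” part of the audit trail.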

The Narrator

Only after the decision is made does the LLM enter the pipeline. Its role is strictly limited: take structured JSON data and translate it into natural language.

Structured payload sent to GPT-4o-mini:

```json
{
  "event_name": "Ana's Wedding",
  "variable": "precipitation_probability_max",
  "from_value": 10.0,
  "to_value": 50.0,
  "delta": 40.0,
  "horizon_days": 5.2,
  "confidence": "medium"
}
```

Output:

“Rain probability increased from 10% to 50% for your event window. Confidence is medium due to the 5-day forecast horizon.”

The LLM is not deciding anything. It’s explaining.
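One way to keep the narrator in that role is to hand it only the already-decided payload. The sketch below is hypothetical: the system prompt wording and `build_narration_messages` are illustrative, and the commented-out call assumes the OpenAI Python SDK v1 chat-completions interface.

```python
import json

# Hypothetical instruction text: the narrator rephrases, it never decides.
SYSTEM_PROMPT = (
    "You write one or two short sentences for an end user describing a forecast "
    "change. Use only the values in the JSON payload. Do not judge whether the "
    "change is significant; that decision has already been made."
)

REQUIRED_FIELDS = frozenset({"event_name", "variable", "from_value", "to_value",
                             "delta", "horizon_days", "confidence"})


def build_narration_messages(payload: dict) -> list[dict[str, str]]:
    """Build chat messages for the narrator from an alert payload that already fired."""
    missing = REQUIRED_FIELDS - set(payload)
    if missing:
        raise ValueError(f"payload missing fields: {sorted(missing)}")
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": json.dumps(payload, ensure_ascii=False)},
    ]

# With the OpenAI Python SDK (v1+), the messages could then be sent as:
#   client.chat.completions.create(model="gpt-4o-mini", messages=messages)
```

Validating the payload before building the prompt means a malformed alert fails loudly in deterministic code instead of being smoothed over by the model.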

Why this architecture is testable

It’s practically impossible to reach 100% test coverage on a pure LLM agent: you can’t write deterministic assertions on probabilistic outputs.

The hybrid approach changes this. The decision logic is pure Python with Pydantic-validated inputs: 204 unit tests, zero LLM dependencies in the test suite. The LLM handles only the narrative tone, the one thing that genuinely benefits from natural language generation.
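For illustration, LLM-free tests might look like the following sketch, which uses the standard library’s `unittest` against a hypothetical `is_significant` rule. It is not Skygent’s actual test suite.

```python
import unittest


def is_significant(previous: float, current: float, threshold: float) -> bool:
    """Hypothetical decision rule: absolute change strictly above the threshold."""
    return abs(current - previous) > threshold


class SignificanceTests(unittest.TestCase):
    def test_large_increase_fires(self):
        self.assertTrue(is_significant(10.0, 50.0, threshold=20.0))

    def test_large_decrease_fires(self):
        self.assertTrue(is_significant(50.0, 10.0, threshold=20.0))

    def test_change_at_threshold_does_not_fire(self):
        self.assertFalse(is_significant(10.0, 30.0, threshold=20.0))

    def test_same_input_same_output(self):
        # Deterministic: repeated evaluation can never disagree with itself.
        results = {is_significant(22.0, 21.4, threshold=3.0) for _ in range(100)}
        self.assertEqual(results, {False})
```

Saved as `test_significance.py`, this runs with `python -m unittest test_significance`. No network access, API key, or model is involved, so every run gives the same result.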

This isn’t just a testing convenience. It means every decision the system makes can be explained, reproduced, and verified independently of the LLM.

Event-Driven LLM Invocation

A naive agent calls the LLM on every polling cycle. This one doesn’t.

Skygent evaluates every 6 hours. It calls the model only when a threshold is crossed, typically a few times per week per monitored event, compared with ~28 calls per week (four evaluations a day for seven days) for a naive polling agent.
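The difference between polling and event-driven invocation can be sketched as a loop in which the deterministic gate runs on every cycle and the LLM-facing callback runs only on a crossing. The rain-probability series and the `narrate` callback are hypothetical stand-ins for real snapshots and the real LLM call.

```python
from typing import Callable

CYCLES_PER_WEEK = 7 * 24 // 6  # one evaluation every 6 hours -> 28 per week


def run_week(rain_probabilities: list[float], threshold: float,
             narrate: Callable[[float, float], str]) -> list[str]:
    """Evaluate consecutive snapshots; call `narrate` only when the threshold is crossed."""
    messages = []
    for previous, current in zip(rain_probabilities, rain_probabilities[1:]):
        if abs(current - previous) > threshold:  # deterministic gate, runs every cycle
            messages.append(narrate(previous, current))  # the only LLM-facing call
    return messages
```

A naive agent would call `narrate` on all 28 cycles; here a week with a single jump from 10% to 50% produces exactly one call.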

At gpt-4o-mini pricing (~$0.0001 per narrative), cost is negligible. More importantly, cost is proportional to actual information: you pay for an LLM call only when something worth communicating has happened.
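A back-of-the-envelope check using the figures above: ~$0.0001 per narrative, 28 naive calls per week, and an assumed 2 event-driven calls per week (the count of 2 is an illustrative assumption, not a measured figure).

```python
COST_PER_CALL_USD = 0.0001            # approximate gpt-4o-mini cost per narrative (from the text)
NAIVE_CALLS_PER_WEEK = 7 * 24 // 6    # one call per 6-hour cycle -> 28
EVENT_CALLS_PER_WEEK = 2              # assumption: alerts actually fired per week


def weekly_cost(calls_per_week: int, cost_per_call: float = COST_PER_CALL_USD) -> float:
    """Weekly LLM spend for one monitored event."""
    return calls_per_week * cost_per_call


naive = weekly_cost(NAIVE_CALLS_PER_WEEK)
event_driven = weekly_cost(EVENT_CALLS_PER_WEEK)
print(f"naive: ${naive:.4f}/week, event-driven: ${event_driven:.4f}/week, "
      f"{NAIVE_CALLS_PER_WEEK / EVENT_CALLS_PER_WEEK:.0f}x fewer calls")
```

Both figures are fractions of a cent per event per week; the meaningful number is the 14x reduction in calls, which scales with the number of monitored events.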

A concrete example

Previous snapshot: Rain probability 10%, Max temp 22°C, Wind 15 km/h

Current snapshot: Rain probability 50%, Max temp 21.4°C, Wind 18 km/h

Threshold: Alert if rain probability Δ > 20pp

Evaluation frequency: Every 6 hours

Result: Rain probability Δ = 40pp > 20pp → Alert triggered → GPT-4o-mini generates narrative → Telegram delivery
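The same example fits in a few lines of Python. The variable keys are hypothetical, and only rain probability has a rule here, so the temperature and wind deltas are computed but never gate anything.

```python
# The concrete example above, replayed with a single configured threshold.
previous = {"rain_probability_pct": 10.0, "max_temp_c": 22.0, "wind_kmh": 15.0}
current = {"rain_probability_pct": 50.0, "max_temp_c": 21.4, "wind_kmh": 18.0}
thresholds = {"rain_probability_pct": 20.0}  # only rain has a rule in this example

# Round to avoid floating-point noise such as 21.4 - 22.0 = -0.6000000000000014.
deltas = {name: round(current[name] - previous[name], 2) for name in previous}
triggered = [name for name, limit in thresholds.items() if abs(deltas[name]) > limit]

print(deltas)     # → {'rain_probability_pct': 40.0, 'max_temp_c': -0.6, 'wind_kmh': 3.0}
print(triggered)  # → ['rain_probability_pct']
```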

When this pattern breaks

This approach doesn’t apply everywhere. It breaks down when:

- Inputs are unstructured or ambiguous

- Decision boundaries can’t be codified as thresholds

- Reasoning is open-ended

In those cases, LLM-first architectures such as ReAct or Plan-and-Execute make more sense.

One honest caveat: the thresholds in Skygent are configurable defaults, reasonable starting points informed by meteorological practice, but not calibrated against historical forecast errors for specific use cases. Calibration against real outcomes is the natural next step for any vertical deployment. The pattern is sound; the parameters are a starting point.

Closing

The most important decision I made building this system was not choosing a model or a framework.

It was deciding where not to use an LLM.

There’s a tendency right now to delegate more and more to language models and let them figure things out. But some problems already have structure. Some decisions already have boundaries.

When they do, approximating them with language is the wrong move. Encoding them explicitly is better.

In practice, this often comes down to a simple distinction: use LLMs to explain decisions, not to replace well-defined ones.

The full implementation (significance evaluator, LangGraph pipeline, Telegram bot) is available at: github.com/ferariz/skygent

Fernando Arizmendi builds production AI systems at the intersection of rigorous scientific method and applied AI engineering. He’s a physicist (B.Sc. & M.Sc.) from Instituto Balseiro, former Marie Curie fellow (Ph.D. studying Climate Dynamics & Complex Systems), and previously directed R&D at Uruguay’s national meteorology institute.

All images by the author. Pipeline diagram generated with Claude (Anthropic).