In 2016, I said something that went against where robotics was heading at the time: vision alone doesn’t work for grasping.

Not “it needs improvement.” Not “the tech isn’t there yet.” It doesn’t fit the problem.

Grasping is physical. Contact, force, friction. Vision can guide the approach. It can’t feel what happens next.

Back then, we saw it in the lab. Tactile vibration data predicted grasp failure with 83% accuracy and detected slip at 92%. Early results, but clear enough. The signals that matter don’t show up in images.

Ten years later, the rest of the field is running into the same limit.

Vision gets you close

Vision still matters. It handles detection, positioning and planning. It gets the robot to the right place, lined up the right way.

It does that well, but manipulation doesn’t stop when the gripper reaches the object.

That’s where things break.

What happens at contact isn’t visible

Before contact, the robot is working off images.

After contact, it’s dealing with forces.

A bad grasp doesn’t start as a visual change. It shows up as a shift in force. Slip starts in the fingertips before anything moves enough to see. Too much pressure shows up in the wrist before the object deforms.

By the time a camera picks up a problem, it’s already happening.

Vision sees outcomes. Contact sensing measures interaction as it happens.

And the useful data lives right there, at the moment of contact.
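To make that concrete, here is a minimal sketch of how slip might be flagged from a fingertip vibration signal before anything is visible. It is an illustration under assumptions, not the method behind the 2016 results: the function names, the filter constant, the window size and the threshold are all hypothetical, and a real sensor would need its own tuning.

```python
import math

def highpass(samples, alpha=0.9):
    """First-order high-pass filter (alpha is an assumed constant).

    Removes the slowly changing grip force and keeps fast vibration.
    """
    out = [0.0]
    for prev, cur in zip(samples, samples[1:]):
        out.append(alpha * (out[-1] + cur - prev))
    return out

def detect_slip(samples, window=20, threshold=0.05):
    """Return the index of the first sample where the RMS of the
    high-passed signal over the last `window` samples exceeds
    `threshold`, or None if it never does.

    `window` and `threshold` are illustrative values, not calibrated ones.
    """
    vibration = highpass(samples)
    for end in range(window, len(vibration) + 1):
        chunk = vibration[end - window:end]
        rms = math.sqrt(sum(v * v for v in chunk) / window)
        if rms > threshold:
            return end - 1
    return None

# Synthetic example: a steady grip, then a small oscillation on top of it.
steady_then_slip = [1.0] * 100 + [
    1.0 + 0.2 * math.sin(math.pi / 2 * i) for i in range(100)
]
print(detect_slip(steady_then_slip))
```

The point of the high-pass step is that a steady or slowly changing grip force is ignored, while a burst of vibration trips the detector within a few samples of its onset.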

The evidence is already there

This isn’t a theory anymore.

Tactile-driven policies beat vision-only ones on tasks that involve force. Benchmarks like ManiSkill-ViTac show better performance when you combine vision with tactile input, especially in insertion and assembly. Models like π0, OpenVLA, and Octo rely on synchronized inputs from multiple sensors. Remove force or tactile data, and performance drops.

No one is replacing vision. They’re adding what’s missing.

The strongest systems today combine vision, proprioception, force, and touch into a single model.

That’s what moves performance.

Vision has already given most of what it can

Vision still carries a lot of the system. But it doesn’t solve the hard part.

Physical AI improves with more data, but not all data matters the same. Force and tactile signals have an outsized impact on how well a system handles real contact.

Most datasets still lean heavily on vision and joint data.

So you see the same pattern over and over. Robots reach the right place. Then struggle with insertion, assembly, and anything that depends on compliance.

The missing information is physical.

Tactile data hasn’t scaled yet

Collecting good contact data hasn’t been easy. You need instrumented end effectors, reliable force and tactile sensors, tight synchronization, and consistent formats.

That’s a hardware problem as much as a modelling one.

Until recently, the infrastructure wasn’t there.

Now it is.

The bottleneck is how fast teams can deploy it and start collecting data.
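As a rough sketch of what tight synchronization involves, assume a camera running at a low frame rate and a tactile sensor sampling much faster, each stamping its own data in seconds on a shared clock. Every frame then needs to be paired with the tactile sample closest to it in time, and rejected if the gap is too large. The function names and the 5 ms tolerance below are hypothetical, chosen only for illustration.

```python
from bisect import bisect_left

def nearest_index(timestamps, t):
    """Index of the timestamp closest to t.

    `timestamps` must be non-empty and sorted in ascending order.
    """
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Pick whichever neighbour is closer; ties go to the earlier sample.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1

def align_to_frames(frame_times, tactile_times, tactile_values, max_skew=0.005):
    """Pair each camera frame with the nearest tactile sample.

    Returns one entry per frame: the tactile value, or None when the
    nearest sample is more than `max_skew` seconds away (assumed tolerance).
    """
    if not tactile_times:
        return [None] * len(frame_times)
    aligned = []
    for t in frame_times:
        j = nearest_index(tactile_times, t)
        skew = abs(tactile_times[j] - t)
        aligned.append(tactile_values[j] if skew <= max_skew else None)
    return aligned

# Three camera frames, seven tactile samples; the last frame has no
# tactile sample close enough and is marked as missing.
frames = [0.0, 0.1, 0.2]
tactile_t = [0.0, 0.02, 0.04, 0.06, 0.08, 0.101, 0.12]
tactile_v = [0, 1, 2, 3, 4, 5, 6]
print(align_to_frames(frames, tactile_t, tactile_v))
```

Marking unmatched frames as missing, instead of silently reusing a stale sample, keeps gaps in the contact data visible in the dataset.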

Closing the loop

What started as a claim in 2016 is now showing up everywhere.

Robots that only see will keep hitting the same limits. Robots that can feel will start to close the gap.

Vision stays. It’s not going anywhere.

But it won’t carry manipulation on its own. The shift comes from adding the signals that matter at the point of contact.

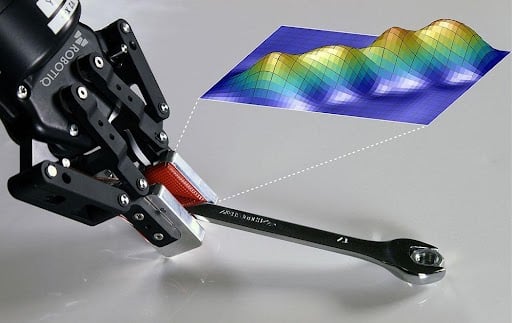

At Robotiq, our tactile sensors are built to capture those signals directly at the gripper, so robots see and feel what they’re doing.