Your agents are only as good as the knowledge they can access, and only as safe as the permissions they enforce.

We’re launching ACL Hydration (access control list hydration) to secure knowledge workflows in the DataRobot Agent Workforce Platform: a unified framework for ingesting unstructured enterprise content, preserving source-system access controls, and enforcing those permissions at query time, so your agents retrieve the right information for the right user, every time.

The problem: enterprise knowledge without enterprise security

Every organization building agentic AI runs into the same wall. Your agents need access to knowledge locked inside SharePoint, Google Drive, Confluence, Jira, Slack, and dozens of other systems. But connecting to those systems is only half the challenge. The harder problem is ensuring that when an agent retrieves a document to answer a question, it respects the same permissions that govern who can see that document in the source system.

Today, most RAG implementations ignore this entirely. Documents get chunked, embedded, and stored in a vector database with no record of who was, or wasn’t, supposed to access them. This can result in a system where a junior analyst’s query surfaces board-level financial documents, or where a contractor’s agent retrieves HR files meant only for internal leadership. The challenge isn’t just how to propagate permissions from the data sources when the RAG system is populated. Those permissions must also be continuously refreshed as people are added to or removed from access groups, so that controls over who can access each type of source content stay synchronized.

This isn’t a theoretical risk. It’s the reason security teams block GenAI rollouts, compliance officers hesitate to sign off, and promising agent pilots stall before reaching production. Enterprise customers have been explicit: without access-control-aware retrieval, agentic AI can’t move beyond sandboxed experiments.

Existing solutions don’t solve this well. Some can enforce permissions, but only within their own ecosystems. Others support connectors across platforms but lack native agent workflow integration. Vertical applications are limited to internal search without platform extensibility. None of these options gives enterprises what they actually need: a cross-platform, ACL-aware knowledge layer purpose-built for agentic AI.

What DataRobot provides

DataRobot’s secure knowledge workflows provide three foundational, interlinked capabilities in the Agent Workforce Platform for secure knowledge and context management.

1. Enterprise data connectors for unstructured content

Connect to the systems where your organization’s knowledge actually lives. At launch, we’re providing production-grade connectors for SharePoint, Google Drive, Confluence, Jira, OneDrive, and Box, with Slack, GitHub, Salesforce, ServiceNow, Dropbox, Microsoft Teams, Gmail, and Outlook following in subsequent releases.

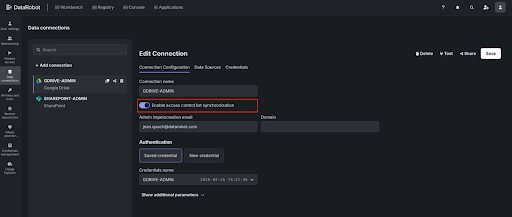

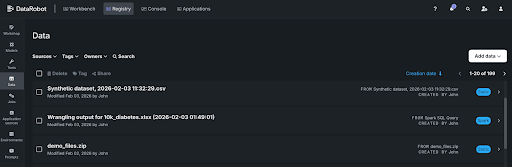

Each connector supports full historical backfill for initial ingestion and scheduled incremental syncs to keep your vector databases current. You control access and manage connections through APIs or the DataRobot UI.

These aren’t lightweight integrations. They’re built to handle production-scale workloads (100GB+ of unstructured data) with robust error handling, retries, and sync status monitoring.
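As a rough illustration of the backfill, incremental sync, retry, and status-tracking behavior described above, here is a minimal Python sketch. It is not DataRobot’s connector code: `SyncState`, `TransientSourceError`, `with_retries`, and `incremental_sync` are hypothetical names, and the source system is simulated by an in-memory list.

```python
import time
from dataclasses import dataclass, field


class TransientSourceError(Exception):
    """Hypothetical error for a retryable failure (timeout, rate limit)."""


@dataclass
class SyncState:
    cursor: float = 0.0          # newest modification time already ingested
    status: str = "never_run"    # surfaced for sync status monitoring
    ingested: list = field(default_factory=list)


def with_retries(fetch, attempts=3, base_delay=0.01, sleep=time.sleep):
    """Call fetch(), retrying transient failures with exponential backoff."""
    for attempt in range(attempts):
        try:
            return fetch()
        except TransientSourceError:
            if attempt == attempts - 1:
                raise
            sleep(base_delay * 2 ** attempt)


def incremental_sync(state, list_changes):
    """Ingest only documents modified after the stored cursor."""
    try:
        changes = with_retries(lambda: list_changes(state.cursor))
    except TransientSourceError:
        state.status = "failed"
        return state
    for doc_id, modified_at in sorted(changes, key=lambda c: c[1]):
        state.ingested.append(doc_id)
        state.cursor = max(state.cursor, modified_at)
    state.status = "ok"
    return state


# Toy source: fails once, then returns documents newer than the cursor.
DOCS = [("doc-a", 1.0), ("doc-b", 2.0), ("doc-c", 3.0)]
calls = {"n": 0}


def flaky_list_changes(since):
    calls["n"] += 1
    if calls["n"] == 1:
        raise TransientSourceError("simulated timeout")
    return [d for d in DOCS if d[1] > since]


state = incremental_sync(SyncState(), flaky_list_changes)   # initial backfill
DOCS.append(("doc-d", 4.0))
state = incremental_sync(state, flaky_list_changes)         # incremental run
print(state.status, state.cursor, state.ingested)
```

The first run starts from a cursor of zero, so it acts as the historical backfill. Later runs ask only for documents changed since the cursor, which is what keeps scheduled syncs cheap.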

2. ACL Hydration and metadata preservation

This is the core differentiator. When DataRobot ingests documents from a source system, it doesn’t just extract content: it also captures and preserves the access control metadata (ACLs) that define who can see each document. User permissions, group memberships, and role assignments are all propagated to the vector database lookup, so that retrieval is aware of the permissions on the data being retrieved.

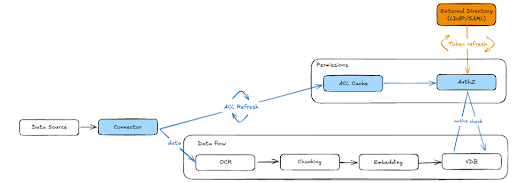

Here’s how it works (also illustrated in Figure 1 below):

- During ingestion, document-level ACL metadata (including user, group, and role permissions) is extracted from the source system and persisted alongside the vectorized content.

- ACLs are stored in a centralized cache, decoupled from the vector database itself. This is a critical architectural decision: when permissions change in the source system, we update the ACL cache without reindexing the entire VDB. Permission changes propagate to all downstream consumers automatically. This includes permissions for locally uploaded files, which respect DataRobot RBAC.

- Near real-time ACL refresh keeps the system in sync with source permissions. DataRobot polls source systems on a schedule and refreshes ACLs within minutes. When someone’s access is revoked in SharePoint or a Google Drive folder is restructured, those changes are reflected in DataRobot at the next scheduled refresh, so your agents don’t keep serving stale permissions.

- External identity resolution maps users and groups from your enterprise directory (via LDAP/SAML) to the ACL metadata, so permission checks resolve correctly regardless of how identities are represented across different source systems.
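The decoupled-cache design in the list above can be sketched in a few lines of Python. This is an illustrative toy under stated assumptions, not the platform’s implementation: `Acl`, `AclCache`, and `visible_chunks` are hypothetical names, and each "vector database" is reduced to a mapping from chunk ID to source document ID.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Acl:
    users: frozenset = frozenset()
    groups: frozenset = frozenset()


class AclCache:
    """Central ACL store keyed by source document ID, separate from vectors."""

    def __init__(self):
        self._by_doc = {}

    def put(self, doc_id, acl):
        self._by_doc[doc_id] = acl

    def get(self, doc_id):
        return self._by_doc.get(doc_id)


# Two toy "vector databases" built from the same source. They hold only
# chunk -> doc_id references, never the permissions themselves.
vdb_a = {"chunk-1": "doc-finance", "chunk-2": "doc-handbook"}
vdb_b = {"chunk-9": "doc-finance"}

cache = AclCache()
cache.put("doc-finance", Acl(groups=frozenset({"board"})))
cache.put("doc-handbook", Acl(groups=frozenset({"all-staff"})))


def visible_chunks(vdb, user, user_groups):
    """Return the chunk IDs in vdb that the user may see, per the cache."""
    out = []
    for chunk_id, doc_id in vdb.items():
        acl = cache.get(doc_id)
        if acl and (user in acl.users or acl.groups & user_groups):
            out.append(chunk_id)
    return sorted(out)


before = visible_chunks(vdb_a, "dana", frozenset({"board"}))
# Source-system change: the board group loses access. Only the cache is
# touched; neither vector database is rewritten or reindexed.
cache.put("doc-finance", Acl(users=frozenset({"cfo"})))
after_a = visible_chunks(vdb_a, "dana", frozenset({"board"}))
after_b = visible_chunks(vdb_b, "dana", frozenset({"board"}))
print(before, after_a, after_b)
```

Because both toy databases look permissions up by document ID at read time, a single cache update changes what every downstream consumer can see.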

3. Dynamic permission enforcement at query time

Storing ACLs is necessary but not sufficient. The real work happens at retrieval time.

When an agent queries the vector database on behalf of a user, DataRobot’s authorization layer evaluates the stored ACL metadata against the requesting user’s identity, group memberships, and roles in real time. Only embeddings the user is authorized to access are returned. Everything else is filtered out before it ever reaches the LLM.

This means two users can ask the same agent the same question and receive different answers, not because the agent is inconsistent, but because it is correctly scoping its knowledge to what each user is authorized to see.

For documents ingested without external ACLs (such as locally uploaded files), DataRobot’s internal authorization system (AuthZ) handles access control, ensuring consistent permission enforcement regardless of how content enters the platform.
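To make the decision rule concrete, here is a minimal Python sketch of a per-document check that prefers the source-system ACL and falls back to internal grants when none exists. Everything in it is an assumption for illustration: `is_authorized`, the `user:` and `group:` prefixes, and the deny-by-default choice for documents with no recorded permissions are not taken from DataRobot’s AuthZ implementation.

```python
def is_authorized(doc_id, user, external_acls, internal_grants):
    """Return True if `user` may see `doc_id`.

    external_acls:   doc_id -> set of allowed principals ("user:x", "group:y")
    internal_grants: doc_id -> set of platform user names (assumed RBAC view)
    """
    principals = {f"user:{user['name']}"} | {f"group:{g}" for g in user["groups"]}
    if doc_id in external_acls:
        return bool(principals & external_acls[doc_id])
    # No source-system ACL (for example a local upload): fall back to the
    # platform's own grants, and deny when nothing is recorded.
    return user["name"] in internal_grants.get(doc_id, set())


external_acls = {
    "board-deck": {"group:board"},
    "handbook": {"group:all-staff", "user:contractor-1"},
}
internal_grants = {"uploaded-notes": {"analyst"}}

analyst = {"name": "analyst", "groups": ["all-staff"]}
director = {"name": "director", "groups": ["all-staff", "board"]}
corpus = ["board-deck", "handbook", "uploaded-notes", "orphan-file"]


def visible(user):
    """List the documents in the toy corpus this user is allowed to see."""
    return [d for d in corpus
            if is_authorized(d, user, external_acls, internal_grants)]


print(visible(analyst))
print(visible(director))
```

The two users see different slices of the same corpus, which is why the same question can legitimately produce different answers.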

How it works: step by step

Step 1: Connect your data sources

Register your enterprise data sources in DataRobot. Authenticate via OAuth, SAML, or service accounts, depending on the source system. Configure what to ingest: specific folders, file types, and metadata filters. DataRobot handles the initial backfill of historical content.
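The "configure what to ingest" step amounts to a scoping filter over folders, file types, and metadata. The Python sketch below shows one way such a filter could behave. `IngestScope` and its fields are hypothetical and do not reflect DataRobot’s actual configuration schema or API.

```python
from dataclasses import dataclass, field
from pathlib import PurePosixPath


@dataclass
class IngestScope:
    """Hypothetical ingestion scope: folders, file types, metadata filters."""
    folders: tuple = ("/",)
    extensions: frozenset = frozenset({".pdf", ".docx"})
    required_metadata: dict = field(default_factory=dict)

    def includes(self, path, metadata):
        p = PurePosixPath(path)
        # A file is in scope if one of the configured folders is an ancestor.
        in_folder = any(PurePosixPath(f) in p.parents for f in self.folders)
        right_type = p.suffix.lower() in self.extensions
        matches = all(metadata.get(k) == v
                      for k, v in self.required_metadata.items())
        return in_folder and right_type and matches


scope = IngestScope(
    folders=("/Finance",),
    extensions=frozenset({".pdf"}),
    required_metadata={"status": "final"},
)

print(scope.includes("/Finance/Q3/report.PDF", {"status": "final"}))
print(scope.includes("/HR/report.pdf", {"status": "final"}))
print(scope.includes("/Finance/a.pdf", {"status": "draft"}))
```

Matching on path ancestors rather than string prefixes avoids accidentally including a sibling folder such as `/FinanceOld` when only `/Finance` was configured.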

Step 2: Ingest content with ACL metadata

As documents are ingested, DataRobot extracts content for chunking and embedding while simultaneously capturing document-level ACL metadata from the source system. This metadata, including user permissions, group memberships, and role assignments, is stored in a centralized ACL cache.

The content flows through the standard RAG pipeline: OCR (if needed), chunking, embedding, and storage in your vector database of choice (whether DataRobot’s built-in FAISS-based solution or your own Elastic, Pinecone, or Milvus instance), with the ACLs following the data throughout the workflow.
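One way to picture "ACLs following the data" is that every chunk keeps a reference back to its source document, while the ACL itself is written once to the cache. The Python sketch below assumes fixed-size character chunking and uses a deliberately trivial stand-in for the embedding model. `chunk_text`, `toy_embed`, and `ingest` are hypothetical names.

```python
def chunk_text(text, size=40, overlap=10):
    """Split text into fixed-size character windows with overlap."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    if not text:
        return []
    step = size - overlap
    return [text[i:i + size]
            for i in range(0, max(len(text) - overlap, 1), step)]


def toy_embed(chunk):
    """Stand-in for a real embedding model, used only to fill the field."""
    return [len(chunk), chunk.count(" ")]


def ingest(doc_id, text, acl, vector_store, acl_cache):
    """Store the ACL once per document and tag every chunk with doc_id."""
    acl_cache[doc_id] = acl
    for n, chunk in enumerate(chunk_text(text)):
        vector_store.append({
            "chunk_id": f"{doc_id}#{n}",
            "doc_id": doc_id,           # the link used for permission checks
            "vector": toy_embed(chunk),
            "text": chunk,
        })


store, acl_cache = [], {}
ingest("doc-finance", "Q3 board summary. " * 5, {"group:board"},
       store, acl_cache)

print(len(store), [r["chunk_id"] for r in store])
print(all(r["doc_id"] in acl_cache for r in store))
```

Keeping only the document reference on each chunk is what lets a later permission change be applied without touching the stored vectors.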

Step 3: Map external identities

DataRobot resolves user and group information. This mapping ensures that ACL permissions from source systems, which may use different identity representations, can be accurately evaluated against the user making a query.

Group memberships, including external groups like Google Groups, are resolved and cached to support fast permission checks at retrieval time.
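The following Python sketch illustrates the idea of identity mapping and cached group resolution. It rests on assumptions that the text above does not state: the directory export is hard-coded, groups may be nested, and caching is done with `functools.lru_cache`. `IDENTITY_MAP`, `GROUP_MEMBERS`, `canonical_user`, and `groups_for` are hypothetical names.

```python
from functools import lru_cache

# Hypothetical directory export: source-specific identifier -> canonical user.
IDENTITY_MAP = {
    ("sharepoint", "dana@corp.example"): "u-100",
    ("google_drive", "dana.lee@corp.example"): "u-100",
    ("jira", "5f3a9c"): "u-100",
}

# Hypothetical group graph: group -> direct members (users or nested groups).
GROUP_MEMBERS = {
    "group:finance": {"u-100", "group:finance-leads"},
    "group:finance-leads": {"u-200"},
    "group:all-staff": {"group:finance"},
}


def canonical_user(source, source_identity):
    """Translate a source-system identity into the canonical user ID."""
    return IDENTITY_MAP.get((source, source_identity.lower()))


@lru_cache(maxsize=None)
def groups_for(user_id):
    """Return every group containing user_id, directly or through nesting."""
    found = set()
    frontier = {user_id}
    while frontier:
        member = frontier.pop()
        for group, members in GROUP_MEMBERS.items():
            if member in members and group not in found:
                found.add(group)
                frontier.add(group)
    return frozenset(found)


user = canonical_user("sharepoint", "Dana@Corp.example")
print(user, sorted(groups_for(user)))
print(sorted(groups_for("u-200")))
```

A real deployment would also need to invalidate the cache when memberships change (here, `groups_for.cache_clear()`), otherwise the cached groups would drift from the directory.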

Step 4: Query with permission enforcement

When an agent or application queries the vector database, DataRobot’s AuthZ layer intercepts the request and evaluates it against the ACL cache. The system checks the requesting user’s identity and group memberships against the stored permissions for each candidate embedding.

Only authorized content is returned to the LLM for response generation. Unauthorized embeddings are filtered silently: the agent responds as if the restricted content doesn’t exist, preventing any information leakage.
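A small Python sketch of silent filtering over ranked candidates follows. It assumes the set of documents the user may see has already been computed, and that permissions are applied before the top-k cut. That ordering is an illustrative design choice, not a description of DataRobot’s internals, and `retrieve` is a hypothetical function.

```python
def retrieve(candidates, allowed_docs, k=2):
    """Return the top-k chunk IDs among candidates the user may see.

    candidates: list of (score, chunk_id, doc_id); higher score = closer match
    allowed_docs: set of doc_ids the requesting user is authorized to read
    """
    permitted = [c for c in candidates if c[2] in allowed_docs]
    permitted.sort(key=lambda c: c[0], reverse=True)
    # The result carries no trace of what was removed, so the caller cannot
    # tell that restricted content exists.
    return [chunk_id for _, chunk_id, _ in permitted[:k]]


candidates = [
    (0.95, "c1", "board-deck"),
    (0.90, "c2", "handbook"),
    (0.80, "c3", "board-deck"),
    (0.70, "c4", "wiki"),
]

analyst_view = retrieve(candidates, {"handbook", "wiki"})
director_view = retrieve(candidates, {"handbook", "wiki", "board-deck"})
print(analyst_view, director_view)
```

Filtering before truncating matters here: cutting to the top two first and filtering afterwards would leave the analyst with a single chunk, even though a second permitted match exists.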

Step 5: Monitor, audit, and govern

Every connector change, sync event, and ACL modification is logged for auditability. Administrators can track who connected which data sources, what data was ingested, and what permissions were applied, providing full data lineage and compliance traceability.

Permission changes in source systems are propagated through scheduled ACL refreshes, and all downstream consumers, across all VDBs built from that source, are automatically updated.
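A scheduled refresh that also feeds an audit trail can be pictured as a diff between cached and current permissions. The Python sketch below is a toy under stated assumptions: `refresh_acls`, the log record fields, and the event names are hypothetical, and the source system is represented by a plain dictionary.

```python
from datetime import datetime, timezone


def refresh_acls(cache, fetch_current, audit_log, now=None):
    """Bring the cache in line with the source and log every change."""
    now = now or datetime.now(timezone.utc)
    current = fetch_current()
    for doc_id, fresh in current.items():
        old = cache.get(doc_id, frozenset())
        if fresh != old:
            audit_log.append({
                "at": now.isoformat(),
                "event": "acl_modified",
                "doc_id": doc_id,
                "added": sorted(fresh - old),
                "removed": sorted(old - fresh),
            })
            cache[doc_id] = fresh
    # Documents that vanished from the source lose their cached permissions.
    for doc_id in sorted(set(cache) - set(current)):
        audit_log.append({"at": now.isoformat(), "event": "acl_removed",
                          "doc_id": doc_id})
        del cache[doc_id]
    return audit_log


cache = {
    "doc-1": frozenset({"group:board"}),
    "doc-2": frozenset({"user:sam"}),
}
source_now = {
    "doc-1": frozenset({"group:board", "user:cfo"}),
    "doc-3": frozenset({"group:all-staff"}),
}
log = []
stamp = datetime(2025, 1, 1, tzinfo=timezone.utc)

refresh_acls(cache, lambda: source_now, log, now=stamp)
refresh_acls(cache, lambda: source_now, log, now=stamp)  # no changes, no entries
for entry in log:
    print(entry["event"], entry["doc_id"])
```

Logging only the differences keeps the audit trail readable, and running the refresh again with unchanged permissions adds nothing to it.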

Why this matters for your agents

Secure knowledge workflows change what’s possible with agentic AI in the enterprise.

Agents get the context they need without compromising security. By propagating ACLs, agents have the contextual information they need to get the job done, while the data accessed by agents and end users honors the authentication and authorization privileges maintained in the enterprise. An agent doesn’t become a backdoor to enterprise information, yet it still has all the business context it needs.

Security teams can approve production deployments. With source-system permissions enforced end-to-end, the risk of unauthorized data exposure through GenAI isn’t just mitigated, it’s eliminated. Every retrieval respects the same access boundaries that govern the source system.

Developers can move faster. Instead of building custom permission logic for every data source, developers get ACL-aware retrieval out of the box. Connect a source, ingest the content, and the permissions come with it. This removes weeks of custom security engineering from every agent project.

End users can trust the system. When users know that the agent only surfaces information they’re authorized to see, adoption accelerates. Trust isn’t a feature you bolt on; it’s the result of an architecture that enforces permissions by design.

Get began

Secure knowledge workflows are available now in the DataRobot Agent Workforce Platform. If you’re building agents that need to reason over enterprise data, and you need those agents to respect who can see what, this is the capability that makes it possible. Try DataRobot or request a demo.