Segment Anything Model 3 (SAM3) sent a shockwave through the computer vision community. Social media feeds were rightfully flooded with praise for its performance. SAM3 isn't just an incremental update. It introduces Promptable Concept Segmentation (PCS), built on a vision-language architecture that lets users segment objects using natural language prompts. From its 3D capabilities (SAM3D) to its native video tracking, it is undeniably a masterpiece of general-purpose AI.

However, in the world of production-grade AI, excitement can often blur the line between zero-shot capability and practical dominance. Following the release, many claimed that training in-house detectors is no longer necessary. As an engineer who has spent years deploying models in the field, I felt a familiar skepticism. A foundation model may be the ultimate Swiss Army knife, but you don't use it to cut down a forest when you have a chainsaw. This article investigates a question that is often implied in research papers but rarely tested against the constraints of a production environment.

Can a small, task-specific model trained with limited data and a 6-hour compute budget outperform a massive, general-purpose giant like SAM3 in a fully autonomous setting?

To those in the trenches of computer vision, the instinctive answer is yes. But in an industry driven by data, instinct isn't enough, so I decided to prove it.

What's New in SAM3?

Before diving into the benchmarks, we need to understand why SAM3 is considered such a leap forward. SAM3 is a heavyweight foundation model, packing 840.50975 million parameters. This scale comes at a cost: inference is computationally expensive. On an NVIDIA P100 GPU, it runs at roughly 1,100 ms per image.

Whereas earlier SAM versions focused on "where" (interactive clicks, boxes, and masks), SAM3 introduces a vision-language component that enables "what" reasoning through text-driven, open-vocabulary prompts.

In short, SAM3 turns an interactive assistant into a zero-shot system. It doesn't need a predefined label list; it operates on the fly. This makes it a dream tool for image editing and manual annotation. But the question remains: does this massive, general-purpose brain actually outperform a lean specialist when the task is narrow and the environment is autonomous?

Benchmarks

To pit SAM3 against domain-trained models, I selected a total of five datasets spanning three domains: Object Detection, Instance Segmentation, and Salient Object Detection. To keep the comparison fair and grounded in reality, I defined the following criteria for the training process.

- Fair Ground for SAM3: The dataset categories must be detectable by SAM3 out of the box. We want to test SAM3 at its strengths. For example, SAM3 can accurately identify a shark versus a whale; asking it to distinguish between a blue whale and a fin whale, however, would be unfair.

- Minimal Hyperparameter Tuning: I used initial guesses for most parameters with little to no tuning. This simulates a quick-start scenario for an engineer.

- Strict Compute Budget: The specialist models were trained within a maximum window of 6 hours. This satisfies the condition of using minimal, accessible computing resources.

- Prompt Strength: For every dataset, I tested the SAM3 prompts on 10 randomly selected images. I only finalized a prompt once I was satisfied that SAM3 was detecting the objects properly on these samples. If you're skeptical, you can pick random images from these datasets and test my prompts in the SAM3 demo to confirm this unbiased approach.

The following table shows the weighted average of the individual metrics for each case. If you're in a hurry, this table gives the high-level picture of the performance and speed trade-offs. You can see all the W&B runs here.

Let's explore the nuances of each use case and see why the numbers look this way.

Object Detection

In this use case, we benchmark datasets using only bounding boxes. This is the most common task in production environments.

For our evaluation metrics, we use the standard COCO metrics computed with bounding-box IoU. To determine an overall winner across the different datasets, I use a weighted sum of these metrics. I assigned the highest weight to mAP (mean Average Precision), since it gives the most comprehensive snapshot of a model's precision-recall balance. While the weights help us pick an overall winner, you can see how each model fares against the other in every individual category.
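The scoring can be sketched in a few lines. The article does not publish its exact weights, only that mAP gets the highest one, so `HYPOTHETICAL_WEIGHTS` below is a placeholder rather than the author's configuration. The `pct_change` helper uses the convention the result tables appear to follow, (SAM3 - specialist) / specialist × 100, so a negative value means SAM3 scored lower; for the Global Wheat AP row it gives about -23.13, which the table reports as -23.10.

```python
from __future__ import annotations

# Placeholder weights: only "mAP gets the highest weight" comes from the article.
HYPOTHETICAL_WEIGHTS = {"AP": 3.0, "AP50": 1.0, "AP75": 1.0, "AR_100": 1.0}


def weighted_score(metrics: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted sum over the metrics named in `weights` (all higher-is-better)."""
    return sum(w * metrics[name] for name, w in weights.items())


def pct_change(specialist: float, sam3: float) -> float:
    """Relative change of SAM3 versus the specialist, in percent."""
    return (sam3 - specialist) / specialist * 100.0


if __name__ == "__main__":
    # COCO box metrics from the Global Wheat table (YOLOv11-Large vs SAM3).
    yolo = {"AP": 0.4098, "AP50": 0.8821, "AP75": 0.3011, "AR_100": 0.479}
    sam3 = {"AP": 0.315, "AP50": 0.7722, "AP75": 0.1937, "AR_100": 0.403}
    for name in yolo:
        print(f"{name}: {pct_change(yolo[name], sam3[name]):+.2f}%")
    overall = pct_change(weighted_score(yolo, HYPOTHETICAL_WEIGHTS),
                         weighted_score(sam3, HYPOTHETICAL_WEIGHTS))
    print(f"overall (hypothetical weights): {overall:+.2f}%")
```

Because the weights are made up, the "overall" line will not reproduce the article's headline numbers. It only shows the mechanics.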

1. Global Wheat Detection

The first post I saw on LinkedIn about SAM3's performance was actually about this dataset. That post sparked the idea of running a benchmark rather than basing my opinion on a few anecdotes.

This dataset holds a special place for me because it was the first competition I participated in, back in 2020. At the time, I was a green engineer fresh off Andrew Ng's Deep Learning Specialization. I had more motivation than coding skill, and I foolishly decided to implement YOLOv3 from scratch. My implementation was a disaster, with a recall of ~10%, and I didn't make a single successful submission. However, I learned more from that failure than any tutorial could teach me. Choosing this dataset again was a nice trip down memory lane and a measurable way to see how far I've grown.

For the train/val split, I randomly divided the provided data in a 90/10 ratio so that both models were evaluated on exactly the same images. The final count was 3,035 images for training and 338 images for validation.
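A minimal sketch of a reproducible 90/10 split is shown below. It assumes the image IDs are available as a list, and the seed value 42 is arbitrary. The fixed seed is what lets both the specialist and SAM3 be scored on the identical validation images; with 3,373 images in total, the split yields 3,035 training and 338 validation images.

```python
import random


def split_train_val(items, val_fraction=0.1, seed=42):
    """Shuffle a copy of `items` with a fixed seed and cut it into train/val.

    Re-running with the same seed returns the same split, so every model
    can be evaluated on exactly the same validation images.
    """
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # local RNG: no global state touched
    n_train = int(len(shuffled) * (1 - val_fraction))
    return shuffled[:n_train], shuffled[n_train:]


if __name__ == "__main__":
    image_ids = [f"img_{i:04d}" for i in range(3373)]  # hypothetical IDs
    train, val = split_train_val(image_ids)
    print(len(train), len(val))
```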

I used Ultralytics YOLOv11-Large with COCO-pretrained weights as a starting point and trained the model for 30 epochs with default hyperparameters. Training finished in just 2 hours 15 minutes.

The raw data shows SAM3 trailing YOLO by 17% overall, but the visual results tell a more complex story. SAM3's predictions tend to be tight, hugging the wheat head closely.

In contrast, the YOLO model predicts slightly larger boxes that include the awns (the hair-like bristles). Because the dataset annotations include these awns, the YOLO model is technically more correct for this use case, which explains why it leads in the high-IoU metrics. This also explains why SAM3 appears to dominate YOLO in small-object recall (a 132% lead in AR small). To keep the comparison fair despite this bounding-box mismatch, we should look at AP50. At a 0.5 IoU threshold, SAM3 still loses by 12.4%.
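To make the AP50 versus AP75 argument concrete, here is a plain IoU computation on made-up coordinates: a hypothetical awn-inclusive ground-truth box and a tighter prediction that sits entirely inside it. The numbers are illustrative and not taken from the dataset. The tight box overlaps 64% of the reference box, which counts as a hit at IoU 0.5 but a miss at IoU 0.75.

```python
def box_iou(a, b):
    """IoU of two axis-aligned boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


if __name__ == "__main__":
    gt_with_awns = (0, 0, 100, 100)   # hypothetical label that includes the awns
    tight_pred = (10, 10, 90, 90)     # hypothetical box hugging only the head
    iou = box_iou(gt_with_awns, tight_pred)  # 6400 / 10000
    print(iou, iou >= 0.5, iou >= 0.75)
```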

While my YOLOv11 model struggled with the smallest wheat heads (an issue that could likely be solved by adding a high-resolution P2 detection head), the specialist model still won the majority of categories in a real-world usage scenario.

| Metric | yolov11-large | SAM3 | % Change |
|---|---|---|---|
| AP | 0.4098 | 0.315 | -23.10 |
| AP50 | 0.8821 | 0.7722 | -12.40 |
| AP75 | 0.3011 | 0.1937 | -35.60 |
| AP small | 0.0706 | 0.0649 | -8.00 |
| AP medium | 0.4013 | 0.3091 | -22.90 |
| AP large | 0.464 | 0.3592 | -22.50 |
| AR 1 | 0.0145 | 0.0122 | -15.90 |
| AR 10 | 0.1311 | 0.1093 | -16.60 |
| AR 100 | 0.479 | 0.403 | -15.80 |
| AR small | 0.0954 | 0.2214 | +132 |
| AR medium | 0.4617 | 0.4002 | -13.30 |
| AR large | 0.5661 | 0.4233 | -25.20 |

On the hidden competition test set, the specialist model also outperformed SAM3 by significant margins.

| Model | Public LB Score | Private LB Score |
|---|---|---|
| yolov11-large | 0.677 | 0.5213 |
| SAM3 | 0.4647 | 0.4507 |
| Change (%) | -31.36 | -13.54 |

Execution Details:

2. CCTV Weapon Detection

I chose this dataset to benchmark SAM3 on surveillance-style imagery and to answer a critical question: does a foundation model make more sense when data is extremely scarce?

The dataset consists of only 131 images captured from CCTV cameras across six different locations. Because images from the same camera feed are highly correlated, I split the data at the scene level rather than the image level. This ensures the validation set contains only unseen environments, which is a better test of a model's robustness. I used four scenes for training and two for validation, resulting in 111 training images and 30 validation images.
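A scene-level (grouped) split can be sketched as follows. The sketch assumes each image can be mapped to a scene or camera ID; the `s0`...`s5` names in the usage example are hypothetical. Whole scenes go to one side or the other, so no camera view leaks from training into validation. scikit-learn's `GroupShuffleSplit` implements the same idea if you prefer a library.

```python
from collections import defaultdict


def split_by_scene(image_to_scene, val_scenes):
    """Send whole scenes to train or val so no scene appears on both sides.

    `image_to_scene` maps image name -> scene/camera id.
    `val_scenes` is the set of scene ids held out for validation.
    """
    by_scene = defaultdict(list)
    for image, scene in image_to_scene.items():
        by_scene[scene].append(image)
    train, val = [], []
    for scene, images in sorted(by_scene.items()):
        (val if scene in val_scenes else train).extend(sorted(images))
    return train, val


if __name__ == "__main__":
    # Hypothetical naming: six scenes with five frames each.
    mapping = {f"s{s}_{i}.jpg": f"s{s}" for s in range(6) for i in range(5)}
    train, val = split_by_scene(mapping, val_scenes={"s4", "s5"})
    print(len(train), len(val))
```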

For this task, I used YOLOv11-Medium. To prevent overfitting on such a tiny sample, I made several specific engineering choices:

- Backbone Freezing: I froze the entire backbone to preserve the COCO-pretrained features. With only 111 images, unfreezing the backbone would likely corrupt the weights and lead to unstable training.

- Regularization: I increased weight decay and used more extensive data augmentation to force the model to generalize.

- Learning Rate Adjustment: I lowered both the initial and final learning rates so that the model's head converged gently on the new features.

The entire training process took only 8 minutes for 50 epochs. Although I framed this experiment as a potential win for SAM3, the results were surprising. The specialist model outperformed SAM3 in every single category, with SAM3 losing to YOLO by 20.50% overall.

| Metric | yolov11-medium | SAM3 | Change (%) |
|---|---|---|---|
| AP | 0.4082 | 0.3243 | -20.57 |
| AP50 | 0.831 | 0.5784 | -30.4 |
| AP75 | 0.3743 | 0.3676 | -1.8 |
| AP_small | – | – | – |
| AP_medium | 0.351 | 0.24 | -31.64 |
| AP_large | 0.5338 | 0.4936 | -7.53 |
| AR_1 | 0.448 | 0.368 | -17.86 |
| AR_10 | 0.452 | 0.368 | -18.58 |
| AR_100 | 0.452 | 0.368 | -18.58 |
| AR_small | – | – | – |
| AR_medium | 0.4059 | 0.2941 | -27.54 |
| AR_large | 0.55 | 0.525 | -4.55 |

This suggests that for specific high-stakes tasks like weapon detection, even a handful of domain-specific images can provide a better baseline than a massive general-purpose model.

Execution Details:

Instance Segmentation

In this use case, we benchmark datasets with instance-level segmentation masks and polygons. For our evaluation, we use the standard COCO metrics computed with mask IoU. As in the object detection section, I use a weighted sum of these metrics to determine the final rankings.

A significant hurdle in benchmarking instance segmentation is that many high-quality datasets only provide semantic masks. To create a fair test for SAM3 and YOLOv11, I selected datasets where the objects have clear spatial gaps between them. I wrote a preprocessing pipeline that converts these semantic masks into instance-level labels by identifying individual connected components, and then formatted the result as a COCO polygon dataset. This allowed us to measure how well the models distinguish between individual "things" rather than just identifying "stuff".
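The article does not include its preprocessing code. The sketch below shows the core idea in pure Python, assuming a semantic mask stored as a list of 0/1 rows. Each connected foreground region is flood-filled into its own instance, and a COCO-style annotation is emitted with an `[x, y, width, height]` bbox and a pixel-count area. A real pipeline would more likely use OpenCV (`cv2.connectedComponents` or `cv2.findContours`) and also write the polygon vertices, which this sketch leaves out. The `connectivity` switch matters for touching objects: under 8-connectivity, diagonally adjacent pixels merge into one instance.

```python
from collections import deque


def label_components(mask, connectivity=4):
    """Label connected foreground regions of a binary mask (list of 0/1 rows).

    Returns (labels, n): 0 is background and 1..n are instance ids.
    """
    h, w = len(mask), len(mask[0])
    if connectivity == 4:
        offsets = [(-1, 0), (1, 0), (0, -1), (0, 1)]
    else:  # 8-connectivity also joins diagonal neighbours
        offsets = [(dy, dx) for dy in (-1, 0, 1) for dx in (-1, 0, 1) if (dy, dx) != (0, 0)]
    labels = [[0] * w for _ in range(h)]
    n = 0
    for y in range(h):
        for x in range(w):
            if mask[y][x] and not labels[y][x]:
                n += 1  # new instance found in raster-scan order
                labels[y][x] = n
                queue = deque([(y, x)])
                while queue:  # breadth-first flood fill
                    cy, cx = queue.popleft()
                    for dy, dx in offsets:
                        ny, nx = cy + dy, cx + dx
                        if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] and not labels[ny][nx]:
                            labels[ny][nx] = n
                            queue.append((ny, nx))
    return labels, n


def instances_to_coco(labels, n, image_id, category_id):
    """Build COCO-style annotation dicts (bbox as [x, y, w, h], pixel-count area)."""
    anns = []
    for inst in range(1, n + 1):
        pixels = [(y, x) for y, row in enumerate(labels) for x, v in enumerate(row) if v == inst]
        ys = [p[0] for p in pixels]
        xs = [p[1] for p in pixels]
        anns.append({
            "image_id": image_id,
            "category_id": category_id,
            "bbox": [min(xs), min(ys), max(xs) - min(xs) + 1, max(ys) - min(ys) + 1],
            "area": len(pixels),
            "iscrowd": 0,
        })
    return anns


if __name__ == "__main__":
    semantic = [
        [1, 1, 0, 0, 0],
        [1, 1, 0, 1, 1],
        [0, 0, 0, 1, 1],
    ]
    labels, n = label_components(semantic)
    for ann in instances_to_coco(labels, n, image_id=1, category_id=1):
        print(ann["bbox"], ann["area"])
```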

1. Concrete Crack Segmentation

I chose this dataset because it poses a significant challenge for both models. Cracks have highly irregular shapes and branching paths that are notoriously difficult to capture accurately. The final split resulted in 9,603 images for training and 1,695 images for validation.

The original crack labels were extremely fine. To train effectively on such thin structures, I would have needed a very high input resolution, which was not feasible within my compute budget. To solve this, I applied a morphological transformation to thicken the masks. This allowed the model to learn the crack structures at a lower resolution while maintaining acceptable results. To ensure a fair comparison, I applied exactly the same transformation to the SAM3 output. Since SAM3 performs inference at high resolution and picks up fine details, thickening its masks ensured we were comparing apples to apples during evaluation.
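The article does not state which structuring element or how many iterations were used, so the square kernel and `radius=1` below are assumptions. What matters is that one deterministic function is applied to both the ground-truth labels and the SAM3 masks. In practice, `cv2.dilate` or `scipy.ndimage.binary_dilation` would do the same job far faster than this pure-Python loop.

```python
def dilate(mask, radius=1):
    """Binary dilation with a (2*radius + 1) x (2*radius + 1) square element.

    A pixel becomes foreground if any pixel within `radius` (in both x and y)
    is foreground, which thickens thin structures such as cracks.
    """
    h, w = len(mask), len(mask[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            if mask[y][x]:
                # Stamp the square neighbourhood, clipped to the image bounds.
                for ny in range(max(0, y - radius), min(h, y + radius + 1)):
                    for nx in range(max(0, x - radius), min(w, x + radius + 1)):
                        out[ny][nx] = 1
    return out


if __name__ == "__main__":
    crack = [[0] * 5 for _ in range(5)]
    for x in range(5):
        crack[2][x] = 1  # a 1-pixel-wide horizontal "crack"
    for row in dilate(crack):
        print(row)  # the crack is now 3 pixels wide
```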

I trained a YOLOv11-Medium-Seg model for 30 epochs. I kept the default settings for most hyperparameters, and total training time was 5 hours 20 minutes.

The specialist model outperformed SAM3 with an overall score difference of 47.69%. Most notably, SAM3 struggled with recall, falling behind the YOLO model by over 33%. This suggests that while SAM3 can identify cracks in a general sense, it lacks the domain-specific sensitivity required to map out exhaustive fracture networks in an autonomous setting.

However, visual analysis suggests we should take this dramatic 47.69% gap with a grain of salt. Even after post-processing, SAM3 produces thinner masks than the YOLO model, so SAM3 is likely being penalized for its fine segmentations. YOLO would still win this benchmark, but a more refined, mask-adjusted metric would likely put the actual performance difference closer to 25%.
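Mask IoU explains why thin masks hurt. A prediction that traces a crack's centerline perfectly can still score poorly if the reference mask is wider. The toy example below uses made-up sizes: a 3-pixel-wide reference crack and a 1-pixel-wide prediction on its centerline. The IoU is 1/3, which falls below even the 0.5 threshold used for AP50, so this well-placed prediction would count as a miss.

```python
def mask_iou(a, b):
    """IoU between two binary masks of equal shape (lists of 0/1 rows)."""
    inter = union = 0
    for row_a, row_b in zip(a, b):
        for pa, pb in zip(row_a, row_b):
            inter += bool(pa and pb)
            union += bool(pa or pb)
    return inter / union if union else 0.0


if __name__ == "__main__":
    h, w = 5, 10
    # Hypothetical reference crack: rows 1-3 (3 px wide) across the image.
    reference = [[1 if 1 <= y <= 3 else 0 for _ in range(w)] for y in range(h)]
    # Hypothetical thin prediction: only the centerline (row 2).
    thin_pred = [[1 if y == 2 else 0 for _ in range(w)] for y in range(h)]
    print(round(mask_iou(reference, thin_pred), 3))
```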

| Metric | yolov11-medium | SAM3 | Change (%) |
|---|---|---|---|
| AP | 0.2603 | 0.1089 | -58.17 |
| AP50 | 0.6239 | 0.3327 | -46.67 |
| AP75 | 0.1143 | 0.0107 | -90.67 |
| AP_small | 0.06 | 0.01 | -83.28 |
| AP_medium | 0.2913 | 0.1575 | -45.94 |
| AP_large | 0.3384 | 0.1041 | -69.23 |
| AR_1 | 0.2657 | 0.1543 | -41.94 |
| AR_10 | 0.3281 | 0.2119 | -35.41 |
| AR_100 | 0.3286 | 0.2192 | -33.3 |
| AR_small | 0.0633 | 0.0466 | -26.42 |
| AR_medium | 0.3078 | 0.2237 | -27.31 |
| AR_large | 0.4626 | 0.2725 | -41.1 |

Execution Details:

2. Blood Cell Segmentation

I included this dataset to test the models in the medical domain. On the surface, this felt like a clear advantage for SAM3. The images don't require complex high-resolution patching, and the cells often have distinct, clean edges, which is exactly where foundation models usually shine. Or at least that was my hypothesis.

As in the previous task, I had to convert semantic masks into a COCO-style instance segmentation format. I initially had a concern about touching cells. If several cells were grouped into a single mask blob, my preprocessing would treat them as one instance. This could create a bias where the YOLO model learns to predict clusters, while SAM3 correctly identifies individual cells but gets penalized for it. On closer inspection, I found that the dataset provides fine gaps of a few pixels between adjacent cells. Using contour detection, I was able to separate these into individual instances. I intentionally avoided morphological dilation here to preserve those gaps, and I kept the SAM3 inference pipeline identical. The dataset came with its own split of 1,169 training images and 159 validation images.

I trained a YOLOv11-Medium model for 30 epochs. My only significant change from the default settings was increasing `weight_decay` for more aggressive regularization. Training was extremely efficient, taking only 46 minutes.

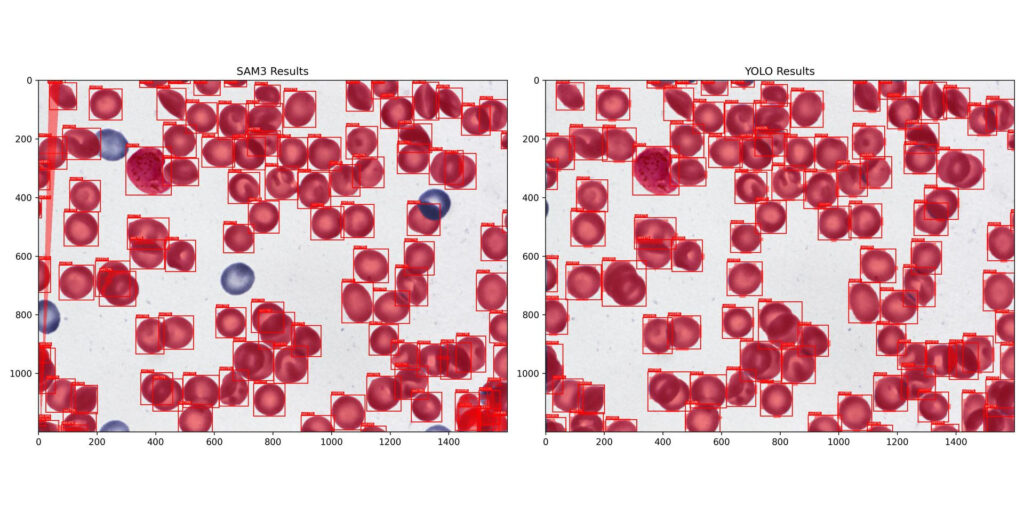

Despite my initial belief that this would be a win for SAM3, the specialist model again outperformed the foundation model, by 23.59% overall. Even when the visual cues seem to favor a generalist, specialized training lets the smaller model capture the domain-specific nuances that SAM3 misses. As the results above show, SAM3 misses quite a few cell instances.

| Metric | yolov11-Medium | SAM3 | Change (%) |
|---|---|---|---|
| AP | 0.6634 | 0.5254 | -20.8 |
| AP50 | 0.8946 | 0.6161 | -31.13 |
| AP75 | 0.8389 | 0.5739 | -31.59 |
| AP_small | – | – | – |
| AP_medium | 0.6507 | 0.5648 | -13.19 |
| AP_large | 0.6996 | 0.4508 | -35.56 |
| AR_1 | 0.0112 | 0.01 | -10.61 |
| AR_10 | 0.1116 | 0.0978 | -12.34 |
| AR_100 | 0.7002 | 0.5876 | -16.09 |
| AR_small | – | – | – |
| AR_medium | 0.6821 | 0.6216 | -8.86 |
| AR_large | 0.7447 | 0.5053 | -32.15 |

Execution Details:

Salient Object Detection / Image Matting

In this use case, we benchmark datasets with binary foreground/background segmentation masks. The primary application is image editing tasks like background removal, where accurate separation of the subject is critical.

The Dice coefficient is our primary evaluation metric. In practice, Dice scores quickly reach values around 0.99 once the model segments the majority of the region. At that point, the meaningful differences appear in the narrow 0.99 to 1.0 range, where small absolute improvements correspond to visually noticeable gains, especially around object boundaries.

We consider two metrics for our overall comparison:

- Dice Coefficient: weighted at 3.0

- MAE (Mean Absolute Error): weighted at 0.01

Note: I had also added the F1-score but later realized that, for binary masks, the F1-score and the Dice coefficient are mathematically identical, so I omitted it here. Specialized boundary-focused metrics exist, but I excluded them to stay true to our novice-engineer persona. We want to see whether someone with basic skills can beat SAM3 using standard tools.
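The Dice/F1 identity follows directly from the definitions. With precision P = TP/(TP+FP) and recall R = TP/(TP+FN), F1 = 2PR/(P+R) simplifies to 2TP/(2TP+FP+FN), which is exactly the pixel-wise Dice formula. The sketch below checks this numerically on flattened pixel lists. The 0.5 binarization threshold, and computing MAE on the raw soft predictions, are assumptions made for illustration.

```python
def confusion(pred, target, threshold=0.5):
    """Pixel-wise TP/FP/FN counts after binarizing both inputs at `threshold`."""
    tp = fp = fn = 0
    for p, t in zip(pred, target):
        pb, tb = p >= threshold, t >= threshold
        tp += pb and tb
        fp += pb and not tb
        fn += (not pb) and tb
    return tp, fp, fn


def dice(pred, target, threshold=0.5):
    tp, fp, fn = confusion(pred, target, threshold)
    return 2 * tp / (2 * tp + fp + fn) if (tp + fp + fn) else 1.0


def f1(pred, target, threshold=0.5):
    tp, fp, fn = confusion(pred, target, threshold)
    if tp == 0:  # degenerate cases: match Dice (1.0 if both masks are empty)
        return 1.0 if fp == fn == 0 else 0.0
    precision, recall = tp / (tp + fp), tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def mae(pred, target):
    """Mean absolute error on the raw (soft) values, no thresholding."""
    return sum(abs(p - t) for p, t in zip(pred, target)) / len(pred)


if __name__ == "__main__":
    soft_pred = [0.9, 0.8, 0.2, 0.6, 0.1, 0.0]  # hypothetical alpha values
    target = [1, 1, 0, 0, 1, 0]                  # hypothetical ground truth
    print(dice(soft_pred, target), f1(soft_pred, target), mae(soft_pred, target))
```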

In the Weights & Biases (W&B) logs, the specialist model's outputs may look much worse than SAM3's. This is a visualization artifact caused by binary thresholding. Our ISNet model predicts a gradient alpha matte, which allows smooth, semi-transparent edges. To log to W&B, I used a fixed threshold of 0.5 to convert these mattes into binary masks. In a production environment, tuning this threshold or using the raw alpha matte would yield much higher visual quality. Since SAM3 produces a binary mask out of the box, its outputs look fine in W&B. I suggest referring to the outputs in the notebook's output section instead.
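The small sketch below shows what thresholding does to a soft edge. A hypothetical row of alpha values crossing a hair boundary collapses into a hard 0/1 step. For contrast, it also shows standard alpha compositing, out = alpha × foreground + (1 - alpha) × background, which is how the raw matte would be used for blending. Whether the logging code used `>=` or `>` at 0.5 is not stated, so `>=` is an assumption.

```python
def binarize(alpha, threshold=0.5):
    """Turn a soft alpha matte (values in [0, 1]) into a hard 0/1 mask."""
    return [[1 if a >= threshold else 0 for a in row] for row in alpha]


def composite(fg, bg, alpha):
    """Per-pixel alpha blend of grayscale images: alpha*fg + (1 - alpha)*bg."""
    return [[a * f + (1 - a) * b for f, b, a in zip(fr, br, ar)]
            for fr, br, ar in zip(fg, bg, alpha)]


if __name__ == "__main__":
    hair_edge = [[1.0, 0.8, 0.55, 0.45, 0.2, 0.0]]  # hypothetical soft transition
    print(binarize(hair_edge))                       # gradient becomes a hard step
    print(composite([[200.0] * 6], [[100.0] * 6], hair_edge))  # smooth blend kept
```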

Engineering the Pipeline:

For this task, I used ISNet. I took the model code and pretrained weights from the official repository but implemented a custom training loop and dataset classes. To optimize the process, I also implemented:

- Synchronized Transforms: I extended the torchvision transforms so that mask transformations (like rotation or flipping) were perfectly synchronized with the image.

- Mixed Precision Training: I modified the model class and loss function to support mixed precision, and used BCEWithLogitsLoss for numerical stability.
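Why BCEWithLogitsLoss is the stable choice: applying a sigmoid first and then taking logs fails once the sigmoid saturates to exactly 0 or 1. The logits form max(x, 0) - x·y + log(1 + exp(-|x|)) computes the same quantity without ever forming that saturated probability. The sketch below compares both in float64 with the standard library. Under float16 the saturation happens far earlier, and PyTorch's automatic mixed precision documentation lists `BCELoss` as unsafe under autocast and recommends `BCEWithLogitsLoss` instead.

```python
import math


def naive_bce(logit, target):
    """Sigmoid first, then log: breaks down for large |logit|."""
    p = 1.0 / (1.0 + math.exp(-logit))
    return -(target * math.log(p) + (1 - target) * math.log(1 - p))


def stable_bce_with_logits(logit, target):
    """Same loss in log-sum-exp form: max(x, 0) - x*y + log1p(exp(-|x|))."""
    return max(logit, 0.0) - logit * target + math.log1p(math.exp(-abs(logit)))


if __name__ == "__main__":
    print(naive_bce(2.0, 1.0), stable_bce_with_logits(2.0, 1.0))  # agree
    print(stable_bce_with_logits(100.0, 0.0))  # finite, about 100
    try:
        naive_bce(100.0, 0.0)  # sigmoid rounds to 1.0, so log(0) is attempted
    except ValueError as err:
        print("naive version failed:", err)
```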

1. EasyPortrait Dataset

I wanted to include a high-stakes background removal task, specifically for selfie and portrait images. This is arguably the most popular application of Salient Object Detection today. The main challenge here is hair segmentation: human hair has high-frequency edges and transparency that are notoriously difficult to capture. In addition, subjects wear diverse clothing that can often blend into the background colors.

The original dataset provides 20,000 labeled face images. However, the provided test set was much larger than the validation set. Running SAM3 on such a large test set would have exceeded my Kaggle GPU quota for that week, which I needed for other work. So I swapped the two sets, resulting in a more manageable evaluation pipeline:

- Train Set: 14,000 images

- Val Set: 4,000 images

- Test Set: 2,000 images

Strategic Augmentations:

To ensure the model would be useful in real-world workflows rather than just overfitting the validation set, I implemented a robust augmentation pipeline. You can see the augmentations above; this was my thinking behind them:

- Aspect-Ratio-Aware Resize: I first resized the longest dimension and then took a fixed-size random crop. This prevented the squashed-face effect that is common with standard resizing.

- Perspective Transforms: Since the dataset consists mostly of people looking straight at the camera, I added strong perspective shifts to simulate angled seating or side-profile shots.

- Color Jitter: I varied brightness and contrast to handle lighting from underexposed to overexposed, but kept the hue shift at zero to avoid unnatural skin tones.

- Affine Transforms: I added rotation to handle various camera tilts.
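The key rule behind synchronized transforms is to draw each random parameter once and reuse it for both the image and its mask. Geometric operations go to both, while photometric ones such as brightness go to the image only. The sketch below illustrates this on nested lists using a hypothetical `paired_augment` helper. It is not the author's torchvision extension (newer torchvision releases also offer a `transforms.v2` API that can transform an image together with a `tv_tensors.Mask`). The flip, 90-degree rotation, and brightness range are stand-ins for the perspective and affine ops listed above.

```python
import random


def hflip(grid):
    """Mirror a 2D grid left to right."""
    return [row[::-1] for row in grid]


def rot90(grid):
    """Rotate a 2D grid 90 degrees clockwise."""
    return [list(row) for row in zip(*grid[::-1])]


def paired_augment(image, mask, rng):
    """Apply the SAME randomly drawn geometric ops to image and mask.

    Random decisions are drawn once up front, so the mask cannot drift out of
    alignment with the image. Brightness is photometric and touches the image only.
    """
    do_flip = rng.random() < 0.5
    n_rot = rng.randrange(4)
    brightness = rng.uniform(0.7, 1.3)  # hypothetical jitter range

    def geometric(grid):
        if do_flip:
            grid = hflip(grid)
        for _ in range(n_rot):
            grid = rot90(grid)
        return grid

    image = [[min(255.0, v * brightness) for v in row] for row in geometric(image)]
    return image, geometric(mask)


if __name__ == "__main__":
    img, m = paired_augment([[10, 20], [30, 40]], [[1, 0], [0, 0]], random.Random(0))
    print(img, m)  # the mask's 1 stays on the pixel that started as 10
```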

Due to compute limits, I trained at a resolution of 640×640 for 16 epochs, which took 4 hours 45 minutes. This was a significant disadvantage, since SAM3 operates at (and was likely trained at) 1024×1024.

Even with the resolution disadvantage and minimal training, the specialist model outperformed SAM3 by 0.25% overall. However, the numerical results mask a fascinating visual trade-off:

- Edge Quality: Our model's predictions are currently noisier because of the short training run. When it gets things right, however, the edges are naturally feathered, which is perfect for blending.

- SAM3's Boxiness: SAM3 is extremely consistent, but its edges often look like many-vertex polygons rather than organic masks. It produces a boxy, pixelated boundary that looks artificial.

- The Hair Win: Our model outperforms SAM3 in hair regions. Despite the noise, it captures the organic flow of hair, while SAM3 often approximates these regions. This is reflected in the Mean Absolute Error (MAE), where SAM3 is 27.92% weaker.

- The Clothing Struggle: Conversely, SAM3 excels at segmenting clothing, where the boundaries are more geometric. Our model still struggles with fabric textures and shapes.

| Model | MAE | Dice Coefficient |
|---|---|---|
| ISNet | 0.0079 | 0.992 |
| SAM3 | 0.0101 | 0.9895 |
| Change (%) | -27.92 | -0.25 |

The fact that a handicapped model (lower resolution, fewer epochs) can still beat a foundation model on its strongest metric (MAE/edge precision) is a testament to domain-specific training. Scaled to 1024px and trained longer, this specialist model would likely show further gains over SAM3 for this specific use case.

Execution Details:

Conclusion

Based on this multi-domain benchmark, the data suggests a clear strategic path for production-level computer vision. While foundation models like SAM3 represent a massive leap in capability, they are best used as development accelerators rather than permanent production workers.

- Case 1 (Fixed Categories and Available Labeled Data, ~500+ samples): Train a specialist model. The accuracy, reliability, and 30x faster inference far outweigh the small upfront training time.

- Case 2 (Fixed Categories but No Labeled Data): Use SAM3 as an interactive labeling assistant (not an automatic one). SAM3 is unmatched for bootstrapping a dataset. Once you have ~500 high-quality frames, switch to a specialist model for deployment.

- Case 3 (Cold Start: No Images, No Labeled Data): Deploy SAM3 in a low-traffic shadow mode for a few weeks to collect real-world imagery. Once a representative corpus is built, train and deploy a domain-specific model, using SAM3 to speed up the annotation workflow.

Why Does the Specialist Win in Production?

1. Hardware Independence and Cost Efficiency

You don't need an H100 to ship high-quality vision. Specialist models like YOLOv11 are designed for efficiency.

- GPU Serving: A single Tesla T4 (which costs peanuts compared to an H100) can serve a large user base with sub-50 ms latency, and it can be scaled horizontally as needed.

- CPU Viability: For many workflows, CPU deployment is a viable, high-margin option. With a strong CPU pod and horizontal scaling, you can keep latency around 200 ms while keeping infrastructure complexity to a minimum.

- Optimization: Specialist models can be pruned and quantized. An optimized YOLO model on a CPU can deliver unbeatable value at fast inference speeds.
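The article does not say which quantization scheme it has in mind. As one common example, here is symmetric per-tensor int8 post-training quantization of a weight list. Each float is mapped to an integer in [-127, 127] using a single scale, which cuts storage from 4 bytes (float32) to 1 byte per weight at the cost of a rounding error of at most half the scale. Pruning is not shown, and a real deployment would rely on an export toolchain rather than hand-rolled code like this.

```python
def quantize_int8(weights):
    """Symmetric per-tensor int8 quantization: q = round(w / scale)."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0  # largest weight maps to +/-127
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map int8 codes back to approximate float weights."""
    return [v * scale for v in q]


if __name__ == "__main__":
    weights = [0.5, -1.27, 0.0, 1.0]  # hypothetical weights
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(q, scale, worst)
```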

2. Total Ownership and Reliability

When you own the model, you control the solution. You can retrain to address specific edge-case failures, tackle hallucinations, or create environment-specific weights for different clients. Running a dozen environment-tuned specialist models is often cheaper and more predictable than running one massive foundation model.

The Future Role of SAM3

SAM3 should be seen as a vision assistant. It is the ultimate tool for any use case where the categories are not fixed, such as:

- Interactive Image Editing: Where a human is driving the segmentation.

- Open-Vocabulary Search: Finding any object in a massive image or video database.

- AI-Assisted Annotation: Cutting manual labeling time.

Meta's team has created a masterpiece with SAM3, and its concept-level understanding is a game changer. However, for an engineer looking to build a scalable, cost-effective, and accurate product today, the specialized expert model remains the superior choice. I look forward to adding SAM4 to the mix in the future to see how this gap evolves.

Are you seeing foundation models replace your specialist pipelines, or is the cost still too high? Let's discuss in the comments. And if you got any value out of this, I would appreciate a share!