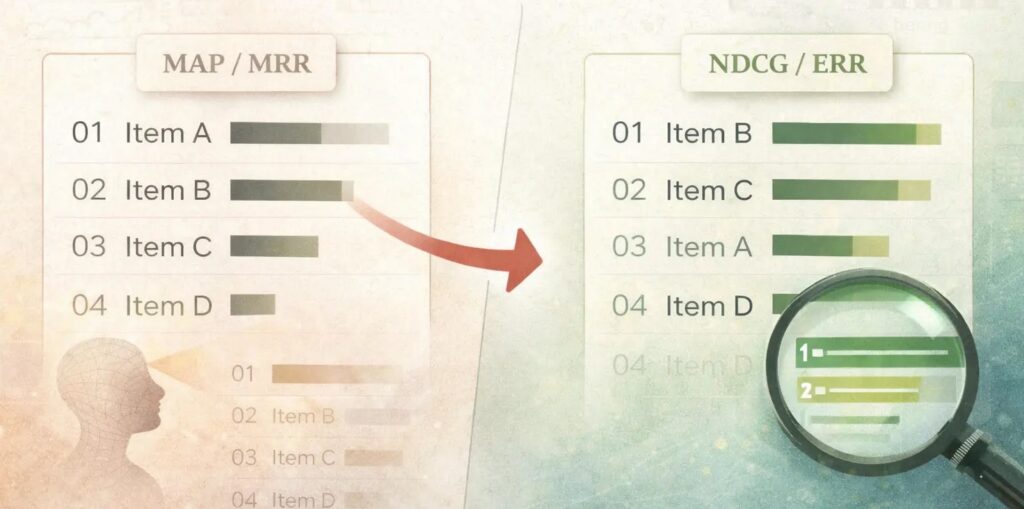

usually use Imply Reciprocal Rank (MRR) and Imply Common Precision (MAP) to evaluate the standard of their rankings. On this put up, we’ll talk about why (MAP) and (MRR) poorly aligned with fashionable person conduct in search rating. We then take a look at two metrics that function higher alternate options to (MRR) and (MAP).

What are MRR and MAP?

Imply Reciprocal Rank (MRR)

Imply Reciprocal Rank ((MRR)) is the typical rank the place the primary related merchandise happens.

$$mathrm{RR} = frac{1}{textual content{rank of first related merchandise}}$$

In e-commerce, the primary related rank might be the rank of the primary merchandise clicked in response to a question

For the above instance, assume the related merchandise is the second merchandise. This implies:

$$mathrm{Reciprocal Rank} = frac{1}{2}$$

Reciprocal rank is calculated for all of the queries within the analysis set. To get a single metric for all of the queries, we take the imply of reciprocal ranks to get the Imply Reciprocal Rank

$$mathrm{Imply Reciprocal Rank} = frac{1}{N}sum_{i=1}^N {frac{1}{textual content{Rank of First Related Merchandise}}}$$

the place (N) is the variety of queries. From this definition, we are able to see that (MRR) focuses on getting one related outcome early. It doesn’t measure what occurs after the primary related outcome.

Imply Common Precision (MAP)

Imply Common Precision ((MAP) measures how effectively the system retrieves related gadgets and the way early they’re proven. We start by first calculating Common Precision (AP) for every question. We outline AP as

$$mathrm{AP} = frac{1}Rsum_{ok=1}^{Okay}mathrm{Precision@}ok cdot mathbf{1}[text{item at } k text{ is relevant}]$$

the place (|R|) is the variety of related gadgets for the question

(mathrm{MAP}) is the common of (mathrm{AP}) throughout queries

The above equation seems rather a lot, however it’s truly easy. Let’s use an instance to interrupt it down. Assume a question has 3 related gadgets, and our mannequin predicts the next order:

Rank: 1 2 3 4 5

Merchandise: R N R N R(R = related, N = not related)

To compute the (MAP), we compute the AP at every related place:

- @1: Precision = 1/1 = 1.0

- @3: Precision = 2/3 ≈ 0.667

- @5: Precision = 3/5 = 0.6

$$mathrm{AP} = frac{1}{3}(1.0 + 0.667 + 0.6) = 0.756$$

We calculate the above for all of the queries and common them to get the (MAP). The AP method has two essential parts:

- Precision@ok: Since we use Precision, retrieving related gadgets earlier yields increased precision values. If the mannequin ranks related gadgets later, Precision@ok reduces on account of a bigger ok

- Averaging the Precisions: We common the precisions over the whole variety of related gadgets. If the system by no means retrieves an merchandise or retrieves it past the cutoff, the merchandise contributes nothing to the numerator whereas nonetheless counting within the denominator, which reduces (AP) and (MAP).

Why MAP and MRR are Dangerous for Search Rating

Now that we’ve got coated the definitions, let’s perceive why (MAP) and (MRR) should not used for search outcomes rating.

Relevance is Graded, not Binary

After we compute (MRR), we take the rank of the primary related merchandise. In (MRR), we deal with all related gadgets the identical. It makes no distinction if a distinct related merchandise exhibits up first. In actuality, completely different gadgets are inclined to have completely different relevance.

Equally, in (MAP), we use binary relevance- we merely search for the subsequent related merchandise. Once more, (MAP) makes no distinction within the relevance rating of the gadgets. In actual instances, relevance is graded, not binary.

Merchandise : 1 2 3

Relevance: 3 1 0(MAP) and (MRR) each ignore how good the related merchandise is. They fail to quantify the relevance.

Customers Scan A number of Outcomes

That is extra particular to (MRR). In (MRR) computation, we report the rank of the primary related merchandise. And ignore every little thing after. It may be good for lookups, QA, and so forth. However that is dangerous for suggestions, product search, and so forth.

Throughout search, customers don’t cease on the first related outcome (aside from instances the place there is just one appropriate response). Customers scan a number of outcomes that contribute to general search relevancy.

MAP overemphasizes recall

(MAP) computes

$$mathrm{AP} = frac{1}Rsum_{ok=1}^{Okay}mathrm{Precision@}ok cdot mathbf{1}[text{item at } k text{ is relevant}]$$

As a consequence, each related merchandise contributes to the scoring. Lacking any related merchandise hurts the scoring. When customers make a search, they don’t seem to be excited by discovering all of the related gadgets. They’re excited by discovering one of the best few choices. (MAP) optimization pushes the mannequin to study the lengthy tail of related gadgets, even when the relevance contribution is low, and customers by no means scroll that far. Therefore, (MAP) overemphasizes recall.

MAP Decays Linearly

Contemplate the instance under. We place a related merchandise at three completely different positions and compute the AP

| Rank | Precision@ok | AP |

|---|---|---|

| 1 | 1/1 = 1.0 | 1.0 |

| 3 | 1/3 ≈ 0.33 | 0.33 |

| 30 | 1/30 ≈ 0.033 | 0.033 |

AP at Rank 30 seems worse than Rank 3, which seems worse than Rank 1. The AP rating decays linearly with the rank. In actuality, Rank 3 vs Rank 30 is greater than a 10x distinction. It’s extra like seen vs not seen.

(MAP) is position-sensitive however solely weakly. It doesn’t replicate how person conduct adjustments with place. (MAP) is position-sensitive by Precision@ok, the place the decay with rank is linear. This doesn’t replicate how person consideration drops in search outcomes.

NDCG and ERR are Higher Decisions

For search outcomes rating, (NDCG) and (ERR) are higher selections. They repair the problems that (MRR) and (MAP) undergo from.

Anticipated Reciprocal Rank (ERR)

Anticipated Reciprocal Rank ((ERR)) assumes a cascade person mannequin whereby a person does the next

- Scans the listing from prime to backside

- At every rank (i),

- With likelihood (R_i), the person is happy and stops

- With likelihood (1- R_i), the person continues trying forward

(ERR) computes the anticipated reciprocal rank of this stopping place the place the person is happy:

$$mathrm{ERR} = sum_{r=1}^n frac{1}{r} cdot {R}_r cdot prod_{i=1}^{r-1}{1-{R}_i}$$

the place (R_i) is (R_i = frac{2^{l_i}-1}{2^{l_m}}) and (l_m) is the utmost potential label worth.

Let’s perceive how (ERR) is completely different from (MRR).

- (ERR) makes use of (R_i = frac{2^{l_i}-1}{2^{l_m}}), which is graded relevance, so a outcome can partially fulfill a person

- (ERR) permits for a number of related gadgets to contribute. Early high-quality gadgets scale back the contribution of later gadgets

Instance 1

We’ll take a toy instance to grasp how (ERR) and (MRR) differ

Rank : 1 2 3

Relevance: 2 3 0- (MRR) = 1 (related merchandise is at first place)

- (ERR) =

- (R_1 = {(2^2 – 1)}/{2^3} = {3}/{8})

- (R_2 ={(2^3 – 1)}/{2^3} = {7}/{8})

- (R_3 ={(2^0 – 1)}/{2^3} = 0)

- (ERR = (1/1) cdot R_1 + (1/2) cdot R_2 + (1/3) cdot R_3 = 0.648)

- (MRR) says excellent rating. (ERR) says not excellent as a result of a better relevance merchandise seems later

Instance 2

Let’s take one other instance to see how a change in rating impacts the (ERR) contribution of an merchandise. We’ll place a extremely related merchandise at completely different positions in an inventory and compute the (ERR) contribution for that merchandise. Contemplate the instances under

- Rating 1: ([8, 4, 4, 4, 4])

- Rating 2: ([4, 4, 4, 4, 8])

Lets compute

| Relevance l | 2^l − 1 | R(l) |

|---|---|---|

| 4 | 15 | 0.0586 |

| 8 | 255 | 0.9961 |

Utilizing this to compute the complete (ERR) for each the rankings, we get:

- Rating 1: (ERR) ≈ 0.99

- Rating 2: (ERR) ≈ 0.27

If we particularly take a look at the contribution of the merchandise with the relevance rating of 8, we see that the drop in contribution of that time period is 6.36x. If the penalty had been linear, the drop could be 5x.

| State of affairs | Contribution of relevance-8 merchandise |

|---|---|

| Rank 1 | 0.9961 |

| Rank 5 | 0.1565 |

| Drop issue | 6.36x |

Normalized Discounted Cumulative Achieve (NDCG)

Normalized Discounted Cumulative Achieve ((NDCG)) is one other nice selection that’s well-suited for rating search outcomes. (NDCG) is constructed on two principal concepts

- Achieve: Gadgets with increased relevance scores are value far more

- Low cost: gadgets showing later are value a lot much less since customers pay much less consideration to later gadgets

NDCG combines the concept of Achieve and Low cost to create a rating. Moreover, it additionally normalizes the rating to permit comparability between completely different queries. Formally, achieve and low cost are outlined as

- (mathrm{Achieve} = 2^{l_r}-1)

- (mathrm{Low cost} = log_2(1+r))

the place (l) is the relevance label of an merchandise at place (r) and (r) is the place at which it happens.

Achieve has an exponential kind, which rewards increased relevance. This ensures that gadgets with a better relevance rating contribute far more. The logarithmic low cost penalizes the later rating of related gadgets. Mixed and utilized to the complete ranked listing, we get the Discounted Cumulative Achieve:

$$mathrm{DCG@Okay} = sum_{r=1}^{Okay} frac{2^{l_r}-1}{mathrm{log_2(1+r)}}$$

for a given ranked listing (l_1, l_2, l_3, …l_k). (DCG@Okay) computation is useful, however the relevance labels can fluctuate in scale throughout queries, which makes evaluating (DCG@Okay) an unfair comparability. So we’d like a strategy to normalize the (DCG@Okay) values.

We do this by computing the (IDCG@Okay), which is the best discounted cumulative achieve. (IDCG) is the utmost potential (DCG) for a similar gadgets, obtained by sorting them by relevance in descending order.

$$mathrm{DCG@Okay} = sum_{r=1}^{Okay} frac{2^{l^*_r}-1}{mathrm{log_2(1+r)}}$$

(IDCG) represents an ideal rating. To normalize the (DCG@Okay), we compute

$$mathrm{NDCG@Okay} = frac{mathrm{DCG@Okay}}{mathrm{IDCG@Okay}}$$

(NDCG@Okay) has the next properties

- Bounded between 0 and 1

- Comparable throughout queries

- 1 is an ideal ordering

Instance: Good vs Barely Worse Ordering

On this instance, we’ll take two completely different rankings of the identical listing and examine their (NDCG) values. Assume we’ve got gadgets with relevance labels on a 0-3 scale.

Rating A

Rank : 1 2 3

Relevance: 3 2 1Rating B

Rank : 1 2 3

Relevance: 2 1 3Computing the (NDCG) parts, we get:

| Rank | Achieve (2^l − 1) | Low cost log₂(1 + r) | A contrib | B contrib |

|---|---|---|---|---|

| 1 | 7 | 1.00 | 7.00 | 3.00 |

| 2 | 3 | 1.58 | 1.89 | 4.42 |

| 3 | 1 | 2.00 | 0.50 | 0.50 |

- DCG(A) = 9.39

- DCG(B) = 7.92

- IDCG = 9.39

- NDCG(A) = 9.39 / 9.39 = 1.0

- NDCG(B) = 7.92 / 9.39 = 0.84

Thus, swapping a related merchandise away from rank 1 causes a big drop.

NDCG: Further Dialogue

The low cost that kinds the denominator of the (DCG) computation is logarithmic in nature. It will increase a lot slower than linearly.

$$mathrm{low cost(r)}=frac{1}{mathrm{log2(1+r)}}$$

Let’s see how this compares with the linear decay:

| Rank (r) |

Linear (1/r) |

Logarithmic (1 / log₂(1 + r)) |

|---|---|---|

| 1 | 1.00 | 1.00 |

| 2 | 0.50 | 0.63 |

| 5 | 0.20 | 0.39 |

| 10 | 0.10 | 0.29 |

| 50 | 0.02 | 0.18 |

- (1/r) decays sooner

- (1/log(1+r)) decays slower

Logarithmic low cost penalizes later ranks much less aggressively than a linear low cost. The distinction between rank 1 → 2 is bigger, however the distinction between rank 10 → 50 is small.

The log low cost has a diminishing marginal discount in penalizing later ranks on account of its concave form. This prevents (NDCG) from changing into a top-heavy metric the place ranks 1-3 dominate the rating. A linear penalty would ignore cheap selections decrease down.

A logarithmic low cost additionally displays the truth that person consideration drops sharply on the prime of the listing after which flattens out as a substitute of reducing linearly with rank.

Conclusion

(MAP) and (MRR) are helpful data retrieval metrics, however are poorly suited to fashionable search rating programs. Whereas (MAP) focuses on discovering all of the related paperwork, (MRR) treats a rating downside as a single-position metric. (MAP) and (MRR) each ignore the graded relevance of things in a search and deal with them as binary: related and never related.

(NDCG) and (ERR) higher replicate actual person conduct by accounting for a number of positions, permitting gadgets to have non-binary scores, whereas giving increased significance to prime positions. For search rating programs, these position-sensitive metrics should not only a higher choice- they’re vital.

Additional Studying

- LambdaMART (good clarification)

- Learning To Rank (extremely advocate studying this. It’s lengthy and thorough, and likewise the inspiration for this text!)