In the previous article, we introduced the core mechanism of Gradient Boosting through Gradient Boosted Linear Regression.

That example was intentionally simple. Its goal was not performance, but understanding.

Using a linear model allowed us to make every step explicit: residuals, updates, and the additive nature of the model. It also made the link with Gradient Descent very clear.

In this article, we move to the setting where Gradient Boosting really becomes useful in practice: Decision Tree Regressors.

We’ll reuse the same conceptual framework as before, but the behavior of the algorithm changes in an important way. Unlike linear models, decision trees are non-linear and piecewise constant. When they are combined through Gradient Boosting, they no longer collapse into a single model. Instead, each new tree adds structure and refines the predictions of the previous ones.

For this reason, we’ll only briefly recap the general Gradient Boosting mechanism and focus instead on what is specific to Gradient Boosted Decision Trees: how trees are trained on residuals, how the ensemble evolves, and why this approach is so powerful.

1. Machine Learning in Three Steps

We’ll again use the same three-step framework to keep the explanation consistent and intuitive.

1. Base model

We’ll use decision tree regressors as our base model.

A decision tree is non-linear by construction. It splits the feature space into regions and assigns a constant prediction to each region.

An important point is that when trees are added together, they do not collapse into a single tree.

Each new tree introduces additional structure to the model.

This is where Gradient Boosting becomes particularly powerful.

1 bis. Ensemble model

Gradient Boosting is the mechanism used to aggregate these base models into a single predictive model.

2. Model fitting

For clarity, we’ll use decision stumps, meaning trees with a depth of 1 and a single split.

Each tree is trained to predict the residuals of the previous model.

2 bis. Ensemble learning

The ensemble itself is built using gradient descent in function space.

Here, the objects being optimized are not parameters but functions, and those functions are decision trees.
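For squared loss, the link between residuals and gradient descent in function space can be written out explicitly. The sketch below assumes the loss carries a factor of 1/2, a common convention that the article does not state; m indexes the boosting iteration and \eta is the learning rate.

```latex
L\bigl(y, f(x)\bigr) = \tfrac{1}{2}\,\bigl(y - f(x)\bigr)^2
\quad\Longrightarrow\quad
-\frac{\partial L}{\partial f(x)} = y - f(x)

f_m(x) = f_{m-1}(x) + \eta\, h_m(x),
\qquad h_m(x) \approx y - f_{m-1}(x)
```

Under this convention the residual is exactly the negative gradient of the loss with respect to the prediction, so fitting a tree to the residuals and adding it to the model amounts to one gradient step.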

3. Model tuning

Decision trees have several hyperparameters, such as:

- maximum depth

- minimum number of samples required to split

- minimum number of samples per leaf

In this article, we fix the tree depth to 1.

At the ensemble level, two additional hyperparameters are essential:

- the learning rate

- the number of boosting iterations

These parameters control how fast the model learns and how complex it becomes.

2. Gradient Boosting Algorithm

The Gradient Boosting algorithm follows a simple and repetitive structure.

2.1 Algorithm overview

Here are the main steps of the Gradient Boosting algorithm:

- Initialization

Start with a constant model. For regression with squared loss, this is the average value of the target.

- Residual computation

Compute the residuals: the differences between the observed values and the current predictions.

- Fit a weak learner

Train a decision tree regressor to predict these residuals.

- Model update

Add the new tree to the current model, scaled by a learning rate.

- Repeat

Iterate until the chosen number of boosting steps is reached or the error stabilizes.
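The steps above can be sketched in a few lines of plain Python. This is a minimal illustration under simplifying assumptions, not the implementation used for the article: there is a single numeric feature, the data is made up, and the names `fit_stump` and `gradient_boost` are hypothetical helpers introduced here.

```python
def fit_stump(xs, targets):
    """Fit a depth-1 regression tree on one feature by minimizing squared error.

    Assumes xs contains at least two distinct values.
    """
    best = None
    values = sorted(set(xs))
    # Candidate thresholds are midpoints between consecutive distinct values.
    for lo, hi in zip(values, values[1:]):
        threshold = (lo + hi) / 2
        left = [t for x, t in zip(xs, targets) if x <= threshold]
        right = [t for x, t in zip(xs, targets) if x > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = (sum((t - left_mean) ** 2 for t in left)
               + sum((t - right_mean) ** 2 for t in right))
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    _, threshold, left_mean, right_mean = best
    return lambda x: left_mean if x <= threshold else right_mean


def gradient_boost(xs, ys, n_trees=50, learning_rate=0.3):
    """Boost decision stumps on residuals, starting from the target average."""
    f0 = sum(ys) / len(ys)                      # initialization
    trees = []
    preds = [f0] * len(ys)
    for _ in range(n_trees):                    # repeat
        residuals = [y - p for y, p in zip(ys, preds)]   # residual computation
        tree = fit_stump(xs, residuals)                  # fit a weak learner
        trees.append(tree)
        preds = [p + learning_rate * tree(x)             # model update
                 for x, p in zip(xs, preds)]
    return lambda x: f0 + learning_rate * sum(tree(x) for tree in trees)


# Made-up piecewise data, for illustration only.
xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [1.0, 1.2, 0.9, 3.1, 3.0, 2.9, 5.2, 5.0]

model = gradient_boost(xs, ys)
mean_y = sum(ys) / len(ys)
mse_const = sum((y - mean_y) ** 2 for y in ys) / len(ys)
mse_boost = sum((y - model(x)) ** 2 for x, y in zip(xs, ys)) / len(ys)
print("MSE of the constant model:", mse_const)
print("MSE after boosting:", mse_boost)
```

The loop stops after a fixed number of trees; stopping when the error stabilizes would require tracking the error at each iteration, which is left out to keep the sketch short.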

2.2 Dataset

To illustrate the behavior of Gradient Boosted Trees, we’ll use several types of datasets that I generated:

- Piecewise linear data, where the relationship changes by segments

- Non-linear data, such as curved patterns

- Binary targets, for classification tasks

For classification, we’ll start with the squared loss for simplicity. This allows us to reuse the same mechanics as in regression. The loss function can later be replaced by alternatives better suited to classification, such as logistic or exponential loss.

These different datasets help highlight how Gradient Boosting adapts to various data structures and loss functions while relying on the same underlying algorithm.

2.3 Initialization

The Gradient Boosting process starts with a constant model.

For regression with squared loss, this initial prediction is simply the average value of the target variable.

This average value is the best constant prediction under squared loss, before any structure is learned from the features.
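The reason the average is the best constant under squared loss can be shown in one line. In the sketch below, c denotes a candidate constant prediction and n the number of observations; both symbols are introduced here for illustration.

```latex
\frac{d}{dc}\sum_{i=1}^{n}(y_i - c)^2
= -2\sum_{i=1}^{n}(y_i - c) = 0
\quad\Longrightarrow\quad
c = \frac{1}{n}\sum_{i=1}^{n} y_i = \bar{y}
```

Setting the derivative to zero gives the target average, which is therefore the value used for f0. With a different loss function, the best constant would generally be different.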

It is also a good opportunity to recall that almost every regression model can be seen as an improvement over the global average.

- k-NN looks for similar observations and predicts with the average value of these neighbors.

- Decision Tree Regressors split the dataset into regions and compute the average value within each leaf, which is used to predict a new observation that falls into this leaf.

- Weight-based models adjust feature weights to shift the global average up or down for a given new observation.

Here, for gradient boosting, we also start with the average value. We’ll then see how it is progressively corrected.

2.4 First Tree

The first decision tree is then trained on the residuals of this initial model.

After the initialization, the residuals are just the differences between the observed values and the average.

To build this first tree, we use exactly the same procedure as in the article on Decision Tree Regressors.

The only difference is the target: instead of predicting the original values, the tree predicts the residuals.

This first tree provides the initial correction to the constant model and sets the direction for the boosting process.

2.5 Model update

Once the first tree has been trained on the residuals, we can compute the first improved prediction.

The updated model is obtained by combining the initial prediction and the first tree’s correction:

f1(x) = f0 + learning_rate * h1(x)

where:

- f0 is the initial prediction, equal to the average value of the target

- h1(x) is the prediction of the first tree trained on the residuals

- learning_rate controls how much of this correction is applied

This update step is the core mechanism of Gradient Boosting.

Each tree slightly adjusts the current predictions instead of replacing them, allowing the model to improve progressively and remain stable.
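A small worked example makes the update concrete. The four data points, the split at x = 2.5, and the learning rate of 0.5 are all made up for illustration; the split is fixed by hand rather than searched for, so the arithmetic stays easy to check.

```python
xs = [1, 2, 3, 4]
ys = [2.0, 4.0, 6.0, 8.0]
learning_rate = 0.5
split = 2.5  # hypothetical split point, chosen by hand

# Initialization: f0 is the target average.
f0 = sum(ys) / len(ys)                      # 5.0

# Residuals of the constant model.
residuals = [y - f0 for y in ys]            # [-3.0, -1.0, 1.0, 3.0]

# A stump predicts the mean residual on each side of the split.
left = [r for x, r in zip(xs, residuals) if x <= split]
right = [r for x, r in zip(xs, residuals) if x > split]
left_value = sum(left) / len(left)          # -2.0
right_value = sum(right) / len(right)       # 2.0


def h1(x):
    """First tree: piecewise-constant prediction of the residual."""
    return left_value if x <= split else right_value


# Model update: f1(x) = f0 + learning_rate * h1(x)
f1 = [f0 + learning_rate * h1(x) for x in xs]
print(f1)                                   # [4.0, 4.0, 6.0, 6.0]

sse_before = sum(r ** 2 for r in residuals)
sse_after = sum((y - p) ** 2 for y, p in zip(ys, f1))
print(sse_before, sse_after)                # 20.0 8.0
```

Only half of the tree's correction is applied, so the error drops without being removed in one step. The remaining residuals are what the next tree is trained on.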

2.6 Repeating the Process

Once the first update has been applied, the same procedure is repeated.

At each iteration, new residuals are computed using the current predictions, and a new decision tree is trained to predict these residuals. This tree is then added to the model, scaled by the learning rate.

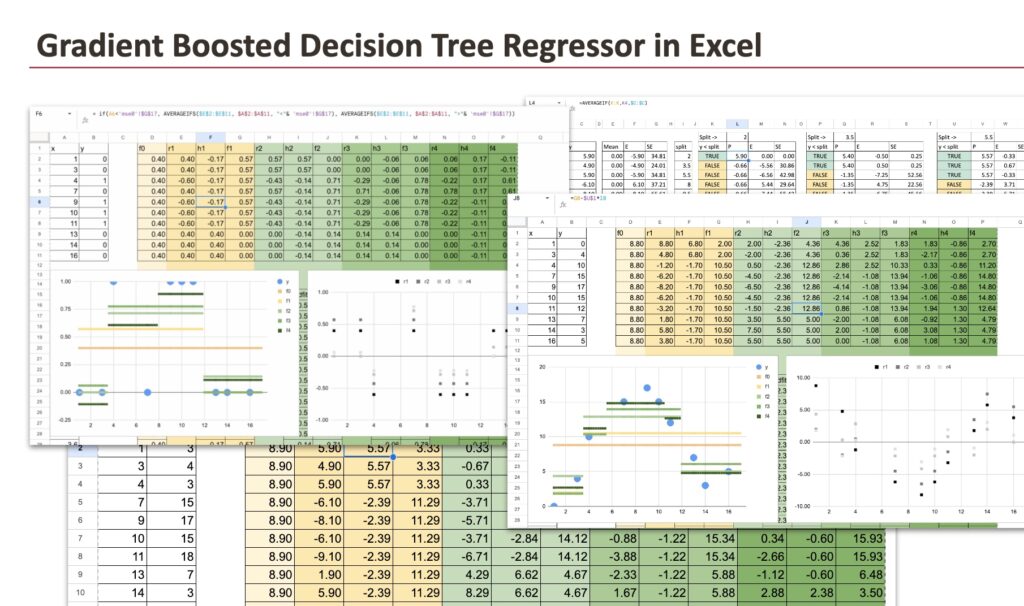

To make this process easier to follow in Excel, the formulas can be written in a way that is fully automated. Once this is done, the formulas for the second tree and all subsequent trees can simply be copied to the right.

As the iterations progress, all the predictions of the residual models are grouped together. This makes the structure of the final model very clear.

At the end, the prediction can be written in a compact form:

f(x) = f0 + eta * (h1(x) + h2(x) + h3(x) + …)

Here eta is the learning rate. This representation highlights an important idea: the final model is simply the initial prediction plus a weighted sum of residual predictions.

It also opens the door to possible extensions. For example, the learning rate does not have to be constant. It can decrease over time, following a decay schedule across iterations.

This is the same idea as learning rate decay in gradient descent or stochastic gradient descent.
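As a rough sketch of such an extension, the snippet below uses an inverse-time decay. The schedule itself, the function names `decayed_rate` and `predict`, and the parameter values are assumptions chosen for illustration; the article does not prescribe a particular schedule.

```python
def decayed_rate(initial_rate, decay, iteration):
    """Inverse-time decay: the rate shrinks as the iteration index grows."""
    return initial_rate / (1.0 + decay * iteration)


def predict(f0, tree_outputs, initial_rate=0.5, decay=0.1):
    """Combine the outputs h_m(x) of successive trees for one observation.

    Each tree gets its own rate, so the rate can no longer be factored
    out of the sum as in the constant-rate formula.
    """
    return f0 + sum(
        decayed_rate(initial_rate, decay, m) * h
        for m, h in enumerate(tree_outputs)
    )


rates = [decayed_rate(0.5, 0.1, m) for m in range(5)]
print(rates)

# Hypothetical tree outputs h1(x), h2(x), h3(x) for a single observation.
print(predict(5.0, [2.0, 1.0, 0.5]))
```

The same schedule has to be used when training and when predicting, since each tree was fitted to residuals computed with the rates applied before it.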

3. Understanding the Final Model

3.1 How the model evolves across iterations

We start with a piecewise dataset. In the visualization below, we can see all the intermediate models produced during the Gradient Boosting process.

First, we see the initial constant prediction, equal to the average value of the target.

Then comes f1, obtained after adding the first tree with a single split.

Next, f2, after adding a second tree, and so on.

Each new tree introduces a local correction. As more trees are added, the model progressively adapts to the structure of the data.

The same behavior appears with a curved dataset. Even though each individual tree is piecewise constant, their additive combination produces a fine staircase that looks like a smooth curve and follows the underlying pattern.

When applied to a binary target, the algorithm still works, but some predictions can become negative or greater than one. This is expected when using squared error loss, which treats the problem as regression and does not constrain the output range.

If probability-like outputs are required, a classification-oriented loss function, such as logistic loss, should be used instead.
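To see why logistic loss keeps the outputs in a valid range, here is a minimal sketch of the quantities involved. It assumes the model boosts a raw score (the log-odds) that is mapped to a probability with a sigmoid; the tiny label vector is made up, and full implementations usually add refinements that are not shown here.

```python
import math


def sigmoid(z):
    """Map a raw score to a value strictly between 0 and 1.

    Fine for moderate scores; very negative z would overflow math.exp.
    """
    return 1.0 / (1.0 + math.exp(-z))


ys = [0, 0, 1, 1, 1]                 # made-up binary targets

# Initialization on the log-odds scale instead of the target average.
p = sum(ys) / len(ys)                # share of positives, 0.6
f0 = math.log(p / (1 - p))           # constant raw score

# The negative gradient of the logistic loss with respect to the raw score
# is y - sigmoid(score), so trees are fitted to these pseudo-residuals.
probs = [sigmoid(f0)] * len(ys)
pseudo_residuals = [y - q for y, q in zip(ys, probs)]
print(pseudo_residuals)

# Whatever the raw score becomes after boosting, the output stays in (0, 1).
for score in (-3.0, 0.0, 7.0):
    print(score, sigmoid(score))
```

The boosting mechanics are unchanged: trees are still fitted to residual-like quantities and added to the current score. Only the loss, and therefore the definition of the residual, is different.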

In conclusion, Gradient Boosting can be applied to different types of datasets, including piecewise, non-linear, and binary cases. Regardless of the dataset, the final model remains piecewise constant by construction, since it is built as a sum of decision trees.

However, the accumulation of many small corrections allows the overall prediction to closely approximate complex patterns.

3.2 Comparison with a single decision tree

When showing these plots, a natural question often arises:

Does Gradient Boosting not end up creating a tree, just like a Decision Tree Regressor?

This impression is understandable, especially when working with a small dataset. Visually, the final prediction can look similar, which makes the two approaches harder to distinguish at first glance.

However, the difference becomes clear when we look at how the splits are computed.

A single Decision Tree Regressor is built through a sequence of splits. At each split, the available data is divided into smaller subsets. As the tree grows, each new decision is based on fewer and fewer observations, which can make the model sensitive to noise.

Once a split is made, data points that fall into different regions are no longer related. Each region is treated independently, and early decisions cannot be revised.

Gradient Boosted Trees work in a completely different way.

Each tree in the boosting process is trained using the full dataset. No observation is ever removed from the learning process. At every iteration, all data points contribute through their residuals.

This changes the behavior of the model fundamentally.

A single tree makes hard, irreversible decisions. Gradient Boosting, on the other hand, allows later trees to correct the errors made by earlier ones.

Instead of committing to one rigid partition of the feature space, the model progressively refines its predictions through a sequence of small adjustments.

This ability to revise and improve earlier decisions is one of the key reasons why Gradient Boosted Trees are both robust and powerful in practice.

3.3 General comparison with other models

Compared to a single decision tree, Gradient Boosted Trees typically produce smoother predictions, reduce overfitting, and improve generalization.

Compared to linear models, they naturally capture non-linear patterns, automatically model feature interactions (when trees are deeper than a single split), and require little manual feature engineering.

Compared to non-linear weight-based models, such as kernel methods or neural networks, Gradient Boosted Trees offer a different set of trade-offs. They rely on simple, interpretable building blocks, are less sensitive to feature scaling, and require fewer assumptions about the structure of the data. In many practical situations, they also train faster and require less tuning.

These combined properties explain why Gradient Boosted Decision Tree Regressors perform so well across a wide range of real-world applications.

Conclusion

In this article, we showed how Gradient Boosting builds powerful models by combining simple decision trees trained on residuals. Starting from a constant prediction, the model is refined step by step through small, local corrections.

We saw that this approach adapts naturally to different types of datasets and that the choice of the loss function is essential, especially for classification tasks.

By combining the flexibility of trees with the stability of boosting, Gradient Boosted Decision Trees achieve strong performance in practice while remaining conceptually simple and interpretable.