of my Machine Learning “Advent Calendar”. I would like to thank you for your support.

I’ve been building these Google Sheet files for years. They evolved little by little. But when it’s time to publish them, I always need hours to reorganize everything, clean up the layout, and make them pleasant to read.

Today, we move to DBSCAN.

DBSCAN Does Not Learn a Parametric Model

Just like LOF, DBSCAN is not a parametric model. There is no formula to store, no rules, no centroids, and nothing compact to reuse later.

We must keep the whole dataset because the density structure depends on all points.

Its full name is Density-Based Spatial Clustering of Applications with Noise.

But be careful: this “density” is not a Gaussian density.

It is a count-based notion of density. Just “how many neighbors live close to me”.

Why DBSCAN Is Special

As its name indicates, DBSCAN does two things at the same time:

- it finds clusters

- it marks anomalies (the points that do not belong to any cluster)

This is exactly why I present the algorithms in this order:

- k-means and GMM are clustering models. They output a compact object: centroids for k-means, means and variances for GMM.

- Isolation Forest and LOF are pure anomaly detection models. Their only goal is to find unusual points.

- DBSCAN sits in between. It does both clustering and anomaly detection, based only on the notion of neighborhood density.

A Tiny Dataset to Keep Things Intuitive

We stay with the same tiny dataset that we used for LOF: 1, 2, 3, 7, 8, 12

If you look at these numbers, you already see two compact groups:

one around 1–2–3, another around 7–8, and 12 living alone.

DBSCAN captures exactly this intuition.

Summary in 3 Steps

DBSCAN asks three simple questions for each point:

- How many neighbors do you have within a small radius (eps)?

- Do you have enough neighbors to become a Core point (minPts)?

- Once we know the Core points, to which connected group do you belong?

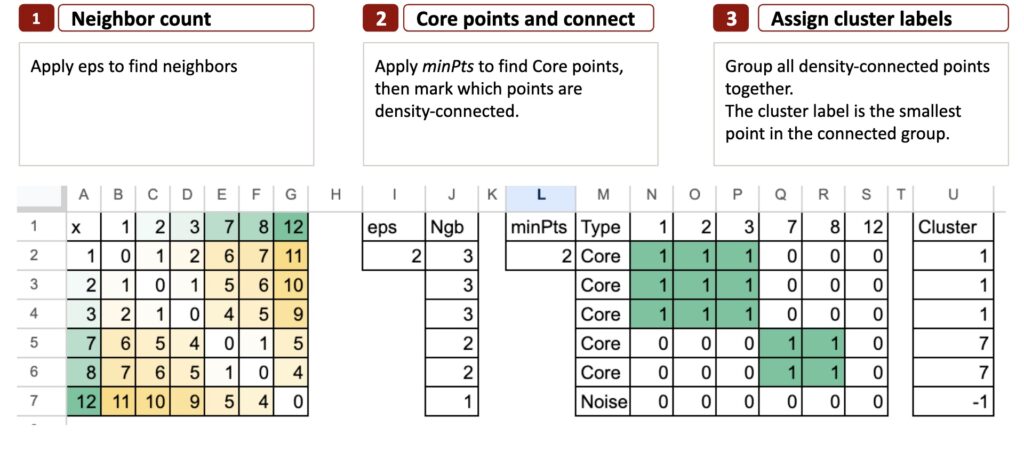

Here is the summary of the DBSCAN algorithm in 3 steps:

Let us begin step by step.

DBSCAN in 3 steps

Now that we understand the idea of density and neighborhoods, DBSCAN becomes very easy to describe.

Everything the algorithm does fits into three simple steps.

Step 1 – Count the neighbors

The goal is to check how many neighbors each point has.

We take a small radius called eps.

For each point, we look at all other points and mark those whose distance is less than eps.

These are the neighbors.

This gives us the first idea of density:

a point with many neighbors is in a dense region,

a point with few neighbors lives in a sparse region.

For a 1-dimensional toy example like ours, a common choice is:

eps = 2

We draw a little interval of radius 2 around each point.

Why is it called eps?

The name eps comes from the Greek letter ε (epsilon), which is traditionally used in mathematics to represent a small quantity or a small radius around a point.

So in DBSCAN, eps is literally “the small neighborhood radius”.

It answers the question:

How far do we look around each point?

So in Excel, the first step is to compute the pairwise distance matrix, then count how many neighbors each point has within eps.
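The same computation can be sketched in a few lines of plain Python. This is only an illustration, not the spreadsheet itself: the names `points`, `distance_matrix` and `neighbor_counts` are chosen here for the example. It also assumes that a point counts as its own neighbor (scikit-learn's `min_samples` also includes the point itself) and that the boundary is inclusive (distance <= eps).

```python
# Toy dataset from the article and the radius used in the text.
points = [1, 2, 3, 7, 8, 12]
eps = 2

# Pairwise distance matrix: in 1D the distance is the absolute difference.
distance_matrix = [[abs(p - q) for q in points] for p in points]

# Assumption: a point is its own neighbor (distance 0) and the
# boundary is inclusive (distance <= eps).
neighbor_counts = [sum(d <= eps for d in row) for row in distance_matrix]

for p, count in zip(points, neighbor_counts):
    print(f"point {p:>2}: {count} neighbor(s) within eps={eps}")
```

With these assumptions, 1, 2 and 3 each see three points, 7 and 8 see two, and 12 sees only itself.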

Step 2 – Core Points and Density Connectivity

Now that we know the neighbors from Step 1, we apply minPts to decide which points are Core.

minPts here means minimum number of points.

It is the smallest number of neighbors a point must have (inside the eps radius) to be considered a Core point.

A point is Core if it has at least minPts neighbors within eps.

Otherwise, it may become Border or Noise.

With eps = 2 and minPts = 2, the point 12 is not Core.

Once the Core points are identified, we simply check which points are density-reachable from them. If a point can be reached by moving from one Core point to another within eps, it belongs to the same group.

In Excel, we can represent this as a simple connectivity table that shows which points are connected by Core neighbors.

This connectivity is what DBSCAN uses to form clusters in Step 3.
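Here is a minimal sketch of Step 2 in plain Python, under the same assumptions as before: a point counts itself in its own neighborhood, and the boundary is inclusive. The names `is_neighbor`, `is_core` and `connectivity` are illustrative and do not come from the spreadsheet.

```python
points = [1, 2, 3, 7, 8, 12]
eps = 2
min_pts = 2  # called minPts in the text


def is_neighbor(p, q):
    # Assumption: inclusive boundary; a point is its own neighbor.
    return abs(p - q) <= eps


# A point is Core if its eps-neighborhood holds at least min_pts points.
is_core = {p: sum(is_neighbor(p, q) for q in points) >= min_pts for p in points}

# Connectivity table: row p, column q is True when q can be reached
# directly from p, which requires p to be Core and q to lie within eps.
connectivity = {
    p: {q: is_core[p] and is_neighbor(p, q) for q in points}
    for p in points
}

print("Core points:", [p for p in points if is_core[p]])
for p in points:
    print(f"{p:>2}", [int(connectivity[p][q]) for q in points])
```

The row for 12 contains only zeros: it is not Core, so nothing is reached through it, and no Core point lies within eps of it either.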

Step 3 – Assign cluster labels

The goal is to turn connectivity into actual clusters.

Once the connectivity matrix is ready, the clusters appear naturally.

DBSCAN simply groups all connected points together.

To give each group a simple and reproducible name, we use a very intuitive rule:

The cluster label is the smallest point in the connected group.

For instance:

- Group {1, 2, 3} becomes cluster 1

- Group {7, 8} becomes cluster 7

- A point like 12 with no Core neighbors becomes Noise

This is exactly what we will display in Excel using formulas.
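Outside Excel, the labeling rule can be sketched in plain Python as follows. This is one possible way to walk the connected groups, not a transcription of the spreadsheet formulas. It assumes distinct point values, an inclusive boundary, and that a point counts itself as a neighbor. The helper `neighbors` and the variable names are made up for this example.

```python
points = [1, 2, 3, 7, 8, 12]
eps = 2
min_pts = 2


def neighbors(p):
    # Assumption: inclusive boundary; a point is its own neighbor.
    return [q for q in points if abs(p - q) <= eps]


core = {p for p in points if len(neighbors(p)) >= min_pts}

labels = {}
for start in sorted(core):
    if start in labels:
        continue  # already absorbed into an earlier group
    # Collect everything reachable by hopping between Core points.
    group, frontier = set(), [start]
    while frontier:
        p = frontier.pop()
        if p in group or p in labels:
            continue
        group.add(p)
        if p in core:  # only Core points extend the group
            frontier.extend(neighbors(p))
    label = min(group)  # naming rule from the text: smallest point
    for p in group:
        labels[p] = label

# Points never reached from a Core point are Noise.
result = {p: labels.get(p, "Noise") for p in points}
print(result)
# → {1: 1, 2: 1, 3: 1, 7: 7, 8: 7, 12: 'Noise'}
```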

Final thoughts

DBSCAN is perfect to teach the idea of local density.

There is no probability, no Gaussian formula, no estimation step.

Just distances, neighbors, and a small radius.

But this simplicity also limits it.

Because DBSCAN uses one fixed radius for everyone, it cannot adapt when the dataset contains clusters of different scales.
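To make this limitation concrete, here is a small sketch on made-up data: two tight groups (spacing 1) and one loose group (spacing 20). The function `dbscan_1d` is a compact, illustrative version of the three steps, with the same assumptions as in the text's toy example (distinct values, inclusive boundary, a point counts itself). It is not a reference implementation.

```python
def dbscan_1d(points, eps, min_pts):
    """Label each 1D point with the smallest point of its group, or "Noise"."""

    def neighbors(p):
        # Assumption: inclusive boundary; a point is its own neighbor.
        return [q for q in points if abs(p - q) <= eps]

    core = {p for p in points if len(neighbors(p)) >= min_pts}
    labels = {}
    for start in sorted(core):
        if start in labels:
            continue
        group, frontier = set(), [start]
        while frontier:
            p = frontier.pop()
            if p in group or p in labels:
                continue
            group.add(p)
            if p in core:  # only Core points extend the group
                frontier.extend(neighbors(p))
        for p in group:
            labels[p] = min(group)
    return {p: labels.get(p, "Noise") for p in points}


# Hypothetical data: two tight groups and one loose group.
data = [0, 1, 2, 3, 10, 11, 12, 13, 100, 120, 140, 160]

small = dbscan_1d(data, eps=5, min_pts=2)   # radius suited to the tight groups
large = dbscan_1d(data, eps=20, min_pts=2)  # radius suited to the loose group

print("eps=5 :", small)
print("eps=20:", large)
```

With eps = 5, the two tight groups are found but every point of the loose group is Noise. With eps = 20, the loose group is found but the two tight groups merge into one. In this example, neither of the two radii recovers all three groups.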

HDBSCAN keeps the same intuition, but looks at all radii and keeps what remains stable.

It is much more robust, and much closer to how humans naturally see clusters.