While AI bubble talk fills the air these days, with fears of overinvestment that could pop at any time, something of a contradiction is brewing on the ground: Companies like Google and OpenAI can barely build infrastructure fast enough to meet their AI needs.

During an all-hands meeting earlier this month, Google’s AI infrastructure head Amin Vahdat told employees that the company must double its serving capacity every six months to meet demand for artificial intelligence services, reports CNBC. The comments offer a rare look at what Google executives are telling the company's own employees internally. Vahdat, a vice president at Google Cloud, presented slides to employees showing the company needs to scale “the next 1000x in 4-5 years.”
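As a rough sanity check on how those two figures relate, the sketch below compounds a doubling every six months and compares the result with the 1000x target. It assumes steady, uninterrupted doubling, which the reported remarks do not spell out, and the function names are illustrative rather than anything from Google's presentation.

```python
import math

# Assumption: capacity doubles exactly once every six months, with no pauses.
DOUBLING_PERIOD_YEARS = 0.5


def growth_factor(years: float) -> float:
    """Capacity multiple after `years` of steady doubling."""
    return 2 ** (years / DOUBLING_PERIOD_YEARS)


def years_to_reach(multiple: float) -> float:
    """Years of steady doubling needed to reach a given capacity multiple."""
    return math.log2(multiple) * DOUBLING_PERIOD_YEARS


for years in (4, 4.5, 5):
    print(f"{years} years -> {growth_factor(years):,.0f}x")

print(f"1000x takes about {years_to_reach(1000):.2f} years")
```

Under that assumption, four years of doubling yields 256x and five years yields 1,024x, so the 1000x figure lines up with the upper end of the quoted 4-5 year window.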

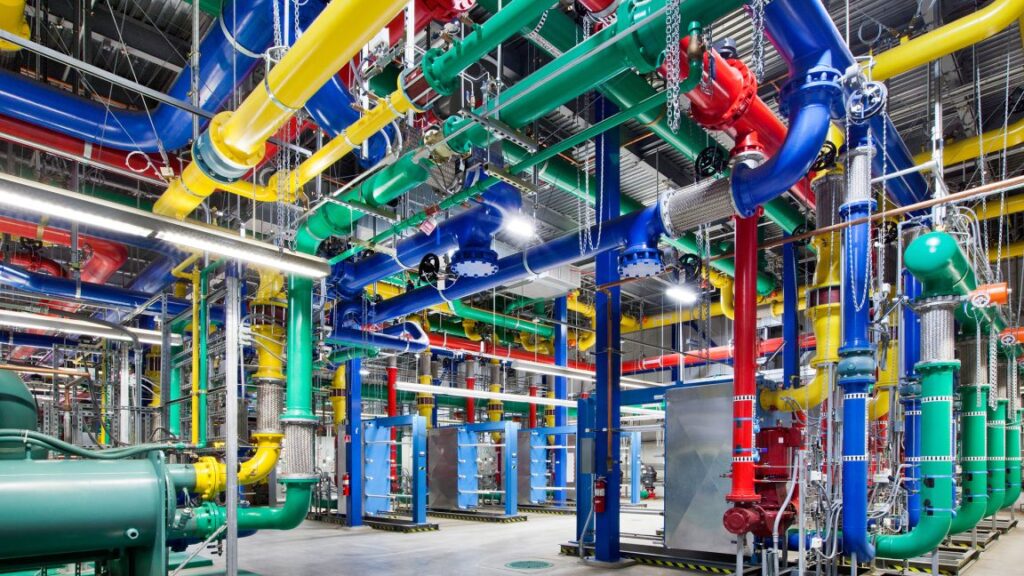

While a thousandfold increase in compute capacity sounds ambitious by itself, Vahdat noted some key constraints: Google needs to be able to deliver this increase in capability, compute, and storage networking “for essentially the same cost and increasingly, the same power, the same energy level,” he told employees during the meeting. “It won’t be easy but through collaboration and co-design, we’re going to get there.”

It’s unclear how much of this “demand” Google mentioned represents organic user interest in AI capabilities versus the company integrating AI features into existing services like Search, Gmail, and Workspace. But whether users are using the features voluntarily or not, Google isn’t the only tech company struggling to keep up with a growing base of customers using AI services.

Major tech companies are in a race to build out data centers. Google competitor OpenAI is planning to build six massive data centers across the US through its Stargate partnership project with SoftBank and Oracle, committing over $400 billion in the next three years to reach nearly 7 gigawatts of capacity. The company faces similar constraints serving its 800 million weekly ChatGPT users, with even paid subscribers regularly hitting usage limits for features like video synthesis and simulated reasoning models.

“The competition in AI infrastructure is the most critical and also the most expensive part of the AI race,” Vahdat said at the meeting, according to CNBC’s viewing of the presentation. The infrastructure executive explained that Google’s challenge goes beyond simply outspending competitors. “We’re going to spend a lot,” he said, but noted the real objective is building infrastructure that’s “more reliable, more performant and more scalable than what’s available anywhere else.”