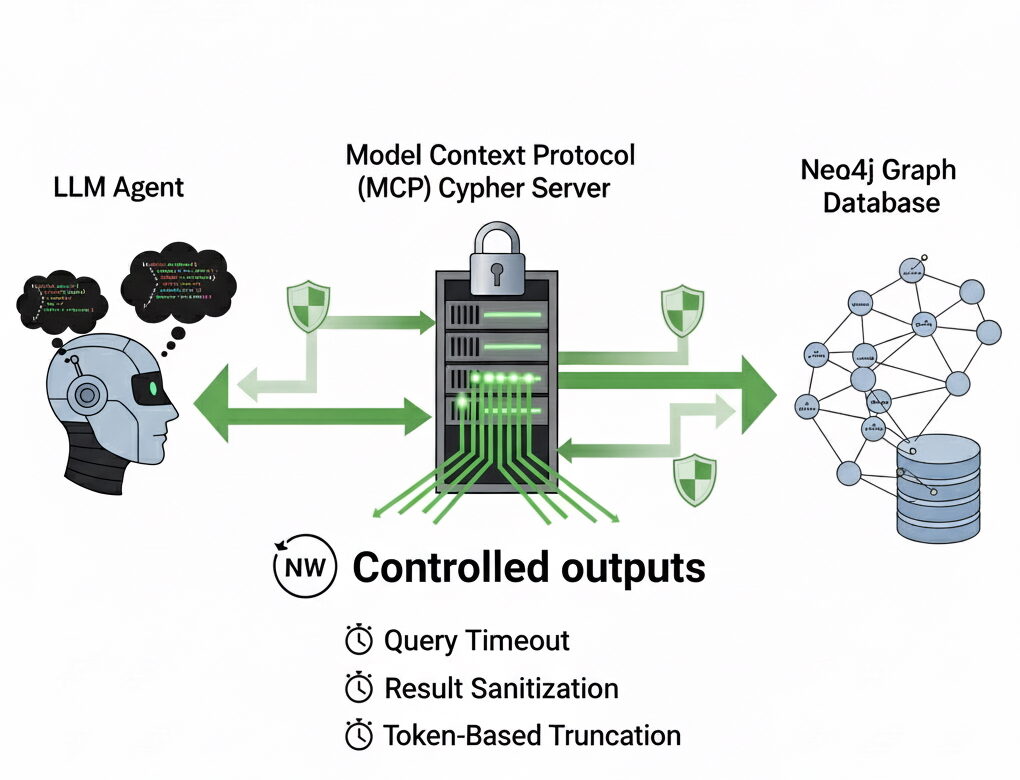

Language models connected to your Neo4j graph gain incredible flexibility: they can generate any Cypher query through the Neo4j MCP Cypher server. This makes it possible to dynamically generate complex queries, explore the database structure, and even chain multi-step agent workflows.

To generate meaningful queries, the LLM needs the graph schema as input: the node labels, relationship types, and properties that define the data model. With this context, the model can translate natural language into precise Cypher, discover connections, and chain together multi-hop reasoning.

For example, if it knows about the (Person)-[:ACTED_IN]->(Movie) and (Person)-[:DIRECTED]->(Movie) patterns in the graph, it can turn "Which movies feature actors who also directed?" into a valid query. The schema gives it the grounding it needs to adapt to any graph and produce Cypher statements that are both correct and relevant.
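To make that concrete, here is a sketch of how a schema summary might be rendered into prompt text, together with a query the model could write from it. The `SCHEMA` dict, the `schema_to_prompt` helper, and the `name`/`title` properties are assumptions modeled on Neo4j's example Movies graph. In practice the schema would be fetched from the database rather than hard-coded. The query uses Neo4j 5 Cypher syntax.

```python
# Hypothetical schema summary modeled on Neo4j's example Movies graph.
SCHEMA = {
    "nodes": {"Person": ["name", "born"], "Movie": ["title", "released"]},
    "relationships": [
        ("Person", "ACTED_IN", "Movie"),
        ("Person", "DIRECTED", "Movie"),
    ],
}


def schema_to_prompt(schema: dict) -> str:
    """Render node labels, their properties, and relationship patterns as prompt text."""
    lines = ["Node labels and properties:"]
    for label, props in schema["nodes"].items():
        lines.append(f"  (:{label}) {{{', '.join(props)}}}")
    lines.append("Relationship patterns:")
    for start, rel_type, end in schema["relationships"]:
        lines.append(f"  (:{start})-[:{rel_type}]->(:{end})")
    return "\n".join(lines)


# Cypher the model could produce for "Which movies feature actors who also directed?",
# using only labels and relationship types that appear in the schema above.
CANDIDATE_QUERY = """
MATCH (p:Person)-[:ACTED_IN]->(m:Movie)
WHERE EXISTS { (p)-[:DIRECTED]->(:Movie) }
RETURN m.title AS movie, collect(DISTINCT p.name) AS actor_directors
"""
```

The query reads "actors who also directed" as actors who have directed at least one movie. Matching `(p)-[:DIRECTED]->(m)` instead would restrict the result to actors who directed that same film.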

But this freedom comes at a cost. Left unchecked, an LLM can produce Cypher that runs far longer than intended or returns massive datasets with deeply nested structures. The result is not just wasted computation but also a serious risk of overwhelming the model itself. At the moment, every tool invocation returns its output through the LLM's context. That means when you chain tools together, all the intermediate results must flow back through the model. Returning thousands of rows or embedding-like values into that loop quickly turns into noise, bloating the context window and degrading the quality of the reasoning that follows.

This is why throttling responses matters. Without controls, the same power that makes the Neo4j MCP Cypher server so compelling also makes it fragile. By introducing timeouts, output sanitization, row limits, and token-aware truncation, we can keep the system responsive and ensure that query results stay useful to the LLM instead of drowning it in irrelevant detail.

Disclaimer: I work at Neo4j, and this reflects my exploration of potential future improvements to the current implementation.

The server is available on GitHub.

Managed outputs

So how do we prevent runaway queries and oversized responses from overwhelming our LLM? The answer is not to limit what kinds of Cypher an agent can write, because the whole point of the Neo4j MCP server is to expose the full expressive power of the graph. Instead, we place smart constraints on how much comes back and how long a query is allowed to run. In practice, that means introducing three layers of protection: timeouts, result sanitization, and token-aware truncation.

Query timeouts

The first safeguard is simple: every query gets a time budget. If the LLM generates something expensive, like a huge Cartesian product or a traversal across millions of nodes, it fails fast instead of hanging the whole workflow.

We expose this as an environment variable, QUERY_TIMEOUT, which defaults to 10 seconds. Internally, queries are wrapped in neo4j.Query with the timeout applied, so reads and writes respect the same bound. This change alone makes the server much more robust.

Sanitizing noisy values

Modern graphs often attach embedding vectors to nodes and relationships. These vectors can hold hundreds or even thousands of floating-point numbers per entity. They're essential for similarity search, but in an LLM context they're pure noise: the model can't reason over them directly, and they consume a huge number of tokens.

To solve this, we recursively sanitize results with a simple Python function. Oversized lists are dropped, nested dicts are pruned, and only values that fit within a reasonable bound (by default, lists under 52 items) are preserved.
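The server's exact function isn't reproduced here. As a minimal sketch, assuming query results arrive as plain Python dicts and lists (for example from `record.data()`), a recursive sanitizer could look like this. The names `sanitize`, `_prune`, and `list_limit` are illustrative. The default of 52 follows the bound stated above.

```python
from typing import Any

_DROP = object()  # sentinel meaning "remove this value", distinct from a legitimate None


def _prune(value: Any, list_limit: int) -> Any:
    if isinstance(value, dict):
        pruned = {}
        for key, item in value.items():
            cleaned = _prune(item, list_limit)
            if cleaned is not _DROP:
                pruned[key] = cleaned
        return pruned
    if isinstance(value, list):
        if len(value) >= list_limit:
            return _DROP  # e.g. an embedding vector: too long to help the LLM
        kept = [_prune(item, list_limit) for item in value]
        return [item for item in kept if item is not _DROP]
    return value  # scalars (str, int, float, bool, None) pass through unchanged


def sanitize(value: Any, list_limit: int = 52) -> Any:
    """Recursively drop lists with list_limit or more items from a query result.

    Returns None if the top-level value is itself an oversized list.
    """
    cleaned = _prune(value, list_limit)
    return None if cleaned is _DROP else cleaned
```

The sketch drops the whole list rather than truncating it, because a partial embedding is as meaningless to the model as a full one. The sentinel lets properties that are legitimately `null` survive sanitization.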

Token-aware truncation

Finally, even sanitized results can be verbose. To make sure they always fit, we run them through a tokenizer and cut them down to a maximum of 2048 tokens, using OpenAI's tiktoken library.

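```python
import tiktoken


def truncate_tokens(payload: str, max_tokens: int = 2048) -> str:
    """Keep at most max_tokens tokens of payload, counted with the GPT-4 tokenizer."""
    encoding = tiktoken.encoding_for_model("gpt-4")
    tokens = encoding.encode(payload)
    return encoding.decode(tokens[:max_tokens])
```

This final step keeps every response within a fixed budget, no matter how large the intermediate data is or which LLM you connect (the GPT-4 tokenizer only approximates token counts for other models). It's a safety net that catches anything the earlier layers didn't filter, so the context is never overwhelmed.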

YAML response format

Additionally, we can reduce the context size further by using YAML responses. At the moment, Neo4j Cypher MCP responses are returned as JSON, which introduces extra overhead in the form of quotes, braces, and commas that all cost tokens. By converting these dictionaries to YAML, we can reduce the number of tokens in our prompts, lowering costs and improving latency.

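```python
import yaml

# response: the sanitized query result (nested dicts and lists)
payload = yaml.dump(
    response,
    default_flow_style=False,  # block style instead of inline {...} and [...]
    sort_keys=False,           # preserve the key order returned by the query
    width=float("inf"),        # never wrap long string values onto new lines
    indent=2,                  # most compact option: PyYAML ignores indents outside 2-9
    allow_unicode=True,        # emit non-ASCII text as-is instead of escaping it
)
```

Tying it together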

With these layers combined (timeouts, sanitization, and truncation), the Neo4j MCP Cypher server stays fully capable but far more disciplined. The LLM can still attempt any query, but the responses are always bounded and context-friendly. Using YAML as the response format also helps lower the token count.

Instead of flooding the model with large amounts of data, you return just enough structure to keep it smart. And that, in the end, is the difference between a server that feels brittle and one that feels purpose-built for LLMs.

The code for the server is available on GitHub.