GPT-4o-based agents led by a human collaborated to develop experimentally validated nanobodies against SARS-CoV-2. Discover with me how we’re entering the era of automated reasoning and work with actionable outputs, and let’s imagine how this can go even further when robotic lab technicians can also carry out the wet-lab parts of any research project!

In an era where artificial intelligence continues to reshape how we code, write, and even reason, a new frontier has emerged: AI conducting actual scientific research, as several companies (from major established players like Google to dedicated spin-offs) are trying to achieve. And we aren’t talking just about simulations, automated summarization, data crunching, or theoretical outputs, but about actually producing experimentally validated materials, such as biological designs with potential clinical relevance, as in the case that I bring you today.

That future just got much closer; very close indeed!

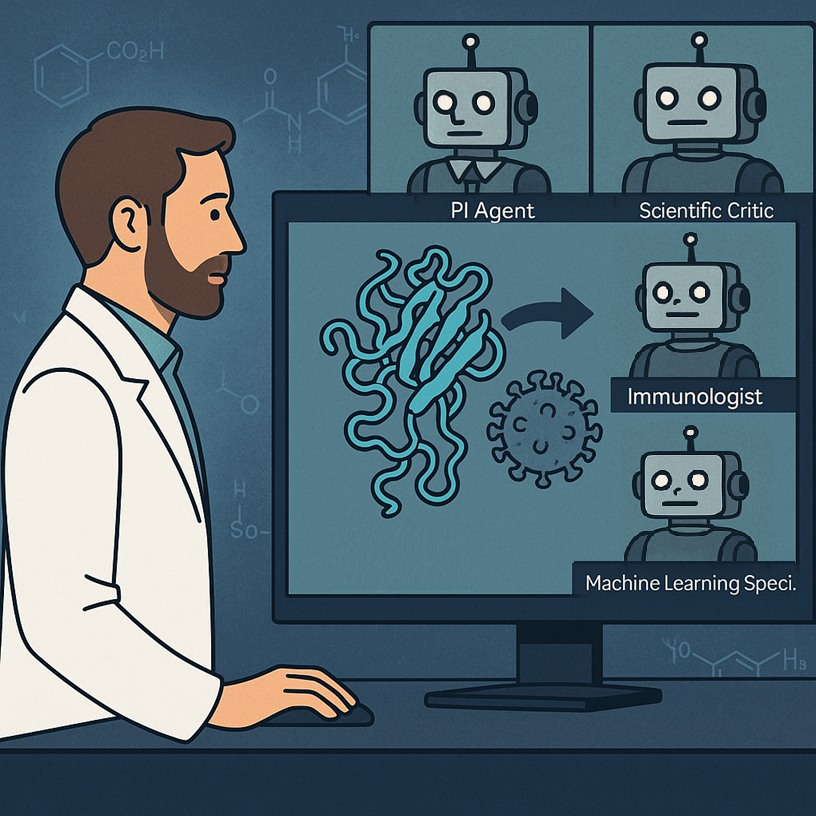

In a groundbreaking paper just published in Nature by researchers from Stanford and the Chan Zuckerberg Biohub, a novel system called the Virtual Lab demonstrated that a human researcher working with a team of large language model (LLM) agents can design new nanobodies (tiny, antibody-like proteins that can bind other proteins to block their function) to target fast-mutating variants of SARS-CoV-2. This was not just a narrow chatbot interaction or a tool-assisted paper; it was an open-ended, multi-phase research process led and executed by AI agents, each with a specialized expertise and role, resulting in real-world validated biological molecules that could perfectly well move on to downstream studies for actual applicability in disease treatment (in this case Covid-19).

Let’s delve into it to see how this serious, replicable approach to AI-human (and actually AI-AI) collaborative science works.

From “simple” applications to materializing a full-staff AI lab

Although there are some precedents to this, the new system is unlike anything before it. And one of the coolest things is that it’s not based on a special-purpose trained LLM or tool; rather, it uses GPT-4o instructed with prompts that make it play the role of the different kinds of people usually involved in a research team.

Until recently, the role of LLMs in science was limited to question-answering, summarizing, writing support, coding and perhaps some direct data analysis. Useful, yes, but not transformative. The Virtual Lab presented in this new Nature paper changes that by elevating LLMs from assistants to autonomous researchers that interact with one another and with a human user (who brings the research question, runs experiments when required, and eventually concludes the project) in structured meetings to explore, hypothesize, code, analyze, and iterate.

The core idea at the heart of this work was indeed to simulate an interdisciplinary lab staffed by AI agents. Each agent has a scientific role (say, immunologist, computational biologist, or machine learning specialist) and is instantiated from GPT-4o with a “persona” crafted via careful prompt engineering. These agents are led by a Principal Investigator (PI) Agent and monitored by a Scientific Critic Agent, both virtual agents.
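
To make the “persona” idea concrete, here is a minimal sketch of how such agents could be described as plain data that is turned into a system prompt. The field names (`title`, `expertise`, `goal`) and the prompt wording are my own illustrative assumptions, not the prompts used in the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Agent:
    """One LLM persona. All field names are illustrative assumptions."""
    title: str
    expertise: str
    goal: str

    def system_prompt(self) -> str:
        # The persona is just text that would be prepended to every
        # conversation with the underlying model (GPT-4o in the article).
        return (
            f"You are a {self.title}. "
            f"Your expertise is in {self.expertise}. "
            f"Your goal is to {self.goal}."
        )


# Hypothetical wording for the two agents the human defines up front.
pi = Agent(
    "Principal Investigator",
    "running an interdisciplinary research lab",
    "lead the team and make the final decisions",
)
critic = Agent(
    "Scientific Critic",
    "reviewing scientific reasoning and code",
    "challenge assumptions and point out errors",
)

# Hypothetical wording for the three specialists named in the article.
team = [
    Agent("Immunologist", "antibody and nanobody biology",
          "advise on which binders are biologically sensible"),
    Agent("Machine Learning Specialist", "protein language models",
          "choose and apply models that score mutations"),
    Agent("Computational Biologist", "structure prediction and docking",
          "evaluate predicted complexes and binding energies"),
]

for agent in [pi, *team, critic]:
    print(agent.system_prompt())
```

Keeping the persona as data rather than hard-coded strings makes it easy to add or swap specialists for a different research question.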

The Critic Agent challenges assumptions and pinpoints errors, acting as the lab’s skeptical reviewer; as the paper explored, this was a key element of the workflow, without which too many errors and oversights occurred, to the detriment of the project.

The human researcher sets high-level agendas, injects domain constraints, and ultimately runs the outputs (especially the wet-lab experiments). But the “thinking” (maybe I should start considering removing the quotation marks?) is done by the agents.

How it all worked

The Virtual Lab was tasked with solving a real and urgent biomedical challenge: design new nanobody binders for the KP.3 and JN.1 variants of SARS-CoV-2, which had evolved resistance to existing treatments. Instead of starting from scratch, the AI agents decided (yes, they took this decision by themselves) to mutate existing nanobodies that were effective against the ancestral strain but no longer worked as well. Yes, they chose that!

As all interactions are tracked and documented, we can see exactly how the team moved forward with the project.

Initially, the human defined only the PI and Critic agents. The PI agent then created the scientific team by spawning specialized agents. In this case they were an Immunologist, a Machine Learning Specialist and a Computational Biologist. In a team meeting, the agents debated whether to design antibodies or nanobodies, and whether to design from scratch or mutate existing ones. They chose nanobody mutation, justified by faster timelines and available structural data. They then discussed what tools to use and how to implement them. They went for the ESM protein language model coupled to AlphaFold-Multimer for structure prediction and Rosetta for binding energy calculations. For implementation, the agents decided to go with Python code. Very interestingly, the code needed to be reviewed by the Critic agent multiple times, and was refined through multiple asynchronous meetings.

From the meetings and multiple runs of code, an exact strategy was devised on how to propose the final set of mutations to be tested. Briefly, for the bioinformaticians reading this post, the PI agent designed an iterative pipeline that uses ESM to score all point mutations on a nanobody sequence by log-likelihood ratio, selects top mutants to predict their structures in complex with the target protein using AlphaFold-Multimer, scores the interfaces via ipLDDT, then uses Rosetta to estimate binding energy, and finally combines the scores to come up with a ranking of all proposed mutations. This was actually repeated in a cycle, to introduce more mutations as needed.
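
For readers who want to see the shape of that scoring logic, here is a toy sketch. The log-likelihood ratio of a point mutation is log p(mutant residue) minus log p(wild-type residue) at that position. In the real pipeline those probabilities would come from ESM, and the structural scores from AlphaFold-Multimer and Rosetta; below they are hard-coded toy numbers. The function names and the weights in `combined_score` are my assumptions for illustration, not values confirmed by the article.

```python
import math


def log_likelihood_ratios(wild_type, position_probs):
    """Score every point mutation as log p(mutant) - log p(wild type).

    position_probs[i] maps amino acid -> probability at position i.
    A protein language model would supply these; here it is toy data.
    """
    scores = {}
    for i, (wt, probs) in enumerate(zip(wild_type, position_probs)):
        for aa, p in probs.items():
            if aa != wt:
                # Label mutations in the usual style, e.g. "V2I".
                scores[f"{wt}{i + 1}{aa}"] = math.log(p) - math.log(probs[wt])
    return scores


def combined_score(llr, iplddt, binding_energy, weights=(0.2, 0.5, 0.3)):
    """Weighted sum of the three scores (weights are illustrative placeholders).

    Higher LLR and higher interface pLDDT are better; a lower (more negative)
    binding energy is better, so it enters with a minus sign.
    """
    w_llr, w_if, w_dg = weights
    return w_llr * llr + w_if * iplddt - w_dg * binding_energy


# Toy two-residue "nanobody" and toy per-position probabilities.
wild_type = "QV"
position_probs = [
    {"Q": 0.5, "E": 0.25, "K": 0.25},
    {"V": 0.2, "I": 0.6, "L": 0.2},
]
llr = log_likelihood_ratios(wild_type, position_probs)

# Toy structural scores: (interface pLDDT, binding energy) per mutant.
structure_scores = {"V2I": (80.0, -30.0), "V2L": (70.0, -35.0)}

ranking = sorted(
    structure_scores,
    key=lambda m: combined_score(llr[m], *structure_scores[m]),
    reverse=True,
)
print(ranking)
```

Note that the three scores live on different scales, so any real weighting has to be chosen with that in mind.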

The computational pipeline generated 92 nanobody sequences that were synthesized and tested in a real-world lab, finding that most of them were actually proteins that can be produced and handled. Two of these proteins gained affinity to the SARS-CoV-2 proteins they were designed to bind, both to recent mutants and to ancestral forms.

These success rates are similar to those coming from analogous projects run in traditional form (that is, executed by humans), but they took much, much less time to conclude. And it hurts to say it, but I’m pretty sure the Virtual Lab entailed much lower costs overall, as it involved far fewer people (hence salaries).

Like in a human team of scientists: meetings, roles, and collaboration

We saw above how the Virtual Lab mimics how human science happens: via structured interdisciplinary meetings. Each meeting is either a “Team Meeting”, where multiple agents discuss broad questions (the PI starts, others contribute, the Critic reviews, and the PI summarizes and decides); or an “Individual Meeting”, where a single agent (with or without the Critic) works on a specific task, e.g., writing code or scoring outputs.
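
The turn order of a Team Meeting can be sketched in a few lines. In this sketch `speak` stands in for a call to the LLM with the speaker’s persona and the transcript so far; here it is a stub that needs no API key. The function names and the `rounds` parameter are my assumptions, not the paper’s implementation.

```python
from typing import Callable, List, Tuple

Turn = Tuple[str, str]  # (speaker, message)


def run_team_meeting(
    agenda: str,
    pi: str,
    members: List[str],
    critic: str,
    speak: Callable[[str, List[Turn]], str],
    rounds: int = 2,
) -> List[Turn]:
    """PI opens, members contribute, Critic reviews; finally the PI sums up."""
    transcript: List[Turn] = [("Human", agenda)]
    for _ in range(rounds):
        for speaker in [pi, *members, critic]:
            # Every speaker sees the whole transcript so far.
            transcript.append((speaker, speak(speaker, transcript)))
    # Closing turn: the PI summarizes and decides.
    transcript.append((pi, speak(pi, transcript)))
    return transcript


def stub_speak(speaker: str, transcript: List[Turn]) -> str:
    # Placeholder for an LLM call; just reports how much context it saw.
    return f"{speaker} responds after {len(transcript)} earlier turn(s)"


meeting = run_team_meeting(
    agenda="Antibodies or nanobodies? De novo design or mutation?",
    pi="PI",
    members=["Immunologist", "ML Specialist", "Computational Biologist"],
    critic="Critic",
    speak=stub_speak,
    rounds=1,
)
for speaker, message in meeting:
    print(f"{speaker}: {message}")
```

An Individual Meeting is the same loop with `members` empty and, optionally, no Critic turn.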

To avoid hallucinations and inconsistency, the system also uses parallel meetings; that is, the same task is run multiple times with different randomness (i.e. at high “temperature”). Interestingly, the results from these multiple meetings are then condensed in a single low-temperature merge meeting that is much more deterministic and can quite safely decide which conclusions, among all those coming from the various meetings, make more sense.
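
Here is a toy sketch of that “many noisy runs, one careful merge” pattern. Two simplifications are mine: `run_meeting` is a random stub rather than an LLM call, and the merge step is a plain majority vote, whereas the actual system uses another LLM meeting at low temperature to do the merging.

```python
import random
from collections import Counter

OPTIONS = ["mutate existing nanobodies", "design nanobodies de novo"]


def run_meeting(task: str, temperature: float, rng: random.Random) -> str:
    """Stub for one meeting: higher temperature means more varied answers."""
    # Toy model of sampling noise: the chance of the less likely answer
    # grows with temperature and is zero at temperature 0.
    p_alternative = min(0.5, 0.4 * temperature)
    return OPTIONS[1] if rng.random() < p_alternative else OPTIONS[0]


def merge(answers):
    """Stand-in for the low-temperature merge meeting: majority vote."""
    return Counter(answers).most_common(1)[0][0]


rng = random.Random(0)  # seeded so the toy run is repeatable
task = "Choose a design strategy"

# Same task, several independent high-temperature runs.
parallel_answers = [run_meeting(task, temperature=0.8, rng=rng) for _ in range(5)]

# One deterministic step that condenses them into a single conclusion.
decision = merge(parallel_answers)
print(parallel_answers)
print(decision)
```

The design choice is the usual one for noisy generators: use randomness to explore, then a near-deterministic step to pick what survives.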

Clearly, these ideas can be applied to any other kind of multi-agent interaction, and for any purpose!

How much did the humans do?

Surprisingly little, for the computational part of course, as the experiments can’t be automated much yet. But keep reading to find some reflections about robots in labs!

In this Virtual Lab round, LLM agents wrote 98.7% of the total words (over 120,000 tokens), while the human researcher contributed just 1,596 words in total across the entire project. The agents wrote all the scripts for ESM, AlphaFold-Multimer post-processing, and Rosetta XML workflows. The human only helped run the code and facilitated the real-world experiments. The Virtual Lab pipeline was built in 1-2 days of prompting and meetings, and the nanobody design computation ran in ~1 week.

Why this matters (and what comes next)

The Virtual Lab could serve as the prototype for a fundamentally new research model, and actually for a fundamentally new way to work, where everything that can be done on a computer is left automated, with humans only taking the very critical decisions. LLMs are clearly shifting from passive tools to active collaborators that, as the Virtual Lab shows, can drive complex, interdisciplinary projects from idea to implementation.

The next ambitious leap? Replace the hands of human technicians, who ran the experiments, with robotic ones. Clearly, the next frontier is in automating the physical interaction with the real world, which is essentially what robots do. Imagine then the full pipeline as applied to a research lab:

- A human PI defines a high-level biological goal.

- The team researches existing information, scans databases, brainstorms ideas.

- A set of AI agents selects computational tools if required, writes and runs code and/or analyses, and finally proposes experiments.

- Then, robotic lab technicians, rather than human technicians, carry out the protocols: pipetting, centrifuging, plating, imaging, data collection.

- The results flow back into the Virtual Lab, closing the loop.

- Agents analyze, adapt, iterate.
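
The loop in this list can be sketched as a small control structure. Everything here is hypothetical: `propose` stands in for the agents, `run_experiment` for the robotic lab, and the toy numbers (an “affinity gain” of 2.0 per mutation, a goal of 6.0) exist only so the example runs.

```python
def closed_loop(propose, run_experiment, goal_met, max_rounds=5):
    """Propose -> experiment -> analyze, repeated until the goal is met."""
    history = []
    for _ in range(max_rounds):
        design = propose(history)        # agents plan from all past results
        result = run_experiment(design)  # robots execute and measure
        history.append((design, result))
        if goal_met(result):             # high-level goal set by the human PI
            break
    return history


# Toy stand-ins so the sketch runs end to end.
def propose(history):
    # Try one more mutation than in the previous round.
    return len(history) + 1


def run_experiment(n_mutations):
    # Pretend each mutation adds a fixed affinity gain.
    return 2.0 * n_mutations


def goal_met(affinity_gain):
    return affinity_gain >= 6.0


history = closed_loop(propose, run_experiment, goal_met)
for design, result in history:
    print(f"{design} mutation(s) -> affinity gain {result}")
```

The `max_rounds` cap is there because a real loop needs a stopping rule even when the goal is never reached.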

This would make the research process truly end-to-end autonomous. From problem definition to experiment execution to result interpretation, all components would be run by an integrated AI-robotics system with minimal human intervention: just high-level steering, supervision, and global vision.

Robotic biology labs are already being prototyped. Emerald Cloud Lab, Strateos, and Transcriptic (now a part of Colabra) offer robotic wet-lab-as-a-service. Future House is a non-profit building AI agents to automate research in biology and other complex sciences. In academia, some autonomous chemistry labs exist whose robots can explore chemical space on their own. Biofoundries use programmable liquid handlers and robotic arms for synthetic biology workflows. Adaptyv Bio automates protein expression and testing at scale.

Such automated laboratory systems, coupled to emerging systems like the Virtual Lab, could radically transform how science and technology progress. The intelligent layer drives the project and gives these robots work to do, and their output then feeds back into the thinking tool in a closed-loop discovery engine that would run 24/7 without fatigue or scheduling conflicts, conduct hundreds or thousands of micro-experiments in parallel, and rapidly explore vast hypothesis spaces that are simply not feasible for human labs. Moreover, the labs, virtual labs, and managers don’t even need to be physically together, which allows optimizing how resources are spread.

There are challenges, of course. Real-world science is messy and nonlinear. Robotic protocols must be extremely robust. Unexpected errors still need judgment. But as robotics and AI continue to evolve, these gaps will certainly shrink.

Final thoughts

We humans were always confident that technology in the form of smart computers and robots would kick us out of our highly repetitive physical jobs, while creativity and thinking would still be our domain of mastery for decades, perhaps centuries. However, despite quite some automation via robots, the AI of the 2020s has shown us that technology can also be better than us at some of our most brain-intensive jobs.

In the near future, LLMs won’t just answer our questions or barely help us with work. They’ll ask, argue, debate, decide. And sometimes, they will discover!

References and further reads

The Nature paper analyzed right here:

https://www.nature.com/articles/s41586-025-09442-9

Other scientific discoveries by AI systems: