Context engineering is the science of providing LLMs with the right context to maximize performance. When you work with LLMs, you typically create a system prompt, asking the LLM to perform a certain task. However, when working with LLMs from a programmer's perspective, there are more elements to consider. You have to determine what other data you can feed your LLM to improve its ability to perform the task you asked it to do.

In this article, I'll discuss the science of context engineering and how you can apply context engineering techniques to improve your LLM's performance.

You can also read my articles on Reliability for LLM Applications and Document QA using Multimodal LLMs.


Definition

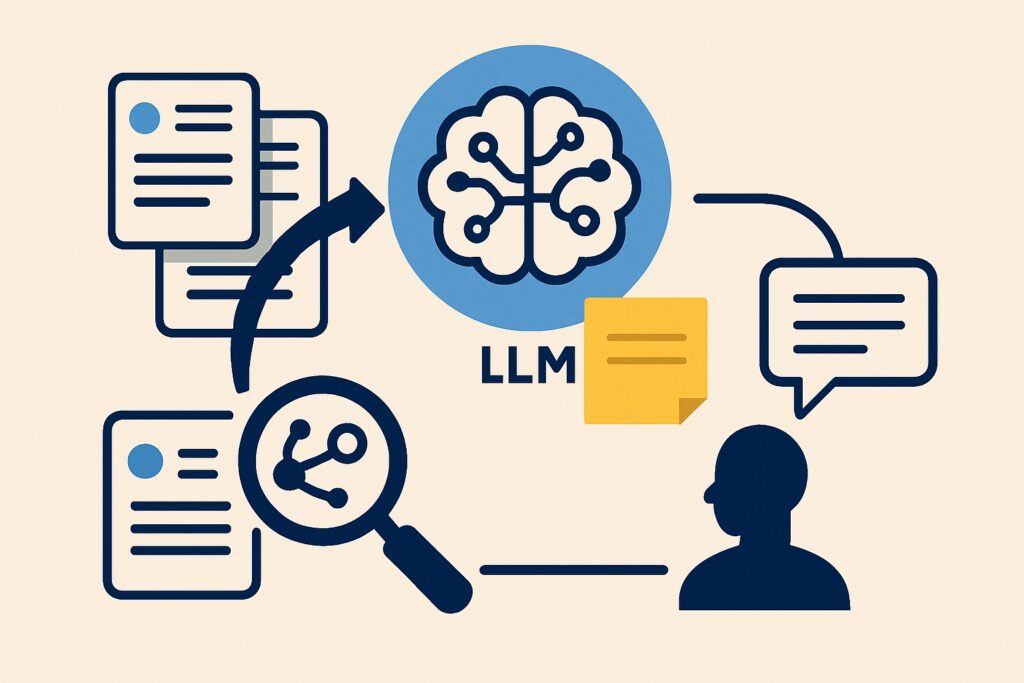

Before I start, it's important to define the term context engineering. Context engineering is essentially the science of deciding what to feed into your LLM. This can, for example, be:

- The system prompt, which tells the LLM how to act

- Document data fetched using RAG vector search

- Few-shot examples

- Tools

The closest previous description of this has been the term prompt engineering. However, prompt engineering is a less descriptive term, considering it implies only changing the system prompt you are feeding to the LLM. To get maximum performance out of your LLM, you have to consider all the context you are feeding into it, not only the system prompt.

Motivation

My initial motivation for this article came from reading this Tweet by Andrej Karpathy.

I really agreed with the point Andrej made in this tweet. Prompt engineering is definitely an important science when working with LLMs. However, prompt engineering doesn't cover everything we input into LLMs. In addition to the system prompt you write, you also have to consider elements such as:

- Which data should you insert into your prompt

- How do you fetch that data

- How to only provide relevant information to the LLM

- Etc.

I'll discuss all of these points throughout this article.

API vs console usage

One important distinction to clarify is whether you are using LLMs through an API (calling them with code) or via the console (for example, via the ChatGPT website or application). Context engineering is definitely important when working with LLMs through the console; however, my focus in this article will be on API usage. The reason is that when using an API, you have more options for dynamically changing the context you are feeding the LLM. For example, you can do RAG, where you first perform a vector search and only feed the LLM the most important bits of information, rather than the entire database.

These dynamic changes are not available in the same way when interacting with LLMs through the console; thus, I'll focus on using LLMs through an API.
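To make the idea of dynamically assembled context concrete, here is a minimal sketch. It assumes the role/content message format that many chat APIs accept; the function name `build_messages` and its parameters are hypothetical, and no real API is called.

```python
def build_messages(system_prompt, documents, examples, question):
    """Assemble chat messages from the context pieces chosen for this request."""
    messages = [{"role": "system", "content": system_prompt}]

    # Few-shot examples become alternating user/assistant turns.
    for example_input, example_output in examples:
        messages.append({"role": "user", "content": example_input})
        messages.append({"role": "assistant", "content": example_output})

    # Retrieved documents, if any, are placed in front of the actual question.
    if documents:
        context_block = "\n\n".join(documents)
        user_content = f"Context:\n{context_block}\n\nQuestion: {question}"
    else:
        user_content = question

    messages.append({"role": "user", "content": user_content})
    return messages
```

Because the documents and examples are ordinary function arguments, your code can pick different ones for every request, which is the flexibility the console does not give you.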

Context engineering techniques

Zero-shot prompting

Zero-shot prompting is the baseline for context engineering. Doing a task zero-shot means the LLM performs the task without having seen any examples of it. You're essentially only providing a task description as context for the LLM. For example, you provide an LLM with a long text and ask it to classify the text into class A or B, according to some definition of the classes. The context (prompt) you are feeding the LLM could look something like this:

    You are an expert text classifier, tasked with classifying texts into
    class A or class B.
    - Class A: The text contains a positive sentiment
    - Class B: The text contains a negative sentiment

    Classify the text: {text}

Depending on the task, this could work very well. LLMs are generalists and are able to perform most simple text-based tasks. Classifying a text into one of two classes will usually be a simple task, and zero-shot prompting will thus usually work quite well.
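In code, the zero-shot prompt above is just a template with one placeholder. The sketch below fills the template and parses a label out of the model's reply; the helper names and the assumption that the model answers with something like "Class A" are mine, not part of any library.

```python
import re
from typing import Optional

ZERO_SHOT_TEMPLATE = """You are an expert text classifier, tasked with classifying texts into
class A or class B.
- Class A: The text contains a positive sentiment
- Class B: The text contains a negative sentiment

Classify the text: {text}"""


def build_zero_shot_prompt(text: str) -> str:
    """Insert the text to classify into the fixed task description."""
    return ZERO_SHOT_TEMPLATE.format(text=text)


def parse_label(response: str) -> Optional[str]:
    """Extract 'A' or 'B' from a reply such as 'Class A'; None if no label is found."""
    match = re.search(r"\bclass\s+([AB])\b", response, flags=re.IGNORECASE)
    return match.group(1).upper() if match else None
```

Returning `None` for an unparseable reply lets the calling code decide whether to retry or fall back to a default.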

Few-shot prompting

This infographic highlights how to perform few-shot prompting:

The follow-up to zero-shot prompting is few-shot prompting. With few-shot prompting, you give the LLM a prompt similar to the one above, but you also provide it with examples of the task it will perform. This added context will help the LLM improve at performing the task. Following up on the prompt above, a few-shot prompt could look like:

    You are an expert text classifier, tasked with classifying texts into
    class A or class B.
    - Class A: The text contains a positive sentiment
    - Class B: The text contains a negative sentiment

    {text 1} -> Class A
    {text 2} -> Class B

    Classify the text: {text}

You can see I've provided the model with some examples.

Few-shot prompting works well because you are providing the model with examples of the task you are asking it to perform. This usually increases performance.
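A few-shot prompt like the one above can be generated from a list of labeled examples. This is a minimal sketch; `build_few_shot_prompt` is a hypothetical helper, and the `text -> Class X` layout simply mirrors the prompt shown earlier.

```python
def build_few_shot_prompt(examples, text):
    """Build a classification prompt that includes labeled examples.

    examples: list of (example_text, label) pairs, where label is "A" or "B".
    text: the new text the LLM should classify.
    """
    example_lines = "\n".join(
        f"{example_text} -> Class {label}" for example_text, label in examples
    )
    return (
        "You are an expert text classifier, tasked with classifying texts into\n"
        "class A or class B.\n"
        "- Class A: The text contains a positive sentiment\n"
        "- Class B: The text contains a negative sentiment\n\n"
        f"{example_lines}\n\n"
        f"Classify the text: {text}"
    )
```

Keeping the examples as data rather than hard-coding them in the prompt string makes it easy to swap them later.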

You can imagine this works well on humans, too. If you ask a human to do a task they've never done before, just by describing the task, they might perform decently (of course, depending on the difficulty of the task). However, if you also provide the human with examples, their performance will usually increase.

Overall, I find it useful to think about LLM prompts as if I'm asking a human to perform a task. Imagine that instead of prompting an LLM, you simply provide the text to a human, and you ask yourself the question:

Given this prompt, and no other context, will the human be able to perform the task?

If the answer is no, you should work on clarifying and improving your prompt.

I also want to mention dynamic few-shot prompting, considering it's a technique I've had a lot of success with. Traditionally, with few-shot prompting, you have a fixed list of examples you feed into every prompt. However, you can often achieve higher performance using dynamic few-shot prompting.

Dynamic few-shot prompting means selecting the few-shot examples dynamically when creating the prompt for a task. For example, say you are asked to classify a text into class A or B, and you already have a list of 200 texts with their corresponding labels. You can then perform a similarity search between the new text you are classifying and the example texts you already have: measure the vector similarity between the texts and choose only the most similar texts (out of the 200) to feed into your prompt as context. This way, you're providing the model with more relevant examples of how to perform the task.
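The selection step of dynamic few-shot prompting can be sketched as follows. To keep the example self-contained, `embed` is a toy bag-of-words stand-in for a real embedding model; that substitution, and all function names, are assumptions for illustration only.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine_similarity(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm_a = math.sqrt(sum(value * value for value in a.values()))
    norm_b = math.sqrt(sum(value * value for value in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def select_examples(new_text, labeled_examples, k=3):
    """Return the k labeled examples most similar to new_text."""
    query = embed(new_text)
    ranked = sorted(
        labeled_examples,
        key=lambda example: cosine_similarity(query, embed(example[0])),
        reverse=True,
    )
    return ranked[:k]
```

The selected `(text, label)` pairs are then inserted into the few-shot prompt in place of a fixed example list. In practice you would precompute the embeddings of the labeled examples instead of re-embedding them for every request.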

RAG

Retrieval-augmented generation (RAG) is a well-known technique for increasing the knowledge of LLMs. Assume you already have a database consisting of thousands of documents. You now receive a question from a user and have to answer it, given the information inside your database.

Unfortunately, you can't feed the entire database into the LLM. Even though we have LLMs such as Llama 4 Scout with a 10-million-token context window, databases are usually much larger. You therefore have to find the most relevant information in the database to feed into your LLM. RAG does this similarly to dynamic few-shot prompting:

- Perform a vector search

- Find the documents most similar to the user question (the most similar documents are assumed to be the most relevant)

- Ask the LLM to answer the question, given the most relevant documents

By performing RAG, you are doing context engineering by only providing the LLM with the most relevant data for performing its task. To improve the performance of the LLM, you can work on the context engineering by improving your RAG search. This can, for example, be done by improving the search to find only the most relevant documents.
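The three RAG steps above can be sketched in a few lines. The word-count `embed` function is a toy stand-in for a real embedding model, the in-memory list stands in for a vector database, and every name here is hypothetical.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: word counts instead of a dense vector."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine_similarity(a, b):
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(documents):
    """Embed every document once, as a vector database would at ingestion time."""
    return [(doc, embed(doc)) for doc in documents]


def retrieve(question, index, top_k=2):
    """Steps 1 and 2: vector search for the documents most similar to the question."""
    query = embed(question)
    ranked = sorted(index, key=lambda item: cosine_similarity(query, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:top_k]]


def build_rag_prompt(question, index, top_k=2):
    """Step 3: ask the LLM to answer using only the retrieved documents."""
    context = "\n\n".join(retrieve(question, index, top_k))
    return (
        "Answer the question using only the documents below.\n\n"
        f"{context}\n\nQuestion: {question}"
    )
```

Improving the RAG search then means improving `retrieve`, for example with a better embedding model or a smaller `top_k`, without touching the rest of the pipeline.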

You can read more about RAG in my article about creating a RAG system for your personal data.

Tools (MCP)

You can also provide the LLM with tools to call, which is an important part of context engineering, especially now that we see the rise of AI agents. Tool calling today is often done using Model Context Protocol (MCP), a protocol introduced by Anthropic.

AI agents are LLMs capable of calling tools and thus performing actions. An example of this could be a weather agent. If you ask an LLM without access to tools about the weather in New York, it will not be able to provide an accurate response. The reason is that information about the weather needs to be fetched in real time. To do this, you can, for example, give the LLM a tool such as:

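    @tool  # decorator provided by your agent framework to register the tool
    def get_weather(city):
        # code to retrieve the current weather for a city
        weather = ...  # placeholder for the fetched result
        return weather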

If you give the LLM access to this tool and ask it about the weather, it can then look up the weather for a city and give you an accurate response.
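Below is a minimal, framework-free sketch of what happens around such a tool. The `tool` decorator, the `TOOLS` registry, and the shape of the tool-call dictionary are all hypothetical; they are not MCP or any particular library's API, and the weather data is hard-coded so the example runs offline.

```python
import inspect

TOOLS = {}


def tool(func):
    """Register a function so the application can expose it to the LLM."""
    TOOLS[func.__name__] = func
    return func


@tool
def get_weather(city: str) -> str:
    """Return the current weather for a city."""
    # Hard-coded placeholder data; a real tool would call a weather service here.
    fake_weather = {"New York": "18 degrees Celsius, cloudy"}
    return fake_weather.get(city, "unknown")


def describe_tools():
    """Build the tool descriptions that would be added to the LLM's context."""
    return [
        {
            "name": name,
            "description": inspect.getdoc(func),
            "parameters": list(inspect.signature(func).parameters),
        }
        for name, func in TOOLS.items()
    ]


def execute_tool_call(call):
    """Run the tool the LLM asked for and return its result."""
    func = TOOLS[call["name"]]
    return func(**call["arguments"])
```

The output of `describe_tools()` is what ends up in the context. When the LLM replies with a request such as `{"name": "get_weather", "arguments": {"city": "New York"}}`, your code passes it to `execute_tool_call` and feeds the result back to the model.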

Providing tools for LLMs is incredibly important, as it significantly enhances the abilities of the LLM. Other examples of tools are:

- Search the internet

- A calculator

- Search via the Twitter API

Topics to consider

In this section, I make a few notes on what you should consider when creating the context to feed into your LLM.

Utilization of context size

The context size of an LLM is a crucial consideration. As of July 2025, you may feed most frontier mannequin LLMs with over 100,000 enter tokens. This gives you with numerous choices for methods to make the most of this context. You must take into account the tradeoff between:

- Together with numerous info in a immediate, thus risking a few of the info getting misplaced within the context

- Lacking some essential info within the immediate, thus risking the LLM not having the required context to carry out a selected activity

Normally, the one manner to determine the stability, is to check your LLMs efficiency. For instance with a classificaition activity, you may verify the accuracy, given completely different prompts.
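Checking accuracy across prompt variants can be sketched like this. `call_llm` is any function that takes a prompt string and returns the model's reply; `fake_llm` is a deliberately naive stand-in so the sketch runs offline, and all names are hypothetical.

```python
def compare_prompts(prompt_templates, labeled_texts, call_llm):
    """Return {template name: accuracy} on a labeled evaluation set.

    prompt_templates: dict mapping a name to a template containing "{text}".
    labeled_texts: list of (text, expected_label) pairs.
    call_llm: function taking a prompt string and returning the model's reply.
    """
    results = {}
    for name, template in prompt_templates.items():
        correct = 0
        for text, expected_label in labeled_texts:
            prediction = call_llm(template.format(text=text)).strip()
            if prediction == expected_label:
                correct += 1
        results[name] = correct / len(labeled_texts)
    return results


def fake_llm(prompt):
    """Stand-in for a real LLM call: answers "A" whenever the prompt mentions "good"."""
    return "A" if "good" in prompt else "B"
```

With a real model behind `call_llm`, the same loop tells you whether adding or removing context actually helps on your own data.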

If I find the context to be too long for the LLM to work effectively, I sometimes split a task into multiple prompts. For example, I have one prompt summarize a text and a second prompt classify the text summary. This can help the LLM utilize its context effectively and thus increase performance.
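A two-prompt split of this kind might look as follows. The prompt wording and the function name are illustrative assumptions; `call_llm` is again any function that maps a prompt string to the model's reply.

```python
def summarize_then_classify(text, call_llm):
    """Split one long-context task into two shorter prompts."""
    # Prompt 1: compress the long text so the second prompt stays short.
    summary = call_llm(f"Summarize the following text in three sentences:\n\n{text}")

    # Prompt 2: classify the summary instead of the full text.
    label = call_llm(
        "Classify the text into class A (positive sentiment) or "
        "class B (negative sentiment). Answer with A or B only.\n\n"
        f"{summary}"
    )
    return summary, label.strip()
```

The second call never sees the original text, so its context contains only what the summary kept.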

Furthermore, providing too much context to the model can have a significant downside, as I describe in the next section:

Context rot

Last week, I read an interesting article about context rot. The article was about the fact that increasing the context length lowers LLM performance, even though the task difficulty doesn't increase. This implies that:

Providing an LLM with irrelevant information will decrease its ability to perform tasks successfully, even when task difficulty doesn't increase.

The point here is essentially that you should only provide relevant information to your LLM. Providing other information decreases LLM performance (i.e., performance isn't neutral to input length).
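One simple way to act on this is to filter candidate context by relevance and cap its total size before building the prompt. The sketch below assumes you already have a relevance score per document (for example, from a vector search); the threshold, the budget, and the whitespace-based token estimate are all illustrative assumptions.

```python
def select_context(scored_documents, min_score=0.5, max_tokens=1000):
    """Keep only relevant documents, most relevant first, within a size budget.

    scored_documents: list of (relevance_score, document_text) pairs.
    """
    selected = []
    used_tokens = 0
    for score, document in sorted(scored_documents, key=lambda pair: pair[0], reverse=True):
        if score < min_score:
            break  # everything after this point is even less relevant
        cost = len(document.split())  # crude token estimate: whitespace-separated words
        if used_tokens + cost > max_tokens:
            continue  # skip documents that would exceed the budget
        selected.append(document)
        used_tokens += cost
    return selected
```

A real tokenizer would give a more accurate count than splitting on whitespace, but the structure of the filter stays the same.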

Conclusion

In this article, I've discussed the topic of context engineering, which is the process of providing an LLM with the right context to perform its task effectively. There are many techniques you can use to fill up the context, such as few-shot prompting, RAG, and tools. These are all powerful techniques you can use to significantly improve an LLM's ability to perform a task effectively. Furthermore, you also have to consider that providing an LLM with too much context has downsides. Increasing the number of input tokens reduces performance, as you can read about in the article about context rot.
