In the first story [1] of this series, we have:

- Addressed multiplication of a matrix by a vector,

- Introduced the concept of the X-diagram for a given matrix,

- Observed the behavior of several special matrices when multiplied by a vector.

In the present second story, we will grasp the physical meaning of matrix-matrix multiplication, understand why multiplication is not a symmetrical (commutative) operation (i.e., why “A*B ≠ B*A”), and finally, we will see how several special matrices behave when multiplied by each other.

So let’s start, and we’ll do it by recalling the definitions that I use throughout this series:

- Matrices are denoted with uppercase (like ‘A’ and ‘B’), while vectors and scalars are denoted with lowercase (like ‘x’, ‘y’ or ‘m’, ‘n’).

- |x| – is the length of vector ‘x’,

- rows(A) – number of rows of matrix ‘A’,

- columns(A) – number of columns of matrix ‘A’.

The concept of multiplying matrices

Multiplication of two matrices “A” and “B” is probably the most common operation in matrix analysis. A known fact is that “A” and “B” can be multiplied only if “columns(A) = rows(B)”. At the same time, “A” can have any number of rows, and “B” can have any number of columns. Cells of the product matrix “C = A*B” are calculated by the following formula:

\begin{equation*}
c_{i,j} = \sum_{k=1}^{p} a_{i,k}*b_{k,j}
\end{equation*}

where “p = columns(A) = rows(B)”. The result matrix “C” will have the dimensions:

rows(C) = rows(A),

columns(C) = columns(B).

Following the multiplication formula, when calculating “A*B” we scan the i-th row of “A” in parallel with the j-th column of “B”, and after summing up all the products “a_{i,k}*b_{k,j}” we have the value of “c_{i,j}”.
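The formula can be sketched directly as a triple loop. This is a minimal illustration in plain Python, assuming matrices are stored as lists of rows; the function name `matmul` and the sample values are chosen here for illustration only.

```python
def matmul(A, B):
    """Multiply matrices stored as lists of rows: c[i][j] = sum over k of a[i][k] * b[k][j]."""
    p = len(B)  # rows(B)
    if any(len(row) != p for row in A):
        raise ValueError("columns(A) must equal rows(B)")
    n_cols = len(B[0])  # columns(B)
    C = [[0] * n_cols for _ in range(len(A))]
    for i in range(len(A)):        # scan the i-th row of A ...
        for j in range(n_cols):    # ... in parallel with the j-th column of B
            for k in range(p):
                C[i][j] += A[i][k] * B[k][j]
    return C


A = [[1, 2, 3],
     [4, 5, 6]]   # 2 x 3
B = [[1, 0],
     [0, 1],
     [2, 2]]      # 3 x 2
C = matmul(A, B)  # rows(C) = rows(A) = 2, columns(C) = columns(B) = 2
print(C)          # [[7, 8], [16, 17]]
```

Note that the code uses zero-based indices, while the formula counts from 1.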

Another well-known fact is that matrix multiplication is not a symmetrical operation, i.e., “A*B ≠ B*A”. Without going into details, we can already see that when multiplying 2 rectangular matrices:
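For rectangular matrices, the shapes alone already show the asymmetry. The sketch below is only an illustration: the helper `product_shape` and the sample shapes are hypothetical, and it applies nothing beyond the rule “columns(A) = rows(B)”.

```python
def product_shape(shape_a, shape_b):
    """Return (rows, columns) of A*B, or None when columns(A) != rows(B)."""
    rows_a, cols_a = shape_a
    rows_b, cols_b = shape_b
    return (rows_a, cols_b) if cols_a == rows_b else None


# A is 2x3 and B is 3x4: A*B exists, B*A does not.
print(product_shape((2, 3), (3, 4)))  # (2, 4)
print(product_shape((3, 4), (2, 3)))  # None

# A is 2x3 and B is 3x2: both products exist, but with different shapes.
print(product_shape((2, 3), (3, 2)))  # (2, 2)
print(product_shape((3, 2), (2, 3)))  # (3, 3)
```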

For beginners, the fact that matrix multiplication is not a symmetrical operation often seems strange, as multiplication defined for almost any other object is a symmetrical operation. Another fact that is often unclear is why matrix multiplication is performed by such a strange formula.

In this story, I am going to give my answers to both of these questions, and not only to them…

Derivation of the matrix multiplication formula

Multiplying “A*B” should produce such a matrix ‘C’ that:

y = C*x = (A*B)*x = A*(B*x).

In other words, multiplying any vector ‘x’ by the product matrix “C=A*B” should result in the same vector ‘y’ that we receive if we first multiply ‘B’ by ‘x’, and then multiply ‘A’ by that intermediate result.

This already explains why in “C=A*B” the condition “columns(A) = rows(B)” should hold. That is because of the length of the intermediate vector. Let’s denote it as ‘t’:

t = B*x,

y = C*x = (A*B)*x = A*(B*x) = A*t.

Clearly, as “t = B*x”, we receive a vector ‘t’ of length “|t| = rows(B)”. But later, matrix ‘A’ is going to be multiplied by ‘t’, which requires ‘t’ to have the length “|t| = columns(A)”. From these 2 facts, we can already figure out that:

rows(B) = |t| = columns(A), or

rows(B) = columns(A).
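The requirement “(A*B)*x = A*(B*x)” and the length of the intermediate vector can be checked on a small case. This is a minimal sketch in plain Python; the helper names `matvec` and `matmul` and all numeric values are illustrative assumptions.

```python
def matvec(M, x):
    """Multiply matrix M (list of rows) by vector x."""
    return [sum(m * v for m, v in zip(row, x)) for row in M]


def matmul(A, B):
    """Multiply matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


A = [[1, 2, 0],
     [0, 1, 3]]   # 2 x 3
B = [[1, 1],
     [0, 2],
     [4, 0]]      # 3 x 2
x = [5, 7]

t = matvec(B, x)                  # intermediate vector, |t| = rows(B) = columns(A)
y_two_steps = matvec(A, t)        # A*(B*x)
y_one_step = matvec(matmul(A, B), x)  # (A*B)*x

print(t)            # [12, 14, 20]
print(y_two_steps)  # [40, 74]
print(y_one_step)   # [40, 74]
```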

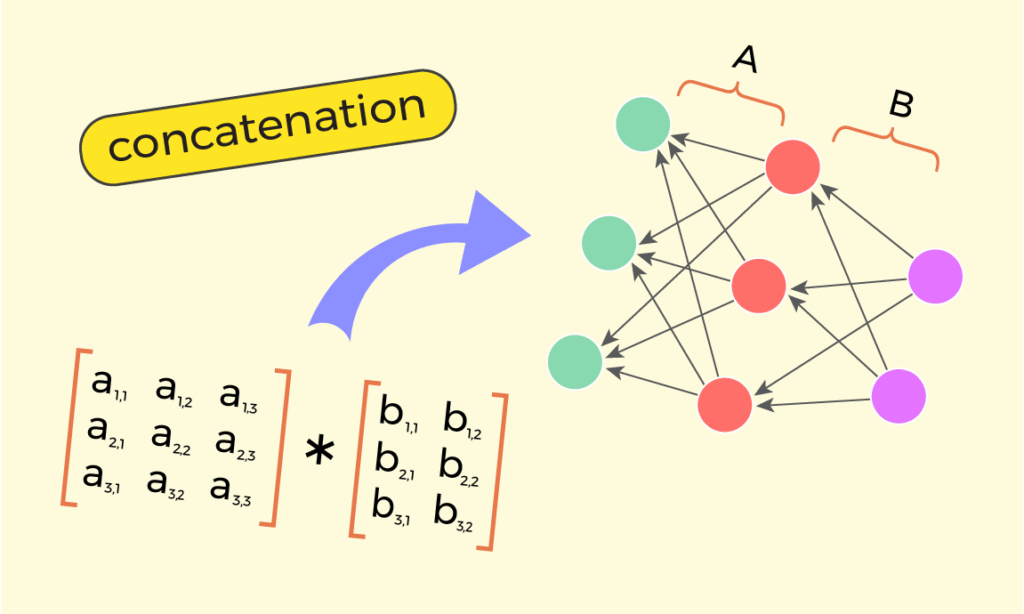

In the first story [1] of this series, we learned the “X-way interpretation” of matrix-vector multiplication “A*x”. Considering that for “y = (A*B)x”, vector ‘x’ goes at first through the transformation of matrix ‘B’, and then it continues through the transformation of matrix ‘A’, we can expand the concept of “X-way interpretation” and present matrix-matrix multiplication “A*B” as 2 adjacent X-diagrams:

Now, what should a certain cell “c_{i,j}” of matrix ‘C’ be equal to? From part 1, “matrix-vector multiplication” [1], we remember that the physical meaning of “c_{i,j}” is how much the input value ‘x_j’ affects the output value ‘y_i’. Considering the picture above, let’s see how some input value ‘x_j’ can affect some output value ‘y_i’. It can affect it through the intermediate value ‘t_1’, i.e., through arrows “a_{i,1}” and “b_{1,j}”. Also, the influence can take place through the intermediate value ‘t_2’, i.e., through arrows “a_{i,2}” and “b_{2,j}”. Generally, the influence of ‘x_j’ on ‘y_i’ can take place through any value ‘t_k’ of the intermediate vector ‘t’, i.e., through arrows “a_{i,k}” and “b_{k,j}”.

So there are ‘p’ possible ways in which the value ‘x_j’ influences ‘y_i’, where ‘p’ is the length of the intermediate vector: “p = |t| = |B*x|”. The influences are:

\begin{equation*}
\begin{matrix}
a_{i,1}*b_{1,j}, \\
a_{i,2}*b_{2,j}, \\
a_{i,3}*b_{3,j}, \\
\dots \\
a_{i,p}*b_{p,j}
\end{matrix}
\end{equation*}

All these ‘p’ influences are independent of each other, which is why in the formula of matrix multiplication they participate as a sum:

\begin{equation*}
c_{i,j} =
a_{i,1}*b_{1,j} + a_{i,2}*b_{2,j} + \dots + a_{i,p}*b_{p,j} =
\sum_{k=1}^{p} a_{i,k}*b_{k,j}
\end{equation*}
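To make the “sum over paths” reading concrete, the sketch below lists the ‘p’ separate influences of one input on one output and then adds them up. The matrices and the chosen cell are arbitrary illustrative values, and indices in the code are zero-based.

```python
A = [[1, 2, 3],
     [4, 5, 6]]   # 2 x 3
B = [[1, 0],
     [0, 1],
     [2, 2]]      # 3 x 2

i, j = 1, 0       # zero-based: how much input x[0] affects output y[1]
p = len(B)        # length of the intermediate vector t

# One influence per intermediate value t[k]: arrow b[k][j] followed by arrow a[i][k].
influences = [A[i][k] * B[k][j] for k in range(p)]
c_ij = sum(influences)

print(influences)  # [4, 0, 12]
print(c_ij)        # 16
```

The middle path contributes nothing here because the arrow “b[1][0]” is zero, i.e., that path is disconnected.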

This is my visual explanation of the matrix-matrix multiplication formula. By the way, interpreting “A*B” as a concatenation of X-diagrams of “A” and “B” explicitly shows why the condition “columns(A) = rows(B)” should hold. The reason is simple: otherwise it would not be possible to concatenate the two X-diagrams:

Why is it that “A*B ≠ B*A”

Interpreting matrix multiplication “A*B” as a concatenation of X-diagrams of “A” and “B” also explains why multiplication is not symmetrical for matrices, i.e., why “A*B ≠ B*A”. Let me show that on two particular matrices:

\begin{equation*}
A =
\begin{bmatrix}
0 & 0 & 0 & 0 \\
0 & 0 & 0 & 0 \\
a_{3,1} & a_{3,2} & a_{3,3} & a_{3,4} \\
a_{4,1} & a_{4,2} & a_{4,3} & a_{4,4}
\end{bmatrix}
, B =
\begin{bmatrix}
b_{1,1} & b_{1,2} & 0 & 0 \\
b_{2,1} & b_{2,2} & 0 & 0 \\
b_{3,1} & b_{3,2} & 0 & 0 \\
b_{4,1} & b_{4,2} & 0 & 0
\end{bmatrix}
\end{equation*}

Here, matrix ‘A’ has its upper half filled with zeroes, while ‘B’ has zeroes on its right half. Corresponding X-diagrams are:

The fact that ‘A’ has zeroes on its upper rows results in the upper items of its left stack being disconnected.

The fact that ‘B’ has zeroes on its right columns results in the lower items of its right stack being disconnected.

What will happen if we try to multiply “A*B”? Then A’s X-diagram should be placed to the left of B’s X-diagram.

Having such a placement, we see that input values ‘x_1’ and ‘x_2’ can affect both output values ‘y_3’ and ‘y_4’. In particular, this means that the product matrix “A*B” is non-zero.

\begin{equation*}
A*B =
\begin{bmatrix}
0 & 0 & 0 & 0 \\
0 & 0 & 0 & 0 \\
c_{3,1} & c_{3,2} & 0 & 0 \\
c_{4,1} & c_{4,2} & 0 & 0
\end{bmatrix}
\end{equation*}

Now, what will happen if we try to multiply these two matrices in the opposite order? For presenting the product “B*A”, B’s X-diagram should be drawn to the left of A’s diagram:

We see that now there is no connected path by which any input value “x_j” can affect any output value “y_i”. In other words, in the product matrix “B*A” there is no influence at all, and it is actually a zero matrix.

\begin{equation*}
B*A =
\begin{bmatrix}
0 & 0 & 0 & 0 \\
0 & 0 & 0 & 0 \\
0 & 0 & 0 & 0 \\
0 & 0 & 0 & 0
\end{bmatrix}
\end{equation*}

This example clearly illustrates why order is important for matrix-matrix multiplication. Of course, many other examples can be found.
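The same example can be reproduced numerically. In the sketch below, the non-zero cells of ‘A’ and ‘B’ are filled with arbitrary illustrative numbers, and only the zero pattern follows the matrices above. The helper `matmul` is a plain-Python assumption, not a library call.

```python
def matmul(A, B):
    """Multiply matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


A = [[0, 0, 0, 0],
     [0, 0, 0, 0],
     [1, 2, 3, 4],
     [5, 6, 7, 8]]   # zeroes in the upper half

B = [[1, 2, 0, 0],
     [3, 4, 0, 0],
     [5, 6, 0, 0],
     [7, 8, 0, 0]]   # zeroes in the right half

AB = matmul(A, B)
BA = matmul(B, A)

print(AB)  # [[0, 0, 0, 0], [0, 0, 0, 0], [50, 60, 0, 0], [114, 140, 0, 0]]
print(BA)  # [[0, 0, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0]]
```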

Multiplying a chain of matrices

X-diagrams can also be concatenated when we multiply 3 or more matrices. For example, for the case of:

G = A*B*C,

we can draw the concatenation in the following way:

Here we have 2 intermediate vectors:

t = C*x, and

s = (B*C)*x = B*(C*x) = B*t

while the result vector is:

y = (A*B*C)*x = A*(B*(C*x)) = A*(B*t) = A*s.

The number of possible ways in which some input value “x_j” can affect some output value “y_i” grows here by an order of magnitude.

More precisely, the influence of a certain “x_j” on “y_i” can come through any item of the first intermediate stack “t”, and any item of the second intermediate stack “s”. So the number of ways of influence becomes “|t|*|s|”, and the formula for “g_{i,j}” becomes:

\begin{equation*}
g_{i,j} = \sum_{v=1}^{|s|} \sum_{u=1}^{|t|} a_{i,v}*b_{v,u}*c_{u,j}
\end{equation*}
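The double sum can be compared against ordinary chained multiplication. The sketch below is a minimal check in plain Python with arbitrary illustrative values; `matmul` and `g_entry` are hypothetical helper names, and indices are zero-based.

```python
def matmul(A, B):
    """Multiply matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


A = [[1, 2],
     [3, 4]]       # 2 x 2
B = [[1, 0, 2],
     [0, 1, 1]]    # 2 x 3, so |s| = rows(B) = 2
C = [[1, 1],
     [2, 0],
     [0, 3]]       # 3 x 2, so |t| = rows(C) = 3


def g_entry(i, j):
    """Sum over all |s|*|t| paths from input j to output i."""
    return sum(A[i][v] * B[v][u] * C[u][j]
               for v in range(len(B))    # item of the stack s
               for u in range(len(C)))   # item of the stack t


G_paths = [[g_entry(i, j) for j in range(len(C[0]))] for i in range(len(A))]
G = matmul(matmul(A, B), C)

print(G_paths)  # [[5, 13], [11, 33]]
print(G)        # [[5, 13], [11, 33]]
```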

Multiplying matrices of special types

We can already visually interpret matrix-matrix multiplication. In the first story of this series [1], we also learned about several special types of matrices – the scale matrix, shift matrix, permutation matrix, and others. So let’s take a look at how multiplication works for these types of matrices.

Multiplication of scale matrices

A scale matrix has non-zero values only on its diagonal:

From theory, we know that multiplying two scale matrices results in another scale matrix. Why is it that way? Let’s concatenate X-diagrams of two scale matrices:

The concatenated X-diagram clearly shows that any input item “x_i” can still affect only the corresponding output item “y_i”. It has no way of influencing any other output item. Therefore, the resulting structure behaves the same way as any other scale matrix.
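A quick numeric check of this property, as a minimal sketch: the helper `diag` and the scale factors are illustrative assumptions. Each diagonal cell of the product is simply the product of the two corresponding scale factors.

```python
def matmul(A, B):
    """Multiply matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def diag(values):
    """Build a scale matrix with the given values on its diagonal."""
    n = len(values)
    return [[values[i] if i == j else 0 for j in range(n)] for i in range(n)]


S1 = diag([2, 3, 4])
S2 = diag([5, 6, 7])
S3 = matmul(S1, S2)

print(S3)  # [[10, 0, 0], [0, 18, 0], [0, 0, 28]]
```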

Multiplication of shift matrices

A shift matrix is one that, when multiplied by some input vector ‘x’, shifts the values of ‘x’ upwards or downwards by some ‘k’ positions, filling the emptied slots with zeroes. To achieve that, a shift matrix ‘V’ must have 1(s) on a line parallel to its main diagonal, and 0(s) at all other cells.

The theory says that multiplying 2 shift matrices ‘V1’ and ‘V2’ results in another shift matrix. Interpretation with X-diagrams gives a clear explanation of that. Multiplying the shift matrices ‘V1’ and ‘V2’ corresponds to concatenating their X-diagrams:

We see that if shift matrix ‘V1’ shifts values of its input vector by ‘k1’ positions upwards, and shift matrix ‘V2’ shifts values of the input vector by ‘k2’ positions upwards, then the result matrix “V3 = V1*V2” will shift values of the input vector by ‘k1+k2’ positions upwards, which means that “V3” is also a shift matrix.
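The addition of shifts can be checked on a small case. The sketch below assumes the convention that an upward shift by ‘k’ means “y_i = x_{i+k}”, which puts the 1(s) on the k-th line above the main diagonal; the helper `shift_up` and the sizes are illustrative.

```python
def matmul(A, B):
    """Multiply matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def matvec(M, x):
    """Multiply matrix M (list of rows) by vector x."""
    return [sum(m * v for m, v in zip(row, x)) for row in M]


def shift_up(n, k):
    """n x n matrix that moves every value of a vector k positions upwards."""
    return [[1 if j == i + k else 0 for j in range(n)] for i in range(n)]


V1 = shift_up(5, 1)   # k1 = 1
V2 = shift_up(5, 2)   # k2 = 2
V3 = matmul(V1, V2)

print(V3 == shift_up(5, 3))           # True: shifts add up, k1 + k2 = 3
print(matvec(V3, [1, 2, 3, 4, 5]))    # [4, 5, 0, 0, 0]
```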

Multiplication of permutation matrices

A permutation matrix is one that, when multiplied by an input vector ‘x’, rearranges the order of values in ‘x’. To act like that, the NxN-sized permutation matrix ‘P’ must satisfy the following criteria:

- it should have N 1(s),

- no two 1(s) should be on the same row or the same column,

- all remaining cells should be 0(s).

According to theory, multiplying 2 permutation matrices ‘P1’ and ‘P2’ results in another permutation matrix ‘P3’. While the reason for this might not be clear enough if we look at matrix multiplication in the ordinary way (as scanning rows of ‘P1’ and columns of ‘P2’), it becomes much clearer if we look at it through the interpretation of X-diagrams. Multiplying “P1*P2” is the same as concatenating X-diagrams of ‘P1’ and ‘P2’.

We see that every input value ‘x_j’ of the right stack still has only one path for reaching some position ‘y_i’ on the left stack. So “P1*P2” still acts as a rearrangement of all values of the input vector ‘x’; in other words, “P1*P2” is also a permutation matrix.
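The three criteria listed above can be turned into a small check and applied to a product. This is a minimal sketch: the helpers `perm_matrix` and `is_permutation_matrix` are hypothetical, and `perm_matrix(order)` assumes the convention “y_i = x_{order[i]}” with zero-based indices.

```python
def matmul(A, B):
    """Multiply matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def perm_matrix(order):
    """Permutation matrix P such that (P*x)[i] = x[order[i]]."""
    n = len(order)
    return [[1 if j == order[i] else 0 for j in range(n)] for i in range(n)]


def is_permutation_matrix(P):
    """Only 0(s) and 1(s), with exactly one 1 in every row and every column."""
    only_bits = all(v in (0, 1) for row in P for v in row)
    rows_ok = all(sum(row) == 1 for row in P)
    cols_ok = all(sum(col) == 1 for col in zip(*P))
    return only_bits and rows_ok and cols_ok


P1 = perm_matrix([2, 0, 1])
P2 = perm_matrix([1, 0, 2])
P3 = matmul(P1, P2)

print(P3)                         # [[0, 0, 1], [0, 1, 0], [1, 0, 0]]
print(is_permutation_matrix(P3))  # True
```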

Multiplication of triangular matrices

A triangular matrix has all zeroes either above or below its main diagonal. Here, let’s focus on upper-triangular matrices, where zeroes are below the main diagonal. The case of lower-triangular matrices is similar.

The fact that non-zero values of ‘B’ are either on its main diagonal or above makes all the arrows of its X-diagram either horizontal or directed upwards. This, in turn, means that any input value ‘x_j’ of the right stack can affect only those output values ‘y_i’ of the left stack which have a lesser or equal index (i.e., “i ≤ j”). That is one of the properties of an upper-triangular matrix.

According to theory, multiplying two upper-triangular matrices results in another upper-triangular matrix. And here too, interpretation with X-diagrams provides a clear explanation of that fact. Multiplying two upper-triangular matrices ‘A’ and ‘B’ is the same as concatenating their X-diagrams:

We see that putting two X-diagrams of triangular matrices ‘A’ and ‘B’ next to each other results in a diagram where every input value ‘x_j’ of the right stack can still affect only those output values ‘y_i’ of the left stack which are either on its level or above it (in other words, “i ≤ j”). This means that the product “A*B” also behaves like an upper-triangular matrix; thus, it must have zeroes below its main diagonal.
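A small numeric check of this property, given as a sketch with arbitrary illustrative values: the helper `is_upper_triangular` is a hypothetical name, and it tests exactly the condition that every cell with “i > j” is zero.

```python
def matmul(A, B):
    """Multiply matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def is_upper_triangular(M):
    """True when every cell below the main diagonal (i > j) is zero."""
    return all(M[i][j] == 0 for i in range(len(M)) for j in range(i))


A = [[1, 2, 3],
     [0, 4, 5],
     [0, 0, 6]]
B = [[7, 8, 9],
     [0, 1, 2],
     [0, 0, 3]]
AB = matmul(A, B)

print(AB)                       # [[7, 10, 22], [0, 4, 23], [0, 0, 18]]
print(is_upper_triangular(AB))  # True
```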

Conclusion

In the current second story of this series, we saw how matrix-matrix multiplication can be presented visually, with the help of so-called “X-diagrams”. We have learned that doing the multiplication “C = A*B” is the same as concatenating X-diagrams of those two matrices. This method clearly illustrates various properties of matrix multiplication, like why it is not a symmetrical operation (“A*B ≠ B*A”), and also explains the formula:

\begin{equation*}
c_{i,j} = \sum_{k=1}^{p} a_{i,k}*b_{k,j}
\end{equation*}

We have also observed why multiplication behaves in certain ways when operands are matrices of special types (scale, shift, permutation, and triangular matrices).

I hope you enjoyed reading this story!

In the coming story, we will address how matrix transposition “A^T” can be interpreted with X-diagrams, and what we can gain from such interpretation, so subscribe to my page to not miss the updates!

My gratitude to:

– Roza Galstyan, for careful review of the draft ( https://www.linkedin.com/in/roza-galstyan-a54a8b352 )

– Asya Papyan, for the precise design of all the used illustrations ( https://www.behance.net/asyapapyan ).

If you enjoyed reading this story, feel free to follow me on LinkedIn, where, among other things, I will also post updates ( https://www.linkedin.com/in/tigran-hayrapetyan-cs/ ).

All used images, unless otherwise noted, are designed by request of the author.

References

[1] – Understanding matrices | Part 1: matrix-vector multiplication : https://towardsdatascience.com/understanding-matrices-part-1-matrix-vector-multiplication/